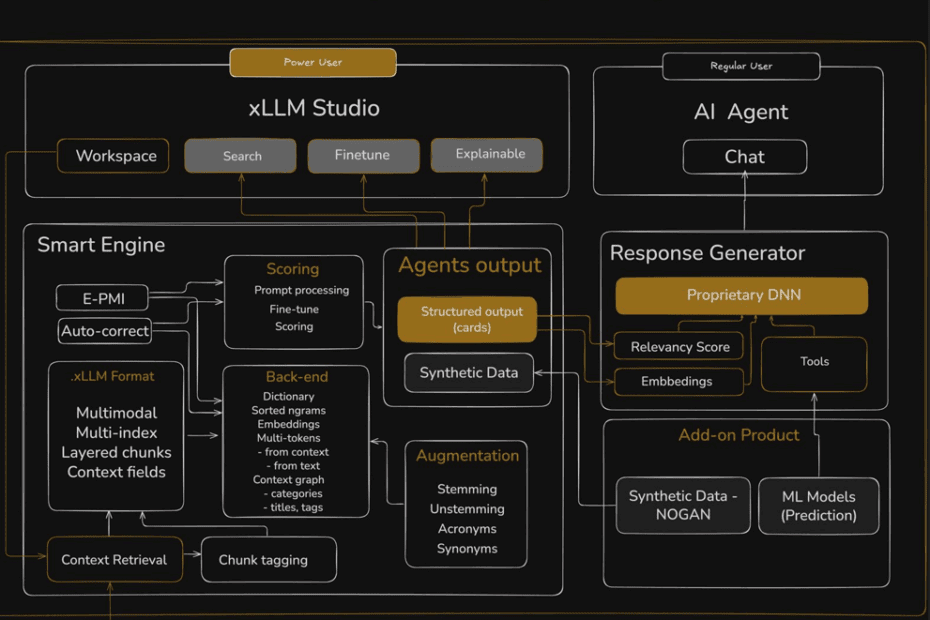

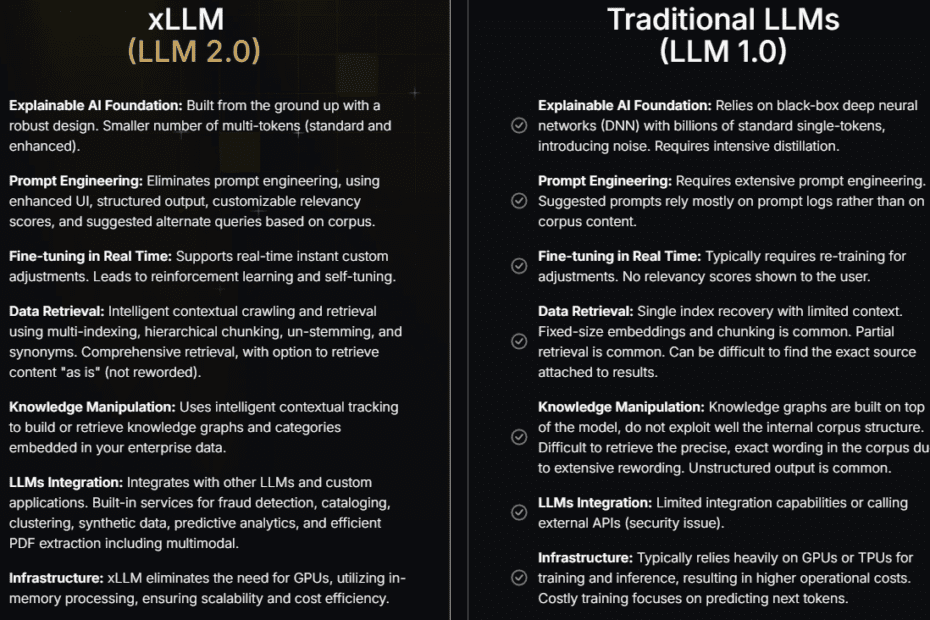

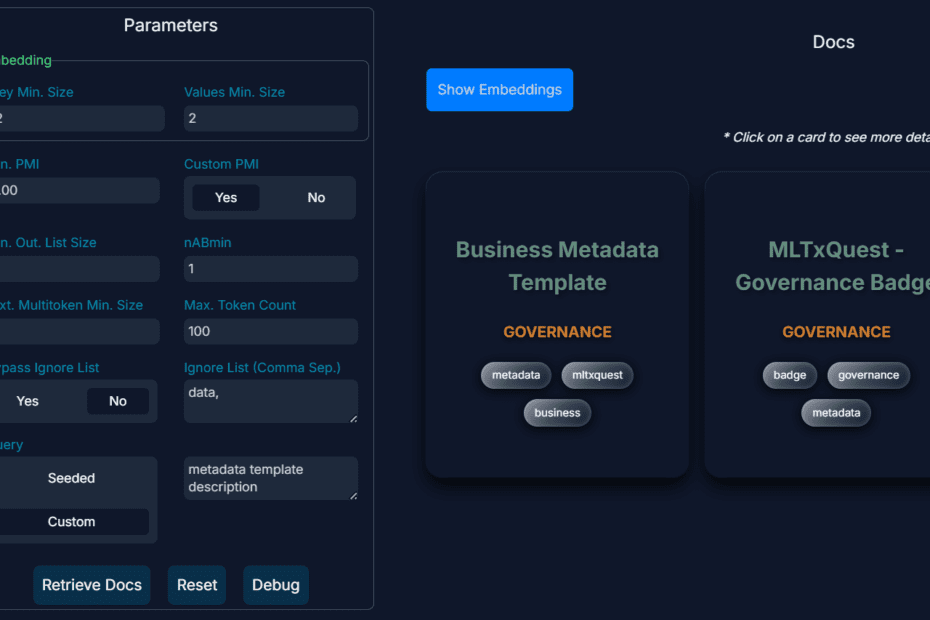

How to Get AI to Deliver Superior ROI, Faster

Reducing total cost of ownership (TCO) is a topic familiar to all enterprise executives and stakeholders. Here, I discuss optimization strategies in the context of…