For inference from a regression analysis to be trustworthy, the estimator must have certain properties. In statistics, a linear estimator that is unbiased and has the smallest variance among all linear unbiased estimators is called the best linear unbiased estimator, abbreviated BLUE. The data to be processed must also meet certain requirements. These requirements are known as the classical assumptions, and checking them is called classical assumption testing. When the classical assumptions are met, the estimated coefficients are justifiable, accurate estimators of the parameters. The assumptions include:

- No multicollinearity. The independent variables in the regression model must not be collinear with one another. Multicollinearity is a condition in which there is a perfect or near-perfect linear relationship among the independent variables.

- Homoscedasticity. The variance of the error term must be the same (constant) across observations. A regression model that deviates from this condition is said to exhibit heteroscedasticity.

- Homogeneity. The sample data should be drawn from populations that have the same range or variance.

- No autocorrelation (serial correlation), that is, no correlation among observations ordered in time, as in time series data. This means that the variables in the model do not influence one another across time lags. A violation occurs when the current value of a variable affects values in later periods.

- The independent variables must take fixed values in repeated sampling, meaning that the independent variables are uncorrelated with the error term in each observation.

- Normally distributed errors, that is, the disturbance term follows a normal distribution. This matters for the validity, stationarity, and reliability of the data in the variables used.

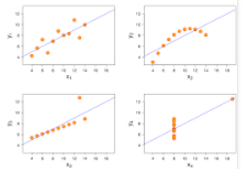

- Linearity, that is, the linear specification of the regression model must be correct. Before concluding that a linear regression model is the best model, linearity should be tested first.

All of these classical assumptions must be met in order to build a regression model that can be justified. Testing them is intended to ensure that the parameter estimators are unbiased, efficient, and consistent, so that the regression equation can be trusted.
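
As an illustration of how one of these assumptions might be checked, the sketch below computes the variance inflation factor (VIF) for each independent variable. The VIF is a common multicollinearity diagnostic, but it is not named in the text above, so treat its use here as an assumption. The function name `vif` and the simulated data are hypothetical.

```python
import numpy as np

def vif(X):
    """Variance inflation factor for each column of X (n_samples x n_features).

    Each column is regressed on the remaining columns plus an intercept;
    VIF_j = 1 / (1 - R_j^2). Perfectly collinear columns would divide by zero.
    """
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        y = X[:, j]
        others = np.delete(X, j, axis=1)
        A = np.column_stack([np.ones(n), others])
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        resid = y - A @ coef
        r2 = 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)
        out[j] = 1.0 / (1.0 - r2)
    return out

# Simulated data (assumption): x3 is almost a copy of x1, x2 is unrelated.
rng = np.random.default_rng(0)
x1 = rng.normal(size=200)
x2 = rng.normal(size=200)
x3 = x1 + 0.05 * rng.normal(size=200)

vifs = vif(np.column_stack([x1, x2, x3]))
print(vifs)  # x1 and x3 get large values, x2 stays close to 1
```

A frequently quoted rule of thumb treats a VIF above 10 as a sign of serious multicollinearity, although the threshold is a convention rather than a formal test.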

But what is the problem?

In statistics, the classical assumptions are often treated as fulfilled, because the aim is simply to find a causal relationship in which the independent variables affect the dependent variable. In economics, that presumption does not hold, because economic variables behave in ways that can violate these assumptions.

It follows that assumptions taken for granted in statistics need to be re-examined. In practice this means reprocessing the existing data: adding or removing data, combining data, transforming data into another form (such as differences or integrals), and so on. This can also be described as manipulating the data in order to transform the regression model so that it is expected to meet the classical assumptions. For example, if a regression model exhibits multicollinearity, a way must be found to correct that deviation. Ways of addressing multicollinearity include:

- Checking on theoretical grounds whether the independent variables are related. This means looking for theoretical support in the literature when selecting independent variables.

- Combining cross-section data with time series data, which is known as pooling the data.

- Removing one of the variables from the model.

- Transforming the existing variables in the model.

- Adding new data, that is, increasing the number of observations.
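
The effect of removing one of two collinear variables can be sketched with simulated data. The example below is a minimal illustration under stated assumptions, not a recommended procedure: the helper `ols_std_errors`, the data, and the coefficient values are all hypothetical. It compares the standard error of the coefficient on `x1` before and after dropping the nearly identical variable `x2`.

```python
import numpy as np

def ols_std_errors(X, y):
    """Fit OLS with an intercept; return coefficients and their standard errors."""
    n = len(y)
    A = np.column_stack([np.ones(n), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    sigma2 = (resid @ resid) / (n - A.shape[1])  # unbiased error variance
    cov = sigma2 * np.linalg.inv(A.T @ A)        # classical OLS covariance
    return coef, np.sqrt(np.diag(cov))

# Simulated data (assumption): x2 is almost a copy of x1; y depends on x1 only.
rng = np.random.default_rng(2)
n = 200
x1 = rng.normal(size=n)
x2 = x1 + 0.05 * rng.normal(size=n)
y = 1.0 + 2.0 * x1 + rng.normal(size=n)

_, se_full = ols_std_errors(np.column_stack([x1, x2]), y)
_, se_reduced = ols_std_errors(x1.reshape(-1, 1), y)

# Index 1 is the coefficient on x1 (index 0 is the intercept).
print("SE of x1 with x2 in the model:", se_full[1])
print("SE of x1 after dropping x2:   ", se_reduced[1])
```

In this simulation `x2` has no effect of its own, so dropping it costs nothing. If the dropped variable were actually relevant, removing it would introduce a specification problem of its own.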

Violations of the classical assumptions arise from identifiable causes. Take the assumption that a regression model must not contain autocorrelation. The causes of autocorrelation include:

- Inertia. This occurs especially in time series data: changes in economic conditions usually do not take effect immediately. For example, when the BI rate changes, other banks need at least three months to adjust.

- Specification bias. A regression model that, for whatever reason, leaves out one or more relevant variables may exhibit autocorrelation. Such a model is said to suffer from specification bias. A relevant variable should therefore remain in the model, even if it gives rise to autocorrelation, so that the estimates are not biased.

- Wrong functional form. Autocorrelation arises from errors in specifying the function, which induce autocorrelation in the disturbance term. For example, a relationship that should be expressed as a nonlinear function is expressed as a linear one.

- Time lag. This is related to the first cause, inertia. If the dependent variable is influenced not only by the independent variables but also by its own value in the previous period, autocorrelation can result. For example, exports are influenced not only by inflation in the current period but also by exports in the previous period.
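
The kind of carry-over described above can be made visible with the Durbin-Watson statistic, a standard diagnostic for first-order autocorrelation in regression residuals. The text does not name this statistic, so its use here is an assumption, and the simulated series and the persistence value of 0.8 are hypothetical. Values near 2 suggest no first-order autocorrelation, while values well below 2 suggest positive autocorrelation.

```python
import numpy as np

def durbin_watson(resid):
    """Durbin-Watson statistic: sum of squared first differences over sum of squares."""
    resid = np.asarray(resid, dtype=float)
    return np.sum(np.diff(resid) ** 2) / np.sum(resid ** 2)

# Simulated data (assumption): the disturbance carries 80% of its
# previous value into the next period, mimicking inertia.
rng = np.random.default_rng(1)
n = 500
x = rng.normal(size=n)
shock = rng.normal(size=n)
e = np.zeros(n)
for t in range(1, n):
    e[t] = 0.8 * e[t - 1] + shock[t]
y = 1.0 + 2.0 * x + e

# Fit y on x by OLS and inspect the residuals.
A = np.column_stack([np.ones(n), x])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
resid = y - A @ coef

dw_ar = durbin_watson(resid)     # well below 2: positive autocorrelation
dw_white = durbin_watson(shock)  # close to 2: no autocorrelation
print(dw_ar, dw_white)
```

The statistic only detects first-order autocorrelation, and its usual critical values do not apply when a lagged dependent variable is included among the regressors.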

Conclusion

In conclusion, several problems remain, both in how the economy is studied and in the methods of analysis used. Broadly, these problems include:

- The risk of economic uncertainty is still felt by the public, such as entrepreneurs, investors, and other business people. The government also regards the risk of economic uncertainty as a barrier to achieving economic goals. The government is expected to solve economic problems either through unilateral decrees and regulations or democratically through the legislative process.

- Information, in the form of data on economic factors available from the real world, is still not used optimally to support the achievement of economic goals, because the methods that exist today cannot make optimal use of it. For example, multiple regression analysis is considered, in theory, to be no longer effective when a model includes more than seven independent variables.

- The relations in economic theory ignore the effect of random variables, that is, they can be interpreted only deterministically. In economic theory, influences other than the variables included in the analysis are held constant. The analysis therefore aims only at results of a general nature.

- Regression analysis, the method recommended by economists and econometricians, is still not used to its full potential. This is because of the conditions (the classical assumptions) that must be met. According to experts, these requirements may be impossible to meet, yet they cannot be ignored either.

Adjusting the data to satisfy the classical assumptions in regression analysis is a simplification in the application of modern economics, which is an empirical science. Yet it ignores something important and fundamental: the adjustments change the real prices or values that were observed. The ability of regression analysis to remain fully empirical is therefore doubtful.

Based on this description of the problems, the next point concerns the questions that arise:

- Is there a tool or method of analysis that can eliminate, or at least minimize, the risk of uncertainty in the economy?

- Is there a tool or method of analysis that can optimize the use of information, in the form of data on these economic factors, to support the achievement of economic goals?

- Is there a new tool or analytical method that can address the shortcomings of existing methods of analysis?

The analyst should be a problem solver, not a troublemaker.