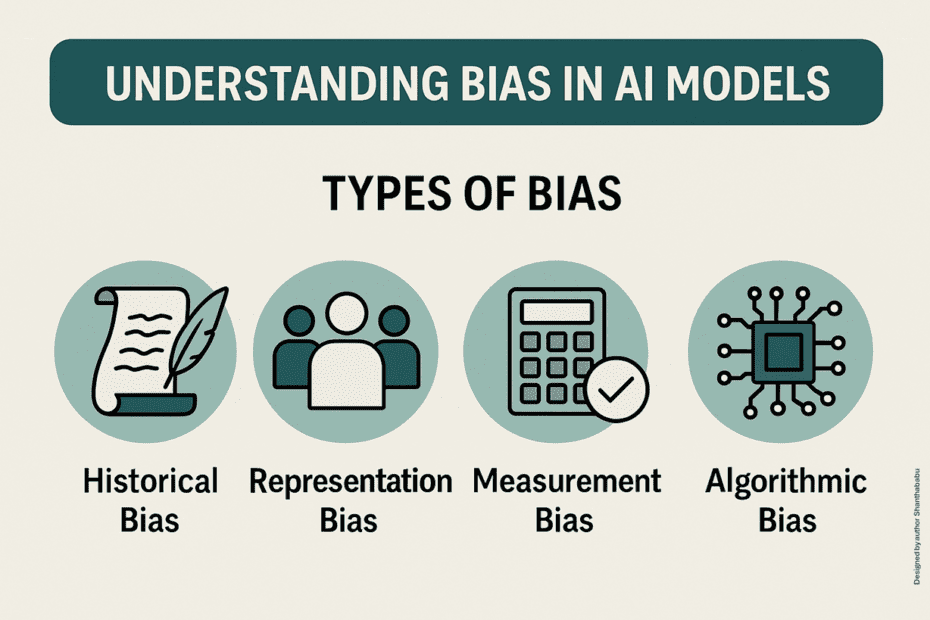

Ethics-driven model auditing and bias mitigation

Introduction Artificial intelligence (AI) and machine learning (ML) systems are becoming increasingly integral to decision-making processes across various industries, including healthcare, finance, education, law enforcement,… Read More »Ethics-driven model auditing and bias mitigation