Summary: If you’re on the outside looking in, AI/ML itself is the next big thing for many fields. But if you’re a data scientist, you can see the specific advancements that will propel AI/ML to its next phase of utility.

“The Next Big Thing in AI/ML is…” as the lead to an article is probably the most overused trope since “once upon a time”. Seriously, just how many ‘next big things’ can there be? Is your credulity not stretched every time you read that?

It’s tempting to say that writers who start an article this way should be flogged… except that yours truly recently started one with “the next most IMPORTANT thing in AI/ML…” Well, that’s clearly different, isn’t it? Almost.

If you label something the ‘next big thing’, it’s evident that you have a strong opinion, or that your marketing department has no imagination.

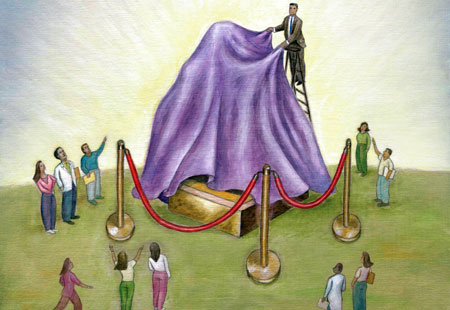

First of all, if you’re on the outside of AI/ML looking in, AI/ML clearly is the next big thing. Most next-big-thing articles are actually in this category, explaining how AI/ML can enhance everything from your dating life to your investment portfolio.

But if you’re fortunate enough to be on the inside, as our readers are, then you know that the future of AI/ML is developing along many different paths, and that some of those paths matter more than others. Some are technical, some are applications, and some are even social or philosophical. So how do we tell what the next big thing is, or at least what the rankings should be?
