|

Announcements

Get the performance you need to transform massive amounts of data into insights and create amazing customer experiences with NVIDIA-powered workstations for data science. Built by leading workstation providers, they combine the power of NVIDIA® RTX™ GPUs with accelerated CUDA-X AI data science software to deliver a new breed of fully integrated workstations for data science.

Earn your MS in Data Science from Northwestern and build statistical and analytic expertise as well as the management and leadership skills needed to implement high-level, data-driven decisions in a wide range of fields. Study entirely online in classes led by leading data science professionals.

The Hardest Part of Machine Learning? Getting Good Source Data

I talk to a lot of people involved in the data science and machine learning space every week: some vendors, some company CDOs, many just people in the trenches, trying to build good data models and make them monetizable. When I ask what part of the data science pipeline they have the hardest time with, the answer is almost invariably “We can’t get enough good data.”

This is not just a problem with machine learning, however. Knowledge Graph projects have run aground because they discover that too much of the data that they have lacks sufficient complexity (read: connectivity) to make modeling worthwhile. The data is often poorly curated, poorly organized, and lacking in semantic metadata. Some data, especially personal data, is heavily duplicated, has keys that have been lost in context, and in many cases cannot in fact be collected without a court order. Large relational databases have been moved into data lakes or enterprise data warehouses, but the data within them often heavily reflects operational rather than contextual information, made worse by the fact that many programmers have at best only limited training in true data modeling practices.
What this means is that the content that drives the initial training of the data model is noisy, with the signal so weak that any optimizations made in the model itself may simply let the data scientist reach the wrong conclusions faster.

Effective data strategy involves assessing the acquisition of the data from the beginning, and recognizing that this acquisition will require the expenditure of money, time, and personnel. There are reasons why data aggregators tend to benefit heavily from being early adopters: they discovered this truth the hard way, and made the investment to make their businesses data scoops, with effective data acquisition and ingestion strategies, rather than just assuming that the relational databases in the back office actually had worthwhile grist for the mill.

As data science and machine learning pipelines become more pervasive in organizations and more automated, through MLOps and similar processes, this need for good source data is likely to be one that every organization’s CDO needs to attend to as soon as possible. After all, garbage in can only mean garbage out.

In media res,
Kurt Cagle

To subscribe to the DSC Newsletter, go to Data Science Central and become a member today. It’s free!

Data Science Central Editorial Calendar

DSC is looking for editorial content specifically in these areas for July, with these topics having higher priority than other incoming articles.

DSC Featured Articles

TechTarget Articles

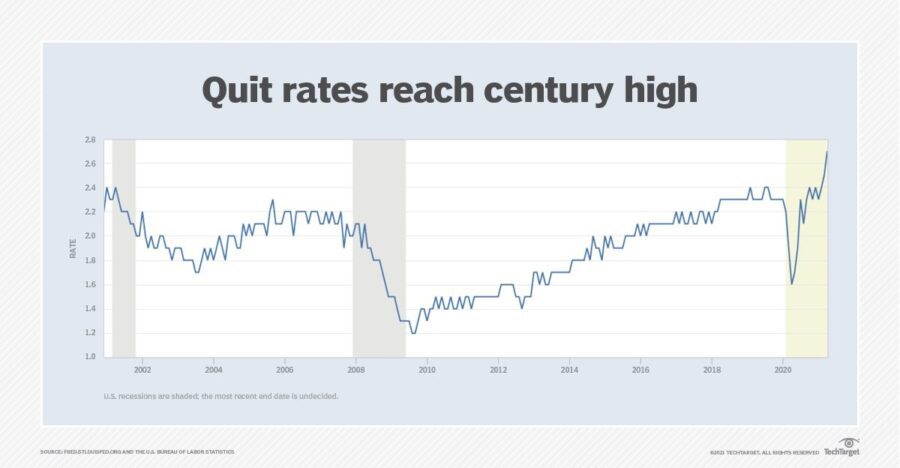

Picture of the Week



This email, and all related content, is published by Data Science Central, a division of TechTarget, Inc.

275 Grove Street, Newton, Massachusetts, 02466 US. Copyright 2021 TechTarget, Inc. All rights reserved. Designated trademarks, brands, logos and service marks are the property of their respective owners. |