As I completed this blog series, the European Union (EU) announced its AI Act, which seeks to ensure the ethical and safe deployment of AI in the EU. Coming on the heels of the White House’s “Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence,” the AI Act signals that we should expect a steady stream of legislation designed to ensure that AI delivers meaningful, relevant, responsible, and ethical outcomes.

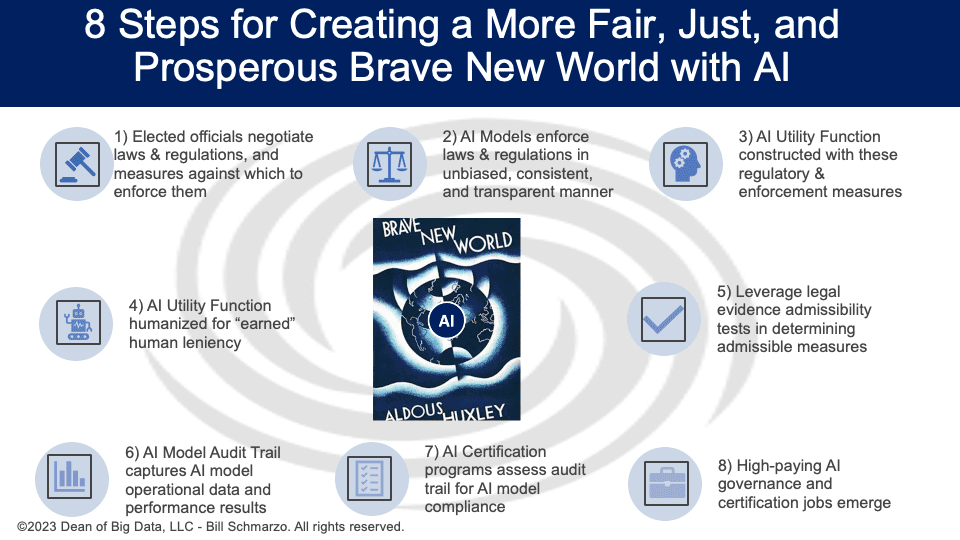

The mandate to deploy AI in a manner that creates a more fair, just, and prosperous world for everyone is upon us. What key concepts and actions must we undertake to achieve the just and fair application of AI? Well, here is one view of how to achieve that goal:

(1) Officials Negotiate Laws and Regulations. Elected legislators would continue to define our society’s laws and regulations. Unfortunately, the biased and opaque enforcement of today’s laws and regulations leads to manipulation, distrust, anger, and citizen disenfranchisement.

(2) AI Models Enforce Laws and Regulations. To address these issues of manipulation, distrust, anger, and citizen disenfranchisement, we would create AI models that enforce society’s laws and regulations in a more unbiased, consistent, and transparent manner. For each law or regulation, legislators would need to collaborate with diverse groups of constituents to identify the desired outcomes, their relative importance (weights), and the measures against which compliance would be enforced.

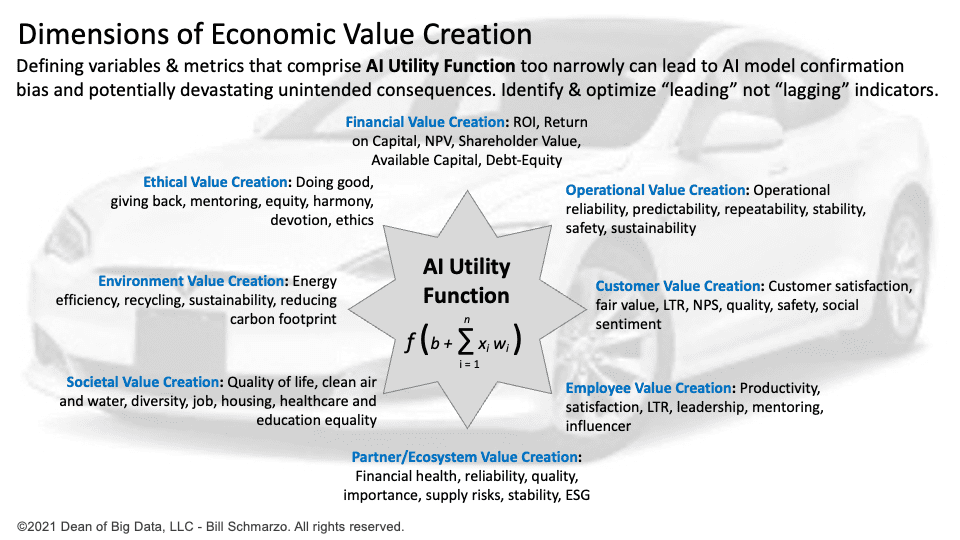

(3) AI Utility Function Engineered for Compliance. The AI Utility Function, a mathematical function that evaluates the different actions the AI system can take, would encode the desired outcomes of the laws and regulations, their importance (weights), and their supporting measures. The AI Utility Function would then enforce those laws and regulations in an unbiased, consistent, and transparent manner.
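One simple way to picture such a utility function is as a weighted sum: each desired outcome gets a weight, each candidate action gets a measure for how well it achieves each outcome, and the action with the highest total score wins. The sketch below assumes that linear form and measures normalized to the range 0 to 1; the names `Outcome`, `utility`, and `choose_action`, and the example outcomes and numbers, are hypothetical illustrations rather than part of any real enforcement system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """A desired outcome of a law or regulation (hypothetical structure)."""
    name: str
    weight: float  # relative importance agreed on by legislators and constituents


def utility(outcomes: list[Outcome], measures: dict[str, float]) -> float:
    """Score one candidate action as the weighted average of its outcome measures.

    `measures` maps each outcome name to a score in [0, 1] describing how
    well the action achieves that outcome.
    """
    total_weight = sum(o.weight for o in outcomes)
    return sum(o.weight * measures[o.name] for o in outcomes) / total_weight


def choose_action(outcomes: list[Outcome],
                  candidate_actions: dict[str, dict[str, float]]) -> str:
    """Return the candidate action with the highest utility score."""
    return max(candidate_actions,
               key=lambda action: utility(outcomes, candidate_actions[action]))


if __name__ == "__main__":
    outcomes = [Outcome("public_safety", 0.5), Outcome("fairness", 0.3),
                Outcome("cost", 0.2)]
    actions = {
        "warning": {"public_safety": 0.6, "fairness": 0.9, "cost": 0.9},
        "fine": {"public_safety": 0.8, "fairness": 0.6, "cost": 0.7},
    }
    print(choose_action(outcomes, actions))  # → warning (0.75 vs 0.72)
```

Because the weights and measures are plain data, anyone can recompute the score for a given decision, which is the kind of transparency the step above calls for.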

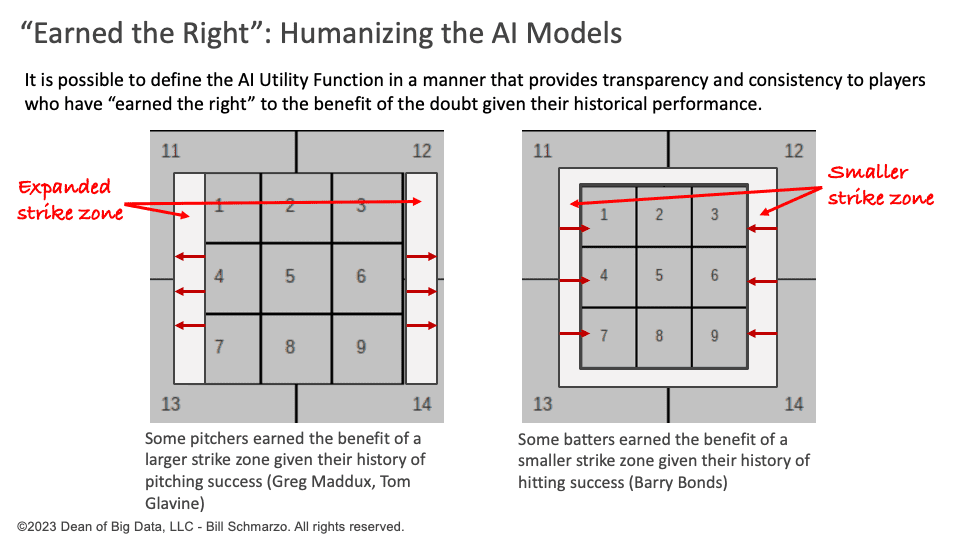

(4) AI Utility Function Becomes More Human. To address concerns about authoritarian decision-making by AI models, we can expand the variables and metrics in the AI Utility Function to provide enforcement “leniency” for those “who have earned the benefit” of leniency consideration. Whenever leniency is granted, the measures that drove that decision would be transparent to everyone.
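Here is a minimal sketch of “earned leniency,” assuming leniency is a capped credit, computed from a person’s compliance history, that is subtracted from a violation’s severity before the enforcement decision is made. The leniency measures, their weights, the cap, and the penalty threshold are all hypothetical placeholders; in the scenario above they would be negotiated by legislators and constituents. The key point is that the decision carries the measures that produced it.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    action: str
    score: float
    # Raw contribution of each leniency measure, recorded so the reasoning
    # is transparent (the total actually applied is capped at MAX_LENIENCY).
    leniency_factors: dict[str, float] = field(default_factory=dict)


# Hypothetical leniency measures and weights, not values from any real system.
LENIENCY_WEIGHTS = {
    "years_without_violation": 0.05,  # credit per clean year
    "prior_compliance_rate": 0.3,     # share of past checks passed, in [0, 1]
}
MAX_LENIENCY = 0.4       # hypothetical cap on total leniency
PENALTY_THRESHOLD = 0.5  # hypothetical score at or above which a penalty applies


def decide(violation_severity: float, history: dict[str, float]) -> Decision:
    """Reduce the enforcement score by earned leniency and record why."""
    factors = {
        name: weight * history.get(name, 0.0)
        for name, weight in LENIENCY_WEIGHTS.items()
    }
    leniency = min(sum(factors.values()), MAX_LENIENCY)
    score = violation_severity - leniency
    action = "penalty" if score >= PENALTY_THRESHOLD else "warning"
    return Decision(action, score, factors)


if __name__ == "__main__":
    # A driver with four clean years and a 90% compliance rate earns a warning.
    print(decide(0.7, {"years_without_violation": 4, "prior_compliance_rate": 0.9}))
    # The same violation with no earned history results in a penalty.
    print(decide(0.7, {}))
```

Capping the credit is one design choice for keeping leniency from erasing enforcement entirely; other caps or formulas would be equally plausible.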

(5) Leverage Legal Evidence Admissibility Tests. We would leverage time-tested standards for the admissibility of evidence, such as the Frye and Daubert standards, to help select the measures that comprise a healthy, unbiased AI Utility Function. This would help safeguard the protected classes of constituents as defined by our legal systems.
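As an illustration, a Daubert-inspired screen can be applied to each candidate measure before it is allowed into the utility function. The Daubert factors include whether a technique can be and has been tested, whether it has been peer reviewed, its known error rate, and its general acceptance in the relevant community (the Frye standard centers on general acceptance). This sketch treats those factors as a strict checklist, which is a simplification since courts weigh them flexibly. The error-rate tolerance and the extra protected-attribute exclusion are hypothetical assumptions, not part of either legal standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CandidateMeasure:
    name: str
    tested: bool              # can it be (and has it been) empirically tested?
    peer_reviewed: bool       # subjected to peer review and publication?
    error_rate: float         # known or estimated error rate
    generally_accepted: bool  # accepted in the relevant expert community?
    # Assumed extra check: relies on a protected attribute or a known proxy.
    uses_protected_attribute: bool = False


MAX_ERROR_RATE = 0.05  # hypothetical tolerance


def admissible(m: CandidateMeasure) -> bool:
    """Daubert-style checklist plus an assumed protected-attribute exclusion."""
    if m.uses_protected_attribute:
        return False
    return (m.tested and m.peer_reviewed
            and m.error_rate <= MAX_ERROR_RATE
            and m.generally_accepted)


def select_measures(candidates: list[CandidateMeasure]) -> list[str]:
    """Keep only the measures that pass the admissibility screen."""
    return [m.name for m in candidates if admissible(m)]


if __name__ == "__main__":
    candidates = [
        CandidateMeasure("radar_speed", True, True, 0.02, True),
        CandidateMeasure("zip_code_risk", True, True, 0.03, True,
                         uses_protected_attribute=True),
        CandidateMeasure("officer_hunch", False, False, 0.30, False),
    ]
    print(select_measures(candidates))  # → ['radar_speed']
```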

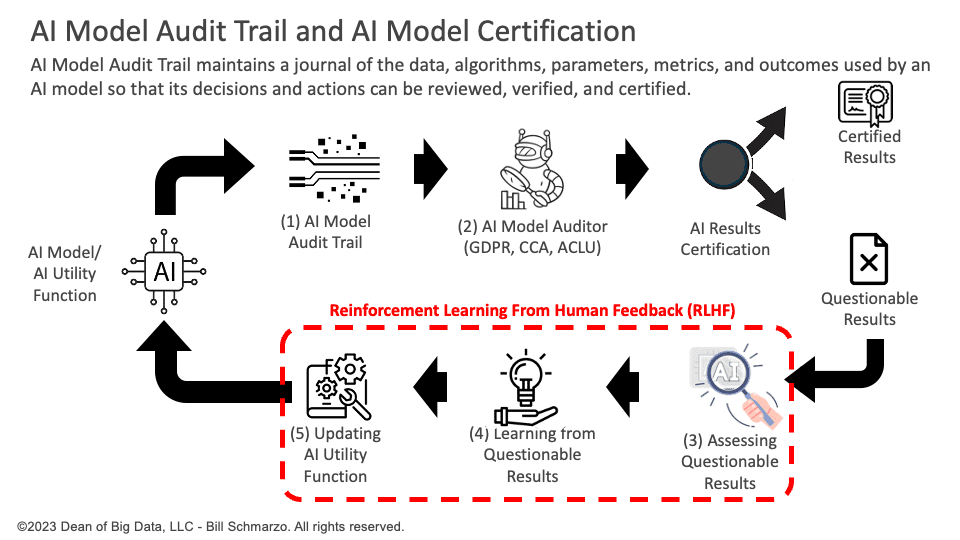

(6) AI Model Audit Trail Tracks AI Governance. To monitor the ongoing effectiveness and fairness of the AI models, we would mandate an AI Model Audit Trail that captures each model’s desired outcomes, their respective “weights,” and the metrics and variable weights in effect at the time of any decision or action.
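To make the audit trail concrete, here is a minimal sketch of one possible record format: each entry captures the outcome weights, variable weights, and metrics in effect when a decision was made. The field names, the `AuditTrail` class, and the hash chaining (each record stores the SHA-256 hash of the previous one so that after-the-fact edits are detectable) are hypothetical design choices for illustration, not a prescribed standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    model_id: str
    model_version: str
    timestamp: str
    outcome_weights: dict[str, float]   # desired outcomes and their weights
    variable_weights: dict[str, float]  # variable/metric weights in effect
    metrics: dict[str, float]           # measured values used for this decision
    decision: str
    prev_hash: str                      # hash of the previous record


class AuditTrail:
    """Append-only, hash-chained log of AI model decisions (hypothetical design)."""

    def __init__(self) -> None:
        self.records: list[AuditRecord] = []
        self.hashes: list[str] = []

    @staticmethod
    def _digest(record: AuditRecord) -> str:
        # Serialize deterministically so the same record always hashes the same.
        payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def log(self, model_id: str, model_version: str,
            outcome_weights: dict[str, float], variable_weights: dict[str, float],
            metrics: dict[str, float], decision: str) -> AuditRecord:
        prev = self.hashes[-1] if self.hashes else "genesis"
        record = AuditRecord(
            model_id, model_version, datetime.now(timezone.utc).isoformat(),
            dict(outcome_weights), dict(variable_weights), dict(metrics),
            decision, prev,
        )
        self.records.append(record)
        self.hashes.append(self._digest(record))
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks it."""
        prev = "genesis"
        for record, stored in zip(self.records, self.hashes):
            if record.prev_hash != prev or self._digest(record) != stored:
                return False
            prev = stored
        return True
```

A certifying auditor could call `verify()` before analyzing the trail, so that any grading is based on records that have not been altered since they were logged.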

(7) AI Certification Programs Assess AI Compliance. Government, independent agencies, and third-party auditors would develop AI Certification models (e.g., GDPR AI Enforcement Model, CCPA AI Enforcement App, ACLU Enforcement App, EY Enforcement App) to analyze, assess, benchmark, and grade the AI models’ audit trails and officially certify responsible and ethical performance.
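A certification model could start with two simple checks on an audit trail: did every decision use the officially registered weights, and are favorable decisions distributed comparably across groups? The sketch below assumes the auditor can attach a `group` label to each record (the audit trail described above does not itself include one) and borrows the “four-fifths” ratio from US disparate-impact guidance as a hypothetical threshold. The record fields, the `certify` function, and the pass/fail grading are illustrative assumptions only.

```python
from collections import defaultdict


def favorable_rate_by_group(records: list[dict], favorable: str = "warning") -> dict[str, float]:
    """Share of decisions in each group that were the favorable outcome."""
    counts = defaultdict(lambda: [0, 0])  # group -> [favorable count, total]
    for r in records:
        tally = counts[r["group"]]
        if r["decision"] == favorable:
            tally[0] += 1
        tally[1] += 1
    return {group: fav / total for group, (fav, total) in counts.items()}


def certify(records: list[dict], registered_weights: dict[str, float],
            min_ratio: float = 0.8) -> tuple[str, list[str]]:
    """Grade an audit trail (hypothetical criteria).

    Fails if any decision used unregistered weights, or if any group's
    favorable rate falls below `min_ratio` of the best group's rate.
    """
    if not records:
        return "not certified", ["empty audit trail"]
    findings = []
    drifted = sum(1 for r in records if r["outcome_weights"] != registered_weights)
    if drifted:
        findings.append(f"{drifted} decision(s) used unregistered weights")
    rates = favorable_rate_by_group(records)
    best = max(rates.values())
    for group, rate in sorted(rates.items()):
        if best > 0 and rate / best < min_ratio:
            findings.append(
                f"group {group!r}: favorable rate {rate:.2f} vs best {best:.2f}")
    return ("certified" if not findings else "not certified"), findings
```

Real certification programs would need far richer criteria; the point of the sketch is that an audit trail with recorded weights and decisions makes such checks mechanical and repeatable.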

(8) New AI Governance Jobs. New, high-paying jobs would emerge to ensure AI’s relevant, meaningful, responsible, and ethical deployment, root out nefarious AI models, and prosecute organizations and their responsible senior executives for compliance violations in an unbiased, consistent, and transparent manner.

That’s eight steps to ensure the unbiased, transparent design and deployment of AI that delivers more relevant, meaningful, responsible, and ethical outcomes: a mandate in which everyone’s roles, responsibilities, and rights are carefully and thoroughly articulated.

This does seem like the brave new world in which everyone would want to live (well, the nefarious evil-doers might not like this world).

=====

Note: You can find more details about these eight steps in the following supporting blogs:

In part 1 of the series “A Different AI Scenario: AI and Justice in a Brave New World,” I outlined some requirements for the role that AI would play in enforcing our laws and regulations in a more just and fair manner, and what our human legislators must do to ensure that outcome (Figure 1).

Figure 1: Dimensions of Economic Value Creation

In part 2, I discussed a real example of AI being used to enforce our laws and regulations in a more unbiased, consistent, and transparent manner (robot umpires) and what we can do to humanize those robot umpires (and AI) just a little. I also drew on time-tested standards that courts use to guide the admissibility of evidence and discussed how those lessons can help us build fairer and more just AI models in this brave new world of AI (Figure 2).

Figure 2: Humanizing AI Models for “Earned Leniency”

In part 3, I discussed the challenges of operationalizing the necessary AI governance and introduced the AI Model Audit Trail concept, along with how third parties could create AI certification models to ensure that AI models follow the laws and regulations and respect the rights and freedoms of data subjects and natural persons. Finally, I outlined the challenges to the AI Model Audit Trail concept and the steps we can take to ensure its viability.

Figure 3: AI Model Audit Trail Concept