This article was written by Vitaly Shmatikov.

Machine learning is eating the world. The abundance of training data has helped ML achieve amazing results for object recognition, natural language processing, predictive analytics, and all manner of other tasks. Much of this training data is very sensitive, including personal photos, search queries, location traces, and health-care records.

In a recent series of papers, we uncovered multiple privacy and integrity problems in today’s ML pipelines. Two kinds of pipeline stand out: online services such as Amazon ML and Google Prediction API, which create ML models on demand for non-expert users, and federated learning (also called collaborative learning), which lets multiple users create a joint ML model while keeping their data private. Imagine millions of smartphones jointly training a predictive keyboard on users’ typed messages.

Our Oakland 2017 paper, which has just received the PET Award for Outstanding Research in Privacy Enhancing Technologies, concretely shows how to perform membership inference, i.e., determine if a certain data record was used to train an ML model. Membership inference has a long history in privacy research, especially in genetic privacy and generally whenever statistics about individuals are released. It also has beneficial applications, such as detecting inappropriate uses of personal data.

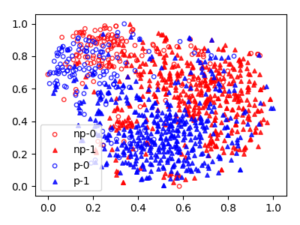

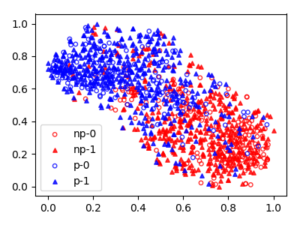

We focus on classifiers, a popular type of ML model. Apps and online services use classifiers to recognize which objects appear in images, to categorize consumers based on their purchase patterns, and to perform similar tasks. We show that if a classifier is open to public access – via an online API or indirectly via an app or service that uses it internally – an adversary can query it and tell from its output whether a certain record was used during training. For example, if a classifier based on a patient study is used for predictive health care, membership inference can leak whether or not a certain patient participated in the study. If a (different) classifier categorizes mobile users based on their movement patterns, membership inference can leak which locations were visited by a certain user.
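To make the idea concrete, here is a minimal toy sketch of membership inference by confidence thresholding. It is not the attack from our paper: it is a much simpler baseline, and everything in it is an assumption made for illustration (the synthetic data, the overparameterized linear "classifier", the `scale` factor, and the 0.9 threshold). The adversary queries the model on a record whose label it knows and guesses "member" when the model is very confident in that label.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy data: 500 features, only the first carries a noisy signal.
n, d = 100, 500

def sample(count):
    x = rng.normal(size=(count, d))
    y = np.where(x[:, 0] + rng.normal(size=count) > 0, 1.0, -1.0)
    return x, y

x_in, y_in = sample(n)    # records used for training (members)
x_out, y_out = sample(n)  # fresh records from the same distribution (non-members)

# Stand-in for an overfit model: with more features than records, the
# minimum-norm least-squares solution fits every training label exactly.
w = np.linalg.pinv(x_in) @ y_in

def true_label_confidence(x, y, scale=5.0):
    """Confidence the model assigns to the record's known label (sigmoid of the margin)."""
    return 1.0 / (1.0 + np.exp(-scale * y * (x @ w)))

conf_in = true_label_confidence(x_in, y_in)
conf_out = true_label_confidence(x_out, y_out)

# The attack: guess "member" whenever the confidence exceeds a threshold.
threshold = 0.9
guess_in = conf_in > threshold
guess_out = conf_out > threshold

# Balanced accuracy of the membership guess; 0.5 would be random guessing.
attack_accuracy = 0.5 * (guess_in.mean() + (1.0 - guess_out.mean()))
print(f"attack accuracy: {attack_accuracy:.2f}")
```

Because this toy model memorizes its training set, its confidence on members is uniformly high, while on unseen records it varies widely. That gap is all the adversary needs, and it is why a threshold on the model's output does noticeably better than chance here.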

There are several technical reasons why ML models are vulnerable to membership inference, including “overfitting” and “memorization” of the training data, but these are symptoms of a bigger problem. Modern ML models, especially deep neural networks, are massive computation and storage systems with millions of high-precision floating-point parameters. They are typically evaluated solely by their test accuracy, i.e., how well they classify the data that they did not train on. Yet they can achieve high test accuracy without using all of their capacity. In addition to asking whether a model has learned its task well, we should ask: what else has the model learned? What does this “unintended learning” mean for the privacy and integrity of ML models?

Deep networks can learn features that are unrelated – even statistically uncorrelated! – to their assigned task. For example, here are the features learned by a binary gender classifier trained on the “Labeled Faces in the Wild” dataset.

