Here I discuss general applications of statistical models, whether they arise from data science, operations research, engineering, machine learning or statistics. I do not cover specific algorithms such as decision trees, logistic regression, Bayesian modeling, Markov models, data reduction or feature selection. Instead, I discuss frameworks, each one using its own types of techniques and algorithms, to solve real-life problems.

Most of the entries below are found in Wikipedia, and I have used a few definitions or extracts from the relevant Wikipedia articles, in addition to personal contributions.

1. Spatial Models

Spatial dependency is the co-variation of properties within geographic space: characteristics at proximal locations appear to be correlated, either positively or negatively. Spatial dependency leads to the spatial auto-correlation problem in statistics since, like temporal auto-correlation, it violates the assumption of independence among observations on which standard statistical techniques rely.
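One common way to quantify spatial auto-correlation is Moran's I, which is not named in the text above and is used here only as an illustration. The sketch below assumes a hypothetical neighbourhood structure (sites arranged on a line, where only adjacent sites are neighbours) and made-up values.

```python
def morans_i(values, weights):
    """Moran's I for observations x_i and a spatial weight matrix w_ij (w_ii = 0)."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    w_sum = sum(sum(row) for row in weights)
    # Cross-products of deviations, counted only for neighbouring pairs.
    num = sum(weights[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n))
    den = sum(d * d for d in dev)
    return (n / w_sum) * (num / den)


def chain_weights(n):
    """Hypothetical layout: n sites on a line, adjacent sites are neighbours."""
    return [[1 if abs(i - j) == 1 else 0 for j in range(n)] for i in range(n)]


w = chain_weights(6)
smooth = [1, 2, 3, 4, 5, 6]        # nearby sites have similar values
alternating = [1, 6, 1, 6, 1, 6]   # nearby sites have opposite values

print(morans_i(smooth, w))       # positive spatial auto-correlation
print(morans_i(alternating, w))  # negative spatial auto-correlation
```

A value near zero would suggest no spatial dependency under this particular choice of neighbours; the choice of weight matrix is itself a modeling assumption.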

2. Time Series

Methods for time series analysis may be divided into two classes: frequency-domain methods and time-domain methods. The former include spectral analysis and, more recently, wavelet analysis; the latter include auto-correlation and cross-correlation analysis. In the time domain, correlation analyses can be made in a filter-like manner using scaled correlation, thereby mitigating the need to operate in the frequency domain.

Additionally, time series analysis techniques may be divided into parametric and non-parametric methods. The parametric approaches assume that the underlying stationary stochastic process has a certain structure which can be described using a small number of parameters (for example, using an autoregressive or moving average model). In these approaches, the task is to estimate the parameters of the model that describes the stochastic process. By contrast, non-parametric approaches explicitly estimate the covariance or the spectrum of the process without assuming that the process has any particular structure.
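To make the parametric versus non-parametric distinction concrete, here is a minimal sketch. It assumes a first-order autoregressive model, x_t = phi * x_{t-1} + noise, and estimates phi from the sample autocovariance (the Yule-Walker estimate). The function names and the simulated series are illustrative only.

```python
import random


def autocovariance(x, lag):
    """Non-parametric step: sample autocovariance at a given lag."""
    n = len(x)
    mean = sum(x) / n
    return sum((x[t] - mean) * (x[t + lag] - mean) for t in range(n - lag)) / n


def fit_ar1(x):
    """Parametric step: Yule-Walker estimate of phi for an assumed AR(1) model."""
    return autocovariance(x, 1) / autocovariance(x, 0)


def simulate_ar1(phi, n, seed=0):
    """Generate a synthetic AR(1) series with standard normal noise."""
    rng = random.Random(seed)
    x = [0.0]
    for _ in range(n - 1):
        x.append(phi * x[-1] + rng.gauss(0.0, 1.0))
    return x


series = simulate_ar1(phi=0.7, n=5000, seed=42)
phi_hat = fit_ar1(series)
print(phi_hat)  # should land close to the true value 0.7
```

The autocovariance function makes no assumption about structure; the AR(1) fit compresses the whole process into a single parameter.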

Methods of time series analysis may also be divided into linear and non-linear, and univariate and multivariate.

3. Survival Analysis

Survival analysis is a branch of statistics for analyzing the expected duration of time until one or more events happen, such as death in biological organisms and failure in mechanical systems. This topic is called reliability theory or reliability analysis in engineering, duration analysis or duration modelling in economics, and event history analysis in sociology. Survival analysis attempts to answer questions such as: what is the proportion of a population which will survive past a certain time? Of those that survive, at what rate will they die or fail? Can multiple causes of death or failure be taken into account? How do particular circumstances or characteristics increase or decrease the probability of survival? Survival models are used by actuaries and statisticians, but also by marketers designing churn and user retention models.
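The first question above (what proportion survives past a certain time?) is often answered with the Kaplan-Meier estimator. The text does not name it, so treat the following as one possible sketch, with made-up durations and a flag marking whether the event was observed or the subject was censored (for example, a customer who had not yet churned when the data was collected).

```python
def kaplan_meier(durations, observed):
    """Return [(time, survival probability)] at each distinct event time.

    observed[i] is True if the event happened at durations[i],
    False if the subject was censored at that time.
    """
    event_times = sorted({t for t, e in zip(durations, observed) if e})
    survival = 1.0
    curve = []
    for t in event_times:
        at_risk = sum(1 for d in durations if d >= t)
        events = sum(1 for d, e in zip(durations, observed) if d == t and e)
        survival *= 1.0 - events / at_risk
        curve.append((t, survival))
    return curve


# Hypothetical data: months until churn for seven users.
durations = [2, 3, 3, 5, 8, 8, 12]
observed = [True, True, False, True, True, False, False]

curve = kaplan_meier(durations, observed)
for time, prob in curve:
    print(time, round(prob, 3))
```

Censored subjects still count in the at-risk group until they drop out, which is what distinguishes this estimate from a naive survival proportion.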

Survival models are also used to predict time-to-event (time from becoming radicalized to turning into a terrorist, or time between when a gun is purchased and when it is used in a murder), or to model and predict decay (see section 4 in this article).

4. Market Segmentation

Market segmentation, also called customer profiling, is a marketing strategy which involves dividing a broad target market into subsets of consumers, businesses, or countries that have, or are perceived to have, common needs, interests, and priorities, and then designing and implementing strategies to target them. Market segmentation strategies are generally used to identify and further define the target customers, and provide supporting data for marketing plan elements such as positioning to achieve certain marketing plan objectives. Businesses may develop product differentiation strategies, or an undifferentiated approach, involving specific products or product lines depending on the specific demand and attributes of the target segment.

5. Recommendation Systems

Recommender systems or recommendation systems (sometimes replacing “system” with a synonym such as platform or engine) are a subclass of information filtering systems that seek to predict the ‘rating’ or ‘preference’ that a user would give to an item.
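As a rough illustration of predicting a rating, the sketch below uses user-based collaborative filtering with cosine similarity. This is only one of many possible approaches, and the user names, items and ratings are invented.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two users, computed over co-rated items only."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[i] * b[i] for i in shared)
    norm_a = math.sqrt(sum(a[i] ** 2 for i in shared))
    norm_b = math.sqrt(sum(b[i] ** 2 for i in shared))
    return dot / (norm_a * norm_b)


def predict_rating(ratings, user, item):
    """Similarity-weighted average of other users' ratings for the item."""
    num = den = 0.0
    for other, their in ratings.items():
        if other == user or item not in their:
            continue
        sim = cosine_similarity(ratings[user], their)
        num += sim * their[item]
        den += sim
    return num / den if den else None


# Hypothetical ratings on a 1-5 scale.
ratings = {
    "ann": {"A": 5, "B": 3, "C": 4},
    "bob": {"A": 5, "B": 3, "D": 5},
    "cat": {"A": 1, "B": 5, "D": 2},
}

predicted = predict_rating(ratings, "ann", "D")
print(predicted)  # leans toward bob's rating, since ann's tastes match bob's
```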

6. Association Rule Learning

Association rule learning is a method for discovering interesting relations between variables in large databases. For example, the rule { onions, potatoes } ==> { burger } found in the sales data of a supermarket would indicate that if a customer buys onions and potatoes together, they are likely to also buy hamburger meat. In fraud detection, association rules are used to detect patterns associated with fraud. Linkage analysis is performed to identify additional fraud cases: if a credit card belonging to user A was used to make a fraudulent purchase at store B, then by analyzing all transactions from store B, we might find another user C with fraudulent activity.
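The strength of a rule such as { onions, potatoes } ==> { burger } is usually measured with support, confidence and lift. The sketch below computes all three on a tiny, made-up set of baskets; the function names are illustrative.

```python
def support(transactions, itemset):
    """Fraction of transactions that contain every item in itemset."""
    itemset = set(itemset)
    return sum(1 for t in transactions if itemset <= set(t)) / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Estimated P(consequent | antecedent)."""
    both = set(antecedent) | set(consequent)
    return support(transactions, both) / support(transactions, antecedent)


def lift(transactions, antecedent, consequent):
    """Confidence relative to the baseline frequency of the consequent."""
    return confidence(transactions, antecedent, consequent) / support(transactions, consequent)


# Hypothetical supermarket baskets.
baskets = [
    {"onions", "potatoes", "burger"},
    {"onions", "potatoes", "burger"},
    {"onions", "potatoes"},
    {"potatoes", "milk"},
    {"burger", "milk"},
]

rule = ({"onions", "potatoes"}, {"burger"})
print(support(baskets, rule[0] | rule[1]))
print(confidence(baskets, *rule))
print(lift(baskets, *rule))  # above 1 means the rule beats the baseline
```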

7. Attribution Modeling

An attribution model is the rule, or set of rules, that determines how credit for sales and conversions is assigned to touchpoints in conversion paths. For example, the Last Interaction model in Google Analytics assigns 100% credit to the final touchpoints (i.e., clicks) that immediately precede sales or conversions. Macro-economic models use long-term, aggregated historical data to assign, for each sale or conversion, an attribution weight to a number of channels. These models are also used for advertising mix optimization.
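The sketch below shows how such a rule can be expressed in code. It implements a last-interaction rule as described above and, for contrast, an equal-split rule (often called linear attribution) that the text does not mention. The conversion paths, channel names and sale values are invented.

```python
from collections import defaultdict


def last_interaction(paths):
    """Assign 100% of each sale to the final touchpoint in its path."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        credit[touchpoints[-1]] += value
    return dict(credit)


def linear(paths):
    """Split each sale equally across all touchpoints in its path."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)


# Hypothetical conversion paths: (ordered touchpoints, sale value).
paths = [
    (["search", "email", "display"], 90.0),
    (["email", "search"], 60.0),
]

print(last_interaction(paths))
print(linear(paths))
```

The two rules credit the same total revenue but distribute it very differently, which is why the choice of attribution model matters for advertising mix decisions.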

8. Scoring

A scoring model is a special kind of predictive model. Predictive models can predict defaulting on loan payments, risk of accident, client churn or attrition, or chance of buying a good. Scoring models typically use a logarithmic scale (each additional 50 points in your score reducing the risk of defaulting by 50%), and are based on logistic regression and decision trees, or a combination of multiple algorithms. Scoring technology is typically applied to transactional data, sometimes in real time (credit card fraud detection, click fraud).
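The logarithmic scale mentioned above can be sketched as a pair of conversion functions. The 50-points-halves-the-risk rule comes from the text; the anchor point (a score of 600 corresponding to an 8% default risk) is purely hypothetical.

```python
import math

# Hypothetical anchor: a score of 600 corresponds to an 8% default risk.
BASE_SCORE = 600
BASE_RISK = 0.08
POINTS_TO_HALVE = 50  # from the text: +50 points cuts the risk by 50%


def risk_from_score(score):
    """Default risk implied by a score on the logarithmic scale."""
    return BASE_RISK * 0.5 ** ((score - BASE_SCORE) / POINTS_TO_HALVE)


def score_from_risk(risk):
    """Inverse mapping: the score that corresponds to a given default risk."""
    return BASE_SCORE + POINTS_TO_HALVE * math.log2(BASE_RISK / risk)


for score in (550, 600, 650, 700):
    print(score, risk_from_score(score))
```

In practice the risk fed into such a mapping would come from the underlying model (logistic regression, decision trees, or a blend), not from a fixed anchor.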

9. Predictive Modeling

Predictive modeling leverages statistics to predict outcomes. Most often the event one wants to predict is in the future, but predictive modeling can be applied to any type of unknown event, regardless of when it occurred. For example, predictive models are often used to detect crimes and identify suspects, after the crime has taken place. They may also be used for weather forecasting, to predict stock market prices, or to predict sales, incorporating time series or spatial models. Neural networks, linear regression, decision trees and naive Bayes are some of the techniques used for predictive modeling. They are associated with creating a training set, cross-validation, and model fitting and selection.

Some predictive systems do not use statistical models, but are data-driven instead.

10. Clustering

Cluster analysis or clustering is the task of grouping a set of objects in such a way that objects in the same group (called a cluster) are more similar (in some sense or another) to each other than to those in other groups (clusters). It is a main task of exploratory data mining, and a common technique for statistical data analysis, used in many fields, including machine learning, pattern recognition, image analysis, information retrieval, and bioinformatics.

Unlike supervised classification (below), clustering does not use training sets, though there are some hybrid implementations, called semi-supervised learning.
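As a minimal sketch of clustering without labels, here is a bare-bones k-means loop, one of many clustering techniques. The points and the choice of starting centroids are made up; real implementations typically pick starting centroids more carefully and stop once the assignments no longer change.

```python
def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(point, centroids):
    """Index of the centroid closest to the point."""
    return min(range(len(centroids)), key=lambda k: squared_distance(point, centroids[k]))


def kmeans(points, centroids, iterations=10):
    """Alternate between assigning points to centroids and recomputing centroids."""
    centroids = [tuple(c) for c in centroids]
    for _ in range(iterations):
        groups = [[] for _ in centroids]
        for p in points:
            groups[nearest(p, centroids)].append(p)
        # An empty group keeps its previous centroid.
        centroids = [
            tuple(sum(coord) / len(g) for coord in zip(*g)) if g else centroids[k]
            for k, g in enumerate(groups)
        ]
    labels = [nearest(p, centroids) for p in points]
    return centroids, labels


# Hypothetical 2-D observations forming two visible groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, labels = kmeans(points, centroids=[points[0], points[3]])
print(labels)
print(centroids)
```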

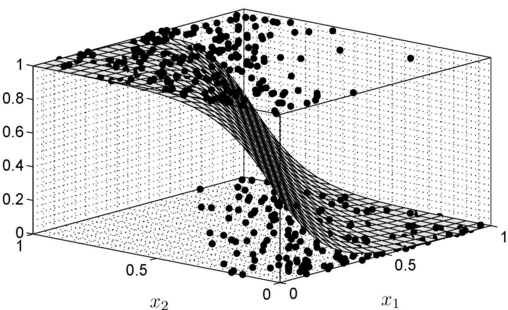

11. Supervised Classification

Supervised classification, also called supervised learning, is the machine learning task of inferring a function from labeled training data. The training data consist of a set of training examples. In supervised learning, each example is a pair consisting of an input object (typically a vector) and a desired output value (also called label, class or category). A supervised learning algorithm analyzes the training data and produces an inferred function, which can be used for mapping new examples. An optimal scenario will allow for the algorithm to correctly determine the class labels for unseen instances.
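To illustrate inferring a function from labeled training data, the sketch below uses a nearest-centroid classifier, chosen here only for brevity. The feature vectors and the labels "low" and "high" are invented.

```python
from collections import defaultdict


def fit_centroids(training_data):
    """Learn one centroid (mean feature vector) per label."""
    grouped = defaultdict(list)
    for features, label in training_data:
        grouped[label].append(features)
    return {
        label: tuple(sum(col) / len(rows) for col in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """The inferred function: return the label of the closest centroid."""
    return min(
        centroids,
        key=lambda label: sum((x - c) ** 2 for x, c in zip(features, centroids[label])),
    )


# Hypothetical labeled training examples: (input vector, desired output).
training_data = [
    ((1.0, 1.0), "low"),
    ((1.5, 2.0), "low"),
    ((8.0, 8.0), "high"),
    ((9.0, 8.5), "high"),
]

model = fit_centroids(training_data)
print(predict(model, (2.0, 1.0)))  # an unseen instance near the "low" examples
print(predict(model, (7.5, 9.0)))  # an unseen instance near the "high" examples
```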

Examples, with an emphasis on big data, can be found on DSC. Clustering algorithms are notoriously slow, though a very fast technique known as indexation or automated tagging will be described in Part II of this article.

12. Extreme Value Theory

Extreme value theory or extreme value analysis (EVA) is a branch of statistics dealing with the extreme deviations from the median of probability distributions. It seeks to assess, from a given ordered sample of a given random variable, the probability of events that are more extreme than any previously observed, for instance floods that occur once every 10, 100, or 500 years. These models have recently performed poorly at predicting catastrophic events, resulting in massive losses for insurance companies. I prefer Monte-Carlo simulations, especially if your training data is very large. This will be described in Part II of this article.
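The return periods above translate into probabilities as follows. This sketch is not a fitted extreme value distribution and is not the Monte-Carlo method promised for Part II; it only assumes that years are independent and that a "once every T years" event has an annual probability of 1/T, then checks the closed-form answer against a simple simulation.

```python
import random


def prob_at_least_one(return_period_years, horizon_years):
    """Chance of seeing at least one T-year event within the horizon."""
    annual_p = 1.0 / return_period_years
    return 1.0 - (1.0 - annual_p) ** horizon_years


def simulate(return_period_years, horizon_years, trials=20000, seed=1):
    """Monte-Carlo estimate of the same probability (independent years assumed)."""
    rng = random.Random(seed)
    annual_p = 1.0 / return_period_years
    hits = 0
    for _ in range(trials):
        if any(rng.random() < annual_p for _ in range(horizon_years)):
            hits += 1
    return hits / trials


# A 100-year flood over a 30-year horizon.
print(prob_at_least_one(100, 30))
print(simulate(100, 30))
```

Under these assumptions, a 100-year flood has roughly a one-in-four chance of occurring at least once over 30 years, which is far more likely than the label suggests.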
