- New research develops a robotic arm to restore mobility for people with disabilities.

- Advances in machine learning make accurate, universal controllers a possibility.

- Robotic prosthetics may be just around the corner.

Brain-computer interface (BCI) systems combine software and hardware to restore mobility and assist in medical diagnostics. Researchers at Cal State Northridge have recently developed a hands-free, machine-learning-based BCI controller for patients with paralysis and other serious physical disabilities that restrict limb movement [1]. The team built a semi-autonomous mobile robotic arm that responds to imagined hand squeezes and foot taps; on simple tasks, the controller recognized these commands with high accuracy. The results are set to pave the way for further research into ML-based mobility devices.

How BCI Systems Work

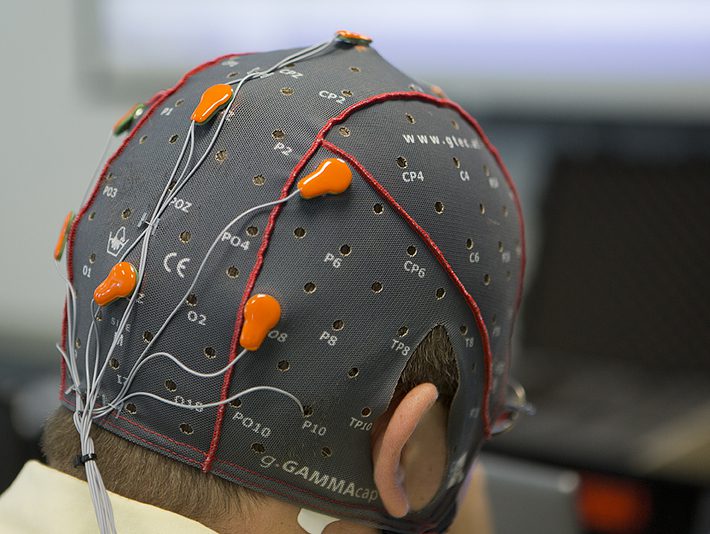

Brain-computer interface (BCI) systems connect the brain, via a wearable cap of electrodes, to an external device: in this case, an artificial limb. A stumbling block for past research is the sheer complexity of the brain, which contains around 100 billion neurons and produces signals that differ from person to person. As a result, a universal controller has been impossible to build with traditional mathematical modeling. However, recent advances in machine learning have made it possible to build BCI controllers that could work for everyone.

BCI controllers come in two types: synchronous and asynchronous. A synchronous controller extracts and processes signal features sequentially: one feature set must be fully processed before the next is handled. This approach is highly accurate, but its processing speed and response time are relatively slow. An asynchronous controller processes feature sets one after another without waiting for the previous instruction to finish. It responds more quickly but is less accurate. Despite this lower accuracy, the researchers chose an asynchronous controller because a robotic arm must respond quickly.
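The article does not describe how the asynchronous controller was implemented. As a rough illustration only, the Python sketch below assumes the synchronous case can be approximated by non-overlapping epochs (each window is classified before the next one starts) and the asynchronous case by an overlapping sliding window that issues a new decision every few samples. The function names, the 8-channel recording, the window and step sizes, and the placeholder classifier are all hypothetical.

```python
from typing import Callable

import numpy as np


def window_starts(n_samples: int, window: int, step: int) -> list[int]:
    """Start index of every full-length window, advancing by `step` samples."""
    if window <= 0 or step <= 0:
        raise ValueError("window and step must be positive")
    return list(range(0, n_samples - window + 1, step))


def classify_stream(
    signal: np.ndarray,
    window: int,
    step: int,
    classify: Callable[[np.ndarray], int],
) -> list[tuple[int, int]]:
    """Classify a (channels x samples) recording window by window.

    Returns (sample index at which the decision becomes available, label) pairs.
    Assumed mapping (hypothetical, not from the study):
      step == window -> non-overlapping epochs, standing in for the synchronous case
      step <  window -> overlapping sliding window, standing in for the asynchronous case
    """
    decisions = []
    for start in window_starts(signal.shape[-1], window, step):
        segment = signal[..., start:start + window]
        # A decision can only be issued once the whole window has been recorded.
        decisions.append((start + window, classify(segment)))
    return decisions


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    eeg = rng.standard_normal((8, 1000))  # hypothetical: 8 channels, 1000 samples

    def toy_classifier(seg: np.ndarray) -> int:
        # Placeholder rule, not a real decoder.
        return int(seg[0].var() > 1.0)

    sync = classify_stream(eeg, window=250, step=250, classify=toy_classifier)
    asyn = classify_stream(eeg, window=250, step=50, classify=toy_classifier)
    print([t for t, _ in sync])  # a decision every 250 samples
    print([t for t, _ in asyn])  # a decision every 50 samples once the first window fills
```

With a 250-sample window, the fixed-epoch version yields only four decisions over 1,000 samples, while a 50-sample step yields sixteen. The sketch models only when decisions become available; it says nothing about the accuracy trade-off the researchers describe.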

Creating the Machine Learning Model

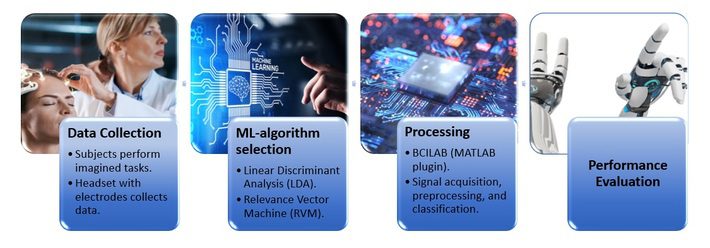

- Data collection: Brainwave data were recorded from three individuals. Each participant performed a physical action (e.g., squeezing the left hand) and then mentally imagined the same action; the physical movement came first so that subjects could imagine it more vividly. Each person performed more than sixty trials per task.

- ML-algorithm selection: The two algorithms chosen for the project were Linear Discriminant Analysis (LDA) and the Relevance Vector Machine (RVM). Although Support Vector Machine (SVM) algorithms are commonly used in BCI controller design, the researchers favored RVM because it is probabilistic and faster to train. LDA's decision boundary can be thought of as a hyperplane oriented to separate two classes of data. LDA is fast and can handle multiple classes, but a flat boundary is inflexible, so misclassifications are common when the classes overlap. The RVM takes a different approach: it builds a sparse probabilistic model around a small set of the most relevant training points (its "relevance vectors"), typically through a kernel, so its boundary need not be a flat plane. As a result, data points that are less distinct, which LDA has trouble classifying, can be handled more accurately.

- BCI controller model creation: BCILAB, a MATLAB toolbox for brain-computer interface research, was used for signal acquisition, preprocessing, and classification. The model reads through the cleaned training data, solves for common spatial pattern (CSP) filter weights, and extracts features with those spatial filters to maximize the discriminability of the two classes [2].

- Performance evaluation: Both versions of the controller (one per ML algorithm) were evaluated. The percentages of true positives, true negatives, false positives, and false negatives, along with the error rate, were recorded.
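The CSP step in the model-creation bullet can be illustrated with the textbook two-class algorithm. This is a minimal NumPy sketch, not BCILAB's MATLAB implementation; the function names, the trace normalization of each trial's covariance, and the log-variance features are common conventions assumed here rather than details reported by the study.

```python
import numpy as np


def normalized_covariance(trial: np.ndarray) -> np.ndarray:
    """Spatial covariance of one (channels x samples) trial, normalized by its trace."""
    centered = trial - trial.mean(axis=1, keepdims=True)
    cov = centered @ centered.T
    return cov / np.trace(cov)


def csp_filters(trials_a, trials_b, n_pairs: int = 1) -> np.ndarray:
    """Two-class CSP: return 2 * n_pairs spatial filters as rows (one column per channel)."""
    cov_a = np.mean([normalized_covariance(t) for t in trials_a], axis=0)
    cov_b = np.mean([normalized_covariance(t) for t in trials_b], axis=0)
    # Whiten the composite covariance so that P (C_a + C_b) P^T = I.
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whitening = np.diag(evals ** -0.5) @ evecs.T
    # In the whitened space C_a and C_b share eigenvectors and their eigenvalues sum to 1,
    # so a direction with high variance for class A has low variance for class B.
    lam, basis = np.linalg.eigh(whitening @ cov_a @ whitening.T)  # lam is ascending
    filters = basis.T @ whitening
    # Keep filters from both ends: smallest lam (class B dominant), largest lam (class A dominant).
    keep = np.r_[np.arange(n_pairs), np.arange(len(lam) - n_pairs, len(lam))]
    return filters[keep]


def csp_features(trial: np.ndarray, filters: np.ndarray) -> np.ndarray:
    """Log of the normalized variance of each spatially filtered signal."""
    variances = (filters @ trial).var(axis=1)
    return np.log(variances / variances.sum())
```

The feature vector from `csp_features` (one value per retained filter) would then be passed to a classifier such as LDA or an RVM. With `n_pairs=1`, the first feature tends to be larger for class B trials and the second for class A trials.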
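The LDA description in the algorithm-selection bullet (a hyperplane that separates two classes) corresponds to Fisher's linear discriminant. The following is a minimal two-class sketch in NumPy, assuming equal class priors and a covariance shared by both classes; `fit_lda` and `predict_lda` are hypothetical names, and this is not the study's code.

```python
import numpy as np


def fit_lda(x_a: np.ndarray, x_b: np.ndarray) -> tuple[np.ndarray, float]:
    """Fisher LDA for two classes; rows are trials, columns are features.

    Returns weights w and bias b of the separating hyperplane w.x + b = 0.
    """
    mu_a, mu_b = x_a.mean(axis=0), x_b.mean(axis=0)
    centered_a, centered_b = x_a - mu_a, x_b - mu_b
    # Pooled within-class covariance (LDA assumes both classes share it).
    pooled = (centered_a.T @ centered_a + centered_b.T @ centered_b) / (len(x_a) + len(x_b) - 2)
    w = np.linalg.solve(pooled, mu_a - mu_b)
    # Place the boundary halfway between the class means (equal-prior assumption).
    b = -float(w @ (mu_a + mu_b)) / 2.0
    return w, b


def predict_lda(x: np.ndarray, w: np.ndarray, b: float) -> np.ndarray:
    """1 for points on class A's side of the hyperplane, 0 for class B's side."""
    return (x @ w + b > 0).astype(int)
```

Because the boundary is a single hyperplane, a pattern such as XOR (class A in two opposite quadrants, class B in the other two) cannot be separated by it, which is the inflexibility the bullet describes.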
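The performance-evaluation bullet lists true and false positive and negative percentages plus the error rate. For a two-class task these can be tallied as in the sketch below; the `Outcomes` and `count_outcomes` names, and the choice of which imagined movement counts as the "positive" class, are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcomes:
    tp: int
    tn: int
    fp: int
    fn: int

    @property
    def total(self) -> int:
        return self.tp + self.tn + self.fp + self.fn

    def percentages(self) -> dict[str, float]:
        """Each outcome, plus the error rate, as a percentage of all trials."""
        t = self.total
        return {
            "TP": 100 * self.tp / t,
            "TN": 100 * self.tn / t,
            "FP": 100 * self.fp / t,
            "FN": 100 * self.fn / t,
            "error rate": 100 * (self.fp + self.fn) / t,
        }


def count_outcomes(y_true, y_pred, positive=1) -> Outcomes:
    """Tally a two-class confusion matrix, treating `positive` as the positive class
    (hypothetically, e.g. 1 = imagined left-hand squeeze)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    tp = tn = fp = fn = 0
    for truth, guess in zip(y_true, y_pred):
        if guess == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return Outcomes(tp, tn, fp, fn)
```

For example, `count_outcomes([1, 1, 1, 0, 0, 0, 1, 0], [1, 0, 1, 0, 1, 0, 1, 0])` gives 3 TP, 3 TN, 1 FP, and 1 FN, so the error rate is 25% and the accuracy (100 minus the error rate) is 75%, which would clear the 70% threshold discussed under Results.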

Results

Both algorithms produced a BCI controller that performed accurately on simple tasks, such as two-class (hands-only) tasks. However, both models struggled to meet the 70% accuracy threshold needed for an effective BCI controller when five or six tasks were involved. Although these results were disappointing, the researchers noted several ways to increase accuracy going forward. These include:

- Expand the subject pool to find generalized behavior for future models.

- Create a calibration stage between the user and controller before using the robotic device.

- Create a continuous data file, including brain activity during scheduled breaks. Due to software limitations, the research had to be performed with spliced data recordings, which may have increased the variance of the recorded brain signals.

- Refine the ML-algorithm to increase accuracy by adjusting the specific learning function, covariance, number of iterations, and other factors.
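One way to see why the 70% bar is harder to reach, and worth more, with five or six tasks than with two is the information transfer rate of Wolpaw et al., a standard BCI measure that the article itself does not use. Chance accuracy for N equally likely classes is 1/N, and the bits conveyed per selection at accuracy P are B = log2(N) + P log2(P) + (1 - P) log2((1 - P) / (N - 1)). The function below is a sketch of that formula; its name is hypothetical.

```python
import math


def wolpaw_bits(n_classes: int, accuracy: float) -> float:
    """Bits per selection for an N-class BCI at accuracy P (Wolpaw formula).

    Assumes equally likely classes and errors spread evenly over the wrong classes;
    the formula is only meaningful for accuracy >= 1 / n_classes (chance level).
    """
    if n_classes < 2:
        raise ValueError("need at least two classes")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    n, p = n_classes, accuracy
    bits = math.log2(n)
    if p > 0:  # p * log2(p) -> 0 as p -> 0
        bits += p * math.log2(p)
    if p < 1:  # (1 - p) * log2(...) -> 0 as p -> 1
        bits += (1 - p) * math.log2((1 - p) / (n - 1))
    return bits


if __name__ == "__main__":
    for n in (2, 5, 6):
        print(f"{n} classes: chance {1 / n:.1%}, bits at 70% = {wolpaw_bits(n, 0.7):.2f}")
```

At 70% accuracy this gives roughly 0.12 bits per selection for two classes, about 0.84 bits for five, and about 1.01 bits for six, so a six-task controller at the same accuracy would convey far more information per command.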

More research is underway to develop a more accurate multi-class BCI system, one that can perform a variety of tasks with an accuracy above 70%. Beyond improving quality of life for people with disabilities, the system could also serve as a controller for able-bodied people working in extreme environments.

References

Electrode cap: Credit: NASA/Sean Smith.

All other images: Adobe Creative Cloud [Licensed]