Hyperparameter tuning is time-consuming and computationally expensive because it requires testing numerous combinations before reaching the optimum values. The available tuning libraries do not fully solve these issues: many of them explore the solution space randomly, while others are built for general use and do not adequately account for the hidden features of deep learning optimization. In this article, I am glad to introduce the state-of-the-art aisaratuners library, the first AI-based tuning library tailored for Keras hyperparameter optimization. Support for sklearn and PyTorch will be introduced in the future.

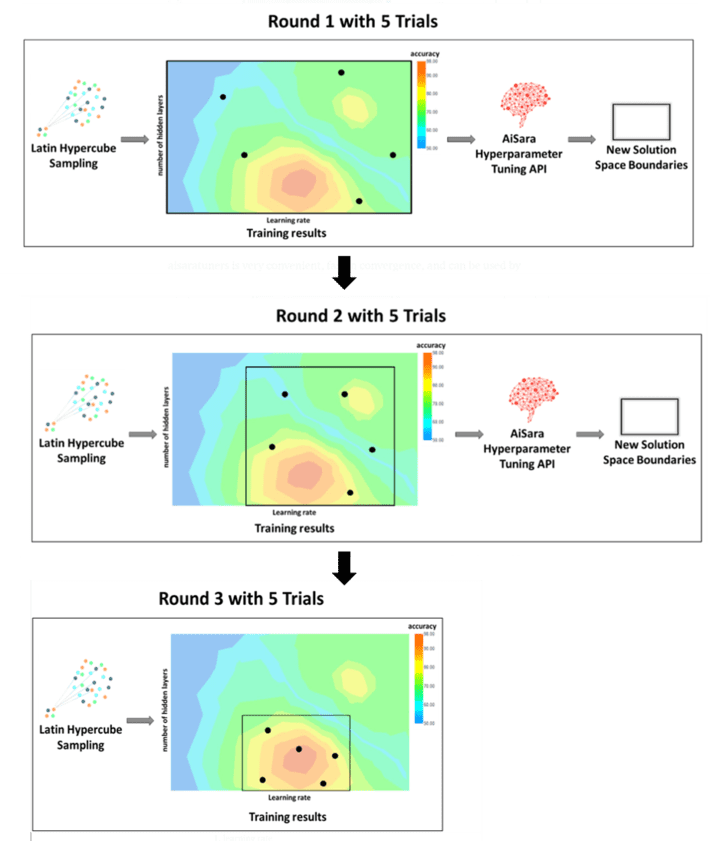

Even though aisaratuners is newly released, its innovative workflow and disruptive embedded technology make it stand out compared to other tuning libraries. In plain English, the library uses the AiSara Hyperparameter Tuning API to reduce the solution space boundaries in stages (rounds) during the optimization process. The analogy is a satellite system triangulating to find an exact GPS location.

how aisaratuners reduces the hyperparameter solution space boundaries during optimization
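
To make the idea of shrinking the solution space in rounds more concrete, here is a minimal sketch in plain Python. It is not the AiSara Hyperparameter Tuning API and does not reproduce its method: it uses simple random sampling and keeps the bounding box of the best trials in each round, purely as an illustration. The function `narrow_search_space`, the `toy_objective`, and all parameter names are assumptions made for this example.

```python
import random


def narrow_search_space(objective, bounds, rounds=3, trials_per_round=10, keep=3, seed=0):
    """Illustrative staged search (an assumption, not the AiSara algorithm).

    Each round samples trials uniformly inside the current bounds, keeps the
    best `keep` trials, and shrinks the bounds to the range those trials span.
    """
    rng = random.Random(seed)
    bounds = dict(bounds)
    best_score, best_params = float("-inf"), None
    history = []  # bounds after each round, to inspect how the space shrinks

    for _round in range(rounds):
        trials = []
        for _trial in range(trials_per_round):
            params = {name: rng.uniform(low, high) for name, (low, high) in bounds.items()}
            score = objective(params)
            trials.append((score, params))
            if score > best_score:
                best_score, best_params = score, params

        # Keep the top trials and use their min/max as the next round's bounds.
        trials.sort(key=lambda trial: trial[0], reverse=True)
        top = [params for _score, params in trials[:keep]]
        bounds = {
            name: (min(p[name] for p in top), max(p[name] for p in top))
            for name in bounds
        }
        history.append(bounds)

    return best_params, best_score, history


def toy_objective(params):
    """Stand-in for validation accuracy: peaks at lr=0.01, dropout=0.3."""
    return -((params["learning_rate"] - 0.01) ** 2) - (params["dropout_rate"] - 0.3) ** 2


initial_bounds = {"learning_rate": (0.0001, 0.1), "dropout_rate": (0.0, 0.5)}
best_params, best_score, history = narrow_search_space(toy_objective, initial_bounds)

for round_number, round_bounds in enumerate(history, start=1):
    print(f"round {round_number}: {round_bounds}")
print("best:", best_params, best_score)
```

In a real run, `toy_objective` would be replaced by training a Keras model and returning its validation metric, which is why keeping the number of trials per round small matters.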

Aisaratuners is convenient and easy to use, and it requires fewer trials and therefore consumes fewer computational resources. The user needs to make only minimal, understandable changes to the code and follow 3 simple steps: defining the hyperparameters, building the model, and specifying the number of trials and rounds before running the optimization. For now, aisaratuners only accepts numerical hyperparameters, but we plan to include categorical ones soon. Some of the numerical hyperparameters that can be tuned using aisaratuners include:

1. learning rate

2. number of epochs

3. batch size

4. dropout rate

5. number of layers

6. number of units and filters
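
The three steps can be sketched in plain Python as follows. This is not the aisaratuners API: `NumericRange`, `SEARCH_SPACE`, `describe_model`, and the chosen bounds are hypothetical names and values invented for illustration, and the model is represented as a list of layer descriptions rather than a real Keras model so the example runs without any dependencies.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class NumericRange:
    """A numerical hyperparameter with inclusive bounds (hypothetical helper)."""
    name: str
    low: float
    high: float
    integer: bool = False    # round the sampled value to a whole number
    log_scale: bool = False  # sample uniformly in log space (useful for learning rate)

    def sample(self, rng):
        if self.log_scale:
            value = math.exp(rng.uniform(math.log(self.low), math.log(self.high)))
        else:
            value = rng.uniform(self.low, self.high)
        value = min(max(value, self.low), self.high)  # guard against float rounding
        return int(round(value)) if self.integer else value


# Step 1: define the numerical hyperparameters (bounds are assumptions).
SEARCH_SPACE = [
    NumericRange("learning_rate", 1e-4, 1e-1, log_scale=True),
    NumericRange("epochs", 5, 30, integer=True),
    NumericRange("batch_size", 16, 128, integer=True),
    NumericRange("dropout_rate", 0.0, 0.5),
    NumericRange("num_layers", 1, 4, integer=True),
    NumericRange("units", 32, 256, integer=True),
]


# Step 2: build the model from one set of hyperparameter values.
# A real version would create Keras layers here instead of tuples.
def describe_model(params, num_classes=10):
    layers = []
    for _ in range(params["num_layers"]):
        layers.append(("Dense", params["units"]))
        layers.append(("Dropout", params["dropout_rate"]))
    layers.append(("Dense", num_classes))
    return layers


# Step 3: specify the number of rounds and trials, then generate the trials.
NUM_ROUNDS = 3
TRIALS_PER_ROUND = 5

rng = random.Random(42)
trials = [
    {r.name: r.sample(rng) for r in SEARCH_SPACE}
    for _ in range(NUM_ROUNDS * TRIALS_PER_ROUND)
]

print(len(trials), "trials planned")
print(describe_model(trials[0]))
```

The total training budget in this sketch is simply rounds multiplied by trials per round, which is the number the user controls in the third step.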

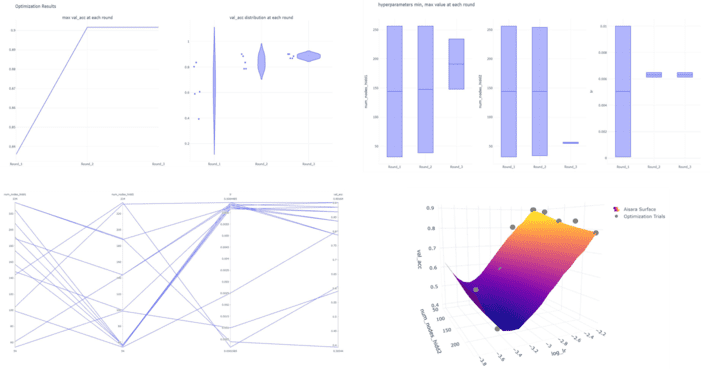

Aisaratuners contains many functions that provide the user with interactive visualizations of the results summary. These include the best accuracy and the accuracy distribution at each round, a parallel coordinates plot of the performance of each trial, the convergence of each hyperparameter to its best value range during each round, and a 3D visualization of the solution space.

interactive visualizations of the results summary in aisaratuners

We invite you to try the aisaratuners library for fast and convenient hyperparameter tuning. We would also like to hear about your experience using aisaratuners, so please comment below or email us at [email protected]

Note: aisaratuners is free for private use (this also includes hackathons, Kaggle competitions, and teaching materials on paid or free education platforms). For commercial use, please register via RapidAPI.

To learn more about aisaratuners, please check the links below: