Background – How many cats does it take to identify a Cat?

In this article, I cover the 12 types of AI problems, i.e. I address the question: in which scenarios should you use Artificial Intelligence (AI)? We cover this space in the Enterprise AI course.

Some background:

Recently, I conducted a strategy workshop for a group of senior executives running a large multinational. In the workshop, one person asked the question: how many cats does it take to identify a Cat?

This question refers to Andrew Ng’s famous paper on Deep Learning, where he was able to correctly identify images of cats from YouTube videos. On one level, the answer is very clear, because Andrew Ng lists that number in his paper: 10 million images. But the answer is incomplete because the question itself is limiting. There are a lot more details in the implementation, for example training on a cluster with 1,000 machines (16,000 cores) for three days. I wanted to present a more detailed response to the question.

Also, many problems can be solved using traditional Machine Learning algorithms, as per an excellent post from Brandon Rohrer: which algorithm family can answer my question. So, in this post I discuss problems that can be uniquely addressed through AI. This is not an exact taxonomy but I believe it is comprehensive. I have intentionally emphasized Enterprise AI problems because I believe AI will affect many mainstream applications, although a lot of media attention goes to the more esoteric applications.

What problem does Deep Learning address?

What is Deep Learning?

First, let us explore what Deep Learning is.

Deep learning refers to artificial neural networks that are composed of many layers. The ‘Deep’ refers to multiple layers. In contrast, many other machine learning algorithms like SVM are shallow because they do not have a Deep architecture through multiple layers. The Deep architecture allows subsequent computations to build upon previous ones. We currently have deep learning networks with 10+ and even 100+ layers.

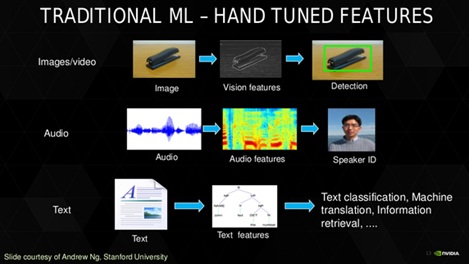

The presence of multiple layers allows the network to learn more abstract features. Thus, the higher layers of the network can learn more abstract features building on the inputs from the lower layers. A Deep Learning network can be seen as a Feature extraction layer with a Classification layer on top. The power of deep learning is not in its classification skills, but rather in its feature extraction skills. Feature extraction is automatic (without human intervention) and multi-layered.
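The layered structure described above can be sketched in a few lines of plain Python. This is only an illustration, not the architecture of any particular network: the layer sizes, the random weights, and the helper names `dense_layer`, `make_layer` and `forward` are all assumptions made for the example. The point is simply that each layer consumes the output of the one before it.

```python
import random


def dense_layer(inputs, weights, biases):
    """One fully connected layer followed by a ReLU non-linearity."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        activation = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(max(0.0, activation))  # ReLU keeps only positive signals
    return outputs


def make_layer(n_inputs, n_outputs, rng):
    """Random (untrained) weights; a real network would learn these."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_inputs)] for _ in range(n_outputs)]
    biases = [0.0] * n_outputs
    return weights, biases


def forward(inputs, layers):
    """Pass the input through every layer in turn; each layer builds on the previous one."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations


rng = random.Random(0)
layer_sizes = [4, 8, 8, 8, 2]  # hypothetical sizes: 4 inputs, three hidden layers, 2 outputs
layers = [make_layer(n_in, n_out, rng) for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]
output = forward([0.5, -1.2, 3.0, 0.1], layers)
print(len(layers), "layers ->", len(output), "outputs")
```

In this sketch the first three layers play the role of the feature extractor and the last layer plays the role of the classifier on top.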

The network is trained by exposing it to a large number of labelled examples. Errors are detected and the weights of the connections between the neurons adjusted to improve results. The optimisation process is repeated to create a tuned network. Once deployed, unlabelled images can be assessed based on the tuned network.
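The training loop described above can be illustrated with a deliberately tiny case: a single neuron trained by gradient descent on four labelled examples. This is a sketch under stated assumptions, not how a production network is trained. The toy dataset (a logical AND), the learning rate, the number of epochs and the function names `train` and `predict` are all chosen only for the example.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(features, weights, bias):
    """Output of a single neuron: a value between 0 and 1."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)


def train(examples, learning_rate=0.5, epochs=2000):
    """Repeatedly measure the error on each labelled example and adjust the weights."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict(features, weights, bias) - label  # detected error
            weights = [w - learning_rate * error * x for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


# Hypothetical labelled data: the label is 1 only when both inputs are 1.
labelled_examples = [([0.0, 0.0], 0), ([0.0, 1.0], 0), ([1.0, 0.0], 0), ([1.0, 1.0], 1)]
weights, bias = train(labelled_examples)

# Once tuned, the neuron can assess inputs without being given the label.
predictions = [round(predict(features, weights, bias)) for features, _ in labelled_examples]
print(predictions)
```

A deep network repeats the same idea across many layers and millions of weights, which is why large numbers of labelled examples and substantial hardware are needed.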

Finding connections between variables and packaging them into a new single variable is called feature engineering. Deep Learning performs automated feature engineering. Automated feature engineering is the defining characteristic of Deep Learning, especially for unstructured data such as images. This matters because the alternative is engineering features by hand, which is slow, cumbersome and dependent on the domain knowledge of the person or people performing the engineering.
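To make hand-made feature engineering concrete, here is a minimal sketch in which two raw variables are packaged into one new variable. The field names (`monthly_debt`, `monthly_income`), the derived `debt_to_income` feature and the sample records are hypothetical. They stand in for the kind of domain knowledge a human analyst would have to supply.

```python
def add_debt_to_income(record):
    """Combine two raw variables into a single hand-engineered feature."""
    enriched = dict(record)  # copy so the original record is left untouched
    enriched["debt_to_income"] = record["monthly_debt"] / record["monthly_income"]
    return enriched


# Hypothetical loan applicants, used only for illustration.
applicants = [
    {"monthly_debt": 500.0, "monthly_income": 2000.0},
    {"monthly_debt": 1500.0, "monthly_income": 3000.0},
]

features = [add_debt_to_income(applicant) for applicant in applicants]
print([row["debt_to_income"] for row in features])
```

Someone had to know that this ratio is meaningful. For images or audio there is rarely such an obvious formula, which is where learned features become attractive.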

Deep Learning suits problems where the target function is complex and the datasets are large, with labelled examples of both positive and negative cases. Deep Learning also suits problems that involve Hierarchy and Abstraction.

Abstraction is a conceptual process by which general rules and concepts are derived from the usage and classification of specific examples. We can think of an abstraction as the creation of a ‘super-category’ which comprises the common features that describe the examples for a specific purpose but ignores the ‘local changes’ in each example. For example, the abstraction of a ‘Cat’ would comprise fur, whiskers etc. For Deep Learning, each layer is involved with detection of one characteristic and subsequent layers build upon previous ones. Hence, Deep Learning is used in situations where the problem domain comprises abstract and hierarchical concepts. Image recognition falls in this category. In contrast, a Spam detection problem that can be modelled neatly as a spreadsheet is probably not complex enough to warrant Deep Learning.

A more detailed explanation of this question can be found in this Quora thread.

AI vs. Deep Learning vs. Machine Learning

Before we explore types of AI applications, we need to also discuss the differences between the three terms AI vs. Deep Learning vs. Machine Learning.

The term Artificial Intelligence (AI) implies a machine that can Reason. A more complete list of AI characteristics (source: David Kelnar) is:

- Reasoning: the ability to solve problems through logical deduction

- Knowledge: the ability to represent knowledge about the world (the understanding that there are certain entities, events and situations in the world; those elements have properties; and those elements can be categorised.)

- Planning: the ability to set and achieve goals (there is a specific future state of the world that is desirable, and sequences of actions can be undertaken that will effect progress towards it)

- Communication: the ability to understand written and spoken language.

- Perception: the ability to deduce things about the world from visual images, sounds and other sensory inputs.

The holy grail of AI is artificial general intelligence (think Terminator!), which allows machines to function independently in a normal human environment. What we see today is mostly narrow AI (for example, the NEST thermostat). AI is evolving rapidly. A range of technologies drive AI currently. These include: image recognition and auto labelling, facial recognition, text to speech, speech to text, auto translation, sentiment analysis, and emotion analytics in image, video, text, and speech (source: Bill Vorhies). AI apps have also reached accuracies of 99%, in contrast to 95% just a few years back.

Improvements in Deep Learning algorithms drive AI. Deep Learning algorithms can detect patterns without the prior definition of features or characteristics. They can be seen as a hybrid form of supervised learning because you must still train the network with a large number of examples but without the requirement for predefining the characteristics of the examples (features). Deep Learning networks have made vast improvements due both to the algorithms themselves and to better hardware (specifically GPUs).

Finally, in a broad sense, the term Machine Learning means the application of any algorithm to a dataset to find a pattern in the data. This includes supervised and unsupervised algorithms for tasks such as segmentation, classification, or regression. Despite their popularity, there are many reasons why Deep learning algorithms will not make other Machine Learning algor…

12 types of AI problems

With this background, we now discuss the twelve types of AI problems.

1) Domain expert: Problems which involve Reasoning based on a complex body of knowledge

This includes tasks which are based on learning a body of knowledge (legal, financial etc.) and then formulating a process where the machine can simulate an expert in the field.

2) Domain extension: Problems which involve extending a complex body of Knowledge

Here, the machine learns a complex body of knowledge, such as information about existing medication, and can then suggest new insights to the domain itself, for example new drugs to cure diseases.

3) Complex Planner: Tasks which involve Planning

Many logistics and scheduling tasks can be done by current (non AI) algorithms. But increasingly, as the optimization becomes complex, AI could help. One example is the use of AI techniques in IoT for Sparse datasets. AI techniques help in this case because we have large and complex datasets where human beings cannot detect patterns but a machine can do so easily.

4) Better communicator: Tasks which involve improving existing communication

AI and Deep Learning benefit many communication modes such as automatic translation, intelligent agents etc.

5) New Perception: Tasks which involve Perception

AI and Deep Learning enable newer forms of Perception, which enable new services such as autonomous vehicles.

6) Enterprise AI: AI meets Re-engineering the corporation!

While autonomous vehicles etc. get a lot of media attention, AI will be deployed in almost all sectors of the economy. In each case, the same principles apply, i.e. AI will be used to create new insights from automatic feature detection via Deep Learning, which in turn help to optimize, improve or change a business process (over and above what can be done with traditional machine learning). I outlined some of these processes in financial services in a previous blog: Enterprise AI insights from the AI Europe event in London. In a wider sense, you could view this as Re-engineering the Corporation meets AI. This is very much part of the Enterprise AI course.

7) Enterprise AI: adding unstructured data and Cognitive capabilities to ERP and Datawarehousing

For reasons listed above, unstructured data offers a huge opportunity for Deep Learning and hence AI. As per Bernard Marr writing in Forbes: “The vast majority of the data available to most organizations is unstructured – call logs, emails, transcripts, video and audio data which, while full of valuable insights, can’t easily be universally formatted into rows and columns to make quantitative analysis straightforward. With advances in fields such as image recognition, sentiment analysis and natural language processing, this information is starting to give up its secrets, and mining it will become increasingly big business in 2017.” I very much agree with this. In practice, this will mean enhancing the features of ERP and Datawarehousing systems through Cognitive systems.

8) Problems which impact domains due to second order consequences of AI

David Kelnar says in The fourth industrial revolution a primer on artificial intelligenc…

“The second-order consequences of machine learning will exceed its immediate impact. Deep learning has improved computer vision, for example, to the point that autonomous vehicles (cars and trucks) are viable. But what will be their impact? Today, 90% of people and 80% of freight are transported via road in the UK. Autonomous vehicles alone will impact: safety (90% of accidents are caused by driver inattention); employment (2.2 million people work in the UK haulage and logistics industry, receiving an estimated £57B in annual salaries); insurance (Autonomous Research anticipates a 63% fall in UK car insurance premiums over time); sector economics (consumers are likely to use on-demand transportation services in place of car ownership); vehicle throughput; urban planning; regulation and more.”

9) Problems in the near future that could benefit from improved algorithms

A catch-all category for things which were not possible in the past but could be possible in the near future due to better algorithms or better hardware. For example, in Speech recognition, improvements continue to be made and currently the abilities of the machine equal those of a human. From 2012, Google used LSTMs to power the speech recognition system in Android. Just six weeks ago, Microsoft engineers reported that their system reached a word error rate of 5.9%, a figure roughly equal to that of human abilities, for the first time in history. The goalposts continue to move rapidly: for example, loom.ai is building an avatar that can capture your personality.
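For readers unfamiliar with the metric, word error rate is conventionally the number of word substitutions, deletions and insertions needed to turn the system's transcript into the reference transcript, divided by the number of words in the reference. The sketch below computes it with a standard edit-distance calculation. The function name `word_error_rate` and the sample sentences are assumptions for illustration, and this is not the evaluation code used by any of the systems mentioned above.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference transcript must contain at least one word")

    # previous[j] = edits needed to turn the reference words seen so far
    # into the first j hypothesis words.
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref_words)


# Hypothetical transcripts: one wrong word out of six.
wer = word_error_rate("the cat sat on the mat", "the cat sat on a mat")
print(f"{wer:.1%}")
```

A word error rate of 5.9% therefore means roughly six word-level mistakes per hundred words of reference speech.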

10) Evolution of Expert systems

Expert systems have been around for a long time. Much of the vision of Expert systems could be implemented in AI/Deep Learning algorithms in the near future. If you study the architecture of IBM Watson, you can see that the Watson strategy leads to an Expert system vision. Of course, the same ideas can be implemented independently of Watson today.

11) Super Long sequence pattern recognition

This domain is of personal interest to me due to my background with IoT (see my course at Oxford University, Data Science for Internet of Things). I got this title from a slide from Uber’s head of Deep Learning, whom I met at the AI Europe event in London. The application of AI techniques to sequential pattern recognition is still an early stage domain (and does not yet get the kind of attention that CNNs get, for example), but in my view, this will be a rapidly expanding space. For some background, see this thesis from Technische Universität München (TUM), Deep Learning For Sequential P…, and also this blog by Jakob Aungiers, LSTM Neural Network for Time Series Prediction.
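One common way to prepare a long sequence, such as a stream of IoT sensor readings, for a sequence model is to slice it into fixed-length windows, each paired with the value that follows it. The sketch below shows only that framing step. The helper name `make_windows`, the window size and the sample readings are hypothetical, and the model that would consume these pairs (for example an LSTM) is not shown.

```python
def make_windows(series, window_size):
    """Turn one long sequence into (window, next value) training pairs."""
    pairs = []
    for start in range(len(series) - window_size):
        window = series[start:start + window_size]
        target = series[start + window_size]  # the value the model should predict
        pairs.append((window, target))
    return pairs


# Hypothetical temperature readings from a single sensor.
readings = [20.1, 20.4, 20.9, 21.3, 21.0, 20.7]
pairs = make_windows(readings, window_size=3)

for window, target in pairs:
    print(window, "->", target)
```

With very long sequences the choice of window size becomes a real design decision, because patterns that span more steps than the window cannot be seen by the model.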

12) Extending Sentiment Analysis using AI

The interplay between AI and Sentiment analysis is also a new area. There are already many synergies between AI and Sentiment analysis because many functions of AI apps need sentiment analysis features.

“The common interest areas where Artificial Intelligence (AI) meets sentiment analysis can be viewed from four aspects of the problem and the aspects can be grouped as Object identification, Feature extraction, Orientation classification and Integration. The existing reported solutions or available systems are still far from being perfect or fail to meet the satisfaction level of the end users. The main issue may be that there are many conceptual rules that govern sentiment and there are even more clues (possibly unlimited) that can convey these concepts from realization to verbalization of a human being.” source: SAAIP

Notes: the post The fourth industrial revolution a primer on artificial intelligenc… also offers a good insight on AI domains. Also see #AI application areas – a paper review of AI applications (pdf).

Conclusion

To conclude, AI is a rapidly evolving space. Although AI is more than Deep Learning, advances in Deep Learning drive AI. Automatic feature learning is the key feature of AI. AI needs many detailed and pragmatic strategies which I have not yet covered here. A good AI Designer should be able to suggest more complex strategies like Pre-training or AI Transfer Learning.

AI is not a panacea. AI comes with a cost (skills, development, and architecture) but provides an exponential increase in performance. Hence, AI is ultimately a rich company’s game. But AI is also a ‘winner takes all’ game and hence provides a competitive advantage. The winners in AI will take an exponential view addressing very large scale problems i.e. what is possible with AI which is not possible now?
