Trends to Watch Out For and Prepare Yourself For

OK. Various websites, data science gurus, and AI experts have written a lot about what 2019 holds in store for us. Everywhere you look, there are new fads and concepts for the new year. This article is going to be rather different. We are going to highlight the dark horses: the trends that few people are talking about but that could significantly disrupt the working IT environment (for good or bad, depending on which side of the disruption you are on). To give you a taste of what’s coming, let’s go through the top four trends of 2019 for data science, plus one bonus:

- AutoML

- Interoperability (ONNX)

- Cyber Data Science Crime

- Cloud AI-as-a-Service

- (Bonus) Quantum Computation & Data Science

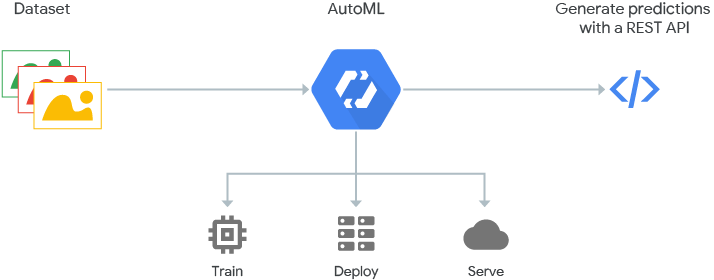

1. AutoML (& AutoKeras)

Google AutoML Architecture

From https://cloud.google.com/automl/

This single innovation is going to change the way machine learning works in the real world. Until recently, deep learning and even advanced machine learning were the exclusive preserve of PhD holders and other research scientists. AutoML has changed that entire domain, especially now that AutoKeras is out.

AutoML automates machine learning. It analyzes your data, uses a technique called Neural Architecture Search (NAS) to try out various model architectures, and automatically hands you the best architecture and hyperparameters it finds for your scenario. Google’s AutoML was priced at a steep $76 USD per hour, but we now have a free, open source competitor: AutoKeras.
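The "try out many candidates and keep the best" loop behind this idea can be sketched in a few lines. What follows is only a toy: a random search over a single hyperparameter (k in a k-nearest-neighbour classifier) on synthetic data. It is not NAS, and it is not how Google's AutoML or AutoKeras are implemented; every function name here (make_data, knn_predict, accuracy, random_search) is made up for illustration.

```python
import random

def make_data(n, rng):
    """Toy 1-D binary classification: label is 1 when x > 0.5, with 10% label noise."""
    data = []
    for _ in range(n):
        x = rng.random()
        y = int(x > 0.5)
        if rng.random() < 0.1:
            y = 1 - y  # flip the label to simulate noise
        data.append((x, y))
    return data

def knn_predict(train, x, k):
    """Majority vote among the k training points nearest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    votes = sum(y for _, y in nearest)
    return int(votes * 2 > k)

def accuracy(train, valid, k):
    """Fraction of validation points classified correctly."""
    hits = sum(knn_predict(train, x, k) == y for x, y in valid)
    return hits / len(valid)

def random_search(train, valid, space, trials, rng):
    """Sample `trials` random configurations and keep the best-scoring one."""
    history = []
    for _ in range(trials):
        config = {name: rng.choice(options) for name, options in space.items()}
        history.append((accuracy(train, valid, config["k"]), config))
    best_score, best_config = max(history, key=lambda item: item[0])
    return best_config, best_score, history

rng = random.Random(0)                      # fixed seed for repeatability
train, valid = make_data(200, rng), make_data(100, rng)
space = {"k": [1, 3, 5, 9, 15, 25]}         # hypothetical search space
best_config, best_score, history = random_search(train, valid, space, trials=10, rng=rng)
print(best_config, round(best_score, 3))
```

Real AutoML systems search far larger spaces (layer types, depths, learning rates) with smarter strategies than random sampling, but the outer loop of proposing a configuration, scoring it on held-out data, and keeping the best one has the same shape.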

The open source killer of AutoML

From https://www.pyimagesearch.com

AutoKeras is a free, open source alternative to AutoML developed by the DATA Lab at Texas A&M University together with the open source community. This project should make deep learning accessible to everyone on the planet who can code even a little. To give you an example, this is the code used to train an arbitrary image classifier with deep learning:

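    import autokeras as ak
    from tensorflow.keras.datasets import mnist

    # Assumption: MNIST stands in here for your own image data
    (x_train, y_train), (x_test, y_test) = mnist.load_data()

    clf = ak.ImageClassifier()     # searches over model architectures
    clf.fit(x_train, y_train)      # runs the search and trains the models
    results = clf.predict(x_test)  # predicted labels for the test images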

From: https://autokeras.com/

Folks, it really doesn’t get simpler than this!

Note: Of course, the entire training and testing process can take a day or more to complete. It will take less if you have high-throughput GPUs, Google’s TPUs (Tensor Processing Units, custom hardware for machine learning computation), or plenty of money to spend on cloud computation resources such as AutoML.

2. Interoperability (ONNX)

For those of you who are new to what interoperability means for neural networks: we now have several deep learning libraries competing with each other for market dominance. The most highly rated ones are:

- TensorFlow

- Caffe

- Theano

- Torch & PyTorch

- Keras

- MXNet

- Chainer

- CNTK

However, converting an artificial neural network written in CNTK (Microsoft Cognitive Toolkit) to Caffe is a laborious task. Why can’t we simply have one single standard so that discoveries in AI can be shared with the public and with the open source community?

To solve this problem, the following standard has been proposed:

Open Neural Network Exchange Format (ONNX)

One Neural Network Standard over them all.

From https://www.softwarelab.it

ONNX is a standard in which a deep learning network is represented as a directed acyclic computation graph that is compatible with (almost) every deep learning framework available today. Watch out for this standard: if proper transparency is enforced and models are shared openly, we could see decades’ worth of research happen in this single year!
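To make the "directed acyclic computation graph" idea concrete, here is a minimal pure-Python sketch. It does not use the ONNX library or its file format; the node layout, the operator table OPS, and the run function are all assumptions invented for illustration. The point is only that once a network is described as plain data (operators plus named inputs and outputs), any framework that implements the operators can execute it.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# A framework-neutral description of y = relu(x * w + b).
# Each node names an operator, its inputs, and its single output.
graph = [
    {"op": "Mul",  "inputs": ["x", "w"],  "output": "xw"},
    {"op": "Add",  "inputs": ["xw", "b"], "output": "z"},
    {"op": "Relu", "inputs": ["z"],       "output": "y"},
]

# Operator implementations that each "framework" would supply itself.
OPS = {
    "Mul": lambda a, b: a * b,
    "Add": lambda a, b: a + b,
    "Relu": lambda a: max(0.0, a),
}

def run(graph, feeds):
    """Evaluate the graph in dependency order, starting from the fed input values."""
    producers = {node["output"]: node for node in graph}
    # For each produced value, list the produced values it depends on.
    deps = {
        out: [name for name in node["inputs"] if name in producers]
        for out, node in producers.items()
    }
    values = dict(feeds)
    # static_order() raises CycleError if the graph is not acyclic.
    for name in TopologicalSorter(deps).static_order():
        node = producers[name]
        args = [values[i] for i in node["inputs"]]
        values[name] = OPS[node["op"]](*args)
    return values

result = run(graph, {"x": 2.0, "w": 3.0, "b": -10.0})
print(result["y"])  # relu(2 * 3 - 10)
```

Because evaluation follows the dependency order rather than the order in which nodes are listed, the same description can be shuffled, serialized, and reloaded elsewhere without changing the result.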

3. Cyber Data Science Crime

There are allegations that the 2016 US presidential election was subjected to a socially engineered manipulation of the democratic process using data science and data mining techniques. The most implicated product was Facebook, on which fake news was allegedly spread by Russian agents and Russian intelligence services, raising the prospect of an external agency, rather than the US people themselves, influencing who the US president would be. And yes, one of the major tools used was data science! So this is not so much a new trend as an existing phenomenon, one that needs to be recognized and dealt with effectively.

While this is a controversial topic, it needs to be addressed. If the world’s most technologically advanced nation can be manipulated by a hostile power in the election of its leader, then how much more easily can nations like India or the UK be manipulated as well?

This has already begun in a small way in India with BJP social media departments putting up pictures of clearly identifiable cities (there was one of Dubai) as cities in Gujarat on WhatsApp. This trend will not change any time soon. There needs to be a way to filter the truth from the lies. The threat comes not from information but from misinformation.

Are you interested in the elections? Then pay attention to what happens on Facebook. What happened in the USA in 2016 could easily happen in India in 2019. The very fabric of democracy could break apart. As data scientists, we need to be aware of every issue in our field. And we owe it to the public, and to ourselves, to be honest and hold ourselves to the highest levels of integrity.

We could do more than thirty blog posts on this topic alone – but we digress.

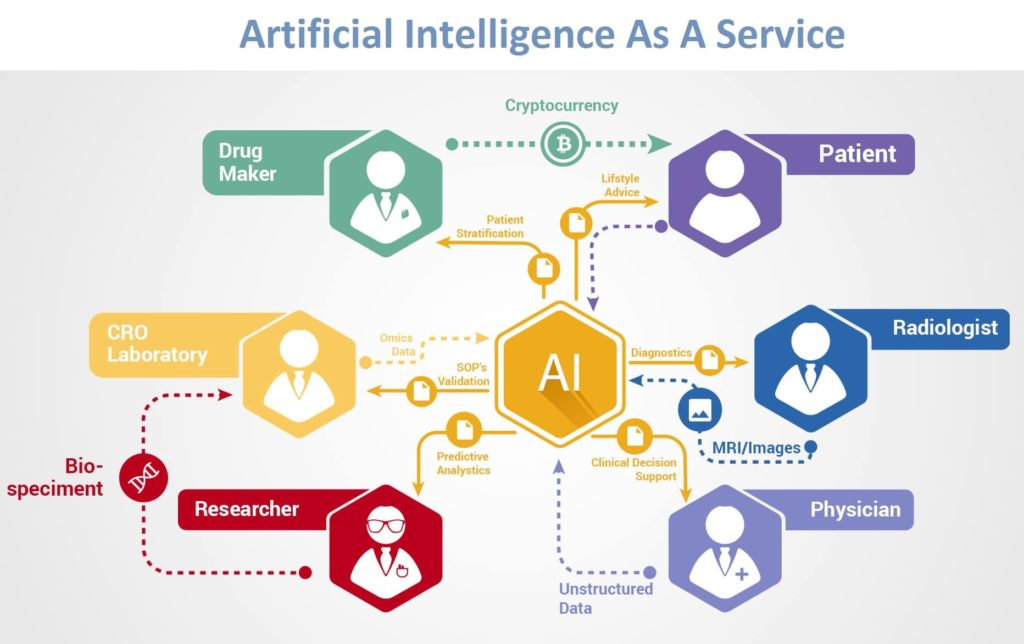

4. Cloud AI-as-a-Service

AI-as-a-Service Overview

From: http://www.digitaljournal.com

To understand cloud AI-as-a-Service, we need to know that maintaining an in-house AI analytics solution is overkill for most companies. It is much easier to outsource the construction, deployment, and maintenance of an AI system to a company that provides it online, at far lower cost than building and updating an in-house version that has to be managed by a separate department with hard-to-find, esoteric skills. So many start-ups have appeared in this area over the last year (over 100) that listing all of them would be difficult. Of course, as usual, all the big names are heavily involved.

This trend has already manifested and will only continue to grow in popularity. Major players in AI-as-a-Service already include, but are not limited to, Google, IBM, Amazon, Nvidia, and Oracle. In the coming year, companies without AI risk falling behind and failing spectacularly. Hence the importance of keeping AI open to the public as cheaply as possible. What will be the end result? Only time will tell.

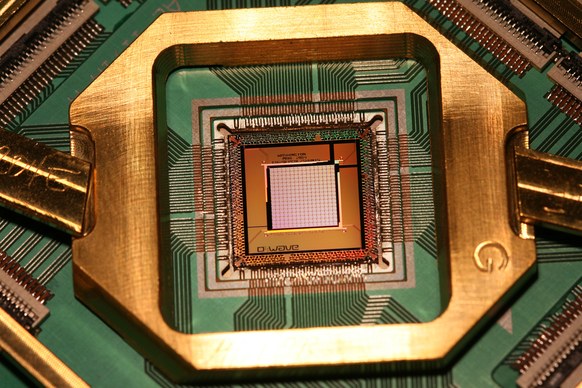

5. Quantum Computing and AI (Bonus topic)

Quantum computing is very much an active research topic right now. By some accounts, the country with the greatest advances is not the US but China. Even the EU has invested over 1 billion euros in its quest to build a feasible quantum computer. A little bit of info about quantum computing, in five crisp points:

- It has the potential to become the greatest quantum leap (pun intended) since the invention of the computer itself.

- However, practical hardware difficulties have so far kept quantum computers confined to laboratory experiments.

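- If a large, error-corrected quantum computer is built, widely used public-key encryption algorithms such as RSA and elliptic-curve cryptography could be broken using Shor’s algorithm. Estimates put the requirement at thousands of reliable logical qubits (quantum bits), well beyond the 100-200 qubits sometimes quoted.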

- The difficulty of keeping individual atoms in isolated quantum states for long enough (the problem of decoherence) keeps current research largely academic.

- Experts say a fully functional quantum computer could be 5-15 years away. When it arrives, it would herald a new era in the history of mankind.
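To make the notion of a qubit in the points above a little more concrete, here is a toy state-vector simulation in plain Python. It is a sketch for intuition only, not how real quantum hardware is programmed, and the helper name apply_hadamard is made up for this example. It puts three qubits into an equal superposition and shows that describing n qubits classically takes 2^n amplitudes.

```python
import math

def apply_hadamard(state, target, n_qubits):
    """Apply a Hadamard gate to qubit `target` of an n-qubit state vector."""
    h = 1 / math.sqrt(2)
    new_state = list(state)
    bit = 1 << target
    for i in range(2 ** n_qubits):
        if (i & bit) == 0:
            # Pair up the two basis states that differ only in the target qubit.
            a, b = state[i], state[i | bit]
            new_state[i] = h * (a + b)
            new_state[i | bit] = h * (a - b)
    return new_state

n_qubits = 3
state = [0.0] * (2 ** n_qubits)
state[0] = 1.0  # start in the basis state |000>

for q in range(n_qubits):
    state = apply_hadamard(state, q, n_qubits)

# Measurement probabilities are the squared magnitudes of the amplitudes.
probabilities = [abs(a) ** 2 for a in state]
print(len(state), probabilities)

# Number of amplitudes a classical simulator would have to track for 100 qubits.
print(2 ** 100)
```

Every one of the eight outcomes ends up equally likely, and the amplitude count doubles with each added qubit, which is why even modest numbers of well-behaved qubits are out of reach for this kind of classical simulation.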

In fact, some have speculated that the greatest example of a quantum computer today is the human brain itself, although this remains an unproven hypothesis. If we develop quantum computing to practical levels, we might also gain the knowledge needed to create a truly intelligent computer.

Cognition in real-world AI that is self-aware: how awesome is that?

D-Wave Quantum Computer processor

(From wikipedia.org)

Conclusion

So there you have it: the five most interesting and important trends that could become common in the year 2019. The jury is still out on the quantum computer (it could work this year or ten years from now), but it’s immensely exciting.