Traffic policewoman in Pyongyang

World-famous Pyongyang policewomen, who are reportedly picked by North Korea’s Supreme Leader himself, have garnered a cult following and even have a website devoted to them, quite a rarity in the Northeast Asian country. The job is seemingly held in high esteem, with only the best being hired.

Traffic in Pyongyang has become visibly heavier, with more trucks, taxis and cars. The once sluggish streets, where the policewomen stood in the middle of usually deserted crossings to direct what few cars came by, now look a lot livelier. Having the right traffic enforcement is fundamental to developing economic activity in Pyongyang.

Similarly, data governance is a fundamental enabler of bottom- and top-line impact from analytics. Explosive data traffic in today’s organisations requires:

- Putting in place traffic rules, the data policies;

- Picking data governance stewards to monitor the increasing traffic, our Pyongyang cops; and

- Most importantly, the direct attention of the Supreme Leader, yourself, the CEO.

Growing Top / Bottom Line at Lower Risk

Data governance refers to the people, processes, and technology that an organisation needs to handle its data properly and consistently. Good data governance helps an organisation manage data as an asset and transform it into meaningful information, resulting in three groups of benefits:

Increasing top-line:

- Increasing consistency and trust in decision making

- Maximizing the income generation potential of data

- Enabling better planning

Increasing bottom-line:

- Establishing process performance baselines to enable improvement efforts

- Minimizing or eliminating re-work

- Optimizing staff effectiveness

Reducing risk:

- Decreasing the risk of regulatory fines

- Improving data security

- Defining and verifying the requirements for data distribution policies

- Designating accountability for information quality

Implementing data governance requires establishing rules and policies, which range from high-level (overall direction and strategy) to detailed (step-by-step processes and procedures).

A Data Governance Policy must at least answer the following five questions:

- What roles and committees are there for implementing data governance?

- How is data stored and maintained, and what constitutes the truth in the organization?

- How to guarantee data is accurate and suitable for analysis? Who owns the data?

- How do users get access to the data they need and no more than that, and how do they use it in modelling and reporting?

- How to guarantee data remains private, secure and compliant with regulations?

Defining Business & IT Governance Roles

At all stages of formulating and implementing governance measures, business and IT personnel should collaborate closely. Business users should state the desired level of data quality and terms based on their business needs; and technical users should have the responsibility of deciding the most appropriate technical methods to that end.

The most critical role to define is the Sponsor, who must be a well-respected and influential C-level executive. Without him or her, any effort will be futile. The Sponsor provides strategic direction and funding for data governance efforts and serves as the advocate for data governance within the organization.

The Sponsor relies on a Data Governance Lead to enforce policies; to propose which data governance projects to spend money on; to coordinate between business and technology groups; to establish success metrics; to monitor and report data quality and governance metrics; and finally to work with business leads and IT resources to prioritize and resolve issues. The Data Governance Lead must have political acumen and know how to manage key influencers.

Additionally, Business Users are the heads of departments that use data. They contribute their departments’ inputs to all governance initiatives. Among them, Power Users are defined as the top few users in terms of influence and data volume.

Data Stewards are attached to a particular department or business unit. They provide business-side inputs from their units when drafting or amending governance requirements and serve as subject matter experts for data-related issues. When it comes to data traffic, Data Stewards are equivalent to our Pyongyang traffic police. Furthermore, a Head Data Steward is appointed with responsibility over the whole organization, not only one department. He or she implements and enforces governance requirements, designs and implements the architecture of data flows, oversees audits, implements follow-up actions and translates business requirements into technical requirements.

Finally, a Head Database Administrator is required to maintain technical processes like checks, formatting, integration or distribution across data systems.

Running Routines up to the C-Suite

Supreme Leader Kim Jong Un is believed to cherry-pick policewomen himself

World-famous Pyongyang policewomen are believed to be cherry-picked by the Supreme Leader himself. Only the best can make it through. Pyongyang traffic is a big deal for Kim Jong Un. Like car traffic, data governance requires the direct attention of the CEO, with a structure of governance routines which go up to the C-Suite.

A cascade of three committees is necessary to monitor data governance decisions and to supervise the implementation of a governance framework.

The highest-level meeting is the Data Governance Council, which reviews and approves general governance measures. The Council meets monthly or every two months and consists of at least the Sponsor, the Data Governance Lead, the Head Data Steward, the Head Database Administrator and the Power Users. The most dynamic Councils are headed by a different member on a rotating basis.

The second management structure is the Data Governance Office, which meets weekly. The Data Governance Office enforces data governance across all business units and IT so that data governance and strategies are common throughout the organization. It drafts general governance measures and amendments, and proposes them to the Data Governance Council for review and approval. It is headed by the Data Governance Lead and consists of the Head Data Steward, the Head Database Administrator, all Data Stewards and at least one Power User representative.

The third level of the structure is the data governance Working Groups, which drive data management projects. Members of each Working Group should be able to make decisions as a team. Each group is usually headed by a Data Steward and consists of other Data Stewards and relevant IT experts with knowledge of data modelling, data analysis and migration.

“Doublethinking” about your Data

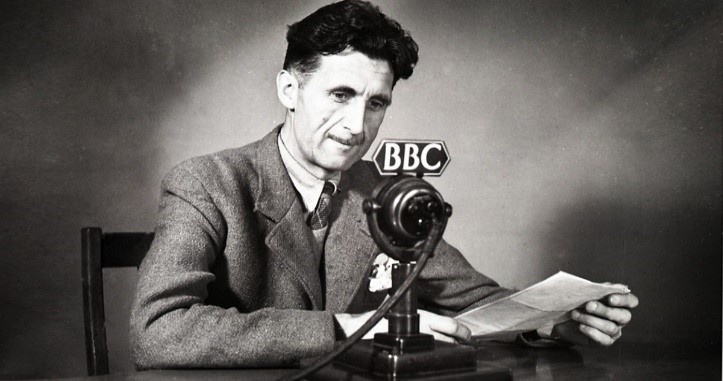

British Novelist George Orwell created the concept of doublethink

British novelist George Orwell created the word doublethink in his dystopian novel Nineteen Eighty-Four, which described a futuristic totalitarian regime in England. Doublethink means the power of holding two contradictory beliefs in one’s mind simultaneously and accepting both of them. The novel was published about the time Kim Jong Un’s grandfather created North Korea, and it really is as if he got hold of an early copy and used it as a blueprint.

As weird as it sounds, should your organization implement doublethink by enabling multiple versions of what constitutes the truth?

One of the most important requirements of data governance is to create an authoritative repository of data, according to which revenue is reported, customers are defined, and products are classified in standard, pre-agreed definitions. This logical repository, which contains one authoritative copy of all crucial data, is called the Single Source of Truth (SSOT) and must use a common language or data dictionary, not one that is specific to a certain team. The SSOT can be distributed across multiple physical systems.

Not having an SSOT can lead to chaos because different teams would not agree on what the revenue is or who the customers are.

Although the highly centralized, control-oriented SSOT approach is effective at normalising enterprise data, it can constrain flexibility, making it harder to adapt data to the different strategies and realities of multiple business units.

This is particularly harmful for businesses with aggressive growth strategies or within particularly deregulated or competitive industries. Different business units might need to transform, label and report data differently. They may have a very different definition of what constitutes a customer, how to segment them or how to calculate margins. Flexible use of data is vital to maximize the benefits we derive from data.

Like Orwell’s doublethink, a more flexible and realistic approach involves Multiple Versions of the Truth (MVOT), which are developed from the SSOT.

In an MVOT environment, different business units or functions have the option of using SSOT data to create distinct, controlled versions of the truth to fit their respective purposes. Creating MVOT may entail filtering, combining, redefining and transforming SSOT data in a traceable way, using it in models and analyses, or combining it with other internal and external data for planning purposes.

When companies lack a robust multi-versioned data architecture, different teams may create and store the data they need in siloed repositories that vary in depth, breadth, and formatting. The process is inefficient and expensive and can result in the proliferation of multiple uncontrolled versions of truth that are not effectively reused.

Handling One Source with Multiple Versions

The SSOT must be defined through metadata specifications. This metadata should be exhaustive, including data dictionaries and protocols for storage and retention. The master metadata should be sufficiently specific and detailed that no discrepancy can arise between different calculations.

The Data Governance Policy should define a clear procedure for creating, amending and approving metadata specifications, as well as detailed criteria that metadata must fulfil to be considered complete.

Regular audits should be carried out at least yearly to ensure that metadata specifications are complete, exhaustive, up to date and adhered to.

Where a business unit or functional department chooses to use MVOT, these versions must adhere to an amended metadata specification, which must be sufficiently clear and unambiguous to allow users to reconstruct the amended data.

MVOT must be clearly recorded and kept separate from the SSOT, to preserve its cohesion and reliability. A clear process must be in place for authorizing amended data to be stored in repositories; and for creating, storing, evaluating, approving and making available all amended metadata specifications assuring that they remain accurate and complete.
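
To make the idea of a traceable, separately stored version more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the names `MetadataSpec` and `derive_version`, the sample customer rows and the 365-day rule are all hypothetical and do not come from any particular governance tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Record = Dict[str, object]


@dataclass(frozen=True)
class MetadataSpec:
    """Hypothetical metadata specification attached to a data set."""
    name: str
    definition: str              # agreed business definition
    derived_from: str = ""       # empty for the SSOT itself
    steps: Tuple[str, ...] = ()  # readable log of transformations applied


def derive_version(
    ssot_rows: List[Record],
    ssot_spec: MetadataSpec,
    name: str,
    definition: str,
    rule: Callable[[Record], bool],
    step_description: str,
) -> Tuple[List[Record], MetadataSpec]:
    """Build a version of the truth by filtering SSOT rows.

    Rows are copied, so the SSOT itself is never modified, and the
    amended specification records where the data came from and how.
    """
    rows = [dict(r) for r in ssot_rows if rule(r)]
    spec = MetadataSpec(
        name=name,
        definition=definition,
        derived_from=ssot_spec.name,
        steps=ssot_spec.steps + (step_description,),
    )
    return rows, spec


# Illustrative SSOT: any account with at least one purchase is a customer.
SSOT_SPEC = MetadataSpec("customers_ssot", "account with at least one purchase")
SSOT_ROWS = [
    {"id": 1, "purchases": 5, "days_since_last_purchase": 10},
    {"id": 2, "purchases": 1, "days_since_last_purchase": 400},
]

# Marketing's version: only customers who purchased in the last 365 days.
marketing_rows, marketing_spec = derive_version(
    SSOT_ROWS,
    SSOT_SPEC,
    name="customers_marketing",
    definition="customer who purchased in the last 365 days",
    rule=lambda r: r["days_since_last_purchase"] <= 365,
    step_description="filter: days_since_last_purchase <= 365",
)
```

Because the amended specification lists its source and every step, another user could in principle rebuild `marketing_rows` from the SSOT, which is the property the policy asks for.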

Assuring Data Quality and Ownership

Data preparation is the process of putting data into the format required for data modelling and reporting. This process might require integrating data from several sources, cleansing it to ensure its correctness and formatting it according to metadata specifications.

All data available to the organization should be divided according to its business meaning into data sets and assigned to a Business Owner from the department which uses each data set most. While the Head Data Steward is responsible for maintaining the overall SSOT, ownership of each data set is held by the Business Owner and cannot be delegated. Each Business Owner will be responsible for ensuring the quality, integrity and recency of his or her data set. For example, the head of a loyalty program must be the Business Owner of all loyalty data.

At all stages, the quality and integrity of data should be rigorously maintained, and data must be kept up to date. Thus, the governance policy should define:

- A methodology and metrics to measure data quality and data recency

- Minimum acceptable levels of quality and recency

- Rules to ensure that data quality is not compromised by any of its users

- A clear process by which users can provide feedback and propose changes to the Business Owner
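
As an illustration of the first two points, the sketch below measures one quality metric (completeness) and one recency metric (age of the stalest record) and compares them with minimum acceptable levels. Everything specific here is an assumption made for the example: the thresholds `MIN_COMPLETENESS` and `MAX_AGE_DAYS`, the function names and the sample loyalty rows. A real policy would define its own metrics and levels.

```python
from datetime import date
from typing import Dict, List

Row = Dict[str, object]

# Hypothetical minimum acceptable levels that a policy might set.
MIN_COMPLETENESS = 0.95  # required share of non-missing values
MAX_AGE_DAYS = 30        # oldest acceptable last-update age


def completeness(rows: List[Row], column: str) -> float:
    """Share of rows whose value in `column` is present (not None or empty)."""
    if not rows:
        return 0.0
    filled = sum(1 for r in rows if r.get(column) not in (None, ""))
    return filled / len(rows)


def max_age_days(rows: List[Row], date_column: str, today: date) -> int:
    """Age in days of the stalest record, based on its last-update date."""
    return max((today - r[date_column]).days for r in rows)


def passes_policy(rows: List[Row], column: str, date_column: str, today: date) -> bool:
    """True only if both the quality and the recency thresholds are met."""
    return (
        completeness(rows, column) >= MIN_COMPLETENESS
        and max_age_days(rows, date_column, today) <= MAX_AGE_DAYS
    )


# Illustrative loyalty data set with one missing e-mail address.
LOYALTY_ROWS = [
    {"member_id": "A1", "email": "a@example.com", "updated": date(2024, 1, 10)},
    {"member_id": "A2", "email": None, "updated": date(2024, 1, 20)},
]
TODAY = date(2024, 1, 31)

email_completeness = completeness(LOYALTY_ROWS, "email")
stalest = max_age_days(LOYALTY_ROWS, "updated", TODAY)
ok = passes_policy(LOYALTY_ROWS, "email", "updated", TODAY)
```

In this example the data set is recent enough but fails on completeness, which is the kind of finding a Business Owner would be expected to act on.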

Regular audits should be carried out at least yearly to ensure that data quality is maintained, that all quality tests are functioning and that rules governing data quality are enforced. They should also ensure that the data preparation processes adhere to the metadata specifications, internal rules, and regulatory requirements.

Granting Access to Data

The broader data access is within an organization, the larger the benefits of using data in decision making will be. Any employee, not just analytics professionals, may need access to data to make decisions. This requires the right governance and access levels.

The Data Governance Policy should ensure that the data storage architecture permits easy data access for authorized personnel. The policy should also define appropriate metrics for measuring difficulty in accessing data and regular audits should be carried out to ensure that difficulty stays acceptable.

Data should be classified into several levels of sensitivity. For example, it is common to separate Personally Identifiable Information from other data.

The Data Governance Policy should define approval processes for internal personnel and third parties to obtain access to both Personally Identifiable and other data. Appropriate approvers, as well as designated deputies, must be identified for each case. It is recommended that third-party access to data be approved by a designated C-level executive or the CEO.

For third parties, access to data should be granted only if they are bound by privacy and security rules at least as strict as those of your organization.
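
The approval rules described above could be encoded as a simple check. The following Python sketch is illustrative only: the two sensitivity levels and the third-party conditions come from the text, while the approver roles in `INTERNAL_APPROVERS`, the fields of `AccessRequest` and the function `is_access_allowed` are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    OTHER = 1  # non-personal data
    PII = 2    # Personally Identifiable Information


@dataclass(frozen=True)
class AccessRequest:
    requester: str
    is_third_party: bool
    sensitivity: Sensitivity
    approved_by: str  # role of the approver, "" if not yet approved
    bound_by_equivalent_rules: bool = True  # only checked for third parties


# Hypothetical approver roles for each internal case.
INTERNAL_APPROVERS = {
    Sensitivity.OTHER: {"Data Steward", "Business Owner"},
    Sensitivity.PII: {"Business Owner"},
}
# Third-party access goes to a designated C-level executive or the CEO.
THIRD_PARTY_APPROVERS = {"Designated C-level", "CEO"}


def is_access_allowed(req: AccessRequest) -> bool:
    """Return True only if the request was approved by a permitted role."""
    if req.is_third_party:
        return (
            req.bound_by_equivalent_rules
            and req.approved_by in THIRD_PARTY_APPROVERS
        )
    return req.approved_by in INTERNAL_APPROVERS[req.sensitivity]


analyst = AccessRequest("analyst", False, Sensitivity.PII, "Data Steward")
vendor = AccessRequest("vendor", True, Sensitivity.OTHER, "CEO")
lax_vendor = AccessRequest("vendor", True, Sensitivity.OTHER, "CEO", False)

decisions = [is_access_allowed(r) for r in (analyst, vendor, lax_vendor)]
```

Keeping the rules in data structures rather than scattered conditions also gives auditors a single place to verify that approvals were applied as the policy states.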

Regular audits should be carried out at least yearly to ensure that the approval processes have been correctly applied and enforced, and that access to data has not been unnecessarily granted or denied.

Anonymizing Personally Identifiable Data

Data anonymization is a type of information sanitization whose intent is privacy protection. It requires either encrypting or removing Personally Identifiable Information from data sets, so that the people whom the data describe remain anonymous. All customer-level data should be anonymized prior to sharing with data users. In cases where authorized personnel require personally identifiable data, this should be extracted, transferred and stored separately, with unauthorized access prevented at all times. Some companies might even prohibit having Personally Identifiable Information in public clouds.

The Data Governance Policy should define the process for tagging data as Personally Identifiable; for anonymizing it; and for measuring whether this anonymization is sufficient.
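
A minimal sketch of what tagging and anonymizing could look like is shown below. It assumes, purely for illustration, that the fields in `PII_FIELDS` have been tagged as Personally Identifiable and that the customer identifier is replaced by a keyed hash (HMAC-SHA256 from the Python standard library) so that records can still be joined. Note that keyed hashing is strictly a form of pseudonymization rather than full anonymization; whether it is sufficient is exactly what the policy's sufficiency measure would have to decide.

```python
import hashlib
import hmac
from typing import Dict, Set

# Hypothetical tagging: fields the policy has marked as Personally Identifiable.
PII_FIELDS: Set[str] = {"name", "email", "phone"}
KEY_FIELD = "customer_id"  # kept as a hashed key so records can still be joined


def anonymize(record: Dict[str, str], secret: bytes) -> Dict[str, str]:
    """Drop tagged PII fields and replace the customer key with a keyed hash."""
    out = {k: v for k, v in record.items() if k not in PII_FIELDS}
    if KEY_FIELD in out:
        digest = hmac.new(secret, out[KEY_FIELD].encode("utf-8"), hashlib.sha256)
        out[KEY_FIELD] = digest.hexdigest()
    return out


# Illustrative record and secret; a real secret would be stored separately
# from the data and restricted to authorized personnel.
SECRET = b"example-secret-kept-outside-the-data-set"
raw = {
    "customer_id": "C-1001",
    "name": "Jane Doe",
    "email": "jane@example.com",
    "monthly_spend": "42.50",
}
safe = anonymize(raw, SECRET)
```

The same input and secret always produce the same hashed key, so analysts can count or join customers without seeing who they are, while the original record stays untouched.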

Audits should ensure that no data is available outside permitted environments or to unauthorized personnel and that all data is sufficiently anonymized.

Data Security, Privacy and Compliance

Data protection must be included from the outset of system design, and not just added afterwards. Some data protection regulations, such as the European GDPR (General Data Protection Regulation) or the Singaporean PDPA (Personal Data Protection Act), define requirements for corporate data governance. Requirements vary from regulation to regulation but are centred on three main areas:

Customers’ rights:

- Consent is needed before personal data is collected, used, or disclosed

- Individuals have the right to request access to their personal data and must be allowed to correct any error

- Individuals have the right to have their personal data erased and to transmit that data to another organization, including a competitor

Obligations of the companies handling data:

- Organisations must inform individuals of the purpose for collecting, using, or disclosing data, which must not be used for anything other than this original purpose

- Organisations must make reasonable efforts to collect accurate and complete personal data

- Reasonable security arrangements must be made to prevent unauthorised access, use, disclosure, copying, modification, and disposal of data

Restrictions to the companies:

- Companies may only keep personal data for a certain period, after which it must be deleted

- Supervisory authorities and affected individuals must be informed of privacy breaches promptly

- Limitations on transferring or accessing data outside national or corporate boundaries may apply

Getting started: prioritizing one area

It may seem optimal to tackle all governance issues across the organization in parallel. However, it is far more effective to focus on a specific department first. Implementing data governance in a targeted way sets a firm foundation for taking it across the enterprise because it helps to avoid lack of follow-through, the most common problem with transformations. Additionally, working initially with a single department makes it easier to take action and ensure accountability.

Finally, it is paramount to pilot and adopt a data governance software tool, which can later be expanded to the whole organization.

Kim Jong Un’s traffic policewomen are focused on Pyongyang only

About the Author

Pedro URIA RECIO is a thought leader in artificial intelligence, data analytics and digital marketing. His career has encompassed building, leading and mentoring diverse high-performing teams, the development of marketing and analytics strategy, commercial leadership with P&L ownership, leadership of transformational programs and management consulting.

Image Credit

Éric Lafforgue, The Economist, BBC, Associated Press
