Summary: This is the second in our multi-part series on Quantum computing. In this article we’ll dive a little deeper into what’s available, what’s coming soon, and some considerations for getting in early.

In the first article in this series, “Quantum Computing and Deep Learning. How Soon? How Fast?” we laid out the case that Quantum computing is commercially available today and that companies are already beginning to use it in operations. We talked a little about who is out in front (D-Wave, IBM) and who is coming soon (Microsoft, Google, University of New South Wales). We also spoke briefly about how it might be applied to deep learning and have an impact on artificial intelligence. In this article we’ll dive a little deeper into what’s available, what’s coming soon, and some considerations for getting in early.

Different Architectures of Quantum Computers

In commercial operation today there are two distinct types of Quantum computers and several entirely different types due within a year or two.

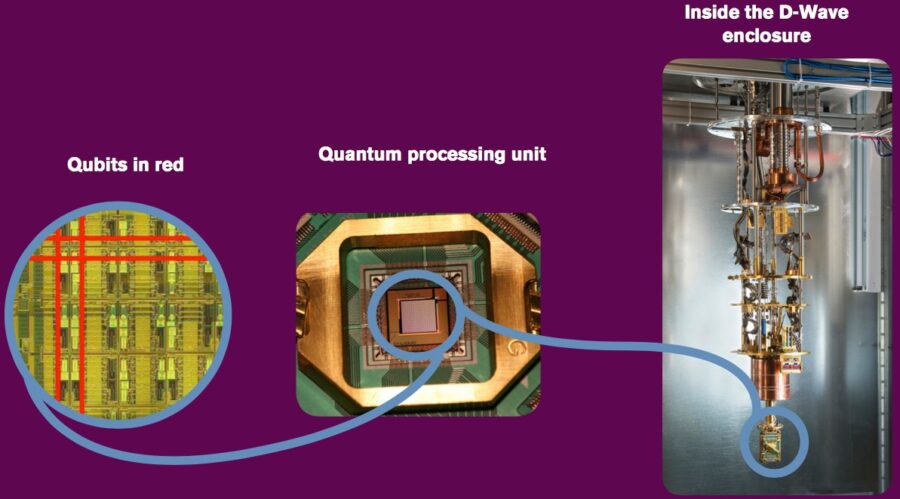

D-Wave, the market leader with a commercially available system since 2010, is pursuing a paradigm based on quantum annealing using a super-cooled magnetic field to perform qubit operations. Recently, they’ve been doubling the size of their machines every year and now stand at 2000 qubits. Originally there was some academic controversy over whether this was actually utilizing a quantum effect, but that dispute has been resolved in D-Wave’s favor. The single drawback, if you can call it that, is that the D-Wave machine is good for only one of the three quantum applications: optimization.

IBM just introduced IBM Q, a commercially available gate model system that’s offered in the cloud by subscription. IBM Q is intended to be a general purpose computer able to solve all the types of problems normal computers can handle, including the two other areas most commonly mentioned for Quantum: simulation and sampling. So for example, you might apply IBM Q to the problem of mimicking protein folding, which D-Wave could not do.

Among those that are close but not quite in the market yet:

University of New South Wales and its commercial Australian partners committed some time back to a straight-up silicon-based approach and plan a commercial release by 2020.

Microsoft and Google have slowed down their releases in pursuit of two models that are regarded as more promising, especially in their resistance to errors, since qubits today are quite sensitive to their environments.

Microsoft is pursuing a general purpose Quantum computer using a yet unproven concept called topological quantum computing. This technique depends on excitations of matter that encode information by tangling around each other like braids. Information stored in these qubits would be much more resistant to outside disturbance than other technologies and would make error correction easier.

Google has been working on Quantum since 2014 based on encoding quantum states as oscillating currents in superconducting loops. This is similar to the IBM approach.

IonQ: Still another model, being pursued by startup IonQ and several major academic labs, is to encode qubits in single ions held by electric and magnetic fields in vacuum traps. This approach is expected to be more flexible and scalable than systems based on superconductivity. IonQ was founded exactly a year ago as a spinoff with academics from the University of Maryland and Duke University.

How Difficult is it to Own and Operate a Quantum Computer?

The data points we have all come from D-Wave where the latest version of their 2000 qubit system will set you back about $15 Million. But based on its successful usage at Lockheed Martin to almost instantly identify flaws in massive complex software programs, they’ve already spun off a subsidiary QRA (qracorp.com) to commercialize exactly this capability and it looks to be available both on-prem as well as SaaS.

It makes sense that Quantum will be widely available via API since it is both costly and difficult to maintain, not to mention that major new versions are being released faster than your iPhone goes out of date.

The D-Wave system operates just a few fractions of a degree above absolute zero. Also remember that since Quantum computers are probabilistic devices, users need to factor in concerns about the number and quality of the qubits, circuit connectivity, and error rates. Like any early tech, these are bound to improve rapidly.

If you do choose to own one, your cooling bill may be high but your power bill won’t be. Quantum computers promise to be 100 to 1,000 times more energy efficient than supercomputers.

How About Programming?

Up to now you needed to have a team of physicists and mathematicians working together to define and program your application. This looked much like the earliest days of general purpose computing in the 1960s and 1970s, when you needed to know your way around the bits and bytes.

As you might expect however, wherever there is user pain, someone will step in to make it easier. D-Wave recently released its open source software tool called Qbsolv so that programmers don’t also need to be physicists. Similarly, last year Scott Pakin of Los Alamos National Laboratory–and one of Qbsolv’s first users–released another free tool called Qmasm, which also eases the burden of writing code for D-Wave machines.
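
To give a feel for what “optimization” means here: Qbsolv takes problems stated as a QUBO (quadratic unconstrained binary optimization), that is, find the string of 0s and 1s that minimizes a weighted sum of single bits and pairs of bits. The sketch below is only an illustration under stated assumptions. It does not call Qbsolv or any D-Wave API, the weights in `Q` are made up, and it uses plain brute force as a classical stand-in, which is only feasible for a handful of variables.

```python
from itertools import product

# Hypothetical 3-variable QUBO stored as {(i, j): weight}.
# Diagonal entries reward turning a bit on; off-diagonal entries
# penalize turning two neighboring bits on together.
Q = {
    (0, 0): -1.0,
    (1, 1): -1.0,
    (2, 2): -1.0,
    (0, 1): 2.0,
    (1, 2): 2.0,
}


def qubo_energy(bits, qubo):
    """Return the sum of weight * x_i * x_j over all QUBO entries."""
    return sum(w * bits[i] * bits[j] for (i, j), w in qubo.items())


def brute_force_minimum(qubo, n_vars):
    """Try every 0/1 assignment and keep the lowest-energy one."""
    return min(
        product((0, 1), repeat=n_vars),
        key=lambda bits: qubo_energy(bits, qubo),
    )


best = brute_force_minimum(Q, 3)
print(best, qubo_energy(best, Q))
```

With these made-up weights the lowest-energy answer switches on the first and third bits and leaves the middle one off. The search space doubles with every added variable, which is why brute force stops being an option for problems of realistic size.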

These programs work only with D-Wave. So far as we know no similar higher level language has been released for IBM Q but I bet it can’t be far behind. Everyone is counting on the open source community to step up to their historical role of making this better for all.

Should You Care Which One You Pick?

On one level the decision criteria are easy. If you have complex tasks worthy of Quantum computing and you want to get in now, then it’s either D-Wave or IBM (or perhaps QRA as a service if debugging massive software is your thing).

For simplicity of programming, with the pretty broad constraint of optimization-only, the choice is D-Wave. Right now you would have to purchase a machine, but I’m betting they will have a cloud service within a year due to market pressure from IBM. If your problem is in simulation or sampling (plus optimization), then IBM is your pick.

As to whether any one of these architectures, current or future, will be superior, only time will tell. It’s equally likely that we’ll end up with several models specialized around different types of tasks. Just remember it only took about three years for Google’s proprietary Bigtable to become open source Hadoop and only about another three to spawn the explosion in artificial intelligence and deep learning.

Is Quantum Calculation Actually Instantaneous?

Since qubits exist simultaneously as both 1 and 0, the familiar trope has been that they collapse on the solution instantaneously. This is kind of true but not quite. There are several ways to think about compute speed. One is by comparison to traditional computing.

Since the field is fairly thin, the only published benchmark we could find is about 18 months old and comes from Google using a D-Wave machine. Researchers at Google released a research report in December 2015 reporting the results of benchmarking their D-Wave device against a conventional computer in a number of tasks. Not surprisingly the D-Wave computer outperformed the traditional desktop by a factor of 10^8, making it one hundred million times faster. “What a D-Wave does in a second would take a conventional computer 10,000 years to do,” said Hartmut Neven, director of engineering at Google, during a news conference to announce the results.

So the reality is that even if it’s not instantaneous, Quantum computers can accomplish complex calculations that simply could not be completed in ‘human time’.

The other test of capability is broadly known as Quantum Supremacy. No, this is not about our Quantum overlords, but rather about when Quantum computers will be shown to be faster than any possible classical computer.

The issue is this: currently, if an algorithm is run on a Quantum computer made up of relatively few qubits, a classical machine can predict its output. The thinking is that when the Quantum machine approaches 50 qubits (the IBM type, not the D-Wave type), even the largest supercomputers won’t be able to keep up. This is as much a psychological threshold as anything, but it will demonstrate that Quantum computers are unbeatable.
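
Why does the line fall around 50 qubits? One rough way to see it, assuming the classical machine predicts the output by brute force and keeps the full state of the gate model machine in memory: an n-qubit state has 2^n complex amplitudes, so every added qubit doubles the memory needed. The sketch below is a back-of-the-envelope estimate, not a benchmark. The 16 bytes per amplitude (two 64-bit floats) and the function names are assumptions for illustration, and cleverer simulation methods can get by with less.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to hold all 2**n complex amplitudes of an n-qubit state."""
    return (2 ** n_qubits) * bytes_per_amplitude


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    size = float(num_bytes)
    for unit in units:
        if size < 1024 or unit == units[-1]:
            return f"{size:.0f} {unit}"
        size /= 1024


for n in (10, 30, 40, 50):
    print(f"{n} qubits: {human_readable(statevector_bytes(n))}")
```

Under these assumptions, 30 qubits fit in the 16 GiB of a well-equipped laptop, 40 qubits need 16 TiB, and 50 qubits need 16 PiB, which is the point where simply storing the state becomes a supercomputer-scale problem.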

Truth is that this is about as useful in the real world as Jack LaLanne pulling 70 passenger-laden boats while swimming with the rope (it’s a real thing, look it up). Like LaLanne’s adventure, it will be a huge marketing event that will draw in new customers. Any number of teams are pursuing this goal.

By the Way

By the way, if you’re going to invest time following these developments, remember that things are changing fast. Take my word for it: don’t bother with any source that’s over 6 months old. On second thought, make that 3 months.

Other articles in this series:

Quantum Computing and Deep Learning. How Soon? How Fast?

Quantum Computing, Deep Learning, and Artificial Intelligence

The Three Way Race to the Future of AI. Quantum vs. Neuromorphic vs. High Performance Computing

About the author: Bill Vorhies is Editorial Director for Data Science Central and has practiced as a data scientist and commercial predictive modeler since 2001. He can be reached at: