Summary: Despite hundreds of projects and thousands of data scientists devoted to bringing AI/ML to healthcare, adoption remains low and slow. A good portion of this problem is our own fault for failing to see the processes being disrupted through the eyes of the physician users. Here we lay out the healthcare opportunity landscape, but for data scientists following classical disruption strategies it may be more of a minefield. Part 2 of 3.

There are plenty of opportunities for good in applying AI/ML to healthcare. But the track record of data scientists bringing these breakthroughs into the doctor’s office is poor. Adoption is low and slow.

We reported in the first article in this three-part series what we’d learned directly from clinicians and hospital CIOs/administrators attending December’s AIMed conference. First, only about 1% of all US hospitals have active data science programs. That’s only about 50 out of the roughly 5,500 hospitals in the US (2018).

Second, while financial considerations play into this, slow adoption is laid squarely at the feet of us data scientists using our standard disruption strategies.

The lessons, which will be spelled out in the third article in this series, are that this is a unique culture with special needs, and one not particularly open to the idea of disruption.

The Big Picture

In the first article we laid out the major segments of healthcare where AI/ML can have an impact and where it is succeeding now and can succeed in the near term. Here we’ll offer more detail on these segments. We organize these first around who pays, and then on the patient’s experience of the medical process.

- Drug Discovery and Innovation

Of all the AI/ML opportunities in healthcare, this one is actually furthest along. The primary reason is that big pharma pays, and big pharma is funded by capital markets, not by the payer/hospital/clinician/patient financial chain.

However, the payoff from these innovations may also be furthest in the future because of the risk of innovative research and the extreme costs and long approval times for new drugs.

While the press often covers advances in genetics and genomics as precursors to AI/ML-assisted medical breakthroughs, closer at hand are the areas of personalized medicine and precision medicine.

The difference is evident in the names and includes using the patient’s own modified cells to combat disease (personalized) as well as creating drug combinations designed for the unique physiology and condition of the patient (precision).

- The Business of Healthcare

The operational world of the clinician may be unique but at a business level hospitals and healthcare organizations share some marked similarities with the commercial world.

First among these is that there are many repetitive tasks that can be automated with tools adapted from the commercial world, typically through robotic process automation (RPA) and NLP.

Examples of some of the most obvious targets include:

- Determining patient insurance eligibility (NLP plus search RPA)

- Creating accurate payer billing (NLP plus search to increase accuracy and reduce under- and over-billing based on complex medical codes and electronic health records (EHRs)).

- Demand forecasting based on classic time series forecasting. This well-used commercial application is readily adapted to common problems including:

  - Staffing and scheduling

  - Maximizing operating room (OR) utilization

  - Reducing infusion center wait time and increasing patient throughput

  - Streamlining emergency department to inpatient transfers

  - Reducing patient discharge delays
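As a minimal sketch of the classic time series approach mentioned above, the following Python fragment forecasts daily patient arrivals with a seasonal-naive baseline (each day's forecast is the average of the same weekday over the last two weeks) and converts the forecast into a staffing level. The arrival counts, the `patients_per_nurse` ratio, and the function names are all hypothetical and chosen only for illustration; a real deployment would more likely use a full seasonal model fitted to far more history.

```python
import math
from statistics import mean


def seasonal_naive_forecast(history, season_length=7, horizon=7, n_seasons=2):
    """Forecast each step as the mean of the same seasonal position
    over the last `n_seasons` complete cycles."""
    if len(history) < season_length * n_seasons:
        raise ValueError("need at least n_seasons full cycles of history")
    forecast = []
    for step in range(horizon):
        offset = step % season_length
        # Same position within the cycle, one cycle back, two cycles back, ...
        past = [history[len(history) - season_length * k + offset]
                for k in range(1, n_seasons + 1)]
        forecast.append(mean(past))
    return forecast


def nurses_needed(expected_patients, patients_per_nurse=4):
    """Round up so the assumed patient-to-nurse ratio is never exceeded."""
    return math.ceil(expected_patients / patients_per_nurse)


# Hypothetical daily arrivals, Monday through Sunday, for two weeks.
daily_arrivals = [40, 36, 34, 35, 38, 22, 20,
                  44, 38, 36, 33, 42, 26, 18]

forecast = seasonal_naive_forecast(daily_arrivals)
staffing = [nurses_needed(f) for f in forecast]
print(forecast)
print(staffing)
```

The sketch assumes the history ends on the last day of a weekly cycle, so the first forecast value lines up with the following Monday. The same pattern applies to OR utilization or discharge volumes by swapping in the relevant series.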

From here we move directly into the world of healthcare as experienced by the patient. These are intended to be more or less in chronological order.

- Patient Intake and Referral – Before or at the Time the Patient First Sees the Doctor.

Determining whether and when a patient needs to see a doctor is a major concern in the US and also in single-payer countries where access to healthcare is intentionally throttled and where delays are long.

The initial consult in which the patient describes their ailment is readily amenable to both teleconferencing physicians and sophisticated virtual physician assistants (chatbots).

Chatbot technology is now well advanced and the public has shown a willingness to utilize these virtual assistants in a broad variety of settings. The added benefit is communication through channels with which the patient is most comfortable (mobile, web, text, telephone).

Thanks to commercial applications, many users are accustomed to this means of scheduling appointments and follow-ups, billing, or processing special requests.

Also, the initial intake interview between patient and physician, which forms the core of the EHR, is time consuming and in many instances can be automated thanks to NLP and chatbot technology.

Assuming the human-defined or AI-defined knowledge and decision tree behind the virtual assistant is accurate (and that’s a big if), these can be extremely useful in reducing hospital administrative costs, prioritizing in-person physician consults, and even diverting patients from emergency care to less expensive and more readily available urgent care.

- Clinical Applications – What Happens Between Clinician and Patient. AKA the AI/ML Augmented Physician.

If you want AI/ML to succeed in improving healthcare it needs to get into the space between the doctor and the patient. This is far and away the most difficult part of the healthcare process to change. This area also represents at least 80% of the potential for improvement in healthcare and the area showing least penetration and adoption to date.

4.1 Automated / Semi-automated interpretation of medical images.

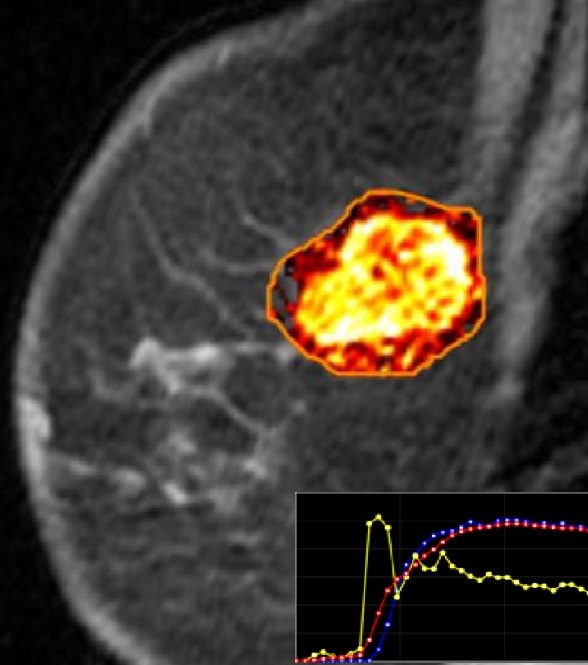

The greatest enthusiasm and hype, and reportedly about 75% of prospective AI/ML healthcare applications, are currently focused on the automated interpretation of medical images using deep learning convolutional neural network (CNN) techniques.

For example, roughly 55,000 people attended the 2018 Radiological Society of North America (RSNA) conference, making it the largest conference of its kind, compared with the 450 who attended the AIMed conference in December.

The images include radiological images, CT scans, and even the wet-lab biopsy slides of tissue, all of which are currently evaluated by eye by highly trained clinicians who are in short supply, and not infrequently are awakened in the middle of the night to consult on an urgent image.

The press is overflowing with articles touting the success of each system in exceeding the accuracy and greatly reducing the turnaround time compared to human clinicians.

Despite these claims, hospitals and clinicians have been slow to adopt for reasons we’ll discuss in part three. From the clinician’s point of view, this is simply not as good as it looks.

4.2 Enabling more accurate identification of disease subtypes – precision medicine.

Treatment is only as good as the diagnosis, and much of diagnosis still relies on symptom-based and organ-based descriptions dating from the 17th century.

Classical clustering-based ML techniques applied to CNN-interpreted medical images are rapidly augmenting diagnostic capabilities in many subareas of medicine such as epidemiology.

4.3 AI/ML driven triage and prevention

This uses classical ML modeling techniques, sometimes aided by new AI techniques, to find signals in existing patient data that separate good outcomes from bad.

You can think of these models in three main categories:

4.3.1 Models to identify and develop protocols that result in better outcomes.

Healthcare is a conservative profession that properly follows known and agreed approaches to helping patients. But there are instances of innovation which ultimately prove better and should be adopted. Finding these improvements and to which subgroups of patients they might apply is a classical application of modeling to find a signal in the patient data.

4.3.2 Models focusing on triage and prevention of bad outcomes.

Some percentage of medical procedures, especially surgeries, do not end as expected. Models are being developed to identify which patients are most likely to suffer complications or even mortality following surgery. Others focus on estimating the likelihood of crisis events like cardiac arrest months or even years in the future, which can influence patients to take steps toward their own preventive care.

4.3.3 Models focusing on anomalies and types of preventable harm.

Medication errors are the cause of considerable preventable patient harm and added healthcare cost. Trip-and-fall accidents are also a significant source of additional harm. They occur most often to patients in hospital during recovery who simply get out of bed and find themselves dizzy or too weak to stand.

Both of these are examples of error types that can be forecast and subsequently controlled by modeling the subgroups of patients most likely to be affected.

In-room video and audio monitoring using CNN and RNN deep learning techniques is also being used to create alarms and alerts for medical staff.
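The idea of finding the subgroups most likely to be affected can be illustrated with a deliberately crude stand-in for the predictive models described above: compute the observed event rate per subgroup and flag any subgroup above a threshold. Everything in this Python sketch is an assumption made for illustration, including the synthetic records, the subgroup definitions (age band and sedation status), the 0.5 threshold, and the function names. A real model would use many more features and a properly validated classifier.

```python
from collections import defaultdict


def subgroup_event_rates(records):
    """Map each subgroup key to (event_count, patient_count, event_rate)."""
    counts = defaultdict(lambda: [0, 0])  # subgroup -> [events, patients]
    for subgroup, had_event in records:
        counts[subgroup][0] += int(had_event)
        counts[subgroup][1] += 1
    return {g: (e, n, e / n) for g, (e, n) in counts.items()}


def flag_high_risk(rates, threshold, min_patients=3):
    """Return subgroups with enough patients whose event rate meets the threshold."""
    return sorted(g for g, (_, n, rate) in rates.items()
                  if n >= min_patients and rate >= threshold)


# Synthetic records: ((age_band, sedation_status), patient_fell).
records = (
    [(("75+", "sedated"), fell) for fell in (True, True, False, True)]
    + [(("75+", "not sedated"), fell) for fell in (False, True, False, False)]
    + [(("under 75", "sedated"), fell) for fell in (False, True, False, False)]
    + [(("under 75", "not sedated"), fell) for fell in (False, False, False, False)]
)

rates = subgroup_event_rates(records)
high_risk = flag_high_risk(rates, threshold=0.5)
print(high_risk)
```

In this synthetic data only the sedated 75+ subgroup crosses the threshold, which is the kind of signal that could trigger extra precautions such as bed alarms or assisted mobility.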

- The Profit Motive and Shared Savings

What is equally interesting about these outcome models is that they are used both to benefit the patient and to benefit the hospital financially.

Obviously, avoiding the additional costs associated with bad outcomes or accidents is a direct driver of reduced cost. What is less obvious is that the change in reimbursement from fee-for-service to fee-for-outcome is an even greater driver.

To greatly oversimplify with an example: if patient John is diagnosed with cardiac disease of a certain severity, then payers will reimburse a fixed amount for John and for all patients with a similar condition.

If the hospital can identify subsets of John’s group of similar patients that will have better outcomes from less expensive procedures, then it essentially splits the savings with the payer.

And it’s not always figuring out how to provide lesser or more efficient services for the same outcome. It may also be that some patients like John will have better outcomes if for example they have more timely follow-ups. This may even involve the hospital paying for John’s Uber ride to his appointment.

Another direct extension of this is remote patient monitoring, typically through wearables collecting IoT signals or even video processed with CNN to identify alerts to the patient or their clinicians.

The involvement of the doctor in both the upstream and downstream monitoring of patient well-being is driven by the shared savings financial model and has perhaps the greatest potential to move the overall system from sick-care to real health-care.
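To make the shared-savings arithmetic in the John example concrete, here is a small Python sketch. It assumes a very simplified model: the savings are the cost difference between the standard and the less expensive pathway, less any support cost the hospital takes on (such as a ride to a follow-up appointment), and that amount is divided by a fixed `hospital_share`. The dollar figures, the 50/50 split, and the function name are hypothetical; actual shared-savings contracts are considerably more involved.

```python
def shared_savings(standard_cost, alternative_cost, support_cost=0.0, hospital_share=0.5):
    """Return (net_savings, hospital_gain, payer_gain) for one patient.

    net_savings is what remains after paying for the cheaper pathway
    plus any extra support the hospital funds to protect the outcome.
    """
    if not 0.0 <= hospital_share <= 1.0:
        raise ValueError("hospital_share must be between 0 and 1")
    net_savings = standard_cost - alternative_cost - support_cost
    hospital_gain = net_savings * hospital_share
    payer_gain = net_savings - hospital_gain
    return net_savings, hospital_gain, payer_gain


# Hypothetical figures for a patient like John.
net, hospital, payer = shared_savings(
    standard_cost=20_000,     # assumed cost of the usual procedure
    alternative_cost=14_000,  # assumed cost of the less expensive procedure
    support_cost=50,          # assumed cost of a ride to the follow-up
)
print(net, hospital, payer)
```

Even with the ride included, the hospital comes out ahead in this toy example, which is why small investments in follow-up can make financial sense under this model.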

- Creating Accurate Data – the Electronic Health Record (EHR)

The final step in our big picture is the importance of creating accurate data through the EHR. It goes without saying that without sufficient quantities of data, the preceding applications of AI/ML in healthcare would simply not be possible.

But wait. It turns out that the introduction of the EHR over the last decade is both a blessing and a curse. Clinicians widely regard the EHR and the requirement for extensive documentation to be one of the worst things that has ever happened to their work life.

It’s now a matter of common agreement that thanks to the EHR physicians suffer two hours of administrative load for each hour of patient-facing time. Not to mention the intrusion of the keyboard between patient and doctor in the exam room.

The data science community has developed solutions based on NLP dictation, and even elaborate video/audio recording headsets intended to be worn by surgeons during operations to document procedures. Penetration remains low, and this pain point in the physician-patient interaction remains high.

A separate issue is extracting consistent and clean data from EHRs on which analysis and modeling can be conducted. Interoperability of this data between organizations, or even between similar specialties or machines in separate locations, remains a hurdle to getting sufficient data.

In our next article, we’ll offer more specifics on how data scientists need to better understand their clinician users, with some examples of why this gap is slowing adoption.

Other Articles in This Series

Seeing the AI/ML Future in Healthcare Through the Eyes of Physician…

Doctors are from Venus, Data Scientists from Mars – or Why AI/ML is Moving so Slowly in Healthcare

Other articles by Bill Vorhies.

About the author: Bill is Editorial Director for Data Science Central. Bill is also President & Chief Data Scientist at Data-Magnum and has practiced as a data scientist since 2001. He can be reached at: