Okay, I’m as mad as hell, and I’m not going to take this anymore! If data scientists are the modern-day alchemists who turn data into gold, then why are so many companies using leeches to monetize their data?

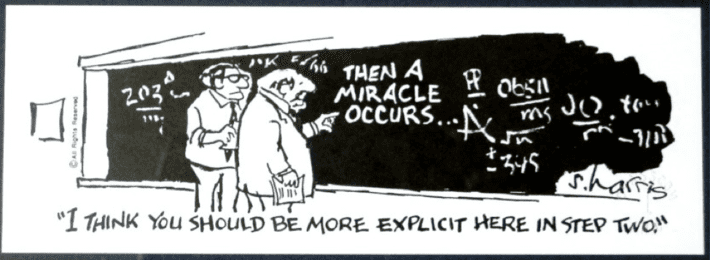

When I talk to companies about the concept of a “Chief Data Monetization Officer” role, many of them look at me like I have lobsters crawling out of my ears. “Sorry, we don’t have that role here.” Of course you don’t have that role, and that’s why you SUCK at monetizing data. That’s why you have no idea how to leverage data and analytics to uncover and codify the customer, product, and operational insights that can optimize key business and operational processes, mitigate regulatory and compliance risks, create net-new revenue streams and create a compelling, differentiated customer experience. What, you expect that just having data is sufficient? You expect that if you just have the data, then some sort of “miracle” will occur to monetize that data?

Before I give away the secret to solving the data monetization problem, I think we first need to understand the scope of the problem.

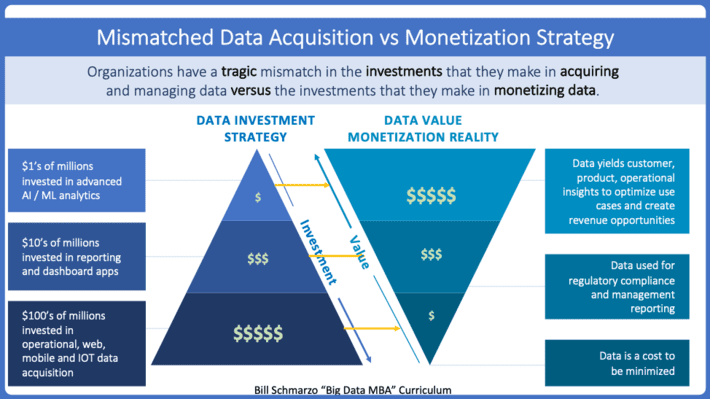

Tragic Mismatch Between Data Acquisition and Data Monetization Strategies

Organizations spend hundreds of millions of dollars acquiring data as they deploy operational systems such as ERP, CRM, SCM, SFA, BFA, eCommerce, social media, mobile and now IoT. Then they spend even more outrageous sums of money to store, manage, back up, govern and maintain all of that data for regulatory, compliance and management reporting purposes. No wonder CIOs have an almost singular mandate to reduce those data management costs (hello, cloud). Data is a cost to be minimized when the only “value” one gets from that data is from reporting on what happened (see Figure 1).

Figure 1: “Digital Strategy Part I: Creating a Data Strategy that Delivers Value”

As the Harvard Business Review article “Companies Love Big Data But Lack the Strategy To Use It Effectively” stated:

“The problem is that, in many cases, big data is not used well. Companies are better at collecting data – about their customers, about their products, about competitors – than analyzing that data and designing strategy around it.”

Bottom line: while companies are great at collecting data, they suck at monetizing it. And that’s where the Digital Transformation Value Creation Mapping comes into play.

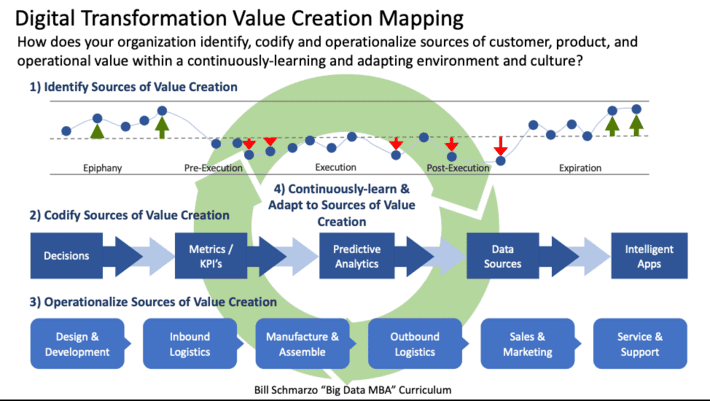

Mastering the Digital Transformation Value Creation Mapping

The Digital Transformation Value Creation Mapping lays out a roadmap to help organizations identify, codify, and operationalize the sources of customer, product and operational value within a continuously learning and adapting culture. Let’s deep dive on each of the 4 steps of the Digital Transformation Value Creation Mapping so that any organization can realize its data monetization dreams…without leeches (see Figure 2).

Figure 2: Digital Transformation Value Creation Mapping

Step 1: Identify the Sources of Value Creation. This is a customer-centric exercise (think Customer Journey Maps and Service Design templates) to identify and value the sources of, and impediments to, customer value creation.

- Identify the targeted business initiative and understand its financial ramifications

- Identify the business stakeholders who either impact or are impacted by the targeted business initiative

- Interview the business stakeholders to identify the decisions that they make in support of the business initiative and their current impediments to making those decisions

- Document the customer’s sources of, and impediments to, value creation via Customer Journey Maps and Service Design templates

- Across the different stakeholder interviews, cluster the decisions into common subject areas or use cases

- Conduct an Envisioning workshop with all stakeholders to validate, value and prioritize the use cases while building organizational alignment for the top priority use case(s)

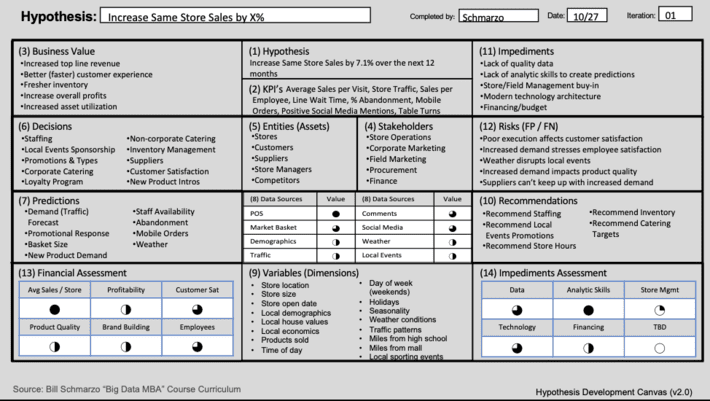

- Create a Hypothesis Development Canvas for the top priority use cases that details the metrics and KPIs against which use case progress and success will be measured, the business entities/assets around which analytics will be built, the key decisions and predictions required to address the use case, the data and instrumentation requirements, and the costs associated with False Positives and False Negatives (to reduce unintended consequences from the AI models)

Figure 3: Hypothesis Development Canvas
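To make the False Positive / False Negative line of the canvas concrete, here is a minimal sketch of how those two costs can drive a decision threshold. It is an illustration under stated assumptions, not part of the canvas itself: the function names (`expected_cost`, `best_threshold`), the scores, the labels and the dollar costs are all made up for the example.

```python
from typing import Sequence

def expected_cost(scores: Sequence[float], labels: Sequence[int],
                  threshold: float, cost_fp: float, cost_fn: float) -> float:
    """Total cost of acting on every case whose score is >= threshold."""
    cost = 0.0
    for score, label in zip(scores, labels):
        acted = score >= threshold
        if acted and label == 0:        # acted, but should not have: False Positive
            cost += cost_fp
        elif not acted and label == 1:  # did not act, but should have: False Negative
            cost += cost_fn
    return cost

def best_threshold(scores: Sequence[float], labels: Sequence[int],
                   cost_fp: float, cost_fn: float) -> float:
    """Pick the candidate threshold with the lowest total cost."""
    # Candidate thresholds are the observed scores; infinity means "never act".
    candidates = sorted(set(scores)) + [float("inf")]
    return min(candidates,
               key=lambda t: (expected_cost(scores, labels, t, cost_fp, cost_fn), t))

# Hypothetical model scores and actual outcomes (1 = the event really happened).
scores = [0.1, 0.4, 0.35, 0.8]
labels = [0, 0, 1, 1]

# A missed event costs 10x a false alarm, so the threshold drops to catch more.
print(best_threshold(scores, labels, cost_fp=1, cost_fn=10))   # -> 0.35
# A false alarm costs 10x a missed event, so the threshold rises.
print(best_threshold(scores, labels, cost_fp=10, cost_fn=1))   # -> 0.8
```

Same model, same data, different costs, different decision. That is why the costs belong on the canvas before any model gets built.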

Step 2: Codify Sources of Value Creation. This is the data science, data engineering and advanced analytics effort to put into math (codify) the customer, product and operational requirements defined in Step 1.

- Establish the advanced analytics environment (using open source tools such as Jupyter Notebooks, the TensorFlow AI/ML framework, MLflow for ML model management, Seldon Core for deploying ML models, the Ray distributed ML model execution framework, Python, etc.)

- Ensure access to the required internal and external data sources (ideally via a data lake)

- Use agile sprints and rapid prototyping to prototype and co-create with customers the reusable analytic modules that will drive the optimization of the targeted use case(s)

- Establish Asset Model architecture and technology for the creation of analytic profiles on the key business entities around which the analytics will be applied

- Use AI/ML to create a library of reusable Analytic Modules that will accelerate time-to-value and de-risk future value engineering engagements
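One way to picture the relationship between reusable Analytic Modules and analytic profiles is the sketch below. It is only a toy under assumptions: `AnalyticProfile`, `MODULE_LIBRARY`, `build_profile` and the two scoring functions are hypothetical names invented here, and the "models" are simple arithmetic stand-ins for what would really be trained ML models.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class AnalyticProfile:
    """Scores and propensities kept for one business entity (customer, device, ...)."""
    entity_id: str
    scores: Dict[str, float] = field(default_factory=dict)

# An analytic module takes an entity's raw events and returns one score.
AnalyticModule = Callable[[List[dict]], float]

def churn_propensity(events: List[dict]) -> float:
    """Hypothetical stand-in: share of the entity's events that are complaints."""
    if not events:
        return 0.0
    complaints = sum(1 for e in events if e.get("type") == "complaint")
    return complaints / len(events)

def average_order_value(events: List[dict]) -> float:
    """Hypothetical stand-in: mean amount across the entity's order events."""
    amounts = [e["amount"] for e in events if e.get("type") == "order"]
    return sum(amounts) / len(amounts) if amounts else 0.0

# The reusable library: every use case draws on the same named modules.
MODULE_LIBRARY: Dict[str, AnalyticModule] = {
    "churn_propensity": churn_propensity,
    "average_order_value": average_order_value,
}

def build_profile(entity_id: str, events: List[dict],
                  modules: Dict[str, AnalyticModule] = MODULE_LIBRARY) -> AnalyticProfile:
    """Run each module against the entity's events and store the results."""
    profile = AnalyticProfile(entity_id)
    for name, module in modules.items():
        profile.scores[name] = module(events)
    return profile

events = [
    {"type": "order", "amount": 40.0},
    {"type": "complaint"},
    {"type": "order", "amount": 60.0},
    {"type": "complaint"},
]
profile = build_profile("customer-001", events)
print(profile.scores)
```

The design point is the re-use: a new use case adds a module to the library once, and every profile picks up the new score without anyone rebuilding the plumbing.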

Step 3: Operationalize Sources of Value Creation. This step is the heart of the data monetization process – integrating the analytic models and analytic results into the operational systems in order to optimize the organization’s business and operational decisions.

- Integrate the analytic modules and a model serving environment into the organization’s operating environment

- Provide APIs that can deliver the analytic outcomes (propensity scores, recommendations) to the necessary operational systems in order to optimize business and operational decisions

- Instrument the operational systems to track the results from the decisions in order to determine the effectiveness of the decisions (AI model decision effectiveness)

- Implement a model management environment to continuously monitor and adjust for model drift
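Monitoring for model drift can start very simply. The sketch below uses the Population Stability Index (PSI), which is one common drift measure and my choice for this illustration rather than anything the mapping prescribes. It compares the distribution of a score at training time against what the operational systems see today. The function name `psi`, the bin count and the sample data are assumptions.

```python
import math
from typing import List, Sequence

def psi(expected: Sequence[float], actual: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline and a current sample."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the baseline range; fall back to 1.0 if all values match.
    width = (hi - lo) / bins or 1.0

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the end bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical score samples: training time versus this week's production traffic.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

print(psi(baseline, baseline))  # identical distributions
print(psi(baseline, shifted))   # production scores have moved upward
```

A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but the alert level is a business call, and it should be set with the False Positive and False Negative costs in mind.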

Step 4: Continuously Learn and Adapt to Sources of Value Creation. This step provides the feedback loop that lets the analytic modules and asset models continuously learn and adapt (the heart of creating autonomous assets that continuously learn and adapt with minimal human intervention).

- Leverage AI / Reinforcement Learning capabilities to continuously monitor and adjust AI model performance based upon the actual versus predicted outcomes

- Implement a Data Monetization Governance Process that drives (enforces) data and analytics re-use and the sharing and codification of learnings

- Leverage Explainable AI (XAI) techniques to understand the AI model changes and their potential impact on privacy and compliance laws and regulations

- Revisit the costs of the False Positives and False Negatives that drive the AI models

- Create a culture of continuous learning where the organization learns and adapts with every front-line customer engagement or operational interaction
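What does "learning with every interaction" look like in code? Here is a minimal sketch using an epsilon-greedy bandit, about the simplest form of reinforcement learning there is. It is a teaching toy under assumptions, not a production design: the class name `EpsilonGreedy`, the offer names and the conversion rates are all invented for the example.

```python
import random
from typing import Dict, Iterable

class EpsilonGreedy:
    """Chooses among actions and updates its estimates from observed outcomes."""

    def __init__(self, actions: Iterable[str], epsilon: float = 0.1, seed: int = 0):
        self.actions = list(actions)
        self.epsilon = epsilon            # share of decisions spent exploring
        self.rng = random.Random(seed)    # seeded so runs are repeatable
        self.counts: Dict[str, int] = {a: 0 for a in self.actions}
        self.values: Dict[str, float] = {a: 0.0 for a in self.actions}

    def choose(self) -> str:
        """Explore at random with probability epsilon, else pick the best estimate."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.values[a])

    def learn(self, action: str, reward: float) -> None:
        """Move the estimate toward the actual outcome (incremental mean)."""
        self.counts[action] += 1
        n = self.counts[action]
        self.values[action] += (reward - self.values[action]) / n

# Hypothetical true conversion rates, unknown to the policy.
true_rates = {"offer_a": 0.2, "offer_b": 0.6}
world = random.Random(42)

policy = EpsilonGreedy(true_rates, epsilon=0.1)
for _ in range(2000):
    offer = policy.choose()                                         # the decision
    converted = 1.0 if world.random() < true_rates[offer] else 0.0  # the actual outcome
    policy.learn(offer, converted)                                  # the feedback loop

print(policy.values)  # estimated conversion rate per offer
print(policy.counts)  # how often each offer was made
```

Every customer engagement is both a decision and a training example. The gap between the predicted and the actual outcome is what moves the estimate, which is the feedback loop this step describes, just in miniature.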

Data Monetization Summary

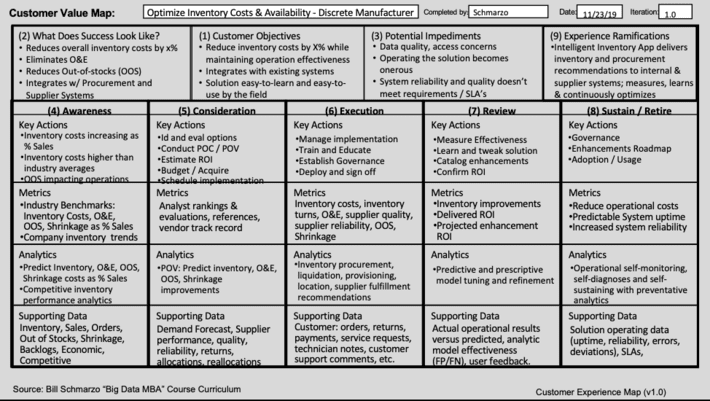

Data Scientists are the modern-day alchemists – they know how to turn data into gold. And the Digital Transformation Value Creation Mapping gives you that roadmap for turning data into gold. Heck, here is a Value Creation cheat sheet to get you started!

Figure 4: Value Creation Mapping Template

So, what are you waiting for…bigger leeches?

Extra Credit Reading. Digital Business Transformation 3-part series:

- It’s Not Digital Transformation; It’s Digital “Business” Transformation! – Part I https://www.linkedin.com/pulse/its-digital-transformation-business-…

- It’s Not Digital Transformation; It’s Digital “Business” Transformation – Part II https://www.linkedin.com/pulse/its-digital-transformation-business-…

- It’s Not Digital Transformation; It’s Digital “Business” Transformation – Part III https://www.linkedin.com/pulse/its-digital-transformation-business-…