Welcome to the second installment of our ModelOps blog series, where we dive deep into the next step in the ModelOps pipeline: Model Training. During Model Training, we feed large volumes of data to our model so it can learn to perform a certain task very well. This blog follows the first post in our series, where we cover everything you need to know about Data Acquisition and Preparation and discuss how foundational it is for successful Artificial Intelligence (AI) investments. If you missed it, check it out as a great lead-in to this post.

Training a model to learn how to execute a task is just like any other action that requires learning. Training a dog to sit requires countless hours of practice and repetition until the dog obeys consistently. Training to run a marathon requires months of long-distance running. Learning how to ride a bike takes time with gradual improvement until it clicks. In each of these repetitive-learning scenarios, we invest a nontrivial amount of time and resources to master a given task. As you develop your model training process, it is important to consider the time, resources, and corresponding costs of each.

Three Categories of Model Training Considerations

1. Model Approach Considerations

- What is the objective for this model and how do we measure success?

- Is our objective comparable to an existing state-of-the-art (SoA) solution on the market, or is it completely novel?

- How will our data (structured or unstructured) impact the modeling approach we take?

- Do we need to build a solution entirely from scratch or can we use open source architectures as a starting point?

2. Tools & Frameworks Considerations

- Which machine learning framework and programming language are we going to use?

- Are we going to build custom training code, use a tool that can help us, or use a combination of both?

3. Experimental Considerations

- How long will it take to train our model and what hardware resources do we need? What is the overall investment to successfully complete this process?

- Does our experiment require hyperparameter optimization?

- How are we going to monitor the training experiments and implement early stopping if needed?
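To make the monitoring and early-stopping question concrete, here is a minimal sketch in plain Python. It assumes you record one validation loss per epoch and want to stop once that loss has failed to improve for a set number of epochs. The names `EarlyStopping`, `patience`, `min_delta`, and `epochs_run` are hypothetical and chosen for illustration; most ML frameworks ship their own callbacks for this, which you would likely use instead.

```python
from typing import Iterable, Optional


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs.

    Hypothetical helper for illustration; not tied to any framework.
    """

    def __init__(self, patience: int = 3, min_delta: float = 0.0) -> None:
        self.patience = patience      # epochs to wait without improvement
        self.min_delta = min_delta    # smallest decrease that counts as improvement
        self.best_loss: Optional[float] = None
        self.stale_epochs = 0

    def should_stop(self, val_loss: float) -> bool:
        """Record this epoch's validation loss and report whether to stop."""
        if self.best_loss is None or val_loss < self.best_loss - self.min_delta:
            # New best loss: remember it and reset the counter.
            self.best_loss = val_loss
            self.stale_epochs = 0
        else:
            self.stale_epochs += 1
        return self.stale_epochs >= self.patience


def epochs_run(val_losses: Iterable[float], patience: int = 3) -> int:
    """Count how many epochs execute before early stopping triggers.

    `val_losses` stands in for the losses a real training loop would produce.
    """
    stopper = EarlyStopping(patience=patience)
    epochs = 0
    for loss in val_losses:
        epochs += 1
        if stopper.should_stop(loss):
            break
    return epochs
```

In a real training loop, the call to `should_stop` would sit at the end of each epoch, right after the validation pass, so that a stalled experiment stops consuming hardware budget.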

When building out a model training process, the first thing to consider is the business application and the goal of the project. Odds are high you have already defined your goal before you arrived at this step in your AI journey. However, revisiting the objective and defining how you are going to measure its success is critical when deciding on modeling approaches. The quickest way to achieve your goal while saving time and money is to start by exploring which models are available within the open source community. Many SoA architectures exist in open source form and can serve as the foundation for your data scientists to build upon. Rather than waste investment dollars reinventing the wheel, your team can use one of these existing architectures that other AI researchers spent months developing (e.g., ResNet variants for image classification, R-CNN for object detection, or BERT for Natural Language Processing). Selecting an already available model that aligns with your objective as your model base will save your team considerable development time and cost.

Once you have defined your objective and chosen a solid base model to build upon, the next decision to consider involves the tools and frameworks you will use to build your model. Right off the bat, you will want to decide whether using a training platform is the route to take or if you instead prefer to build your pipeline by hand. Low- and no-code commercial and open source training platforms (e.g., AWS SageMaker, RunwayML) can in some cases automate most of this build process for organizations. While thinking about the tradeoffs that come with this approach, it is important to make this decision based on the maturity of your data science team and ultimately settle on a solution that will allow your team to optimize its build process.

If you decide to build your training pipeline from scratch, most common programming languages (e.g., Python, R, Java) provide access to user-friendly libraries that make ML feasible to implement. Moreover, many common ML frameworks, including TensorFlow, PyTorch, scikit-learn, and others, make common AI architectures and other ML techniques easily accessible. With many of these open source languages and frameworks at your fingertips, your team should ultimately select the tools with which they are most comfortable. Tool familiarity will reduce, and hopefully eliminate, any learning curve expenses. Additionally, by using familiar tools, your team can efficiently set up all model training code and kick off their experiments without any unnecessary delays.

With a plan in place and tools identified, there are several implementation and execution factors to consider, starting with hardware. Training an ML model is computationally expensive and takes time. To minimize this training time, developers commonly leverage GPUs because they can process many computations in parallel. But this hardware comes at a cost and requires teams to balance their objectives against the amount of GPU usage they need, the number of training experiments required, and the budget. Once the hardware decision is confirmed, your development team should consider a set of experimental conditions, including:

- Will your dataset require data augmentation to increase the variation of samples your model processes? If so, your team will need to add in a preprocessing step during training that might randomly perform flips, translations, rotations, scales, crops or noise additions to the data.

- Would your model benefit from hyperparameter optimization? In other words, should you set up your experiment to test several combinations of loss functions, optimizers, learning rates, batch sizes, and numbers of layers? Free tools like Ray Tune and MLflow make it easy for teams to set up these experiments and monitor results throughout training.
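As a rough illustration of what testing several combinations means in practice, the sketch below runs an exhaustive grid search in plain Python. It does not use Ray Tune or MLflow; those tools would typically replace a hand-rolled loop like this and add scheduling and tracking on top. The names `grid_search` and `toy_validation_loss`, and the search space values, are assumptions made up for this example. The toy scoring function simply stands in for a full training run that returns a validation loss.

```python
import itertools
from typing import Any, Callable, Dict, List, Optional, Tuple

Config = Dict[str, Any]


def grid_search(
    search_space: Dict[str, List[Any]],
    evaluate: Callable[[Config], float],
) -> Tuple[Optional[Config], float]:
    """Try every combination in `search_space`; return the lowest-scoring one."""
    names = list(search_space)
    best_config: Optional[Config] = None
    best_score = float("inf")
    # itertools.product yields one tuple per combination of candidate values.
    for values in itertools.product(*(search_space[name] for name in names)):
        config = dict(zip(names, values))
        score = evaluate(config)  # in practice: train the model, return validation loss
        if score < best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_validation_loss(config: Config) -> float:
    """Hypothetical stand-in for a training run (lower is better).

    It is built so that learning_rate=0.01 and batch_size=32 score best.
    """
    lr_penalty = abs(config["learning_rate"] - 0.01) * 100
    batch_penalty = abs(config["batch_size"] - 32) / 32
    return lr_penalty + batch_penalty


# Assumed example search space: 3 learning rates x 3 batch sizes = 9 runs.
search_space = {
    "learning_rate": [0.1, 0.01, 0.001],
    "batch_size": [16, 32, 64],
}
best_config, best_score = grid_search(search_space, toy_validation_loss)
```

Note how quickly the number of runs grows: each additional hyperparameter multiplies the experiment count, which is why the hardware and budget decisions above should be made with the size of the search in mind.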

Building a successful model training process requires several key considerations. As a result, this step in the ModelOps process can be the lengthiest and most computationally expensive, which also makes it one of the largest direct costs. To minimize budgetary impact, it is important to select the combination of model approach, tools, frameworks, and experimental factors that best suits your use case and the maturity of your technical team.

At the end of the day, remember that every AI investment is unique and will look different. For that reason, it is important to always make these decisions based on what makes the most sense for your organization.

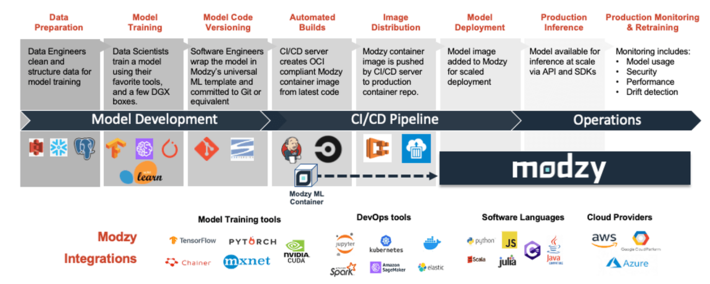

Make sure to check out our next blog in the ModelOps series: Model Code Versioning: Reduce Friction. Create Stability. Automate. To learn more, visit modzy.com.