In earlier posts, we learned about classic convolutional neural network (CNN) architectures (LeNet-5, AlexNet, VGG16, and ResNets). We created all the models from scratch using Keras, but we didn’t train them because training such deep neural networks requires high computation cost and time. Fortunately, transfer learning lets a model trained on one task be applied to other tasks. In other words, a model trained on one task can be adjusted or fine-tuned to work for another task without explicitly training a new model from scratch. We refer to such a model as a pre-trained model.

Pre-trained Model

The pre-trained classic models are already available in Keras as Applications. These models are trained on the ImageNet dataset to classify images into one of 1000 categories or classes. The pre-trained models come in two parts: the model architecture and the model weights. The architectures are installed along with Keras, but the weights are large files that are downloaded (and cached locally) when a model is instantiated.

The following models are available in Keras:

- Xception

- VGG16

- VGG19

- ResNet50

- InceptionV3

- InceptionResNetV2

- MobileNet

- DenseNet

- NASNet

- MobileNetV2

All of these architectures are compatible with all the backends (TensorFlow, Theano, and CNTK).

For more details and reference, please visit: https://keras.io/applications
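As a quick sketch (assuming a reasonably recent Keras installation), the families listed above map onto constructors in keras.applications as shown below. DenseNet and NASNet are families rather than single models, so they appear as DenseNet121/DenseNet169/DenseNet201 and NASNetMobile/NASNetLarge. The dictionary name KERAS_APPLICATIONS is a hypothetical choice for illustration.

```python
# Map each architecture family listed above to its constructor(s) in keras.applications.
# Only the constructors are referenced here; no model is built and no weights are downloaded.
from keras import applications

KERAS_APPLICATIONS = {
    "Xception": [applications.Xception],
    "VGG16": [applications.VGG16],
    "VGG19": [applications.VGG19],
    "ResNet50": [applications.ResNet50],
    "InceptionV3": [applications.InceptionV3],
    "InceptionResNetV2": [applications.InceptionResNetV2],
    "MobileNet": [applications.MobileNet],
    # DenseNet and NASNet are families with several variants each.
    "DenseNet": [applications.DenseNet121, applications.DenseNet169, applications.DenseNet201],
    "NASNet": [applications.NASNetMobile, applications.NASNetLarge],
    "MobileNetV2": [applications.MobileNetV2],
}

for family, constructors in KERAS_APPLICATIONS.items():
    print(f"{family}: {len(constructors)} constructor(s)")
```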

Loading a Pre-trained Model in Keras

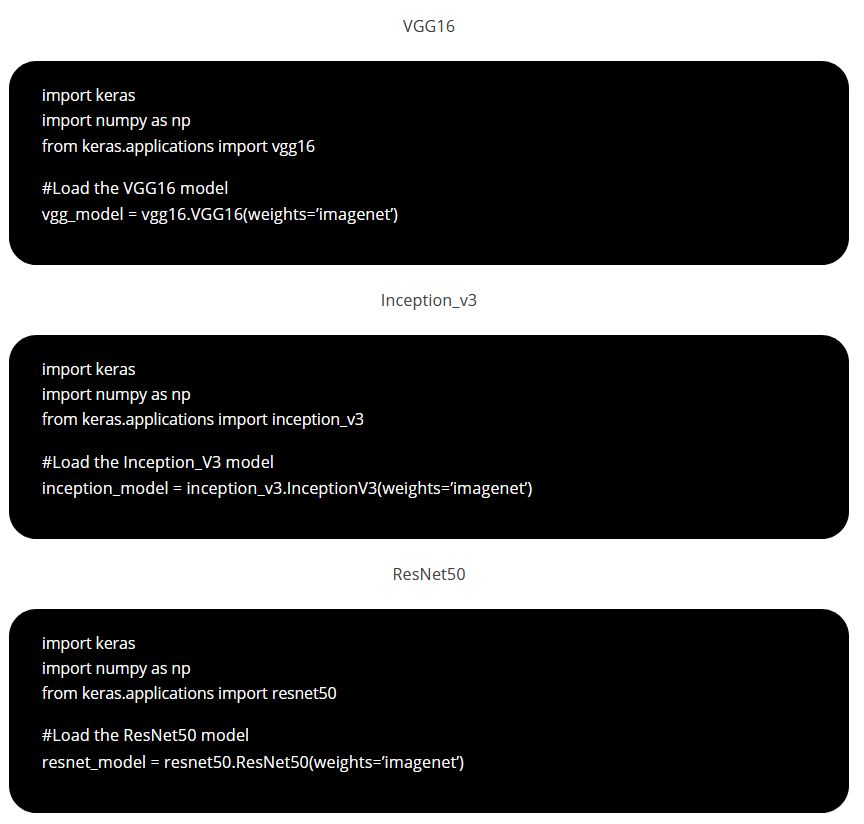

First, we import Keras and the required models from keras.applications, and then we instantiate each model architecture along with the ImageNet weights. If you want only the model architecture, instantiate the model with weights=None.

Note: For the exercises below, we share code for four different models, but you only need to use the one you require.

Load and pre-process an image

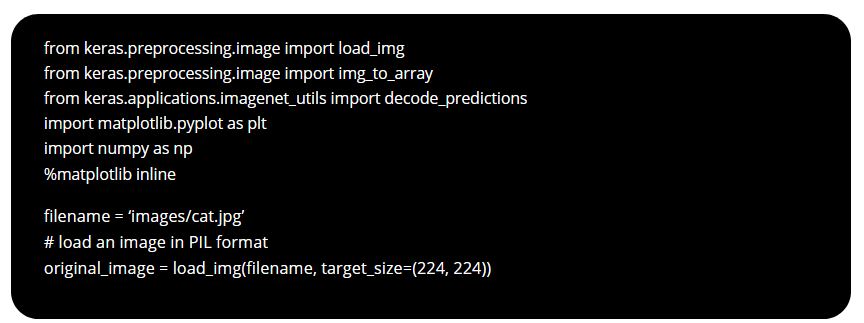

We will use the Keras functions for loading and pre-processing the image. The following steps are carried out:

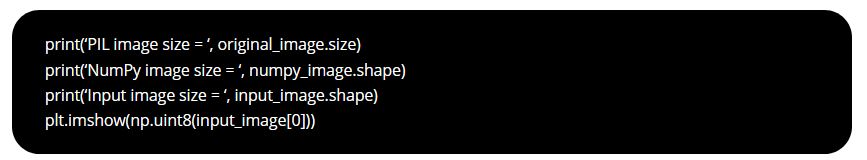

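1- Load the image using the load_img() function, specifying the target size (InceptionV3 expects 299×299 images by default, while VGG16, ResNet50 and MobileNet use 224×224).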

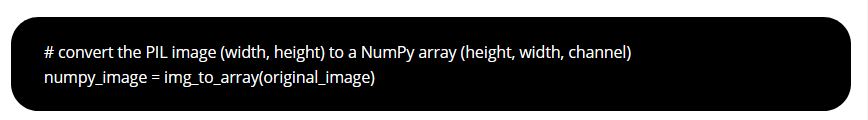

2- Keras loads the image in PIL format (width, height), which is converted into a NumPy array (height, width, channels) using the img_to_array() function.

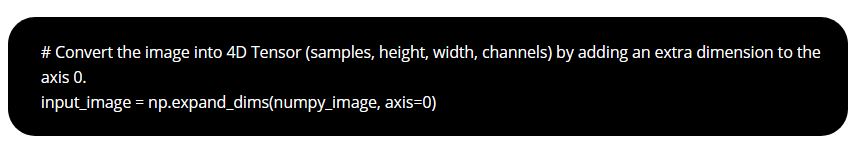

3- Then the input image is converted to a 4-dimensional tensor (batch_size, height, width, channels) using NumPy’s expand_dims() function, because the models expect a batch of images.

You can print the shape of the image after each step to check the conversion.

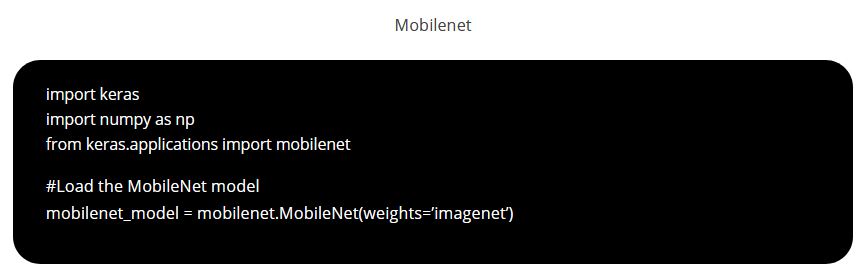

4- Normalizing the image

Different models expect differently scaled inputs: some scale pixel values to the range 0 to 1 or -1 to +1, while “caffe”-style models (such as VGG16 and ResNet50) convert the image from RGB to BGR and subtract the ImageNet mean from each channel. We don’t need to worry about these internal details, because each model module provides a preprocess_input() function that normalizes the image the way that model expects.

```python
from keras.applications import inception_v3, mobilenet, resnet50, vgg16

# preprocess for vgg16
processed_image_vgg16 = vgg16.preprocess_input(input_image.copy())

# preprocess for inception_v3
processed_image_inception_v3 = inception_v3.preprocess_input(input_image.copy())

# preprocess for resnet50
processed_image_resnet50 = resnet50.preprocess_input(input_image.copy())

# preprocess for mobilenet
processed_image_mobilenet = mobilenet.preprocess_input(input_image.copy())
```
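To see what preprocess_input() does internally, the sketch below reimplements the two normalization styles used by our four models and compares them with the Keras functions: MobileNet and InceptionV3 scale pixels to [-1, 1] (“tf” style), while VGG16 and ResNet50 use “caffe” style (RGB to BGR, then subtract the ImageNet channel means). tf_style() and caffe_style() are hypothetical helpers for illustration only; in practice, call each model’s own preprocess_input().

```python
# Reimplement the two preprocessing styles and compare them with Keras.
import numpy as np
from keras.applications import mobilenet, vgg16

# ImageNet channel means in BGR order, as subtracted by "caffe"-style preprocessing.
IMAGENET_MEAN_BGR = np.array([103.939, 116.779, 123.68])


def tf_style(batch):
    """Scale pixel values from [0, 255] to [-1, 1]."""
    return batch / 127.5 - 1.0


def caffe_style(batch):
    """Reverse the channel axis (RGB -> BGR), then subtract the per-channel means."""
    return batch[..., ::-1] - IMAGENET_MEAN_BGR


rng = np.random.default_rng(0)
batch = rng.uniform(0, 255, size=(1, 4, 4, 3)).astype("float32")

# .copy() because older Keras versions modify float arrays in place.
print(np.allclose(tf_style(batch), mobilenet.preprocess_input(batch.copy()), atol=1e-4))
print(np.allclose(caffe_style(batch), vgg16.preprocess_input(batch.copy()), atol=1e-3))
```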

Predict the Image

Use the model.predict() function to get the class probabilities, and convert them into human-readable labels with the decode_predictions() function.

```python
from keras.applications.imagenet_utils import decode_predictions

# vgg16
predictions_vgg16 = vgg_model.predict(processed_image_vgg16)
label_vgg16 = decode_predictions(predictions_vgg16)
print('label_vgg16 = ', label_vgg16)

# inception_v3
predictions_inception_v3 = inception_model.predict(processed_image_inception_v3)
label_inception_v3 = decode_predictions(predictions_inception_v3)
print('label_inception_v3 = ', label_inception_v3)

# resnet50
predictions_resnet50 = resnet_model.predict(processed_image_resnet50)
label_resnet50 = decode_predictions(predictions_resnet50)
print('label_resnet50 = ', label_resnet50)

# mobilenet
predictions_mobilenet = mobilenet_model.predict(processed_image_mobilenet)
label_mobilenet = decode_predictions(predictions_mobilenet)
print('label_mobilenet = ', label_mobilenet)
```
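For each image, decode_predictions() returns a list of (class_id, class_name, score) tuples for the top five classes by default, downloading the ImageNet class index file on first use. The ranking part can be sketched with NumPy alone; top_k() is a hypothetical helper that returns class indices rather than names.

```python
# Rank the ImageNet classes for each image by predicted probability.
import numpy as np


def top_k(predictions, k=5):
    """Return (class_index, score) pairs for the k best classes of each image."""
    results = []
    for scores in predictions:  # predictions has shape (batch_size, 1000)
        best = np.argsort(scores)[-k:][::-1]  # indices of the k largest scores, best first
        results.append([(int(i), float(scores[i])) for i in best])
    return results
```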

Summary:

We can use pre-trained models as a starting point for our training process, instead of training our own model from scratch.
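As a minimal sketch of that idea (one common approach, not the only one), the code below freezes a pre-trained MobileNet and adds a new classification layer for a different task. build_transfer_model() and num_classes are hypothetical names; you would then call model.fit() on your own labelled images.

```python
# Reuse a pre-trained MobileNet as a frozen feature extractor with a new classifier head.
import keras
from keras import layers
from keras.applications import mobilenet


def build_transfer_model(num_classes, weights="imagenet", input_shape=(224, 224, 3)):
    # include_top=False drops the 1000-class ImageNet classifier;
    # pooling="avg" turns the final feature maps into one vector per image.
    base = mobilenet.MobileNet(
        weights=weights, include_top=False, input_shape=input_shape, pooling="avg"
    )
    base.trainable = False  # freeze the pre-trained weights

    inputs = keras.Input(shape=input_shape)
    features = base(inputs, training=False)  # keep BatchNorm layers in inference mode
    outputs = layers.Dense(num_classes, activation="softmax")(features)

    model = keras.Model(inputs, outputs)
    model.compile(optimizer="adam", loss="categorical_crossentropy", metrics=["accuracy"])
    return model
```

Only the new Dense layer is trained at first; unfreezing some of the top layers of the base model afterwards, with a small learning rate, is the usual next step in fine-tuning.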
