As more and more people become conversant in analytics, demand for it is becoming more pronounced in every field, and product is no different. Introducing analytics in some form into the products you own is almost inevitable. However, before you open the gates to this world of magic, there are three questions you should try to answer.

These three basic questions will help you plan your analytics strategy better and will act as a compass in times of uncertainty.

Why analytics:

It is great that you have decided to embark on this journey, even if the motivation is a fear of missing the bandwagon. Nevertheless, without answering this question, your team will always be stuck in directionless busy work. The question is simple: why do you want to introduce analytics to the product? As McKinsey puts it, without the right question, the outcome would be marginally interesting but monetarily insignificant.

- Is it to enable end users of your product?

- Will it serve your internal product intelligence?

- Is it because every other product has some flavor of analytics?

- Are investors asking for it?

- Is it the next big strategy for the product roadmap?

- Have you hired a data science team that you do not know what to do with?

- Would it provide a better selling proposition for the sales team?

- Are your clients asking for their usage statistics?

What in analytics:

It seems obvious to ask yourself what you want to build before you actually start building. The same is true for analytics.

- Do you want to enable reporting of various metrics for admins of your B2B platform?

- Would you leverage AI/ML for a product feature for end users?

- Are you looking for more in-depth product intelligence?

- Is it a good-to-have feature without much usability, or is it going to be the prime feature offering?

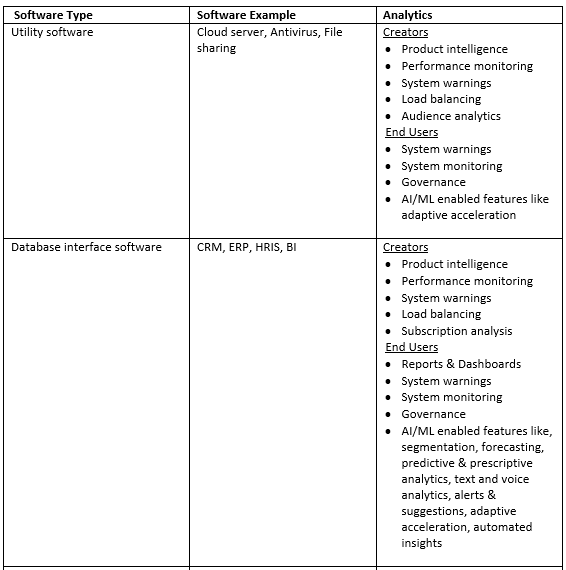

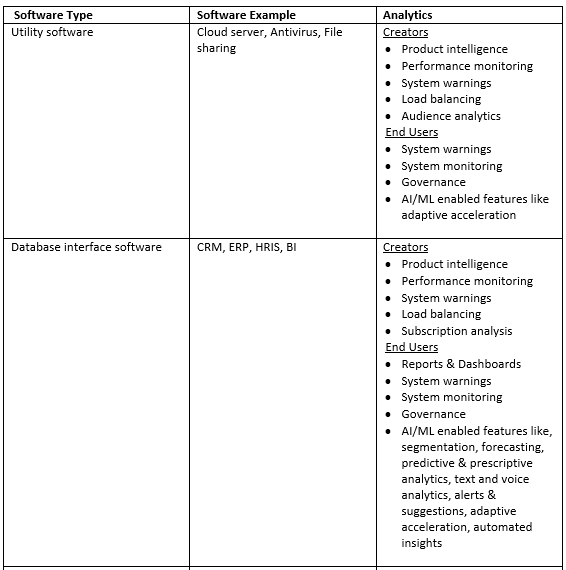

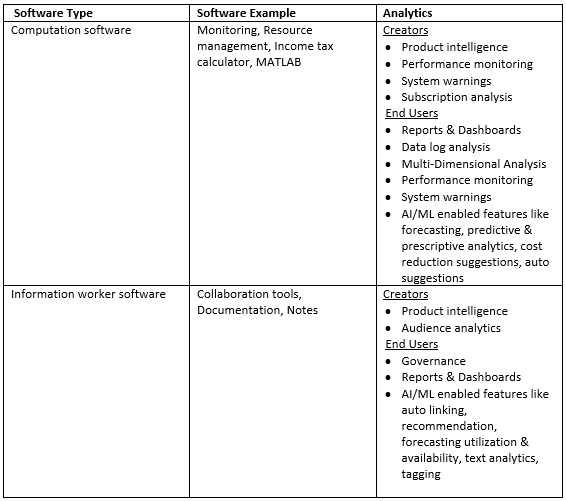

The answer to the above questions is a function of the product type, its intended use and its users.

How to analytics:

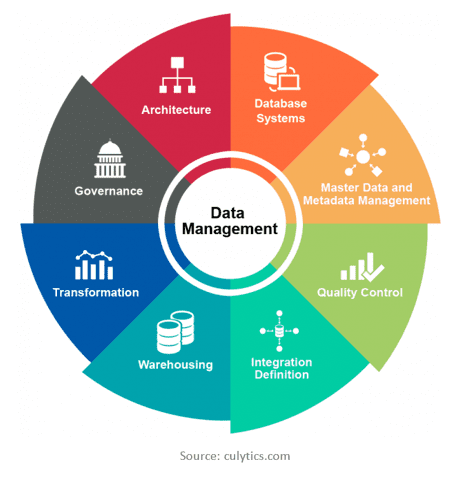

After addressing why and what to offer in analytics, the logical next step is to plan how to deliver it. When we talk about analytics, data is the centerpiece. Data demands a completely new ecosystem of processes and practices to meet regulatory, trust and demand obligations.

Although every constituent of data management calls for a dedicated article, I shall touch briefly on each and try to illustrate how it influences the analytics strategy of the product.

Database systems: The primary infrastructure, and the cornerstone of the analytics strategy, is the database that stores the data. The choice is between an RDBMS, NoSQL or a hybrid solution, followed by dozens of vendors to choose from.

Master data and metadata management: This is the definition, the identity, the identifier, the reference through which every data call is directed. It is essential for knowing and governing extensive data assets.

Quality control: You must have heard the saying ‘garbage in, garbage out’. Bad data severely hampers trust and actionable knowledge in business operations. Data has to be unique, complete and consistent.
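To make the three properties concrete, here is a minimal Python sketch of a quality report over a handful of records. The field names (`user_id`, `email`, `country`) and the rule that a country must be a two-letter uppercase code are assumptions made purely for illustration; your own rules would depend on your data.

```python
from collections import Counter

# Hypothetical required fields, chosen only for this illustration.
REQUIRED_FIELDS = ("user_id", "email", "country")


def quality_report(records):
    """Summarise uniqueness, completeness and consistency problems."""
    # Uniqueness: the same user_id should not appear more than once.
    id_counts = Counter(r.get("user_id") for r in records)
    duplicate_ids = sorted(
        uid for uid, n in id_counts.items() if uid is not None and n > 1
    )

    # Completeness: every required field must be present and non-empty.
    incomplete = [
        r for r in records
        if any(r.get(field) in (None, "") for field in REQUIRED_FIELDS)
    ]

    # Consistency: assume countries are stored as two uppercase letters.
    inconsistent = [
        r for r in records
        if r.get("country")
        and not (len(r["country"]) == 2 and r["country"].isupper())
    ]

    return {
        "duplicate_ids": duplicate_ids,
        "incomplete": len(incomplete),
        "inconsistent": len(inconsistent),
    }


sample = [
    {"user_id": 1, "email": "a@example.com", "country": "IN"},
    {"user_id": 1, "email": "a@example.com", "country": "IN"},
    {"user_id": 2, "email": "", "country": "US"},
    {"user_id": 3, "email": "c@example.com", "country": "india"},
]
print(quality_report(sample))
```

On this sample, the report flags one duplicated id, one incomplete record and one inconsistent country value. Checks like these are usually run before data reaches any dashboard.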

Integration definition: For analytics to be practical and actionable, data has to flow in from varied sources. This can be a transfer between different products or a join between multiple modules within the platform. A schema is the map or viaduct that enables this unification.

Warehouse: The transactional or raw data stored by the platform might not be ideally designed for analytics. Joining a dozen tables on the fly would impact not just the throughput but also the very feasibility of insight generation. A purpose-built data warehouse is an efficient step towards integrating data from multiple heterogeneous sources. However, it may make the system near real time, with some delay in data availability.
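As a rough illustration of the idea, the sketch below uses Python's built-in `sqlite3` module to build a small pre-aggregated summary table from raw events, so that reports read the summary instead of scanning transactional rows. The table and column names (`events`, `daily_usage`) are hypothetical, and a real warehouse would run on dedicated infrastructure with a scheduled load rather than an in-memory database.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Raw transactional data, as the platform might record it (hypothetical).
    CREATE TABLE events (user_id INTEGER, event_date TEXT, action TEXT);
    INSERT INTO events VALUES
      (1, '2024-01-01', 'login'),
      (1, '2024-01-01', 'export'),
      (2, '2024-01-01', 'login'),
      (1, '2024-01-02', 'login');

    -- Warehouse-style summary, rebuilt on a schedule rather than per query.
    CREATE TABLE daily_usage AS
    SELECT event_date,
           COUNT(DISTINCT user_id) AS active_users,
           COUNT(*) AS events
    FROM events
    GROUP BY event_date;
    """
)

rows = conn.execute(
    "SELECT event_date, active_users, events FROM daily_usage ORDER BY event_date"
).fetchall()
print(rows)
```

Because `daily_usage` is only as fresh as its last rebuild, this is also where the delay in data availability comes from.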

Transformation: Data transformation is an integral part of data integration and data warehousing, where data is converted from one format or structure to another. It involves numeric and date calculations, string manipulation or rule-based sequential data wrangling. As a step in ETL (extract, transform, load), data transformation cuts down the processing time for the end user, enabling swift reporting and insight generation.
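A minimal sketch of such a transformation step in Python might look like the following. The input fields (`name`, `signup`, `revenue_cents`), the day/month/year date format and the free/paid rule are all assumptions made up for this example.

```python
from datetime import datetime


def transform(raw):
    """Convert one raw record into a reporting-friendly shape."""
    # Date calculation: parse an assumed day/month/year string into ISO format.
    signup = datetime.strptime(raw["signup"], "%d/%m/%Y")
    return {
        # String manipulation: trim whitespace and normalise capitalisation.
        "name": raw["name"].strip().title(),
        "signup_date": signup.date().isoformat(),
        # Numeric calculation: cents to currency units.
        "revenue_usd": round(raw["revenue_cents"] / 100, 2),
        # Rule-based derivation: a simple, hypothetical tiering rule.
        "tier": "paid" if raw["revenue_cents"] > 0 else "free",
    }


raw = {"name": "  ada LOVELACE ", "signup": "05/03/2024", "revenue_cents": 1999}
print(transform(raw))
```

Doing this work once, at load time, means the reporting layer does not have to repeat it for every query.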

Governance: Governance sets the guiding principles, benchmarks, practices and rules for 1) data policies, 2) data quality, 3) business policies, 4) risk management, 5) regulatory compliance and 6) business process management. Data governance is an essential part of RFPs and government regulations, and its absence can expose the company to lawsuits, higher data and process costs, and even complete business failure.

Architecture: According to the Data Management Body of Knowledge (DMBOK), Data Architecture “includes specifications used to describe existing state, define data requirements, guide data integration, and control data assets as put forth in a data strategy.” Simply put, data architecture describes how data is collected, stored, transformed, distributed and consumed. It bridges business strategy and technical execution.

Without the power to derive information and insights from it, storing data is of no use. Once data management is in place, the next step is to plan how the data will be processed and presented.

Collaboration vs in-house development: There are tons of tools available in the market that help make sense of your data. These can be traditional BI tools like Power BI, Tableau, Qlik and MicroStrategy, which help build dashboards, or modern BI tools like Looker, Periscope and Chartio, which go beyond just dashboarding. Then there are tools like Amplitude, Firebase, Google Analytics, Mixpanel and MoEngage, which help with product analytics and understanding user behavior. These tools integrate easily with your product and provide a faster go-to-market for your analytics offering. However, there is a cost associated with this: 1) a steep recurring subscription cost and 2) less control over features. An alternative is to develop the reporting and dashboarding tool in-house. It does come with a very long gestation and development period and the operational issues of managing larger teams. However, these can be offset by the cost savings and superior control.

Real time or delayed: With business needs driving this decision, the comparison is between consuming transactional data (real-time analytics) and warehouse data (near real time) for analytical purposes. A warehouse definitely has an edge, providing more flexibility and scope, but real-time reporting has its own charm.

AI/ML: Artificial intelligence and machine learning are the latest buzzwords, with something as humble as macro automation being classified as AI/ML. However, genuine AI/ML solutions give the product a differentiated offering along with essential added value for end users. The main concern with AI/ML implementation is cost: whether human talent or infrastructure, it does not come cheap. Not to forget the much-needed patience and trust that leaders have to invest. Hence, going ahead has to be a well-thought-through business decision rather than a hasty push from the IT department.

Essentially, a well-thought-out plan that considers demand, capabilities, resources, the company’s management, business needs, and legal and regulatory requirements, rather than a knee-jerk reaction, gives the analytics implementation the best chance of success.