A while back, I was reading an article posted on Facebook about Clovis people found alive and well in Florida, with a picture featuring tribesmen (see below). The quality of the picture was poor, and the URL was very suspicious: baynews9.com.ddwg.clonezone.link, crafted to make it appear that the story came from Baynews9.com. It turned out that the picture (and thus the whole story) was fake: these are real people, but they live in Peru; see here for a YouTube video about them.

These tribesmen are real, but they live in Peru, not in Florida
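The suspicious URL above follows a common pattern: a trusted domain name appears at the start of the hostname, while the site actually belongs to whatever domain the hostname ends with. Below is a minimal sketch of a check for that one pattern. The function name `impersonates` is hypothetical, and the check is deliberately naive: it does not consult a public suffix list and will not catch misspelled look-alike domains.

```python
from urllib.parse import urlsplit


def impersonates(url: str, trusted_domain: str) -> bool:
    """Return True if trusted_domain appears inside the hostname
    without the hostname actually belonging to that domain."""
    # urlsplit only fills in .hostname when the URL has a "//" part,
    # so add one for bare hostnames.
    if "//" not in url:
        url = "//" + url
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    trusted = trusted_domain.lower()

    # A genuine host is the trusted domain itself or one of its subdomains.
    if host == trusted or host.endswith("." + trusted):
        return False

    # Otherwise, look for the trusted domain's labels as a run
    # somewhere inside the hostname's labels.
    labels = host.split(".")
    wanted = trusted.split(".")
    n = len(wanted)
    return any(labels[i:i + n] == wanted for i in range(len(labels) - n + 1))


print(impersonates("baynews9.com.ddwg.clonezone.link", "baynews9.com"))
print(impersonates("https://www.baynews9.com/", "baynews9.com"))
```

The first call flags the hostname from the fake story, and the second accepts a genuine subdomain of the trusted site.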

My question is: how do we detect that a story is fake? The picture might have metadata embedded in it, allowing the data scientist to find the real source, unless it is a screenshot. Even then, a screenshot raises the likelihood that the picture is stolen, as journalists want to make sure that all their pictures are traceable and correctly attributed to them (not least to avoid copyright litigation), which means having the proper metadata. Yet even real journalists make mistakes or borrow images, or sometimes publish images not related to the article: for instance, to illustrate a story about a fire in New York, they use a picture of a fire in Miami. In that case, the picture is fake but the story is true.
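As a first step toward the metadata check described above, one can at least test whether a JPEG file carries an EXIF block at all; screenshots and re-saved images often do not. The sketch below uses only the Python standard library and walks the JPEG segment headers looking for an APP1 segment that starts with the `Exif` signature. The function name `has_exif` is hypothetical, and the parser assumes a well-formed file. It only reports presence and does not decode the fields, which a dedicated imaging library would be needed for.

```python
import struct


def has_exif(data: bytes) -> bool:
    """Return True if the JPEG bytes contain an APP1/Exif segment."""
    if data[:2] != b"\xff\xd8":          # every JPEG starts with the SOI marker
        return False
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:            # not at a marker: treat as malformed
            return False
        marker = data[pos + 1]
        if marker in (0xDA, 0xD9):       # start of scan / end of image
            return False                 # metadata segments come before these
        # The 2-byte big-endian length counts itself but not the marker.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        if length < 2:
            return False
        payload = data[pos + 4:pos + 2 + length]
        if marker == 0xE1 and payload.startswith(b"Exif\x00\x00"):
            return True
        pos += 2 + length
    return False
```

To use it on a file, read the bytes with `open(path, "rb").read()` and pass them in. A negative result does not prove that a picture is stolen, only that its origin cannot be traced this way.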

In a different case, I was browsing TripAdvisor reviews of the restaurant Alestine in Inuvik (in the far North) when I came across a review with a picture of the restaurant featuring trees; see below. There are no trees in Inuvik, much less big deciduous trees.

Fake picture: there is no tree in Inuvik

Thus, this restaurant review is either wrong or fake. Indeed, there is supposedly a tree known as the farthest-north tree, and it stands much farther south than Inuvik. That tree was cut down recently; see picture below.

How can an algorithm detect such inconsistencies? It seems very difficult to identify them without using a system with millions of rules and advanced image feature extraction. Yet, for fake Amazon reviews, I designed two algorithms: one that can catch them, and one that creates fake reviews that cannot be detected.

Finally, the picture below is another example that raises some questions. I investigated it in this article.

A glowing waterfall a few miles away from the (now extinct) famous firefall: is it real? Or exaggerated?

Removing fake accounts: false positives

All fake-content detection algorithms produce false positives and false negatives. On Facebook, for instance, there is a focus on detecting fake accounts, as they are responsible for spreading the fake stories or the fake “likes” in the first place. Yet recently this algorithm went as far as blocking genuine users. Facebook asks for a cell phone number to send a code verifying that you are real, then still blocks you, forcing you to use a disposable phone number if you really want to sign up on the platform. In short, the algorithm generates an increasingly large number of false positives (people with too few friends, for instance, or people who care about their privacy and do not post a profile picture), yet fake news keeps flowing unabated.

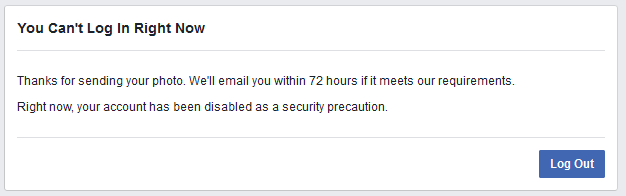

Below is a screenshot of the message displayed to users whom Facebook suspects of being fake:

The issue is discussed here.
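To see why a detector can block many genuine users and still miss fake accounts, it helps to put numbers on the false positives and false negatives discussed in this section. The sketch below uses made-up counts, not Facebook data: one million accounts of which 1% are fake, and a hypothetical detector that catches 90% of the fakes while wrongly flagging 2% of genuine users. The function name `detection_rates` is also just for illustration.

```python
def detection_rates(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Summarize a fake-account detector from its confusion-matrix counts.

    tp: fake accounts flagged       fp: genuine accounts flagged
    tn: genuine accounts left alone fn: fake accounts missed
    Assumes none of the denominators below is zero.
    """
    return {
        "precision": tp / (tp + fp),            # share of flagged accounts that are fake
        "recall": tp / (tp + fn),               # share of fake accounts that get flagged
        "false_positive_rate": fp / (fp + tn),  # share of genuine users flagged
    }


# Hypothetical counts: 10,000 fake and 990,000 genuine accounts.
rates = detection_rates(tp=9_000, fp=19_800, tn=970_200, fn=1_000)
for name, value in rates.items():
    print(f"{name}: {value:.4f}")
```

With these assumed numbers, precision is 9,000 / 28,800 = 0.3125, so more than two thirds of the blocked accounts belong to genuine users, even though only 2% of genuine users are affected and 1,000 fake accounts still get through.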

Fake clicks

Along the same lines, Google is facing yet another lawsuit about delivering bad traffic to advertisers. These class action lawsuits pop up every now and then, and maybe Google calculated that it makes more money out of bogus and poor clicks than it loses in these lawsuits. Maybe, eventually, the fake activity generates profits not just for the scammers, spammers and fraudsters, but also for the platforms hosting their activity. This could be the main issue, as algorithms to detect fraudsters should perform better, especially those implemented by Facebook or Google, with big data science teams working behind the scenes.

Finally, not all fake stuff is genuinely fake. For instance, Donald Trump has two people tweeting via his Twitter account: the real Trump, and another one who, obviously, can’t be real. They have very different communication styles (that’s how they were detected), yet the “fake” Trump is approved to post by the real one: click here for details.
