New & Notable

Decentralized ML: Developing federated AI without a central cloud

Tosin Clement | June 3, 2025 at 9:49 am

Recently Added

Decentralized ML: Developing federated AI without a central cloud

Tosin Clement | June 3, 2025 at 9:49 am
Introduction – Breaking the cloud barrier: Cloud computing has been the dominant paradigm of machine learning for y...

How to make AI work in QA

Saqib Jan | June 2, 2025 at 11:23 am
AI has radically changed Quality Assurance, breaking old, inefficient ways of test automation, promising huge leaps in sp...

How enterprises can secure their communications

Gaurav Belani | May 30, 2025 at 3:31 pm
This post breaks down the biggest threats to enterprise communication and succinctly walks you through the strategies th...

AI skills for the modern workplace: A guide for knowledge workers

Dan Lawyer | May 27, 2025 at 11:03 am
While AI is being adopted across organizations, knowledge gaps still exist in using it mindfully and effectively in ever...

Stay ahead of the sales curve with AI-assisted Salesforce integration

Anas Baig | May 19, 2025 at 4:52 pm
Any organization with Salesforce in its SaaS sprawl must find a way to integrate it with other systems. For some, this i...

Generative AI in software development: Does faster code come at the cost of quality?

Devansh Bansal | May 16, 2025 at 4:39 pm
From creating comprehensive essays to writing intriguing fiction, there’s hardly anything untouched by the impact of g...

The future of DevOps: Using AI, automation and HPC

Martin Summer | May 15, 2025 at 11:48 am
Introduction: DevOps has long been the backbone of modern software development, enabling faster development cycles and gr...

5 challenges in implementing AI in video surveillance

Zachary Amos | May 13, 2025 at 12:56 pm
Artificial intelligence (AI) makes security cameras more versatile and useful. It can recognize suspicious behavior in r...

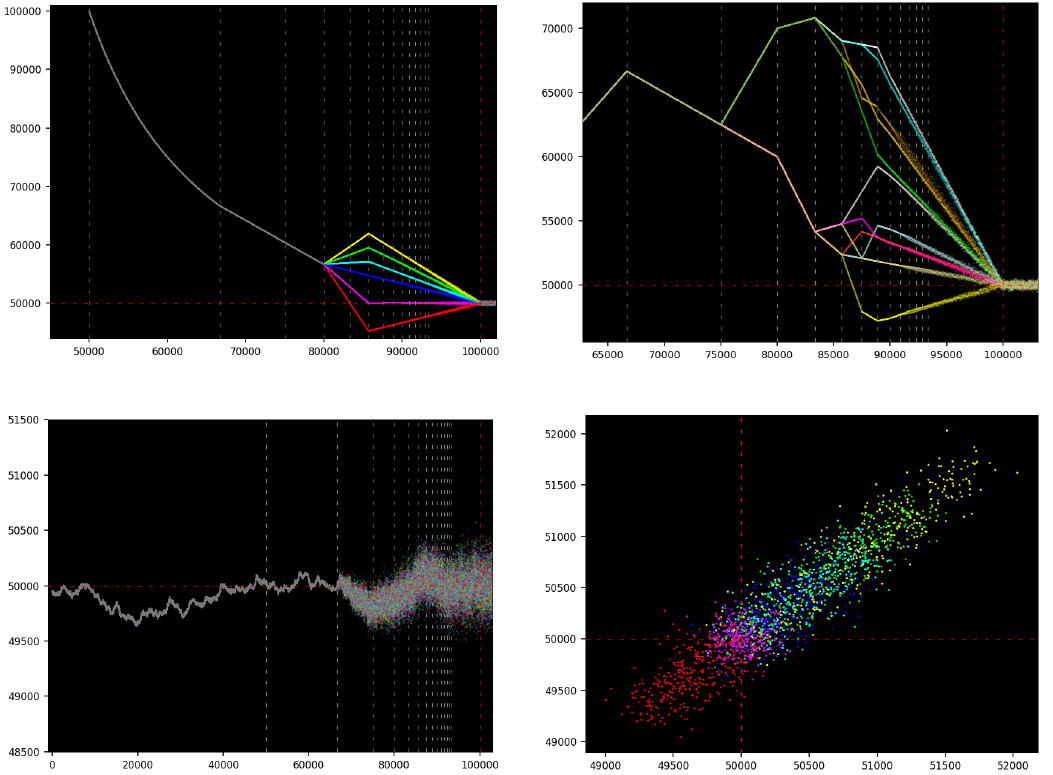

New Book: 0 and 1 – From Elemental Math to Quantum AI

Vincent Granville | May 11, 2025 at 11:41 pm
This book opens up new research areas in theoretical and computational number theory, numerical approximation, dynamical...

The power of distributed data management for edge computing architectures

Edward Nick | May 6, 2025 at 9:37 am
A profound transformation occurs as organizations generate unprecedented volumes of data at the network edge. Edge compu...

New Videos

A/B Testing Pitfalls – Interview w/ Sumit Gupta @ Notion

Interview w/ Sumit Gupta – Business Intelligence Engineer at Notion
In our latest episode of the AI Think Tank Podcast, I had the pleasure of sitting…

Davos World Economic Forum Annual Meeting Highlights 2025

Interview w/ Egle B. Thomas
Each January, the serene snow-covered landscapes of Davos, Switzerland, transform into a global epicenter for dialogue on economics, technology, and…

A vision for the future of AI: Guest appearance on Think Future 1039

As someone who has spent years navigating the exciting and unpredictable currents of innovation, I recently had the privilege of joining Chris Kalaboukis on his show, Think Future.…