Your regulatory data project likely has no use case for design-time data lineage.

TL;DR

Mapping Data Lineage at design time, for its own sake, has no regulatory use case or ROI. Buying a specialist tool to support that mapping has even less ROI.

Regulations see that kind of documentary data lineage as ancillary at best. Most regulators won’t ask to see the visualizations but will ask for the specific data values that make up the regulatory reports, i.e., a query-time view of the data and where it came from. Put another way, they will ask for the workings: the constituent values for each reported item, as they were when the report was run.

To meet those regulations’ requirements, software vendors will have you believe that buying their lineage tool will do just fine. Rather, you need to invest in capabilities that capture data provenance at query time in a data store. This store will include the data flow path and values used in the calculations, and the results reported. That store will hold the data bitemporally so that the clock can be wound back to when those queries were run. Being able to interrogate, analyze, and publish that data from any point in time will meet the form and the substance of the regulations and, most importantly, give you a host of valuable insights if you hold that data.

Finally, good data lineage visualization is a consequence of a well-managed estate rather than a goal in and of itself. A well-realized API or Data Quality framework will provide data lineage metadata as a byproduct of its delivery.

Introduction

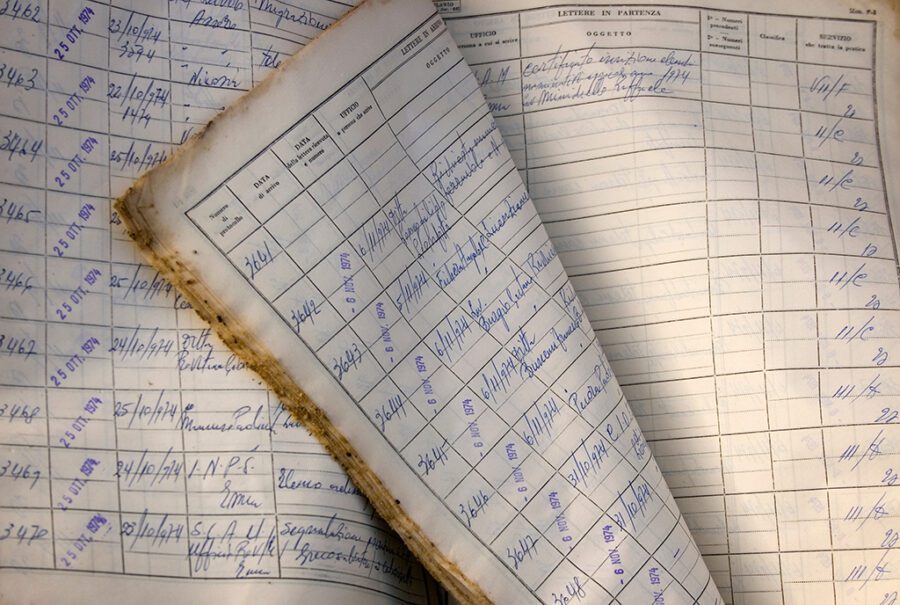

Just after the publication of BCBS 239, I was sitting in a meeting with the Risk function of a large bank. A consultant declared he was “passionate about lineage” and proudly walked us through examples of his work in spreadsheets and drawing tools. All static and manually compiled by an army of graduates. The consultant leaned back in his chair, looking inordinately self-satisfied while I saw six months of work and hundreds of thousands of pounds squandered – leaving the bank no closer to meeting its regulatory requirements.

A couple of years later, in another bank, a vendor presented a slide on their design-time data lineage tool, which worked by scanning platforms’ metadata, and asserted *that it may* be able to help with regulatory compliance, especially BCBS 239, CCAR, and GDPR. When prompted, they could provide no further explanation. The product was rejected.

In 2021, the Fed asked a bank to provide all of the specific numbers used to make up a series of risk metrics on a report, and where those numbers came from, as of when the report was generated. It took that bank eight weeks and many manual queries to comply.

What does this mean?

Data lineage for its own sake, mapped at design time, has little or no value and, post-2021, has no explicit regulatory justification.

Lineage vendors still invoke BCBS 239, CCAR, IFRS-9, and GDPR as major reasons for buying their tools. As we’ve seen with the Fed’s recent request, buying a specialist data lineage tool that scans systems, code, and related metadata is a waste of time and money if your primary use case is regulatory compliance and all you’re doing is mapping and visualizing data flows.

Data Lineage isn’t mentioned at all in the commonly cited regulations. In Dr. Irina Steenbeek’s excellent discussion on Data Lineage, she notes:

My professional journey to data lineage has started with investigating the Basel Committee requirements [for BCBS 239]…. Many specialists consider data lineage the ultimate ‘remedy’ to meet these requirements.

The funny thing is that you never find the term ‘data lineage’ literally mentioned in these regulatory documents.

Dr. Irina Steenbeek, Data Lineage 101

This is the argument advanced by the great Australian jurist* Dennis Denuto: “…It’s the vibe of the thing…”

*not a great jurist

The vendors and experts try to assert, with varying degrees of success, that design-time Data Lineage is implied by both the language of the regulations and the current set of best practices in data management. They also assert that design-time data lineage will be enough for compliance. Dr. Steenbeek’s series of articles on lineage tries to fit that conclusion by applying best practice models and their descriptions of data flows and movement to selected, relevant bits of legislation.

To their credit, the articles are pretty persuasive, but they are all predicated on the idea that a static, design-time representation of Data Lineage, the sort of Data Lineage offered by the majority of vendors, is enough.

But, as we’ve seen from the Fed’s latest request of that bank, the Fed did not ask for a static representation of the data flows; rather, it asked for the actual, contemporaneous values used in the risk reports. A set of static diagrams was not enough.

What now for Design Time Data Lineage?

If we consider the implications of purchasing a tool solely to profile and scan data stores and code repositories so that we can visualize data flows, as a pre-emptive attempt to comply with several pieces of legislation, it throws up some questions:

- How often do you scan the enterprise? Weekly? Daily? With each code deployment?

- Do you scan production platforms? If so, what contention does this cause?

- How do you account for End User Computing solutions you don’t know about?

- How do you account for manual interventions you don’t know about?

- What *actual* value is there in seeing a visualization of metadata? (Hint, there’s very little).

If we can satisfactorily answer these and demonstrate immediate business value, then we can proceed; otherwise, we need to consider our next steps carefully.

How do we comply with the legislation if specialist lineage tools are of limited value?

Complying with the legislation is simple; you begin by reading it and making no judgments ahead of time as to what it asks.

For this article, let’s focus on the previously mentioned BCBS 239.

What is BCBS 239?

BCBS 239, or the Basel Committee on Banking Supervision’s Principles for effective risk data aggregation and risk reporting, was written to force the banks’ hands to remediate their data architectures so risk could be reported quickly and accurately: architectures that would allow reports to be run in both regular conditions and under “stress” conditions, like those of the 2008 crash, the event that precipitated these regulations. For example, in 2008, I worked for a medium-sized asset manager (£100bn AUM), and it calculated its exposure to Lehmans and AIG over 5 days manually. This was considered excellent at the time. The Fed example above took eight weeks, more than a decade later. This is objectively not excellent.

Two objectives specified in paragraph 10 of BCBS 239 are particularly relevant here:

- Enhance the management of information across legal entities while facilitating a comprehensive assessment of risk exposures at the global consolidated level;

- Improve the speed at which information is available, and hence decisions can be made;

These raise the obvious question: how does a static view of data lineage at design time help with those objectives? It doesn’t. It might help in scoping the work for those objectives or in documentation, but it doesn’t answer the exam question, i.e., do you know where your data is, AND can you quickly and accurately do your risk aggregations?

The Language and Priorities in BCBS 239

While section headings aren’t persuasive in statutory interpretation, they can give you a clue as to the drafters’ intentions for the regulations in question. So, what are the BCBS 239 drafters’ intentions here?

Data Quality. That’s it.

BCBS 239 is about high-quality risk data.

The majority of the principles’ names are redolent of the DMBOK data quality dimensions, in particular:

- Principle 3: Accuracy and Integrity

- Principle 4: Completeness

- Principle 5: Timeliness

- Principle 7: Accuracy

- Principle 8: Comprehensiveness

- Principle 9: Clarity and Usefulness

Static data lineage visualization and mapping don’t help much with these things.

Let’s dive into specifics.

What are the detailed requirements BCBS 239 is asking for?

Robustness

The most important clue about the priorities of BCBS 239 is the repetition of the phrase, or variations of the phrase, “under normal or stress/crisis conditions” in relation to risk reporting. It is repeated 17 times across the whole document and is referenced specifically in principles 3, 5, 6, 7, 10, and 12. The regulations are challenging the banks to have systems and processes that are robust, flexible, and scalable enough to run under BAU operations or any ad hoc situation, including what-ifs proposed by the regulators.

This underlies everything.

How does a design-time data lineage map help here? It doesn’t. It might give a starting point to scope changes to your infrastructure, but no more. In a crisis, a map of your metadata and systems generated by a tool that ignores EUCs will be of limited value.

The Why

BCBS 239 is extremely concerned with the why. Can the bank explain their aggregation approach, why inconsistency occurs, and why any errors occurred? Can the bank explain the reason why this manual process was preserved? Is there a clear and consistent understanding of what a term, a KPI, or a value means across the whole data landscape? BCBS 239 cares a lot about the why: why have you made your decision; why you’ve chosen this data; why you call the thing that thing.

How does a design-time view of data flows help with the why? It might give you a place to start with which “whys” to explain, but no more than that.

Efficiency

Can you run your risk reports quickly ‘under normal or stress/crisis conditions’? BCBS 239’s view is that rationalizing your IT estate and getting your EUCs under control will help. Principles 2 and 3 care a great deal about this.

We see that “A bank should strive for a single authoritative source of data for risk data…” and “Data should be aggregated on a largely automated basis…”, while grudgingly accepting the existence of Dave’s magic spreadsheet: “Where a bank relies on manual processes and desktop applications (e.g., spreadsheets, databases)…it should have effective mitigants in place.” This points to the discovery and then rationalization of not just maintained systems and platforms but also EUCs. This appears to be a use case tailor-made for design-time data lineage discovery tools ahead of rationalization, and it is, if that tool can find Dave’s magic spreadsheet. But in reality, what the regulation is saying is: rationalize your estate down, get rid of duplicate systems, reduce the dependency on magic spreadsheets and automate, automate, automate.

Focus on the Data

One of the leading vendors of a design-time-based lineage tool focuses on this specific requirement in one paragraph:

“A bank should establish integrated data taxonomies and architecture across the banking group, which includes information on the data characteristics (metadata) and the use of single identifiers and/or unified naming conventions for data, including legal entities, counterparties, customers, and accounts.”

From that one paragraph of the regulation, that vendor extrapolates that their tool will meet all of the requirements of BCBS 239.

Presumably, the other 88 paragraphs are unimportant.

The vendor homes in specifically on the word “metadata” while ignoring the rest of the requirements in that paragraph. The “integrated data taxonomies”, i.e., sets of well-defined terms, definitions, and their relationships, suggest “the use of single identifiers” and “unified naming conventions,” applied to what look to be master data entities.

It’s safe to say the vendor is spinning this to sell their product, but in actuality, the regulator is highlighting a few important ideas here:

- Define your terms (the taxonomies)

- Simplify your data through consistent use of identifiers

- Manage your master data effectively

And deliver all of the above through an architecture that supports and promotes these behaviors. Why are they asking for this?

Because then you can focus on volatile data, the transactional data, and more quickly and accurately do your risk data aggregation ‘under normal or stress/crisis conditions’.
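As a rough illustration of why single identifiers matter for aggregation across legal entities, here is a minimal Python sketch. The entity names, local codes, group identifiers, and amounts are all invented for the example, and the function name `aggregate_exposures` is hypothetical; none of it comes from BCBS 239 itself.

```python
from collections import defaultdict

# Hypothetical master data: each legal entity's local counterparty code
# mapped to one group-wide identifier (all values invented).
COUNTERPARTY_MASTER = {
    ("UK_BANK", "LEH-001"): "CP-1001",
    ("US_BANK", "LEHMAN_BROS"): "CP-1001",
    ("UK_BANK", "AIG-77"): "CP-2002",
}

# Hypothetical transactional exposures as (entity, local code, amount).
EXPOSURES = [
    ("UK_BANK", "LEH-001", 120.0),
    ("US_BANK", "LEHMAN_BROS", 80.0),
    ("UK_BANK", "AIG-77", 50.0),
    ("US_BANK", "UNKNOWN-9", 10.0),
]


def aggregate_exposures(exposures, master):
    """Sum exposures per group-wide identifier; collect anything unmapped."""
    totals = defaultdict(float)
    unmapped = []
    for entity, local_code, amount in exposures:
        group_id = master.get((entity, local_code))
        if group_id is None:
            # No master data entry: this needs a human, which costs time.
            unmapped.append((entity, local_code, amount))
        else:
            totals[group_id] += amount
    return dict(totals), unmapped


totals, unmapped = aggregate_exposures(EXPOSURES, COUNTERPARTY_MASTER)
```

With the master data in place, the group-level exposure is a simple sum. Every row that lands in `unmapped` is a manual investigation, which is exactly what slows aggregation down in a crisis.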

Of course, the metadata is important because the metadata (particularly operational metadata) will help you measure and prove the quality of the data used in the aggregation of risk data. In fact, the next paragraph says: “Roles and responsibilities should be established as they relate to the ownership and quality of risk data and information for both the business and IT functions.” How do you prove data quality and evidence the application of controls without operational metadata?
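To show what operational metadata might look like in practice, here is a small Python sketch of a data quality control that leaves an evidence record every time it runs. The `ControlResult` structure, the `check_completeness` function, the dataset, and the owner title are all assumptions made for illustration, not a prescribed format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ControlResult:
    """Operational metadata emitted each time a data quality control runs."""
    control: str
    dataset: str
    owner: str          # the role accountable for this data's quality
    run_at: datetime
    rows_checked: int
    rows_failed: int

    @property
    def passed(self) -> bool:
        return self.rows_failed == 0


def check_completeness(rows, field, dataset, owner):
    """Count rows where `field` is missing and return the evidence record."""
    failed = sum(1 for row in rows if row.get(field) in (None, ""))
    return ControlResult(
        control=f"completeness:{field}",
        dataset=dataset,
        owner=owner,
        run_at=datetime.now(timezone.utc),
        rows_checked=len(rows),
        rows_failed=failed,
    )


# Invented sample data: one trade is missing its counterparty identifier.
trades = [
    {"trade_id": "T1", "counterparty_id": "CP-1001"},
    {"trade_id": "T2", "counterparty_id": None},
]
result = check_completeness(
    trades, "counterparty_id", "trades", "Head of Credit Risk Data"
)
```

Stored over time, records like `result` are what let you show a regulator that a control ran, when it ran, who owns the data, and what it found.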

Really, focus on the Data

BCBS 239 is fundamentally about ensuring that banks’ risk data quality is unimpeachable.

That risk data is handled as rigorously as financial data. That there are appropriate, documented controls around risk data, especially manually compiled data. That data can be reconciled to its source. That data is quickly and readily available. That the important data components that make up the risk reports are available for scrutiny.

BCBS 239 tells you that you need to manage your data. It goes so far as describing governance roles and charging them with maintaining quality. It repeatedly challenges you to be able to compile this stuff ‘under normal or stress/crisis conditions’, and even to compile risk reports with the same level of rigor if the regulator wants you to test a random ad hoc scenario.

Boiling this down, the regulator expects much more than design-time lineage diagrams. Much, much more.

Regulators want query-time lineage and full data provenance. The regulator wants to know who is responsible for the quality of each data component for each report compiled. The regulator wants to know if you can show those components for the final report output generated and not just in the case of reporting actual events, but also for any test scenarios. The regulator wants you and them to be confident in your risk reporting capabilities.

This is a long way past the capability of a design-time lineage tool. It’s integrating governance, metadata management, data quality management, and distributed query execution, all at query time.
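A minimal Python sketch of what capturing provenance at query time could look like: the report run keeps every input value, its source, and its accountable owner alongside the result, and the same code path serves both BAU and an ad hoc scenario. The class names, the `run_metric` function, the `shock` multiplier, and all sample values are hypothetical and chosen only to illustrate the idea.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Component:
    source_system: str
    record_id: str
    owner: str      # role accountable for this component's quality
    value: float


@dataclass
class ReportRun:
    metric: str
    scenario: str
    run_at: datetime
    result: float
    components: list = field(default_factory=list)


def run_metric(metric, scenario, components, shock=1.0):
    """Compute a simple summed metric and keep the inputs with the result."""
    # Record the values actually used in the calculation, after any shock.
    used = [
        Component(c.source_system, c.record_id, c.owner, c.value * shock)
        for c in components
    ]
    total = sum(c.value for c in used)
    return ReportRun(metric, scenario, datetime.now(timezone.utc), total, used)


# Invented inputs from two invented source systems.
inputs = [
    Component("trading_system", "T1", "Head of Market Risk Data", 120.0),
    Component("loans_ledger", "L9", "Head of Credit Risk Data", 80.0),
]
bau = run_metric("total_exposure", "BAU", inputs)
stress = run_metric("total_exposure", "regulator_what_if", inputs, shock=1.5)
```

Each `ReportRun` answers the regulator’s question directly: these were the numbers, this is where they came from, and this is who answers for them. A real implementation would persist the runs rather than hold them in memory.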

Tying it all together

The regulations invoked by the lineage vendors will not have their requirements met by the tools they offer. Not even the specific requirement they tend to cite.

In the case of BCBS 239, the regulator wants to encourage a rethink of a bank’s data infrastructure and its ability to provide risk reporting. They want a bank to reduce its dependence on magic spreadsheets and want to understand why a bank has made the decisions it has. They are challenging a bank to pare back its technical debt and manage its master and reference data to reduce the load on producing the reports they need. Most importantly, they want a bank to show its working: to see all of the data used at the time the reports were generated and where it went *at the time the queries were run*, and to be able to run those reports as easily in BAU as in times of stress or crisis.
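Showing the data as it stood when the queries were run implies holding it bitemporally, so that later corrections never overwrite what a report actually saw. The Python sketch below is one simple way to model that, assuming an append-only list of facts; the `Fact` and `BitemporalStore` names, the key, the dates, and the values are all invented for illustration.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class Fact:
    key: str
    value: float
    valid_from: date        # when the value applies in the business world
    recorded_at: datetime   # when the store learned about it


class BitemporalStore:
    """Append-only store: corrections are new facts, nothing is overwritten."""

    def __init__(self):
        self._facts = []

    def record(self, fact):
        self._facts.append(fact)

    def as_of(self, key, valid_on, known_at):
        """Value for `key` applying on `valid_on`, as known at `known_at`."""
        candidates = [
            f for f in self._facts
            if f.key == key
            and f.valid_from <= valid_on
            and f.recorded_at <= known_at
        ]
        if not candidates:
            return None
        # Latest applicable business date wins; ties go to the latest recording.
        return max(candidates, key=lambda f: (f.valid_from, f.recorded_at)).value


store = BitemporalStore()
key = "exposure:CP-1001"
quarter_end = date(2021, 3, 31)
# Original figure, then a correction recorded weeks later (values invented).
store.record(Fact(key, 200.0, quarter_end, datetime(2021, 4, 1, 9, 0)))
store.record(Fact(key, 215.0, quarter_end, datetime(2021, 4, 20, 9, 0)))

report_run_time = datetime(2021, 4, 2, 12, 0)
seen_by_report = store.as_of(key, quarter_end, report_run_time)
current_view = store.as_of(key, quarter_end, datetime(2021, 5, 1, 0, 0))
```

Here `seen_by_report` is the figure the report used on the day it ran, while `current_view` reflects the later correction. Both remain answerable, which is the point of keeping two time axes.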

This all raises the final question: does a design-time data lineage tool help with this? I’ll leave that to you to answer.

But what about Data Lineage as a concept? It’s useful, but only ever as a means to an end or as a byproduct of other data-related work. For instance, evaluating the consistency of data and its representation between two systems implies knowing the data flows between those systems.

Building on that notion, buying or building a data transport mechanism, such as an API framework, that provides data flow or lineage representation as a byproduct of its main purpose is money better spent than a specialist lineage tool.
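As a sketch of lineage arriving as a byproduct, the Python below shows a transport function whose main job is moving records, with the flow logged as a side effect. The `transfer` function, the in-memory `LINEAGE_LOG`, and the system names are hypothetical stand-ins for whatever your API or integration framework provides.

```python
from datetime import datetime, timezone

LINEAGE_LOG = []  # hypothetical in-memory stand-in for a lineage metadata store


def transfer(records, source, target, transform=lambda r: r):
    """Move records from source to target, logging the flow as a side effect."""
    moved = [transform(r) for r in records]
    LINEAGE_LOG.append({
        "source": source,
        "target": target,
        "record_count": len(moved),
        "fields": sorted({k for r in moved for k in r}),
        "at": datetime.now(timezone.utc),
    })
    return moved


# Invented two-hop flow: trading system -> staging -> risk report.
staged = transfer(
    [{"trade_id": "T1", "notional": 100.0}], "trading_system", "risk_staging"
)
reported = transfer(
    staged,
    "risk_staging",
    "risk_report",
    lambda r: {"trade_id": r["trade_id"], "exposure": r["notional"]},
)
flow = [(entry["source"], entry["target"]) for entry in LINEAGE_LOG]
```

Nobody set out to map lineage here, yet `flow` describes the path the data took, recorded at the moment it moved rather than inferred later by a scanner.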

But make no mistake, your business case solely for data lineage is not based on any real regulatory requirement.

Acknowledgements: Thank you to Paul Jones, Marty Pitt and Ottalei Martin. For a fully cited copy of this post, please get in touch.