The term “digital transformation” has been around for a couple of years now. Like many such terms, it began as something of a neologism, a phrase much like Big Data or, for that matter, Data Scientist, that started out more as market-speak than anything else but that, for one reason or another, accreted a second layer of meaning that is both more precise and, arguably, more useful.

For purposes of discussion, I’d like to ascribe a definition to the term that may not necessarily be its original meaning, but that more accurately describes that secondary technical intent. Specifically:

A digital transformation is the restructuring of an organization in such a fashion that it makes the most efficient use of the data lifecycle to provide information to that organization for planning, designing, production, monitoring, and decision-making.

Understanding the Modern Data Lifecycle

The Data Lifecycle, in turn, can be thought of as the analog of the software development life cycle (SDLC) that underlies so many programming methodologies. It is not Agile, because data is not, in general, code. As such, many preconceptions about Agile methodologies should be thrown out of the window for now, to be retrieved (perhaps) once Agile has had time to consider its recondite past.

The Data Lifecycle (or just DLC) can be broken down into the following stages:

- Acquisition. This is when information is first created as a way of associating a (unique) identifier with a given conceptual entity. The data can come in through human action, through a specific “name” server, or through some kind of transformation on composite keys.

- Accretion and Dynamic Modeling. This phase, which follows shortly after acquisition, involves binding attributes (literals) to the new entity and establishing relationships between it and existing entities. Note that this already differs from existing data processes in that modeling is not done statically, ahead of time, but is instead an evolutionary process that occurs dynamically over time, in the same way that languages evolve. In semantic terms, this is often called the Open World Assumption, or, to put it in non-technical terms: you don’t necessarily know what information exists about an entity until you need to know it.

- Curation and Classification. This is the process of ensuring that data that is defined serves both human and computational needs. It can be done by a librarian, it can be done via machine learning, but most often it will be done by a librarian armed with machine-learning tools, a combination which will henceforth be known as Curators.

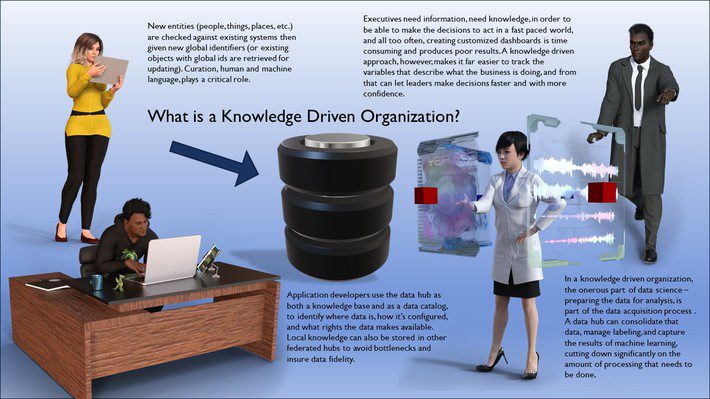

- Tool Building. As information begins to emerge as a specific organizational ontology, several things happen. Data that had previously been produced primarily as an artifact of application development now increasingly becomes forward leading, where the formal descriptions of entities (and their key identifiers) are the fabric that application developers then use for building out tools to query, accumulate, analyze and display that data. This also shifts the overall role of programmers in an organization from being central to all aspects of that organization to being secondary tool-builders working with the emerging knowledge space, or ontology, of the organization. This, in turn, has a profound impact upon software methodologies.

- Analytics. Most data science initiatives fail. They fail because the data that analysts need is not in a form that they can use, the data is not given critical context, and the benefits of the analysis are delivered only as “one-off” reports that are expensive to produce, difficult to repurpose, and typically require specific interpretation to be useful for decision making. In a data-driven organization, on the other hand, by the time the data becomes available to the analyst, it has been cleaned, organized and contextualized. This means that analysts can become reporting specialists, can work with tool builders to create more dynamic interpretations, and can do so in a timely manner without the need to spend weeks or months on limited dashboards.

- Matriculation. Data exists for many purposes: not only to describe the outer world (what others are doing outside the organization, such as potential customers or regulatory agencies) but also to locate where resources are within the organization and to make these available to producers. Such resources include schedules, 3D models, simulations (aka games), story originations, provenance, and so forth. As the products that a company creates become more virtual (or develop a digital twin), the data that the company creates exists to support the tools that these creatives need to actually create things. This matrix of support activities is a central part of any organization, and hence this phase is known as the matriculation or scaffolding phase.

- Decision-Making. The ability to manage an organization translates into the need to know, at any given point in the process, the status of these efforts, and the ability to fine-tune these processes with minimal disruption. This means that there is a need to shift away from a project-oriented mode to a process-oriented mode. One impact of this is that data flows need to be monitored as they feed the creation of the enterprise data repositories, and, in order to avoid bottlenecking, such hubs need to be federated, decentralized, and readily mergeable. It requires the advent of dynamic data catalogs and, ultimately, means that the role of various types of data stores is not to serve specific applications, but rather to optimize data storage and retrieval so that they can work more efficiently within a broad enterprise-level data rubric.

Ultimately, it also means moving to a mode where decisions are made based upon smart contracts working in concert with AIs to determine when specific conditions should result in the initiation of actions. In such a situation decision-making becomes increasingly autonomous, with the role of the decision-maker acting as a check upon errant processes.
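As a rough illustration of how a few of these stages fit together, the sketch below mints an identifier from a composite key (Acquisition), lets attributes accumulate on an entity without a predefined schema (Accretion), and checks a condition that would trigger an action (Decision-Making). Everything here is hypothetical and chosen only for illustration: the `mint_identifier` hashing scheme, the `urn:example:` prefix, the `EntityStore` class, and the sample rule are assumptions, not part of any particular product or standard.

```python
import hashlib


def mint_identifier(*key_parts: str) -> str:
    """Derive a stable identifier from a composite key (hypothetical scheme)."""
    # Normalize each part so trivially different spellings map to the same entity.
    joined = "|".join(part.strip().lower() for part in key_parts)
    digest = hashlib.sha256(joined.encode("utf-8")).hexdigest()
    return "urn:example:" + digest[:16]


class EntityStore:
    """Schema-less store: attributes are bound to entities as they become known."""

    def __init__(self):
        self._facts = {}

    def assert_fact(self, entity_id, attribute, value):
        # No model is declared ahead of time; any attribute may be added later.
        self._facts.setdefault(entity_id, {})[attribute] = value

    def lookup(self, entity_id, attribute):
        # Open-world reading: None means "not known yet", not "false".
        return self._facts.get(entity_id, {}).get(attribute)


def run_rules(store, entity_id, rules):
    """Return the names of the actions whose condition holds for the entity."""
    return [action for condition, action in rules if condition(store, entity_id)]


# Usage: acquire an entity, accrete facts about it, then evaluate a rule.
store = EntityStore()
order_id = mint_identifier("ACME Corp", "PO-1138")
store.assert_fact(order_id, "status", "received")
store.assert_fact(order_id, "days_open", 12)

rules = [
    # Hypothetical rule: escalate anything that has been open for more than 10 days.
    (lambda s, e: (s.lookup(e, "days_open") or 0) > 10, "escalate_to_reviewer"),
]
actions = run_rules(store, order_id, rules)
```

The rule returns the name of an action rather than executing it, which leaves room for the human decision-maker to act as the check on errant processes described above.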

Welcome to Post Covid Transformation

The coronavirus SARS-CoV-2, which causes Covid-19, has forced the economy to come slamming to a halt. People have been forced to “shelter in place” as the worst of the virus passes, in order to keep health systems from being overwhelmed. In the ensuing weeks and months the virus is likely to abate, especially once a vaccine can be developed and distributed globally (a daunting task in its own right). However, the damage has been done, and a lot of organizations are going to come out the other side of this (if they come out at all) facing a dramatically changed world.

There are many lessons that are emerging from this period, some of them very uncomfortable indeed.

- The “office” is not only unnecessary but may very well be seen increasingly as a liability.

- Democratized data access is not only achievable but also desirable – it is necessary for people to do their jobs, regardless of where they are or what they are doing.

- Data security is reliant upon trust, not perimeter controls.

- Programmers (tool-builders) were essential to organizations twenty years ago. They occupy a much smaller footprint in today’s world, especially in comparison to the tool users and data curators.

- Data analytics is only meaningful if the end results support either creators or decision-makers.

- Far from protecting jobs, we need to be automating more, while at the same time putting humans into the role of ensuring fairness and data quality in processes and reduced social bias in analytics. Data governance should become simply a byproduct of data strategy.

- We should also recognize that our economic systems will fail if we have no way of ensuring that the benefits of that automation are distributed beyond a small group of powerful investors. That is to say, such transformations need to be aimed at the social level as well as the technical, as they are, in essence, a change in the way that we think about work itself.

- To achieve such digital transformations, we also need to recognize that we can’t continue doing more of the same and expect different results. Digital transformations require that we rethink our relationship to data, which in turn means that we have to give up misconceptions, such as the belief that we can always recover the sunk costs in our data investments.

Summary

The organizations that will successfully make the transition will be ones that are willing to recreate themselves from the ground up, to change the way that they do business. The ones that don’t will disappear. It’s that simple.