This three-part series outlines the challenges an organization’s Board of Directors must address, and the actions it must take, to guide the responsible and ethical deployment of Artificial Intelligence (AI). Part one covered mitigating the impacts of AI Confirmation Bias. In part two, we discuss the potential unintended consequences of AI deployment and how to identify them.

Leaders across various business, technology, social, educational, and government institutions are deeply concerned about the potential negative impacts of the untethered deployment of Artificial Intelligence (AI). Efforts are underway to create policies, regulations, education, and tools that stave off dire actions from nefarious actors and rogue nations who might leverage AI for misinformation, malicious use, cyberattacks, and weaponization. But there are other equally dangerous risks from the careless application of AI, including:

- Confirmation Bias from poorly constructed AI models that deliver irrelevant, biased, or unethical outcomes and undermine long-term operational viability.

- Unintended Consequences that arise when decision-makers act without considering the second- and third- (and fourth- and fifth-…) order ramifications of what “might” go wrong.

In this blog, we discuss what the Board of Directors can do to prevent unintended consequences from the inappropriate or careless application of AI.

Addressing Unintended Consequences

Unintended Consequences are outcomes or effects of a decision that were neither foreseen nor intended when the decision was made.

For a Board of Directors, one of the biggest challenges in implementing AI is proactively ensuring that AI models drive ethical and responsible decisions and outcomes. After-the-fact audits will not suffice: by the time an audit catches a problem, the AI models may already have made decisions that harm the organization’s business viability or expose it to compliance and regulatory risks.

If we had relied on after-the-fact auditing to regulate nuclear power, there’s a good chance we would all be either dead or brightly glowing.

History is full of “the road to hell is paved with good intentions” examples of unintended consequences, such as (Figure 1):

- The SS Eastland was made “safer” by adding more lifeboats, but the extra weight made the ship top-heavy and caused it to capsize, killing more than 800 people.

- The Treaty of Versailles imposed onerous terms on Germany to end World War I, fueling the resentment that helped empower Adolf Hitler and contributed to World War II.

- The Smokey Bear Wildfire Prevention campaign disrupted natural fire cycles, allowing fuel to build up and resulting in mega-fires that inflicted catastrophic damage.

Figure 1: Unintended Consequences: “Good Intentions Gone Wrong”

It is essential for organizations to dedicate time and effort to considering the potential unintended consequences, or “unknown unknowns,” of AI deployments. Doing so helps prevent the adverse outcomes that can arise when AI is deployed without proper consideration. To achieve this, it is necessary to understand the Rumsfeld Knowledge Matrix.

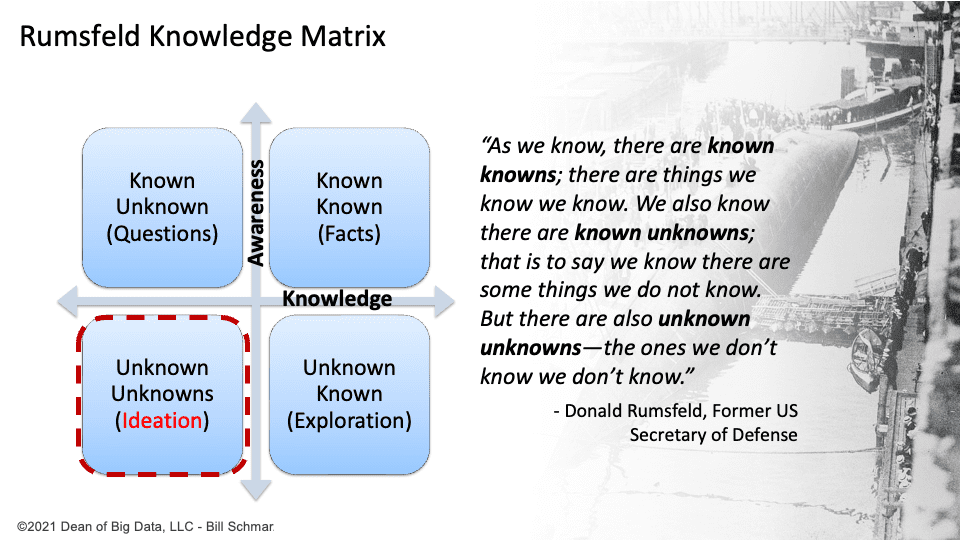

The Rumsfeld Knowledge Matrix is a conceptual framework popularized by Donald Rumsfeld, the former United States Secretary of Defense, to categorize and analyze knowledge and information based on different levels of certainty and awareness. The matrix consists of four quadrants (Figure 2):

- Known knowns: These are things that we know and are aware of. They represent information that is well understood and can be easily articulated. I call these “Facts.”

- Known unknowns: These are things that we know we don’t know. In other words, there are gaps in our knowledge or information that we are aware of and recognize as areas where further research or investigation is needed. We need to ask these “Questions” (see my blog about the importance of mastering the Socratic Method of asking questions).

- Unknown knowns: These are things that we don’t realize we know. This quadrant covers information or knowledge we already have but are unaware of or have yet to recognize. These could include overlooked facts, hidden patterns, or insights that have not been considered. This is an area requiring guided “Exploration.”

- Unknown unknowns: These are scenarios or outcomes that are completely unknown to us, including potential unintended consequences. They represent potential surprises or unforeseen circumstances that could arise from a poorly vetted decision or initiative.

Figure 2: Rumsfeld Knowledge Matrix

To identify potential unknown unknowns, assemble a diverse set of internal and external stakeholders and constituents to explore and brainstorm the potential unintended consequences of a decision or initiative against the following scenarios:

- Unforeseen adverse outcomes: Sometimes, actions intended to bring about a positive change can inadvertently lead to negative consequences. An example is “Ban the Box” (BTB) policies such as California’s, which aimed to reduce racial disparities in employment by preventing employers from asking job applicants about their criminal records on initial applications. However, a study found that these policies actually decreased the employment rate of young, low-skilled black men by 5.1%.

- Unexpected positive outcomes: On the other hand, unintended consequences can also result in positive outcomes. Specific actions or decisions may have unintended benefits that were not initially anticipated. For instance, a new policy to reduce pollution might inadvertently spur innovation and the development of cleaner technologies.

- Ripple effects: Unintended consequences can cascade, triggering a series of additional, often unanticipated, outcomes. These secondary or tertiary consequences can impact different areas or aspects of a system or society.

- Counterproductive results: In some cases, actions to solve a problem can exacerbate it or create new issues altogether. This occurs when the chosen solution fails to account for the situation’s complexity or its unintended side effects.

Remember to embrace your expanding Design Thinking skills as you ideate the unknown unknowns. That means:

- All ideas are worthy of consideration.

- If you don’t have enough “might” moments, you’ll never have any breakthrough moments.

You never know where the best ideas will come from, so empower everyone to help identify and explore potential unintended consequences.

Summary: Board of Directors AI Unintended Consequences Challenge

In part two of this three-part series, we discussed what the Board of Directors needs to know to identify and explore the potential impacts of unintended consequences.

In part three, we will conclude the series with an updated Unintended Consequences Assessment worksheet plus a checklist that the Board of Directors can leverage when advising senior management on mitigating the impacts of AI confirmation bias and the potential unintended consequences of AI deployment.