We are living in a period in which computing has moved from large mainframes to personal computers to the cloud. This steady technological progress has made large-scale automation possible.

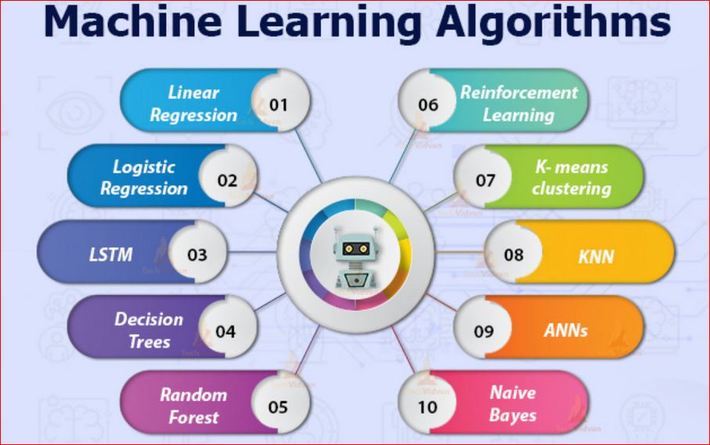

In this article, let's look at a few commonly used machine learning algorithms. They can be applied to almost any kind of data problem.

- Linear Regression

- Logistic Regression

- Decision Tree

- SVM algorithm

- Dimensionality Reduction Algorithms

- Gradient Boosting and AdaBoost algorithms

  - GBM

  - XGBoost

  - LightGBM

  - CatBoost

- Linear Regression

Linear regression estimates real-valued quantities, such as house prices, number of calls, or total sales, from one or more continuous variables. It establishes a relationship between the independent and dependent variables by fitting a best-fit line. This line is called the regression line and is represented by the linear equation Y = a * X + b.

In this equation:

- Y: dependent variable

- a: slope

- X: independent variable

- b: intercept

The coefficients a and b are obtained by minimizing the sum of the squared distances between the data points and the regression line.
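As a minimal sketch of the least-squares fit described above, the following pure-Python snippet computes a and b from the closed-form formulas for a single independent variable. The function name `fit_line` and the sample data are hypothetical and chosen only for illustration.

```python
def fit_line(xs, ys):
    """Return slope a and intercept b that minimize the sum of squared residuals."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance-like and variance-like sums (the 1/n factors cancel out).
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


# Hypothetical data that lies exactly on Y = 2 * X + 1.
sizes = [1.0, 2.0, 3.0, 4.0]
prices = [3.0, 5.0, 7.0, 9.0]
a, b = fit_line(sizes, prices)
print(a, b)
```

With real data the points will not fall exactly on a line, and the same formulas return the line with the smallest total squared error.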

- Logistic Regression

Logistic regression predicts discrete values (mainly binary values such as 0/1, yes/no, or true/false) from a given set of independent variables. In simple terms, it predicts the probability that an event occurs by fitting the data to a logit function, which is why it is also called logit regression.
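To make the idea concrete, here is a small sketch that fits a one-feature logistic model with plain batch gradient descent on the log loss. The optimizer, the learning rate, the number of epochs, and the data (hours studied versus pass/fail) are assumptions made for illustration; libraries typically use more sophisticated solvers.

```python
import math


def sigmoid(z):
    """Map any real number to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit p = sigmoid(w * x + c) by batch gradient descent on the log loss."""
    w, c = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [sigmoid(w * x + c) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_c = sum(errors) / n
        w -= lr * grad_w
        c -= lr * grad_c
    return w, c


# Hypothetical data: hours studied and whether the exam was passed (1) or not (0).
hours = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 4.5]
passed = [0, 0, 0, 0, 1, 1, 1, 1]
w, c = fit_logistic(hours, passed)

p_low = sigmoid(w * 1.0 + c)   # predicted pass probability after 1 hour
p_high = sigmoid(w * 4.0 + c)  # predicted pass probability after 4 hours
print(p_low, p_high)
```

The model outputs a probability; turning it into a yes/no answer requires a cutoff, commonly 0.5.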

The following steps can be tried to improve a logistic regression model:

- including interaction terms

- removing features

- applying regularization techniques

- using a non-linear model

- Decision Tree

Decision trees are widely used for classification problems and are among the most popular machine learning algorithms. They work with both continuous and categorical dependent variables. The algorithm splits the data into groups based on the most significant attributes (independent variables) so that the groups are as distinct as possible.
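The snippet below sketches how a single split might be chosen for one numeric feature. It assumes Gini impurity as the measure of how "distinct" the resulting groups are; other criteria, such as entropy, are also common. The function names and the data are hypothetical.

```python
def gini(labels):
    """Gini impurity of a group: 0.0 means every label in the group is the same."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(values, labels):
    """Return (threshold, weighted impurity) for the best split 'value <= threshold'."""
    best = (None, float("inf"))
    candidates = sorted(set(values))
    # Try the midpoint between each pair of neighboring feature values.
    for lo, hi in zip(candidates, candidates[1:]):
        t = (lo + hi) / 2
        left = [l for v, l in zip(values, labels) if v <= t]
        right = [l for v, l in zip(values, labels) if v > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best[1]:
            best = (t, score)
    return best


# Hypothetical data: customer age and whether the customer bought a product.
ages = [22, 25, 30, 35, 40, 50]
buys = ["no", "no", "no", "yes", "yes", "yes"]
threshold, impurity = best_split(ages, buys)
print(threshold, impurity)  # 32.5 0.0
```

A full tree repeats this search on each resulting group, over all features, until the groups are pure enough or a depth limit is reached.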

- SVM algorithm

This algorithm is a classification method in which each data item is plotted as a point in n-dimensional space (where n is the number of features), with the value of each feature being the value of a particular coordinate. For instance, consider two features: the hair length and the height of an individual. These two variables are first plotted in a two-dimensional space, where every point has two coordinates. The algorithm then looks for the line (in higher dimensions, a hyperplane) that separates the classes with the widest margin. The points closest to that boundary are called support vectors.
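As a rough illustration for the two-feature case, the sketch below trains a linear SVM by sub-gradient descent on the regularized hinge loss. This is one simple way to fit such a model, not the solver used by any particular library. The data stands in for standardized hair length and height, and the values of `lam`, `lr`, and `epochs` are assumptions.

```python
def train_linear_svm(points, labels, lam=0.01, lr=0.01, epochs=1000):
    """Minimize lam/2 * ||w||^2 + mean hinge loss with batch sub-gradient descent."""
    w = [0.0, 0.0]
    b = 0.0
    n = len(points)
    for _ in range(epochs):
        grad_w = [lam * w[0], lam * w[1]]
        grad_b = 0.0
        for (x1, x2), y in zip(points, labels):
            # Only points inside the margin (or misclassified) contribute.
            if y * (w[0] * x1 + w[1] * x2 + b) < 1:
                grad_w[0] -= y * x1 / n
                grad_w[1] -= y * x2 / n
                grad_b -= y / n
        w = [w[0] - lr * grad_w[0], w[1] - lr * grad_w[1]]
        b -= lr * grad_b
    return w, b


def predict(w, b, point):
    """Classify a point by the side of the line w . x + b = 0 it falls on."""
    return 1 if w[0] * point[0] + w[1] * point[1] + b >= 0 else -1


# Hypothetical standardized features: (hair length, height); labels are -1 or +1.
points = [(-2.0, -2.0), (-2.0, -1.0), (-1.0, -2.0),
          (2.0, 2.0), (2.0, 1.0), (1.0, 2.0)]
labels = [-1, -1, -1, 1, 1, 1]
w, b = train_linear_svm(points, labels)

# Approximate support vectors: points whose margin is within 0.1 of the smallest.
margins = [y * (w[0] * x1 + w[1] * x2 + b) for (x1, x2), y in zip(points, labels)]
closest = min(margins)
support_vectors = [p for p, m in zip(points, margins) if m - closest < 0.1]
print(w, b, support_vectors)
```

Only the points near the boundary influence where the separating line ends up, which is why they get a special name.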

- Dimensionality Reduction Algorithms

Over the last few years, huge amounts of data have been captured at every possible stage and analyzed across many sectors. The raw data contains many features, and the major challenge is identifying the most significant variables and patterns. Dimensionality reduction techniques such as Decision Tree, PCA, and Factor Analysis, along with criteria such as the correlation matrix and the missing value ratio, help find the relevant details.
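Two of the criteria mentioned above, the missing value ratio and the correlation matrix, can be sketched as a simple feature filter. This is only one basic approach and is not PCA or Factor Analysis. The thresholds, the function names, and the table are hypothetical.

```python
import math


def missing_ratio(column):
    """Fraction of entries in a column that are missing (None)."""
    return sum(v is None for v in column) / len(column)


def pearson(xs, ys):
    """Pearson correlation over the rows where both values are present."""
    pairs = [(x, y) for x, y in zip(xs, ys) if x is not None and y is not None]
    n = len(pairs)
    mx = sum(x for x, _ in pairs) / n
    my = sum(y for _, y in pairs) / n
    cov = sum((x - mx) * (y - my) for x, y in pairs)
    sx = math.sqrt(sum((x - mx) ** 2 for x, _ in pairs))
    sy = math.sqrt(sum((y - my) ** 2 for _, y in pairs))
    if sx == 0 or sy == 0:
        return 0.0  # a constant column carries no correlation information
    return cov / (sx * sy)


def select_features(table, max_missing=0.4, max_corr=0.95):
    """Drop sparse columns, then drop columns nearly duplicated by a kept one."""
    kept = [name for name, col in table.items() if missing_ratio(col) <= max_missing]
    selected = []
    for name in kept:
        if all(abs(pearson(table[name], table[other])) < max_corr for other in selected):
            selected.append(name)
    return selected


# Hypothetical table: two height columns in different units, a sparse column, and age.
table = {
    "height_cm": [150, 160, 170, 180, 190],
    "height_in": [59.1, 63.0, 66.9, 70.9, 74.8],
    "income": [None, None, None, 40, 55],
    "age": [25, 47, 31, 52, 38],
}
selected = select_features(table)
print(selected)  # ['height_cm', 'age']
```

Here `income` is dropped because most of its values are missing, and `height_in` is dropped because it repeats the information already in `height_cm`.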

- Gradient Boosting and AdaBoost algorithms

GBM and AdaBoost: These are boosting algorithms that are widely used when large volumes of data must be handled to make predictions with high accuracy. AdaBoost is an ensemble learning algorithm that combines the predictive power of several base estimators to improve robustness.
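The way an ensemble combines weak base estimators can be sketched with a small AdaBoost implementation that uses one-feature decision stumps. It covers AdaBoost only; gradient boosting instead fits each new estimator to the gradient of a loss. The stump form, the number of rounds, and the data are assumptions chosen so that no single stump can classify every point.

```python
import math


def stump_predict(x, threshold, sign):
    """A decision stump: predicts `sign` above the threshold and `-sign` otherwise."""
    return sign if x > threshold else -sign


def best_stump(xs, ys, weights):
    """Return (weighted error, threshold, sign) of the stump with the lowest error."""
    values = sorted(set(xs))
    thresholds = [values[0] - 1.0] + [(a + b) / 2 for a, b in zip(values, values[1:])]
    best = None
    for t in thresholds:
        for sign in (1, -1):
            err = sum(w for x, y, w in zip(xs, ys, weights)
                      if stump_predict(x, t, sign) != y)
            if best is None or err < best[0]:
                best = (err, t, sign)
    return best


def adaboost(xs, ys, rounds=3):
    """Train `rounds` stumps, re-weighting the points the previous stumps got wrong."""
    n = len(xs)
    weights = [1.0 / n] * n
    ensemble = []
    for _ in range(rounds):
        err, t, sign = best_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)  # avoid division by zero
        alpha = 0.5 * math.log((1 - err) / err)  # this stump's say in the final vote
        ensemble.append((alpha, t, sign))
        weights = [w * math.exp(-alpha * y * stump_predict(x, t, sign))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble


def predict(ensemble, x):
    """Weighted vote of all stumps."""
    score = sum(alpha * stump_predict(x, t, sign) for alpha, t, sign in ensemble)
    return 1 if score >= 0 else -1


# Hypothetical data: the positive class sits on both sides of the negative class.
xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]
model = adaboost(xs, ys, rounds=3)
predictions = [predict(model, x) for x in xs]
print(predictions)  # [1, 1, -1, -1, 1, 1]
```

Each stump alone misclassifies at least two points, but the weighted vote of three stumps classifies all six correctly.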

XGBoost: This algorithm has high predictive power, which makes it a strong choice when accuracy matters. It supports both tree learning algorithms and linear models.

LightGBM: This is a fast, highly efficient gradient boosting framework based on decision tree algorithms. It is designed to be distributed and offers the following benefits:

- Support for parallel and GPU learning

- Quicker training speed and better efficiency

- Lower memory usage and enhanced accuracy

- Capable of handling large-scale data

CatBoost: This is an open-source machine learning algorithm. It can integrate with deep learning frameworks such as Core ML and TensorFlow, and it works with a variety of data formats.

Anyone seeking a career in machine learning should understand these algorithms and keep deepening their knowledge of them.