- A significant portion of social media content uses Algorithmic Filtering (AF).

- AF results in issues with fake news and polarizing opinions.

- A tweak to AF may be the solution for improving the user experience.

According to a recent Pew Research Center study, 53% of U.S. adults get their news regularly from social media platforms (SMPs) [1]. The news is mixed with content from advertisers, celebrities, friends, and influencers, making it difficult to separate factual from fake and useful from useless. MIT researchers Sarah H. Cen and Devavrat Shah offer a simple solution: smarter algorithmic filtering. In their paper, Regulating algorithmic filtering on social media [2], the authors suggest that the way to combat fake news is to adjust newsfeed algorithms to better reflect the types of interactions users experience in real life.

The “News” Experience

Before the advent of social media, the line between gossip, opinion, and news was much clearer. In England, where I grew up, I picked up a copy of The Guardian or watched the BBC to catch up on the news. If I wanted gossip, I called Aunt Edna, who kept up on all the juicy tidbits. I would only retell a tale if it came from a trusted source like a good friend or trustworthy relative (sorry, Aunt Edna, you weren’t on that list).

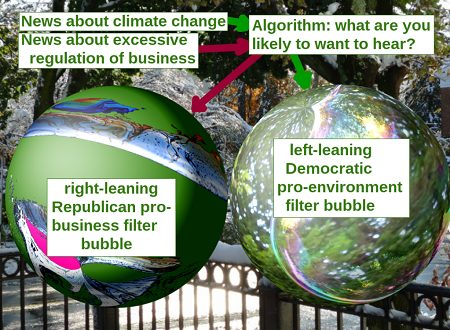

Fast forward to 2021 and the age of social media. Algorithmic filtering (AF) chooses the “news” that you see and don’t see. In other words, the news that ends up in front of you is dictated not by trusted news sources but by big tech companies. While AF has the potential to improve the user experience, it also allows fake news to spread easily. Anyone with a few pennies can set up a legitimate-looking website and tout their opinions; AF might serve you this content simply because it predicts you’ll read it.

The lines have grayed so much that the average user may find it impossible to tell the difference between news and gossip: thanks to shares, reshares and rewrites, the most ridiculous fiction can be labeled as fact. Despite having fact-checking sites like Snopes and AllSides at our fingertips, many people don’t go that extra mile and don’t question what the AF serves up as “News”.

Fixing the Problem

“Online social networks have twisted the flow of information and put the ability to do so in the hands of a few,” says Shah, in an interview on the MIT website [3]. “So, let’s go back to normalcy.”

According to Shah, fixing the problem of misinformation is “doable through algorithms,” simply by tweaking the way they operate to mimic how information spreads in real life: through peer interactions. Shah states that this could be achieved by randomly subsampling posts so that the AF includes “a measured amount of diversity,” pulling news feed content at random from all of a user’s network connections. The general idea is that if a user’s feed pulled only at random from known contacts, that user wouldn’t be served polarizing content on the strength of repeat recommendations.
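To make the idea of random subsampling concrete, here is a minimal Python sketch. It is only an illustration of the general idea described above, not the procedure from Cen and Shah's paper: the function name `sample_feed`, the `posts_by_connection` structure, and the example posts are all made up for this post, and the sketch assumes every post from every connection is equally likely to be picked.

```python
import random


def sample_feed(posts_by_connection, feed_size, seed=None):
    """Return up to `feed_size` posts drawn uniformly at random, without
    replacement, from everything the user's connections have posted.

    Hypothetical helper for illustration only; not the authors' algorithm.
    """
    rng = random.Random(seed)
    # Pool every post from every connection. No engagement score or
    # click prediction is consulted anywhere in this function.
    pool = [
        (author, post)
        for author, posts in posts_by_connection.items()
        for post in posts
    ]
    # Never ask for more posts than the pool actually contains.
    return rng.sample(pool, min(feed_size, len(pool)))


# Made-up example data: three connections with two posts each.
posts_by_connection = {
    "alice": ["holiday photos", "article on local elections"],
    "bob": ["football score", "opinion piece on taxes"],
    "carol": ["recipe", "science explainer"],
}

feed = sample_feed(posts_by_connection, feed_size=3, seed=0)
for author, post in feed:
    print(f"{author}: {post}")
```

Because the draw ignores how likely you are to click, a post that has been reshared a thousand times gets no more weight here than Aunt Edna's recipe. A real platform would presumably blend some of this randomness into its existing ranking rather than replace the ranking outright.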

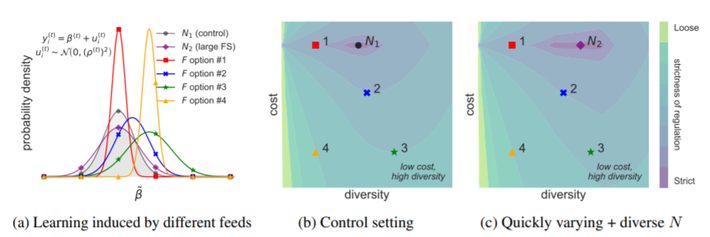

Cen and Shah took steps to mathematically formalize how AF affects user learning and decision making, constructing a regulatory procedure that mitigates the AF’s influence on these factors. The team found a “sweet spot” where the Social Media Platform (SMP) was incentivized to increase content diversity for users, as doing so led to greater rewards (i.e., profits) for the SMP.

Figure 3: Illustrating regulation’s effect on user learning, content diversity, and the SMP’s reward (Cen & Shah [2]).


Should we stop looking at SM for “News”?

It’s great to hear that steps are being taken to address today’s problems with fake news. Tweaking AFs to deal with the drivel and clutter on my News Feed would be a welcome step. However, any tweak to an algorithm that may limit the free flow of information and commerce raises obvious ethical problems. So it may be a while before we see any substantial tweaks implemented.

In the meantime, here’s an even simpler solution: ban social media from calling it a “News” feed, when it clearly isn’t. Seeing as it’s an algorithmically served collection of gossip, news, unsubstantiated opinions and social commentary, why not call it the GNUS instead?

References

News Image at top: Tomwsulcer, CC0, via Wikimedia Commons

[1] Pew: More than eight-in-ten Americans get news from digital devices

[2] Regulating algorithmic filtering on social media

[3] MIT: 3 Questions: Devavrat Shah on curbing online misinformation
