Last year I started developing a face recognition model, beginning with static pictures in Wolfram Mathematica. This year I found that the same job can be done with OpenCV in Python, or by building specific filters in R and applying Weierstrass and Gaussian transformations.

Recognizing different pictures of the same person's face is difficult for many reasons: position and rotation of the face, age, expression, brightness, gamma, contrast, saturation, and obstacles such as hands or hair.

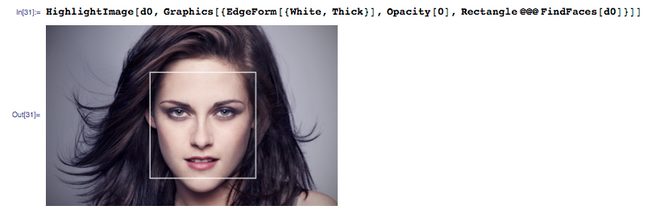

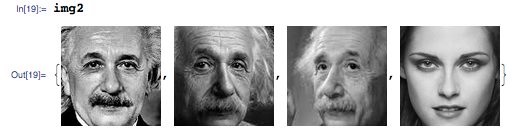

Beginning with a static picture, my idea was to identify Kristen Stewart among 4 possibilities.

Image key points were identified and the face was highlighted:

The image was converted to grayscale and its parameters were adjusted:

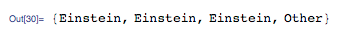
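
As a rough illustration of this preprocessing step, here is a minimal pure-Python sketch. It is not the Mathematica code used for the picture above: the function names `to_grayscale` and `adjust`, the luminance weights, and the order of the adjustments are all assumptions made for the example.

```python
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) pixels (0-255) to rows of luminance values."""
    return [
        [round(0.299 * r + 0.587 * g + 0.114 * b) for (r, g, b) in row]
        for row in rgb_image
    ]


def adjust(gray_image, gamma=1.0, contrast=1.0, brightness=0.0):
    """Apply gamma, then contrast around mid-gray, then a brightness offset.

    Hypothetical helper: the parameter names and the order of operations
    are assumptions, not the settings used in the original experiment.
    """
    adjusted = []
    for row in gray_image:
        new_row = []
        for value in row:
            x = (value / 255.0) ** gamma        # gamma correction on [0, 1]
            x = (x - 0.5) * contrast + 0.5      # stretch around mid-gray
            x = x + brightness                  # shift the whole image
            new_row.append(min(255, max(0, round(x * 255))))  # clamp to 0-255
        adjusted.append(new_row)
    return adjusted
```

With the default parameters `adjust` leaves the image unchanged; a gamma below 1 brightens dark regions, which can help when two photos of the same face were taken under different lighting.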

And then we have the output, based on the key points and K Nearest Neighbors:

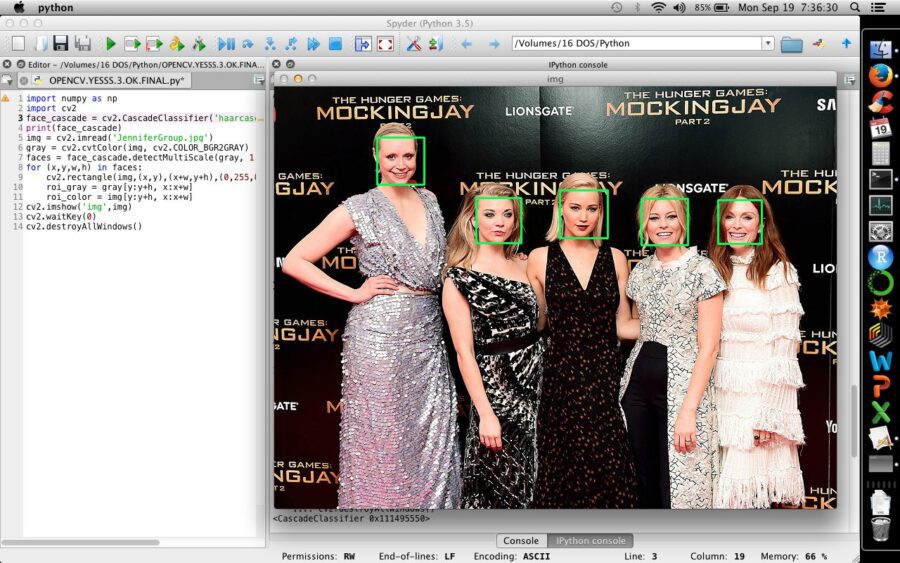
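
To make the K Nearest Neighbors step concrete, here is a small sketch of a majority-vote classifier. It assumes each face has already been reduced to a fixed-length feature vector derived from its key points; the function name `knn_predict`, the labels, and the choice of Euclidean distance are illustrative assumptions rather than the original setup.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Return the majority label among the k training vectors nearest to query.

    train: list of (feature_vector, label) pairs (hypothetical format).
    """
    neighbors = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Toy feature vectors for two hypothetical people.
train = [
    ((0.0, 0.0), "person_a"), ((0.1, 0.2), "person_a"), ((0.2, 0.1), "person_a"),
    ((1.0, 1.0), "person_b"), ((0.9, 1.1), "person_b"), ((1.1, 0.9), "person_b"),
]
prediction = knn_predict(train, (0.15, 0.1))
```

With four candidates, as in the experiment above, the training list would simply hold labeled vectors for all four people.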

Below is an example of finding faces in a group of people in Python using OpenCV:

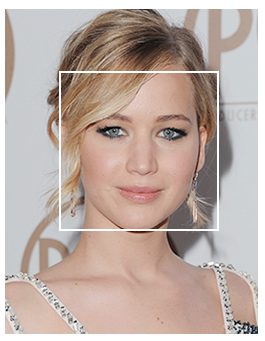
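
A detection like the one in the picture can be sketched with OpenCV's bundled Haar cascade. This is an assumption about the approach, not the exact script behind the image: the function names, the file path you pass in, and the `scaleFactor` and `minNeighbors` values are placeholders, and it requires the `opencv-python` package.

```python
def detect_faces(image_path, scale_factor=1.1, min_neighbors=5):
    """Return a list of (x, y, w, h) face boxes found in the image file."""
    import cv2  # imported here so the helper below works without OpenCV

    image = cv2.imread(image_path)
    if image is None:
        raise FileNotFoundError(image_path)
    gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
    # Frontal-face Haar cascade shipped with the opencv-python package.
    cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
    )
    boxes = cascade.detectMultiScale(
        gray, scaleFactor=scale_factor, minNeighbors=min_neighbors
    )
    return [tuple(int(v) for v in box) for box in boxes]


def sort_left_to_right(boxes):
    """Order face boxes by their x coordinate, e.g. to label a group photo."""
    return sorted(boxes, key=lambda box: box[0])
```

Calling `sort_left_to_right(detect_faces("group.jpg"))` (with a hypothetical file name) would give the faces in reading order. Raising `min_neighbors` usually reduces false positives at the cost of missing some faces.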

Finding faces is not a hard problem; Mathematica and Python do it automatically. The big issue is comparing an existing photo to another one, such as comparing the photo below of Jennifer Lawrence with the Jennifer Lawrence above, where there is clearly a difference in age:

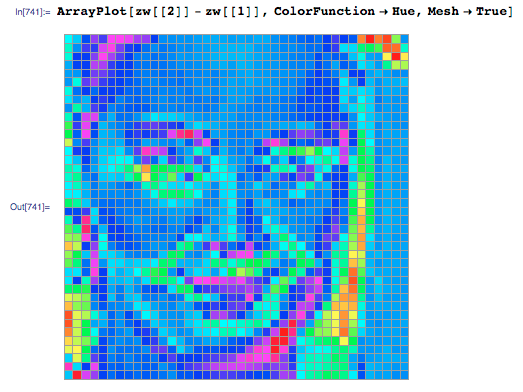

You can find, for instance, the pixel difference between two pictures:
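
One simple way to express the pixel difference is the mean absolute difference between two grayscale images of the same size. The sketch below is an assumption about how such a score could be computed, not the code used for the original comparison, and `mean_abs_difference` is a hypothetical name.

```python
def mean_abs_difference(img_a, img_b):
    """Mean absolute per-pixel difference of two equally sized grayscale images.

    Images are lists of rows of 0-255 values. 0.0 means identical images.
    """
    same_rows = len(img_a) == len(img_b)
    if not same_rows or any(len(ra) != len(rb) for ra, rb in zip(img_a, img_b)):
        raise ValueError("images must have the same shape")
    total = 0
    count = 0
    for row_a, row_b in zip(img_a, img_b):
        for a, b in zip(row_a, row_b):
            total += abs(a - b)
            count += 1
    if count == 0:
        raise ValueError("images must not be empty")
    return total / count


score = mean_abs_difference([[0, 10], [20, 30]], [[0, 20], [20, 10]])
```

A raw pixel difference is very sensitive to alignment and lighting, which is why it is only a first step before comparing key points.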

Then, by comparing Key Points and centroids, the algorithm identifies Jennifer Lawrence:
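
The post does not spell out how key points and centroids were compared, so the following is only one plausible sketch. It assumes every face is described by the same key points in the same order, centers each set on its centroid, rescales it, and picks the gallery entry with the smallest mean point-to-point distance. All names (`centroid`, `normalize`, `shape_distance`, `identify`) are hypothetical.

```python
import math


def centroid(points):
    """Average position of a list of (x, y) key points."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def normalize(points):
    """Center points on their centroid and scale by mean distance to it."""
    cx, cy = centroid(points)
    centered = [(x - cx, y - cy) for x, y in points]
    scale = sum(math.hypot(x, y) for x, y in centered) / len(centered)
    if scale == 0:
        raise ValueError("all key points coincide")
    return [(x / scale, y / scale) for x, y in centered]


def shape_distance(points_a, points_b):
    """Mean distance between corresponding normalized key points."""
    a, b = normalize(points_a), normalize(points_b)
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


def identify(query_points, gallery):
    """Return the gallery name whose key points are closest to the query."""
    return min(gallery, key=lambda name: shape_distance(query_points, gallery[name]))
```

Normalizing by the centroid makes the comparison independent of where the face sits in the picture and how large it is, but not of rotation.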

My next step was to identify a given face in movement, using a YouTube video. Mathematica and Python make it simple to find faces:

The hard work is identifying the actress in movement. In this case, I used the simple difference between images together with matching of image key points. One can also use K Nearest Neighbors or, even better, a Convolutional Neural Network to increase accuracy, but I didn't have a GPU. The result: no mistakes, but the face has to be in a specific position to be recognized.
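
Key point matching across video frames could look roughly like the sketch below. It assumes each frame has already been turned into a list of key point descriptors (plain numeric vectors here), and it uses a nearest-neighbor ratio test to keep only unambiguous matches. The ratio test, the thresholds, and the function names are assumptions added for illustration, not details from the experiment.

```python
import math


def match_descriptors(query_desc, ref_desc, ratio=0.75):
    """Return (query_index, ref_index) pairs that pass the ratio test.

    A match is kept only if the best reference descriptor is clearly
    closer than the second best one.
    """
    matches = []
    for qi, q in enumerate(query_desc):
        ranked = sorted((math.dist(q, r), ri) for ri, r in enumerate(ref_desc))
        if len(ranked) >= 2 and ranked[0][0] < ratio * ranked[1][0]:
            matches.append((qi, ranked[0][1]))
    return matches


def frames_with_face(frame_descriptors, ref_desc, min_matches=2):
    """Indices of frames with at least min_matches matches to the reference."""
    return [
        index
        for index, desc in enumerate(frame_descriptors)
        if len(match_descriptors(desc, ref_desc)) >= min_matches
    ]
```

A strict threshold like this favors precision: frames where the face is turned away produce few confident matches and are skipped, which is consistent with a recognizer that makes no mistakes but only fires for a specific pose.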

Another possibility is to do sentiment analysis based on face recognition:

Check the level of stress in the faces:

Then assign a specific centroid to each feeling so that faces can be classified. The Euclidean distances from the face's centroid to the feeling centroids give the amount of each feeling. For instance, a person's centroid can be closest to happy but also fairly close to angry, so she is 80% happy and 15% angry. This finding creates a huge opportunity to measure consumer confidence, emotional involvement, and satisfaction.
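
The text does not say how distances are turned into percentages, so the sketch below makes an explicit assumption: each feeling gets a weight equal to the inverse of its Euclidean distance, and the weights are normalized to sum to 1. The function name `emotion_shares` and the toy centroids are hypothetical.

```python
import math


def emotion_shares(face_vector, emotion_centroids):
    """Map a face vector to per-feeling shares that sum to 1.

    Uses inverse-distance weighting (an assumption): the closer the face
    is to a feeling's centroid, the larger that feeling's share.
    """
    distances = {
        name: math.dist(face_vector, center)
        for name, center in emotion_centroids.items()
    }
    exact = [name for name, d in distances.items() if d == 0]
    if exact:
        # The face sits exactly on one or more centroids.
        return {name: (1.0 / len(exact) if name in exact else 0.0) for name in distances}
    weights = {name: 1.0 / d for name, d in distances.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


centroids = {"happy": (0.0, 0.0), "angry": (4.0, 0.0), "sad": (0.0, 4.0)}
shares = emotion_shares((1.0, 0.0), centroids)
```

Here the face is closest to the happy centroid, so happy receives the largest share, followed by angry and then sad. Other mappings, such as a softmax over negative distances, would give different percentages for the same distances.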

This last model used a machine learning technique (K Nearest Neighbors). Because the output was known, pictures were used to train the model as a supervised machine learning task.