Is there a single “best” way to visualize data in a particular scenario and for a particular audience, or are there multiple “good enough” ways?

That’s the debate that has resurfaced on Stephen Few’s and Cole Nussbaumer’s blogs recently.

In summary, Stephen says, “Is there a best solution in a given situation? You bet there is.”

In contrast, Cole says, “For me, though, it is possible to have multiple varying visuals that may be equally effective”.

Could Both Be Right?

This is going to sound strange, but I think both are right. There is room for both approaches in the field of data visualization. Let me explain.

Lucky for us, really smart people have been studying how to choose between a variety of alternatives for over a century now. Decision-making of this sort is the realm of operations research (also called “operational research,” “management science” and “decision science”). Another way of asking the lead-in question is:

When choosing how to show data to a particular audience, should I keep looking until I find a single optimum solution, or should I stop as soon as I find one of many that achieves some minimum level of acceptability (also called the “acceptability threshold” or “aspiration level”)?

The former approach is called optimization, and the latter was given the name “satisficing” (a combination of the words satisfy and suffice) by Nobel laureate Herbert A. Simon in 1956.
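To make the distinction concrete, here is a minimal Python sketch of the two search strategies. The function names, the chart types, and every score are hypothetical, invented purely for illustration; nobody has measured these numbers.

```python
from typing import Callable, Iterable, Optional


def optimize(candidates: Iterable[str], payoff: Callable[[str], float]) -> str:
    """Score every candidate and return the single highest-scoring one."""
    return max(candidates, key=payoff)


def satisfice(
    candidates: Iterable[str],
    payoff: Callable[[str], float],
    aspiration_level: float,
) -> Optional[str]:
    """Return the first candidate that clears the aspiration level, else None."""
    for candidate in candidates:
        if payoff(candidate) >= aspiration_level:
            return candidate  # stop searching as soon as one is good enough
    return None


# Hypothetical payoff scores (assumed values, not measured results).
HYPOTHETICAL_SCORES = {
    "pie chart": 0.55,
    "stacked bar": 0.72,
    "bar chart": 0.90,
    "dot plot": 0.88,
}


def score(chart_type: str) -> float:
    return HYPOTHETICAL_SCORES[chart_type]


chart_types = list(HYPOTHETICAL_SCORES)  # examined in the order listed above
best = optimize(chart_types, score)
good_enough = satisfice(chart_types, score, aspiration_level=0.70)
print(best)
print(good_enough)
```

With these made-up numbers, `optimize` scores all four options before settling on the bar chart, while `satisfice` stops at the stacked bar, the second option it looks at, because that one already clears the 0.70 threshold.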

So which approach should we take? Should we optimize or satisfice when visualizing data?

I believe there is room for both approaches. Which approach we take depends on three factors:

- Whether the decision problem is tractable

- Whether all of the information is available

- Whether we have time and resources to get the necessary information

But What Is the ‘Payoff Function’ for Data Visualizations?

This is a critical question, and it’s where I think some of the debate stems from. Part of the challenge in ranking alternative solutions to a data-visualization problem is determining what variables go into the payoff function, and their relative weight or importance. The payoff function is how we compare alternatives. Which choice is better? Why is it better? How much better?

Stephen says, “We can judge the merits of a data visualization by its ability to make the information as easy to understand as possible.” By stating this, he seems to me to be proposing a particular payoff function: increased comprehensibility = increased payoff.

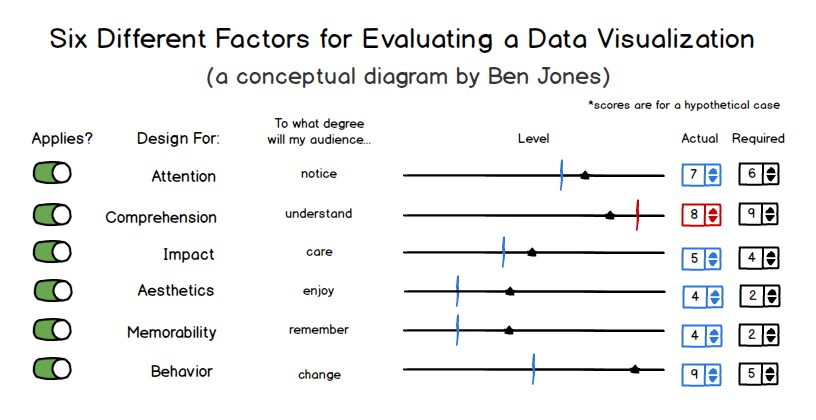

But is comprehensibility the only variable that matters (did our audience accurately and precisely understand the relative proportions?), or should other variables be factored in as well? Candidates include attention (did our audience take notice?), impact (did they care?), aesthetics (did they find the visuals appealing?), memorability (did they remember the medium and/or the message some time into the future?) and behavior (did they take some desired action as a result?).

Here’s a visual that shows how I tend to think about measuring payoff, or success, of a particular solution with hypothetical scores (and yes, I’ve been accused of over-thinking things many times before):

It’s pretty easy to conceive of situations like the following, and I’d venture to say that most of us have experienced one firsthand: a particular visualization type afforded increased precision of comparison, but that extra precision wasn’t necessary for the task at hand, and the visualization was inferior in some other respect that doomed our efforts to failure.

Comprehensibility may be the single most important factor in data visualization, but I don’t agree that it’s the only factor we could potentially be concerned with. Not every data visualization scenario requires ultimate precision, just as engineers don’t specify the same tight tolerances for a $15 scooter as they do for a $450 million space shuttle. Also, visualization types can make one type of comparison easier (say, part-to-whole) but another comparison more difficult (say, part-to-part).
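One way to picture a payoff function with more than one variable is as a weighted average. The sketch below is only an illustration under loud assumptions: the 0-to-10 scale, the two candidate visuals, every score, and both sets of weights are hypothetical. It is not a validated scoring method.

```python
# Criteria named in this article; the weighting scheme itself is an assumption.
CRITERIA = (
    "comprehensibility",
    "attention",
    "impact",
    "aesthetics",
    "memorability",
    "behavior",
)


def payoff(scores: dict, weights: dict) -> float:
    """Weighted average of the criterion scores (weights need not sum to 1)."""
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(weights[c] * scores[c] for c in CRITERIA) / total_weight


# Hypothetical scores on a 0-10 scale (invented for illustration).
bar_chart = {
    "comprehensibility": 9, "attention": 4, "impact": 5,
    "aesthetics": 5, "memorability": 4, "behavior": 5,
}
infographic = {
    "comprehensibility": 6, "attention": 9, "impact": 8,
    "aesthetics": 8, "memorability": 8, "behavior": 6,
}

# Payoff function 1: comprehensibility is the only variable that counts.
comprehension_only = {c: 0 for c in CRITERIA}
comprehension_only["comprehensibility"] = 1

# Payoff function 2: all six criteria count equally.
balanced = {c: 1 for c in CRITERIA}

for label, weights in (("comprehension only", comprehension_only),
                       ("balanced", balanced)):
    print(label, payoff(bar_chart, weights), payoff(infographic, weights))
```

With these assumed numbers the bar chart wins when comprehensibility is all that counts, and the infographic wins when the six criteria are weighted equally. The ranking depends on the weights, which is exactly why agreeing on the payoff function matters before arguing about which chart is “best.”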

Trade-Offs Abound

What seems clear, then, is that if we want to optimize for all of these variables (and likely others) for our particular scenario and audience, then we’ll need to do a lot of work and it will take a lot of time. If the audience is narrowly defined (say, the board of directors of a specific non-profit organization), then we simply can’t test all of the variables ahead of time (behavior, for example: what will they do?). We have to forge ahead with imperfect information and accept something called bounded rationality, the idea that decision-making involves inherent limitations in our knowledge. That means we’ll have to pick something that is “good enough.”

And if we get the data at 9:30 a.m. and the meeting is at 4 p.m. on the same day? Running a battery of tests often isn’t practical.

But what if we feel that optimization is critical in a particular case? We can start by simplifying things for ourselves, focusing on just one or two input variables, making some key assumptions about who our audience will be, what their state of mind will be when we present to them, and how their reactions will be similar to or different from the reactions of a test audience. We reduce the degrees of freedom and optimize a much simpler equation. I’m all for knowing which chart types are more comprehensible than others. In a pinch, this is really good information to have at our disposal.

There’s Room for Both Approaches

Herbert A. Simon noted in his Nobel Prize lecture that “decision-makers can satisfice either by finding optimum solutions for a simplified world, or by finding satisfactory solutions for a more realistic world. Neither approach, in general, dominates the other, and both have continued to coexist in the world of management science.”

I believe both should coexist in the world of data visualization, too. We’ll all be better off if people continue to test and find optimum visualizations for simplified and controlled scenarios in the lab. And we’ll be better off if people continue to forge ahead and create good-enough visualizations in the real world, taking into account a broader set of criteria and embracing the unknowns and messy uncertainties of communicating with other thinking and feeling human minds.

Note: This article first appeared on the Tableau blog.