As COVID-19 rampages across the globe, it is altering everything in its wake. For example, we are spending on essentials and not on discretionary categories; we are saving more and splurging less; work-life balance has a deeper focus on mental health; we are staying home more and traveling less. Our priorities have changed. If you look at this unfolding scenario wearing a data hat, the facts and knowledge we relied upon to forecast markets have been rendered practically useless.

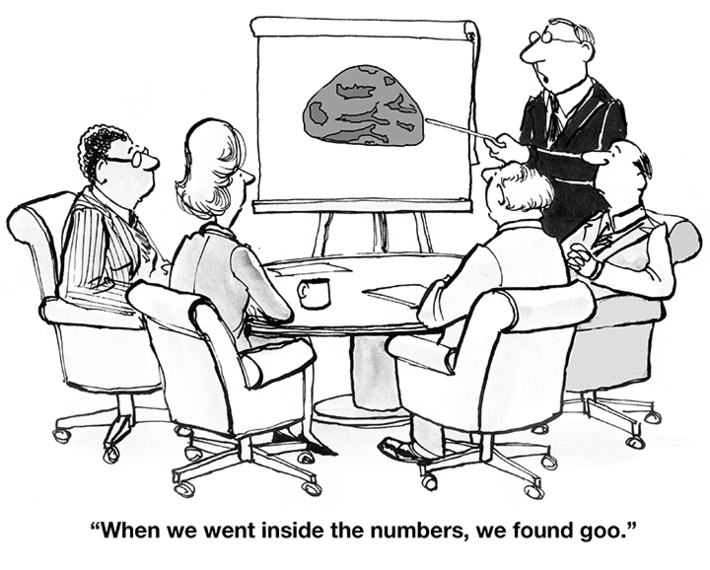

We saw evidence of this in late March when the pandemic took root in western nations. There was a surge in demand for toilet paper, fueled by panic-buying, leading to an 845% increase in sales over last year.[i] The most powerful analytic engines belonging to the world's largest retailers could not forecast the demand. The reason: models used by analytical engines are trained on existing data, and there were no data points available to say, "Here is COVID-19; expect a manic demand for toilet paper." Businesses know that their investments in digital technology turned out to be the silver lining in the new normal, but they also learnt that depending on the current stockpile of data can lead to blind spots, skewed decisions and lost opportunities.

While the pandemic will leave a profound impact on how the future shapes up, it is providing data scientists with plenty to think about. They know that the traditional attributes of data need to be augmented to deliver dependable and usable insights, to deliver personalization and to forecast the future with confidence.

When the underlying data changes, the models must change. For example, in the wake of a crisis, consumers would normally take on more credit lines to tide over the emergency. But they aren't doing that, because they know that their jobs are at risk. They are instead reducing spending and dipping into their savings. Here is another example: supply chain data is no longer valid, and planners know the pitfalls of using existing data. "It is a dangerous time to depend on (existing) models," cautions Shalain Gopal, Data Science Manager at ABSA Group, the South Africa-based financial services organization. She believes that organizations should not be too hasty to act on information (data) that could be half-baked.

There is good reason to be wary of the data organizations are using. Models are trained on normal human behavior. Given the new developments, they must be retrained on data that reflects the new normal to deliver dependable outcomes. Gopal says that models are fragile, and they perform badly when they have to handle data that is different from what was used to train them. "It is a mistake to assume that once you set it up (the data and the model) you can walk away from it," she says.
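One way to notice that incoming data no longer resembles the training data is to compare the two distributions directly. The sketch below is illustrative only and is not a method described by the authors: it computes a Population Stability Index (PSI) for a single feature using the Python standard library. The function name, the bin count, the sample sales figures and the 0.25 alert threshold (a commonly quoted rule of thumb) are all assumptions made for the example.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    recent: Sequence[float],
    n_bins: int = 5,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one feature; larger values mean a bigger shift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant baseline

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(recent)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Hypothetical weekly unit sales: the training period vs. a panic-buying period.
training_sales = [100, 102, 98, 101, 99, 103, 97, 100, 104, 96]
pandemic_sales = [300, 420, 510, 480, 390]

psi_same = population_stability_index(training_sales, training_sales)
psi_shift = population_stability_index(training_sales, pandemic_sales)

ALERT_THRESHOLD = 0.25  # assumed rule of thumb, tune per use case
print(f"PSI vs. itself: {psi_same:.3f}")
print(f"PSI vs. pandemic data: {psi_shift:.3f}")
print("retrain recommended" if psi_shift > ALERT_THRESHOLD else "model inputs look stable")
```

A check like this does not say what the new model should be; it only flags that the data a model was trained on may no longer describe the present.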

There are 5 key steps to accelerating Digital Transformation in the new normal, and they dictate how an organization sources and uses data. These provide a way to reimagine data and analytics that lays the foundation for an intelligent enterprise and helps derive maximum insights from data:

- Build a digitally enabled war room for real-time transparency, responsiveness and decision-making

- Overhaul forecasting to adapt to the rapidly changing environment with intelligent scenario-planning

- Rebuild customer trust with personalized digital experiences

- Invest in technology for remote working, operational continuity and security

- Accelerate intelligent automation using data

Events like the Great Depression, 9/11, Black Monday, the 2008 financial crisis, and now the COVID-19 pandemic, are opportunities to create learning models. Once the Machine Learning system ingests what the analytical models should see, forecasting erratic events becomes easier. This implies that organizations must build the ability to maintain and retrain the models and create the right test data with regularity.

ITC Infotech recommends 6 steps to reimagine the data and analytics approach of an organization in the new normal:

- Harmonize and standardize the quality of data

- Enable unified data access across the enterprise

- Recalibrate data models on a near real-time basis

- Amplify data science

- Take an AI-enabled platform approach

- Adopt autonomous learning
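To illustrate why recalibrating models on a near real-time basis matters, the following minimal sketch compares a forecast frozen at training time with one that is re-estimated from the most recent observations before every prediction. It is a toy under stated assumptions, not ITC Infotech's implementation: the "model" is just a mean, and the demand series, window size and function names are hypothetical.

```python
from statistics import mean
from typing import List, Sequence


def static_forecasts(history: Sequence[float], train_size: int) -> List[float]:
    """Forecast every later period with a mean frozen at training time."""
    frozen = mean(history[:train_size])
    return [frozen for _ in history[train_size:]]


def rolling_forecasts(
    history: Sequence[float], train_size: int, window: int
) -> List[float]:
    """Re-estimate the mean from the latest `window` observations before each forecast."""
    return [
        mean(history[max(0, t - window):t])
        for t in range(train_size, len(history))
    ]


def mean_abs_error(forecasts: Sequence[float], actuals: Sequence[float]) -> float:
    """Average absolute gap between forecasts and what actually happened."""
    return mean(abs(f - a) for f, a in zip(forecasts, actuals))


# Hypothetical weekly demand: stable at first, then an abrupt regime change.
demand = [100, 102, 98, 101, 99, 100, 300, 310, 295, 305, 300, 298]
train_size, window = 6, 3
actuals = demand[train_size:]

static_mae = mean_abs_error(static_forecasts(demand, train_size), actuals)
rolling_mae = mean_abs_error(rolling_forecasts(demand, train_size, window), actuals)

print(f"static model MAE:       {static_mae:.1f}")
print(f"recalibrated model MAE: {rolling_mae:.1f}")
```

The recalibrated forecast still misses the first weeks of the shock, since no history predicts it, but it catches up within a few periods while the frozen forecast stays wrong indefinitely.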

The ability to make accurate predictions and take better decisions does not depend solely on connecting the data dots; it depends on the quality, accuracy and completeness of the data. Organizations that bring data to the forefront of their operations also know that it is important to understand which dataset is right and what problem the data is being used to solve. In effect, data and analytics have many moving parts. These have become especially important in the light of the changes being forced by COVID-19. Now, there is a rare window of opportunity in which organizations can rapidly adjust their approach to data and gain an advantage that conventional business wisdom cannot match.

[i] https://www.chron.com/business/article/Toilet-paper-demand-shot-up-…

Co-authored by:

Shalain Gopal

Data Science Manager, ABSA Group

Kishan Venkat Narasiah

General Manager, DATA, ITC Infotech