The Jupyter notebook at this link contains a tutorial that explains how to use the lxml package and XPath expressions to extract data from a webpage.

The tutorial consists of two sections:

- A basic example that demonstrates the process of downloading a webpage, extracting data with lxml and XPath, and analysing it with pandas

- A comprehensive review of XPath expressions, covering topics such as “axes”, “operators”, etc.
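As a taste of what the review covers, here is a minimal sketch of two axes (`ancestor::` and `following-sibling::`) and a few operators (`>=`, `and`, `|`) evaluated with lxml. It assumes lxml is installed, and the `library` document is made up purely for illustration.

```python
from lxml import etree

# A small, made-up XML document used only to illustrate axes and operators.
XML = b"""<library>
  <shelf name="fiction">
    <book year="1999"><title>Alpha</title></book>
    <book year="2005"><title>Beta</title></book>
  </shelf>
  <shelf name="science">
    <book year="2012"><title>Gamma</title></book>
  </shelf>
</library>"""

root = etree.fromstring(XML)

# Axis: ancestor:: walks up the tree from the matched <title> to its <shelf>.
shelf_of_gamma = root.xpath('//title[text()="Gamma"]/ancestor::shelf/@name')

# Axis: following-sibling:: selects the later <book> siblings of the "Alpha" book.
after_alpha = root.xpath('//book[title="Alpha"]/following-sibling::book/title/text()')

# Operators: numeric comparisons on an attribute combined with a boolean "and".
recent_fiction = root.xpath(
    '//shelf[@name="fiction"]/book[@year >= 2000 and @year < 2010]/title/text()'
)

# Operator: "|" returns the union of two node-sets.
union = root.xpath(
    '//shelf[@name="science"]//title/text() | //book[@year="1999"]/title/text()'
)

print(shelf_of_gamma)  # ['science']
print(after_alpha)     # ['Beta']
print(recent_fiction)  # ['Beta']
print(union)
```

Attribute and text results come back as string subclasses, so they can be compared with ordinary Python strings.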

The “Basic Example” section below goes through each of these steps to illustrate the process. Downloading a page requires an HTTP GET request, which I have made with the requests package. Parsing the page and navigating the tree with XPath expressions are handled by the lxml package. Cleaning and data analysis were performed with string manipulation and the pandas package. The appendix contains utility functions that support the analysis but are not central to it. Note: the cells in the appendix must be run first!
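The download-and-parse step can be sketched roughly as below. This is a minimal sketch, assuming the `requests` and `lxml` packages are installed; `fetch_page`, `parse_page` and the 10-second timeout are hypothetical choices for illustration, not code taken from the notebook.

```python
from lxml import html


def parse_page(content):
    """Parse raw HTML (bytes or str) into an lxml element tree."""
    return html.fromstring(content)


def fetch_page(url, timeout=10):
    """Download a page with an HTTP GET and return the parsed tree.

    requests is imported inside the function so that parse_page can be
    used (and tested) without requests installed.
    """
    import requests

    response = requests.get(url, timeout=timeout)
    response.raise_for_status()  # raise an error on 4xx/5xx responses
    # .content holds the raw bytes, letting lxml honour any charset the page declares.
    return parse_page(response.content)


# Offline usage example: parse a literal page instead of downloading one.
doc = parse_page(b"<html><head><title>Demo</title></head><body><p>Hi</p></body></html>")
print(doc.xpath("//title/text()"))  # ['Demo']
```

Keeping the parsing separate from the network call makes it easy to try XPath expressions on saved HTML without re-downloading the page.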

The “xpath Tutorial” section below is based on the W3Schools XPath tutorial, with the XPath queries run through the lxml package.
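The basic path expressions from that tutorial translate directly into calls to `.xpath()` on an lxml element. The sketch below uses a cut-down bookstore document modelled on the W3Schools example; its contents are illustrative only.

```python
from lxml import etree

# Illustrative bookstore document in the style of the W3Schools examples.
BOOKSTORE = b"""<bookstore>
  <book category="cooking">
    <title lang="en">Everyday Italian</title>
    <price>30.00</price>
  </book>
  <book category="web">
    <title lang="en">Learning XML</title>
    <price>39.95</price>
  </book>
</bookstore>"""

root = etree.fromstring(BOOKSTORE)

# Absolute path: the text of every <title> under every <book>.
all_titles = root.xpath("/bookstore/book/title/text()")

# Predicates: positions are 1-based, and last() picks the final match.
first_title = root.xpath("/bookstore/book[1]/title/text()")
last_category = root.xpath("/bookstore/book[last()]/@category")

# "//" searches at any depth; "@" selects attributes.
langs = root.xpath("//title/@lang")

# Predicate with a comparison on a child element's value.
pricey = root.xpath("/bookstore/book[price > 35.00]/title/text()")

print(all_titles)     # ['Everyday Italian', 'Learning XML']
print(first_title)    # ['Everyday Italian']
print(last_category)  # ['web']
print(pricey)         # ['Learning XML']
```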

lxml general information

The lxml package is a Pythonic binding for the C libraries libxml2 and libxslt, and its API is mostly compatible with the well-known ElementTree API. At the time of writing, the latest release worked with all CPython versions from 2.6 to 3.6.
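A small sketch of what that compatibility means in practice: the same ElementTree-style `fromstring`, `findall` and `get` calls run against both the standard library's `xml.etree.ElementTree` and `lxml.etree`, while full XPath 1.0 through `.xpath()` is only available on lxml elements. The sample XML is hypothetical.

```python
import xml.etree.ElementTree as ET

from lxml import etree

# Hypothetical sample document.
XML = "<root><item id='a'>1</item><item id='b'>2</item></root>"

# The same ElementTree-style calls work with either implementation.
ids_by_library = {}
for name, module in (("xml.etree.ElementTree", ET), ("lxml.etree", etree)):
    root = module.fromstring(XML)
    ids_by_library[name] = [item.get("id") for item in root.findall("item")]

# Full XPath 1.0 (functions such as sum(), axes, arithmetic) is an lxml extension;
# standard-library elements have no .xpath() method.
lxml_root = etree.fromstring(XML)
total = lxml_root.xpath("sum(//item)")  # XPath numbers come back as Python floats

print(ids_by_library)
print(total)  # 3.0
```

The standard library's `find`/`findall` accept only a limited XPath subset, which is one practical reason to reach for lxml when scraping.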

For more information about this package, including installation notes, visit the links below:

Basic Example

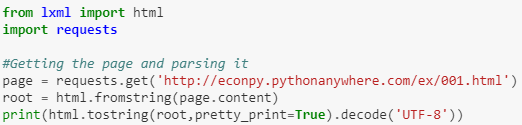

Downloading and Parsing

The code below uses the requests package to download an HTML page from the internet. The lxml.html module is then used to parse the downloaded content into an element tree that lxml can manipulate. The page has been “pretty printed” for reference. The data is then extracted with an XPath expression evaluated by lxml, cleaned, loaded into a pandas DataFrame and used to produce a simple bar plot.
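The parse, pretty-print, extract, clean and load steps can be sketched offline as follows. This is a minimal sketch, assuming lxml and pandas are installed; the HTML table and its figures are hypothetical stand-ins for a downloaded page (in the notebook the content would come from `requests.get(url).content`).

```python
import pandas as pd
from lxml import etree, html

# Hypothetical page content standing in for a downloaded page.
PAGE = """<html><body>
<table id="populations">
  <tr><th>City</th><th>Population</th></tr>
  <tr><td> Springfield </td><td>1,200</td></tr>
  <tr><td>Shelbyville</td><td> 950 </td></tr>
</table>
</body></html>"""

tree = html.fromstring(PAGE)

# Pretty-print the parsed tree for reference.
print(etree.tostring(tree, pretty_print=True, encoding="unicode"))

# XPath: data rows only ("tr[td]" skips the header row, whose cells are <th>).
# "//tr" matches rows whether or not the parser inserted a <tbody>.
rows = tree.xpath('//table[@id="populations"]//tr[td]')

records = []
for row in rows:
    city, population = [cell.text_content() for cell in row.xpath("./td")]
    # Cleaning: trim whitespace and drop thousands separators before converting.
    records.append({
        "city": city.strip(),
        "population": int(population.strip().replace(",", "")),
    })

df = pd.DataFrame(records)
print(df)
```

With matplotlib also installed, `df.plot.bar(x="city", y="population")` turns the cleaned DataFrame into a simple bar plot.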

Read the full tutorial, with source code, here.