This is the first part of a 2-part series on the growing importance of teaching Data and AI literacy to our students. This material will be part of a module I am teaching at Menlo College, but I wanted to share it as a blog first to help validate the content before presenting it to my students.

Wow, what an interesting dilemma. Apple plans to introduce new iPhone software that uses artificial intelligence (AI) to churn through the vast collection of photos that people have taken with their iPhones to detect and report child sexual abuse. See the Wall Street Journal article “Apple Plans to Have iPhones Detect Child Pornography, Fueling Priva…” for more details on Apple’s plan.

Apple has a strong history of working to protect its customers’ privacy. The iPhone’s encryption is notoriously difficult to break, which has repeatedly put Apple at odds with the US government. For example, the US Attorney General asked Apple to unlock the encrypted iPhones used by a Saudi aviation student who killed three people in a December 2019 attack at a Florida Navy base. In 2016, the Justice Department pushed Apple to create a software update that would break the iPhone’s privacy protections to gain access to a phone linked to a dead gunman responsible for a 2015 terrorist attack in San Bernardino, Calif. Time and again, Apple has refused to build tools that break its iPhone’s encryption, saying such software would undermine user privacy.

In fact, Apple has a new commercial that promotes its focus on consumer privacy (Figure 1).

Figure 1: Apple iPhone Privacy Commercial

Now, stopping child pornography is certainly a top societal priority, but at what cost to privacy? This is one of those topics where the answer is not black and white. Several questions arise, including:

- How much personal privacy is one willing to give up in an effort to halt this abhorrent behavior?

- How much do we trust the organization (Apple in this case) in its use of the data to stop child pornography?

- How much do we trust that the results of the analysis won’t get into unethical players’ hands and be used for nefarious purposes?

And let’s be sure that we have thoroughly vetted the costs associated with the AI model’s False Positives (accusing an innocent person of child pornography) and False Negatives (missing people who are guilty of child pornography), a topic that I’ll cover in more detail in Part 2.

What is Data Literacy

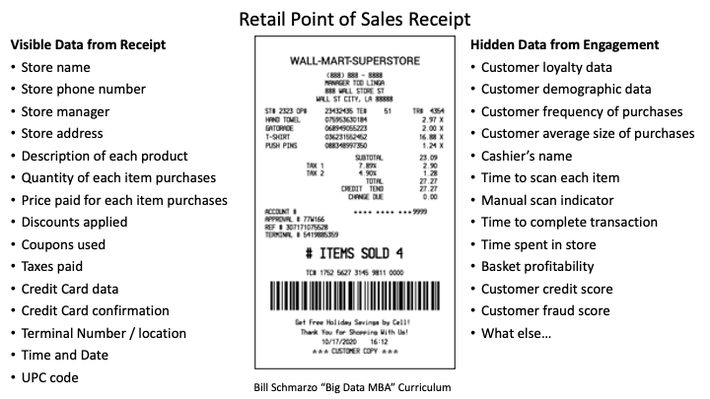

Data literacy starts with understanding what data is. Data is defined as the facts and statistics collected to describe an entity (height, weight, age, location, origin, etc.) or an event (purchase, sales call, manufacturing, logistics, maintenance, marketing campaign, social media post, call center transaction, etc.). See Figure 2.

Figure 2: Visible and Hidden Data from Grocery Store Transaction

But not all data is readily visible to the consumer. For example, when the Point-of-Sale (POS) transaction on the right side of Figure 2 is merged with the customer loyalty data, additional data that the consumer may not be aware of is also captured and/or derived.
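To make the visible/hidden distinction concrete for students, here is a minimal Python sketch. It assumes hypothetical record layouts: `PosTransaction`, `LoyaltyMember`, `enrich`, and all sample values are illustrative names and data, not any retailer’s actual schema. The sketch joins one POS transaction to a loyalty-program record on the loyalty card number and derives attributes that never appear on the receipt.

```python
from dataclasses import dataclass
from datetime import date, datetime


# Hypothetical POS record: the "visible" data printed on the receipt.
@dataclass
class PosTransaction:
    loyalty_id: str
    store_id: str
    timestamp: datetime
    items: list[tuple[str, float]]  # (product, price)
    coupon_used: bool


# Hypothetical loyalty-program record captured when the customer signed up.
@dataclass
class LoyaltyMember:
    loyalty_id: str
    name: str
    zip_code: str
    birth_date: date


def enrich(txn: PosTransaction, members: dict[str, LoyaltyMember]) -> dict:
    """Join a POS transaction to loyalty data and derive 'hidden' attributes."""
    member = members[txn.loyalty_id]  # the join key is the loyalty card number
    ts = txn.timestamp
    born = member.birth_date
    # Age on the purchase date (subtract 1 if the birthday hasn't happened yet).
    age = ts.year - born.year - ((ts.month, ts.day) < (born.month, born.day))
    return {
        # Visible on the receipt
        "store_id": txn.store_id,
        "basket_total": round(sum(price for _, price in txn.items), 2),
        "coupon_used": txn.coupon_used,
        # Revealed only by the loyalty join
        "name": member.name,
        "home_zip": member.zip_code,
        # Derived from the combination of both sources
        "age_at_purchase": age,
        "day_of_week": ts.strftime("%A"),
        "hour_of_day": ts.hour,
    }


# Hypothetical sample data for illustration only.
members = {
    "L-1001": LoyaltyMember("L-1001", "Pat Example", "94027", date(1985, 6, 15)),
}
txn = PosTransaction(
    loyalty_id="L-1001",
    store_id="S-12",
    timestamp=datetime(2021, 8, 7, 18, 30),
    items=[("diapers", 24.99), ("baby formula", 31.50)],
    coupon_used=True,
)

print(enrich(txn, members))
```

The receipt itself says nothing about the shopper’s name, home ZIP code, or age; those come from the loyalty join. And a basket of diapers and baby formula, bought on a Saturday evening, already hints at the shopper’s life stage.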

It is the combination of visible and hidden data that organizations (grocery stores in this example) use to identify customer behavioral and performance propensities (an inclination or natural tendency to behave in a particular way) such as:

- What products do you prefer? And which ones do you buy in combination?

- When and where do you prefer to shop?

- How frequently do you use coupons, and for what products?

- How much does price impact your buying behaviors?

- To what marketing treatments do you tend to respond?

- Do the combinations of products indicate your life stage?

- Do you change your purchase patterns based on holidays and seasons?
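Several of the propensity questions above can be approximated, very crudely, with simple counts. The following is a minimal sketch that assumes a hypothetical purchase history for a single loyalty member; `simple_propensities` and the sample data are illustrative. Real retailers typically work with far richer data and statistical or machine-learning models, so treat this as an illustration of the idea rather than how any particular company does it.

```python
from collections import Counter
from itertools import combinations

# Hypothetical purchase history for one loyalty member.
# Each entry: (day_of_week, set of products in the basket, coupon_used)
history = [
    ("Saturday", {"diapers", "baby formula", "coffee"}, True),
    ("Saturday", {"diapers", "wipes"}, True),
    ("Tuesday", {"coffee", "bagels"}, False),
    ("Saturday", {"diapers", "baby formula"}, False),
]


def simple_propensities(history):
    """Summarize a purchase history into a few crude behavioral indicators."""
    # When do you prefer to shop? Count trips per day of the week.
    day_counts = Counter(day for day, _, _ in history)

    # How frequently do you use coupons? Share of trips with a coupon.
    coupon_rate = sum(1 for _, _, used in history if used) / len(history)

    # Which products do you buy in combination? Count co-occurring pairs.
    pair_counts = Counter()
    for _, basket, _ in history:
        pair_counts.update(combinations(sorted(basket), 2))

    return {
        "preferred_day": day_counts.most_common(1)[0][0],
        "coupon_rate": coupon_rate,
        "top_pair": pair_counts.most_common(1)[0],
    }


print(simple_propensities(history))
```

With this sample data, Saturday comes out as the preferred shopping day, half of the trips used a coupon, and diapers plus baby formula is the most frequent product pair, which is exactly the kind of signal that can suggest a customer’s life stage.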

The range of customer, product, and operational insights (predicted behavioral and performance propensities) that can be uncovered is limited only by the availability of granular, labeled consumer data and the analysts’ curiosity.

Now, there is nothing illegal about blending consumer purchase data with other data sources to uncover and codify those consumer insights (preferences, patterns, trends, relationships, tendencies, inclinations, associations, etc.). Collecting this data is legal because you, as a consumer, have signed away your exclusive rights to this engagement data.

Third-party Data Aggregators

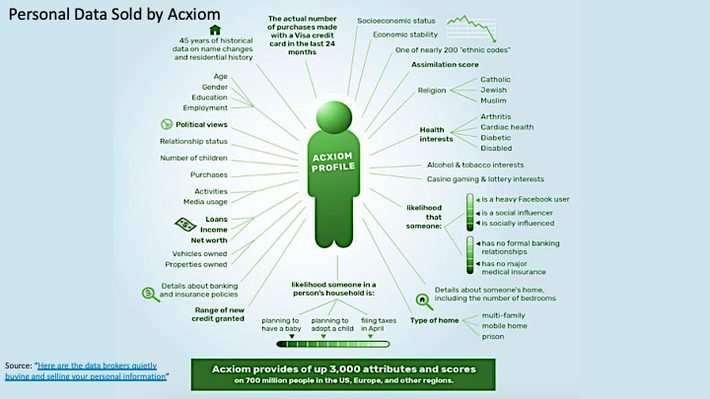

There is a growing market of companies that are buying, aggregating, and reselling your personal data. There are at least 121 companies, such as Nielsen, Acxiom, Experian, Equifax, and CoreLogic, whose business model is focused on purchasing, curating, packaging, and selling your personal data. Unfortunately, most folks have no idea how much data these data aggregators are gathering about YOU (Figure 3)!

Figure 3: Source: “Here are the data brokers quietly buying and selling your personal information”

Yes, the level of information that a company like Acxiom captures on you and me is staggering. But it is not illegal. You (sometimes unknowingly) agree to share your personal data when you sign up for credit cards and loyalty cards or register for “free” websites, newsletters, podcasts, and mobile apps.

Companies then combine this third-party data with their collection of your personal data (captured through purchases, returns, marketing campaign responses, emails, call center conversations, warranty cards, websites, social media posts, etc.) to create a more complete view of your interests, tendencies, preferences, inclinations, relationships, and associations.

Data Privacy Ramifications

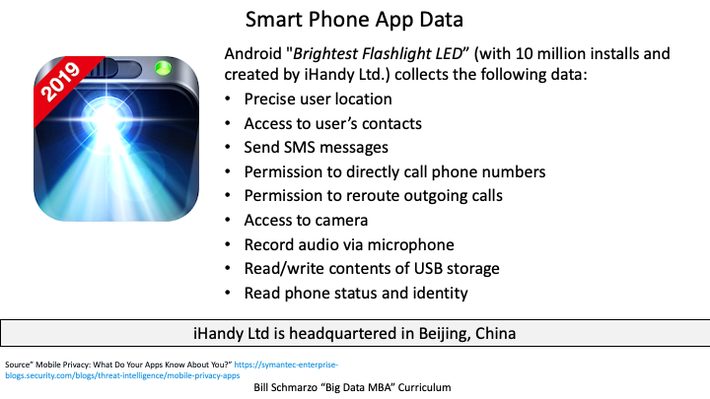

One also needs to be aware of organizations that capture data that is not protected by strong privacy laws. For example, iHandy Ltd distributes the Brightest Flashlight LED Android app, which has over 10 million installs. Unfortunately for consumers, iHandy Ltd is headquartered in Beijing, China, where consumer privacy laws are very lax compared to those in America, Europe, Australia, and Japan (Figure 4).

Figure 4: iHandy Ltd Brightest Flashlight LED Android App

But wait, there’s more. Home digital assistants like Amazon Alexa and Google Assistant are always listening for their wake word… ALL THE TIME! Whatever they capture once triggered (including by accident) can be recorded and stored in the cloud.

And if you thought that conversational data was private, guess again! Recently, a judge ordered Amazon to hand over recordings from an Echo smart speaker from a home where a double murder occurred. Authorities hope the recordings can provide information that could put the murderer behind bars. If Amazon hands over the private data of its users to law enforcement, it will also be the latest incident to raise serious questions about how much data technology and social media companies collect about their customers with and without their knowledge, how that data can be used, and what it means for your personal privacy.

Yes, the world envisioned by the movie “Eagle Eye,” with its nefarious, always-listening, AI-powered ARIIA is more real than one might think or wish. And remember that digital media (and the cloud) have long memories. Once you post something, expect that it will be in the digital ecosystem F-O-R-E-V-E-R.

Monetizing Your Personal Data

All this effort to capture, align, buy, and integrate all of your personal data is done so that these organizations can more easily influence and manipulate you. Yes, influence and manipulate you.

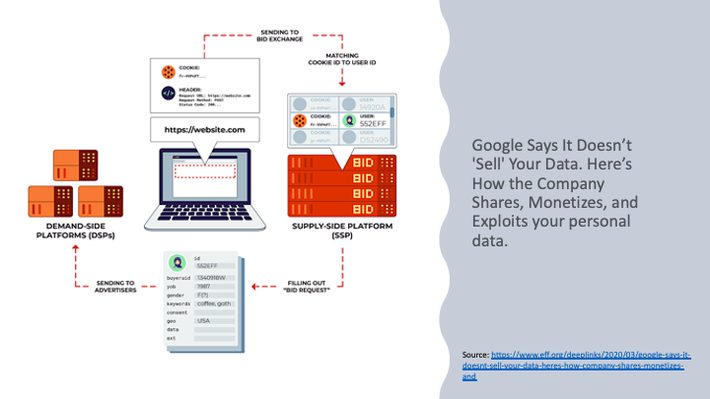

Companies such as Facebook, Google, Amazon, and countless others leverage your personal propensities, that is, the predicted behavioral propensities gleaned from the aggregation of your personal data, to sell advertising and influence your behaviors. Figure 5 shows how Google leverages your “free” search requests to create a market for advertisers willing to pay to place their products and messages at the top of your search results.

Figure 5: Here’s How Google Shares, Monetizes, and Exploits Your Data

Your personal data helped Google achieve roughly $147 billion in advertising revenue in 2020. Not a bad financial return for a “free” service.

Data Literacy Summary

What can one do to protect their data? The first step is awareness of where and how organizations are capturing and exploiting your personal data for their own monetization purposes. Be aware of what data you are sharing via the apps on your phone, the customer loyalty programs to which you belong, and your engagement data on websites and social media. But even then, there will be questionable organizations that will skirt the privacy laws to capture more of your personal data for their own nefarious acts (spam, phishing, identity theft, ransomware, and more).

Part 2 of this series will dive into the next aspect of this critical “AI Literacy and AI Ethics” conversation.