This article is also available as a podcast on Spotify, and was originally published on The Cagle Report.

Education, especially college education, is facing an existential crisis. Partly because of demographic factors, and partly because of decisions made by policy-makers at the national, local, and academic levels, colleges and universities are struggling to stay afloat. What's more, there are signs that conditions are likely to get far worse for the academic world for at least the next couple of decades. The question this raises ultimately comes down to this: "What do we as a society want from our educational institutions, and what will need to change for them to survive?"

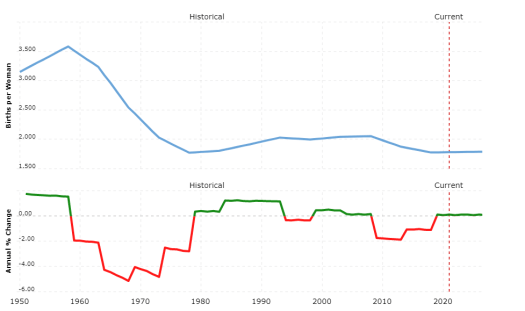

We are less than halfway through a broad global decline in the birth rate that began in the early 1990s, with the worst yet to come.

The Looming Baby Bust

While there are many factors that influence the future, there are few indicators that futurists watch as closely as the birth rate. This measure – expressed here as the total fertility rate, the average number of children born per woman – has a profound influence upon everything from the economy to the rate of innovation to social trends. If you know how many people are born today, you have a surprisingly good idea of what the world will look like in 30 to 40 years, arguably a better one than technological trends or sociological shifts can give you.

There have been a few major inflection points in the birth rate over the last century. The rate rose and fell with some regularity in the United States in particular (where this article will remain focused) until the Great Depression, which began in 1929 and drove it to a historic low. However, by 1936 the birth rate had started to climb dramatically, ultimately producing the single largest percentage increase in population of any generation in the last four hundred years. By 1955, when the Baby Boom was at its height, the rate stood at 3.9 births per woman, which translated roughly to a family size of almost four children per set of parents.

For reference, a population needs a rate of about 2.1 births per woman (the replacement rate) to remain stable over the long term, with each generation replacing the one before it. It's worth noting that the United States has rarely reached this replacement rate since 1972, which means that immigration has become an increasingly important driver of U.S. population growth since then.

A second boom, the echo boom, peaked around 1993 (when the birth rate was just a hair under the replacement rate, at 2.07), plateaued, and peaked again in 2008 before declining dramatically and hitting what may have been bottom in 2019. The birth rate for 2020 was only slightly above where it was in 1972, at 1.78 births per woman. We could be looking at the start of a new cycle at this point, but population stability also depends on the death rate, which has risen significantly due to Covid-19.

This has a number of implications, especially for the educational system. Someone born in 1991 is now 30. By 2010, the number of entering college students had peaked, and it plateaued for about four years. The students entering college today were born after 9/11, and colleges and universities are already seeing a drop-off in the number of students. In four more years, moreover, we will start seeing students reaching 18 (nominally college age) who were born in or after 2007.

What's significant about that year? That was the year the Great Recession hit, bringing a sharp drop-off in the birth rate that would end up lasting more than a decade. Federal funding of post-secondary education had been declining, leaving states to pick up the slack at precisely the time they were already hammered by lower tax revenues from the recession. This pushed more students (and their families) into taking out student loans, saddling those same students with higher debt even as jobs remained scarce, and discouraging many of those who have reached college age since then.

In 2025, those born in 2007 will start going to college, but there will be fewer of them, not just in relative terms but even in absolute terms, and this trend will continue until at least 2039, when those born today start college. What that means in practice is that colleges and universities are going to be facing the biggest student drought in history, with enrollment down by as much as 25% from current levels by the end of it, assuming the current composition of students.

Covid has pushed society's adoption of remote learning forward by at least five, and maybe ten, years, catching many schools flat-footed.

The Pandemic Challenges Academia

The Covid-19 pandemic is another system-level shock, albeit one whose impact will likely fade over time. One thing it has done, however, is to force the adoption of online telepresence five to ten years sooner than it would have happened otherwise. Put another way, without the influence of the pandemic, social inertia would likely have meant another decade before we'd be interacting remotely at the level we are now. Once the threat of Covid-19 finally fades (hopefully by the end of 2021), there will be some return to older patterns, but far less than many managers currently believe.

For businesses, the move towards working from home has had mixed results, especially outside the digital space. Digitally oriented businesses have generally thrived during the pandemic, but physically based businesses, from restaurants to manufacturing to entertainment to largely brick-and-mortar retail, have been hit hard. In theory, colleges and universities should have been able to adapt, but while most universities saw themselves as digital in nature, the physical constraints and tacit assumptions that colleges work under proved far more limiting than expected.

Covid forced an uncomfortable realization. It was perfectly possible for students to attend remotely, so long as only a small percentage of a university's students did so. However, all too few schools actually had the infrastructure to go wholly virtual, and the requirements and complexities inherent in maintaining a large-scale telepresence operation went far beyond what all but the most far-sighted university chancellors had foreseen. Once the inadvertent cocooning effect that these assumptions had given many schools was stripped away, the attractiveness of universities as institutions was called into question. With tuition revenue dropping, other sources of income – from football game revenues to the chance to appeal to alumni – also suffered, making it evident just how dependent these institutions were on the notion of geophysicality, and on the funding that came as a consequence of it.

Many colleges are also facing lawsuits from students or their families because those students had already been charged for classes that were cut short, and tuition revenue has continued to drop as students became unsure whether they would, in fact, be returning to physical campuses in the fall of 2020 (or the winter of 2021, or the spring of 2021, for that matter). This has starved university budgets that were already being overtaken by administrative and facility costs, with the very real likelihood that by the end of the 2022 school year, many colleges and universities will be hopelessly in the red with little in the way of supporting revenue.

Covid-19 hit at the worst possible time for universities, ironically because of the effects of the Internet (itself largely an academic innovation) on the availability and dissemination of information. One of the primary values of universities has long been their role in helping students acquire specialized knowledge, with a secondary value being that such schools also provided the means to certify that a person had a sufficient grasp of that knowledge to be able to employ it. Yet while university costs have climbed, the availability of that knowledge outside the formal educational system has also increased, creating the impression that universities are less about teaching than they are about certification and gate-keeping. Given that wages have, outside of a few in-demand verticals, remained largely stagnant, this has caused a growing number of students to question the value of that education in the face of rising tuition.

To make matters worse, corporate training programs and certifications are now competing with university degrees as sources of accreditation, and employers increasingly prefer them over four-year or higher degree programs, especially in areas where technology is changing quickly. These programs also typically attract instructors who may not have advanced degrees, but who often have developed industry knowledge through experience in the field.

Finally, the pipeline of graduate students and assistant professors has been hollowed out, as academic wages have generally not kept pace with even the tepid rise in private-sector wages for skilled talent, and as the ultimate academic prize – tenure – has been phased out at university after university. While associate professorships and above generally pay moderately well, the principal rewards typically go not to those who publish but to those who patent, with the university usually claiming a significant chunk of the licensing royalties.

These were existential problems even before Covid-19 manifested itself, but with the severe market downturn in 2020, potential students and their parents began to raise the question most university provosts dreaded hearing: was the value to be gained by students worth the cost, especially when that cost might take decades to repay in full?

A university is a business, and any business that fails to adapt to changing market conditions will likely fail. The triple threat of demographically induced declines in student enrollment, the rise of Internet-enabled competitors challenging the fundamental nature of education, and an overreliance upon external factors such as sports revenues and aggressive claims on patents (exacerbated by the pandemic undercutting both) has created a situation where the question is not whether education as we know it is likely to collapse, but when.

Universities bring together experts and provide them a forum for expressing their knowledge. As the Internet makes expertise available at the click of a button, what impact will that have on traditional schools?

The Democratization of Expertise

In any discussion about academia – colleges, universities and post-graduate schools – one of the usually unasked questions involves the role that these institutions need to play in a healthy society. Do we need a post-secondary education system as a society? I would argue that, if anything, we need it now more than ever, because the need to learn has only grown in the last half-century.

The skills you need for almost any job today have changed dramatically even from a decade ago. Even in fields that would seem on the surface to be fairly timeless – such as archeology – changes in other disciplines (such as genetics, computer visualization, the rise of drones and satellites, and material science) have forced a radical re-evaluation of models that we'd assumed fixed even a few decades before. Ever more powerful digital tools are giving experts within any given field lenses that would have seemed fantastical at the start of their careers.

Indeed, one of the hallmarks of the early twenty-first century is the emergence of the subject matter expert or research specialist as a key part of any organization. Similarly, while information technologies are eroding the traditional role of librarians in society, what they do today – helping to create information systems, establishing classification models and taxonomies, and providing the infrastructure to draw insight from those information systems – is critical for every organization. The technologies for managing this work are young, many having appeared since these data curators went to school, and without an educational infrastructure in place, there is little coherent path for gaining the skills necessary to work with these tools.

Moreover, what that education should provide is not necessarily how to use the tools, but rather the context to make the most of those tools. It has been well demonstrated that most innovations, in science, technology, the arts and elsewhere, occur when disciplinary domains collide. The most exciting discoveries in the world of archeology, for instance, are not coming about because of an uptick in new digs, but because archeologists are now able to synthesize their own domain knowledge with what's coming out of population genetics, to visualize what ancient cultures looked like with the use of artificial intelligence and architectural visualization, and to take advantage of advances in climatology to get a better sense of the overall gestalt of those long-gone cultures.

These discoveries and innovations are occurring not because John, a geneticist, met Jane, an archeologist, at the rare university interdisciplinary luncheon, but because the Internet has made it possible to be aware of what's going on outside of one's discipline and, in doing so, has encouraged communication between potential colleagues. To use a knowledge management metaphor, expertise has become siloed in universities.

This is not just an academic issue, admittedly. The same expertise has also become siloed within corporate organizations, as organizations, including publishers, want to monetize their experts by controlling access to them. This has always been a fine line for organizations to walk, as expertise is typically expensive, yet the value of that expertise lies not so much in what the expert can do as in the reputation that they bring to an organization.

As the reputation economy becomes more dominant, this too poses a conundrum for universities and corporations alike. Reputation comes about through exposure, and one role that universities in particular play is to provide a forum for exposure to expertise. Pre-Internet, however, that exposure came about primarily by reaching 200-300 people in a small amphitheater on a weekly or biweekly basis. Today, a high school student can have a following of a million people on a live stream, and the salary a university pays a professor can be dwarfed by what a popular creator makes from advertising revenue on YouTube.

Not surprisingly, those on the lowest rungs of the educational hierarchy have taken a keen interest in what's happening. Ironically, tenure may be to blame. If you think of a university as a network, advancement takes place through vacancies. In most corporations, vacancies open up all the time: people leave for higher-paying positions or are promoted up through the ranks, and the need to fill those positions ensures a certain degree of mobility within the organization.

In universities, on the other hand, tenure means that positions open up far more slowly, not just within any given university but in all of them where tenure is present. This generally leaves senior administrators with a no-win choice: dismiss professors for real or imagined wrongs (creating a public relations nightmare), push more tenure-track professors into administrative roles that they may be ill-suited for (adding to administrative overhead while reducing the reputation value of those professors), or live with a certain degree of churn at the bottom as grad students and non-tenured professors become disillusioned. As the goal of hiring very intelligent people is to increase the reputation of the college, none of these choices is, or should be, palatable, but they are all too often made without much strategic thought.

The ability to virtualize and componentize books has already had a profound impact upon school life (and reduced an epidemic of lower back pain), but it also makes it easier for organizations other than universities to compete for resources.

The Decoupling of the Textbook

A similar factor involves the production of textbooks. The more forward-thinking textbook publishers came to the realization fifteen to twenty years ago that with the advent of the Internet (and eBooks), their business needed to adapt, or it would die. Textbooks are expensive to publish, require a huge amount of work to pull together, and typically have a comparatively small audience, all of which results in textbooks often costing from $50 to $250 or more. On the other hand, professors and universities both wanted to have their names on a textbook, especially if it became a de facto standard, because it increased their respective authority in the field. The high cost of producing such textbooks also served as yet another barrier to entry that kept out competitors, as you had to be fairly large to have both the financial and production wherewithal to create them.

The eBook, digital production, and the Internet as a distribution platform changed that equation completely. Instead of focusing on the book, publishers began focusing on the chapter, with the idea being that good editing could make chapters largely stand-alone entities. Digital production meant that you could aggregate different sets of chapters together, possibly with some intelligence in those chapters to allow for differences in graphical branding, as well as specific metadata that would control how content would be displayed in different contexts. Publishers have also deployed semantic linking to tie related content together, along with search capabilities, neither of which existed in any meaningful way for printed books.

Digital, distributed publishing further meant that such chapters could be combined with others upon request (or even made available as solo content), and eBook distribution platforms meant that books could be made available on your phone, laptop, or tablet as necessary, which reduced distribution costs and cut down dramatically on student chiropractic bills. That this approach gave instructors much more say in presenting the material they felt was relevant was a nice side benefit. It also gave professors the chance to publish smaller pieces of work while still focusing on teaching and/or research, rather than having to take sabbaticals (and the likely financial hit that came with them) to author a single textbook. These approaches increased their citation counts while also bringing in revenue sooner and faster.

Because the Internet has made the dissemination of ever more complex forms of media trivial, many instructors now regularly supplement their income by creating not just written text but full curriculum materials, adding to their reputation in the process. This has changed the nature of the relationship between instructor and university, shifting it from an employment arrangement to an affiliation. While this may reduce the direct costs to the university, it comes at the expense of a weakened relationship between the two parties, part of a broader trend occurring between organizations and the people who work with (rather than ostensibly for) them.

Of course, it should be noted that the publishers that didn’t adapt are no longer around, having been acquired for their catalogs by those that did.

Therein lies a strong cautionary tale for universities. The case of too many MBAs provides another. The very first Master of Business Administration, or MBA, was offered by Harvard University in 1908. For a number of years, the exclusivity of the degree made it a highly sought-after certification. By the 1970s, most major universities with graduate schools had MBA programs, and there was a growing backlash as people hired because of their MBAs proved unable to manage effectively in businesses that required specialized technical knowledge – which meant most companies. By 2020, MBAs were given about the same weight as a Master's degree in any other field, and in many cases, less.

The MBA was devalued by ubiquity. Unfortunately, the Internet is all about ubiquity.

The ivory towers may still remain, but geolocation-based mass-education is already fading compared to personalized education – tailored to the individual, focusing more on certification than credentialism, and no longer dependent upon being there.

The Future of College Education

Given all of these factors, it is a safe bet that academia will need to change dramatically to survive. Several trends will shape what the education of tomorrow (and it may be a very soon tomorrow) will look like. These include many or all of the following:

- Distributed and De-Localized Education. The trend of untying education from a physical place and making it virtual was already underway even before Covid-19, but it has accelerated dramatically in the aftermath of the pandemic. In effect, universities are becoming media publishing companies, with the media in question being "educational" in nature. Hybrid models may very well emerge as a consequence, but such a hybrid would treat universities more as retreats than as physical institutions.

- Decommissioning Campuses. Just as many banks are becoming nervous about the commercial downsizing of both office space and production facilities, so too should university boards be sweating the closure and decommissioning of campuses in favor of virtual instruction. A significant number of college campuses were either constructed or expanded in the 1960s and early 1970s as the Boomer generation started going to college. These facilities are now 50-60 years old and showing their age, and the costs to keep them functional continue to climb. As classrooms empty, the pressure to sell off campus property outright will become all but unavoidable.

- Become Global. The flip side of this is that the location (or even nationality) of the student should not be a gating criterion. The European Union already provides an example of this, where EU citizens can generally attend universities in other member states on the same terms as domestic students, without being penalized as "out of state" or "international" students.

- Move Towards Certification Rather Than Credentials. In many respects, academia needs to become more agile, and one way it can do that is to reduce the overall time it takes to receive some form of certification, possibly down to the half-quarter or quarter level (i.e., six weeks to three months).

- Make All Education Continuing. One major problem with the credential approach is that it tends to force education into the period from age 18 to 25, with continuing education often given short shrift by universities, which see it as unprofitable. By moving towards a certification model, however, you give people in the midst of their careers the opportunity to learn from the best without forcing them to take a hiatus. It also increases the "fatness" of the tail, so that the drop in immediate post-secondary students can be offset by more older students.

- Sell Certification, Not Education. Colleges need to acknowledge what has largely gone unsaid for decades – you go to school to get the certificate, not to get an education. Once you do that, it opens up an alternative model for paying for education: you can take a class repeatedly for free, but, especially with advanced classes, you only get credit (and only pay for credit) when you pass.

- Move Towards Electronic Mediation. Teaching a class of ten thousand students is far different from teaching one of thirty, especially when it comes to grading homework and tests. Using a combination of AI-assisted essay evaluation, electronic mediation of tests, crowd-sourcing, and rules-based analysis, an instructor can more readily identify where students are having trouble, which is the real value of tests. Currently, most of this work is done by graduate students, at the cost of their own studies.

- Individualized Curricula of Study. Most traditional universities work upon the assumption that there are specific courses you have to take, in a specific sequence, in order to earn a degree. This may force students to take courses they have already mastered or that fall outside their desired area of expertise, and in general it reduces the possibility of cross-specialization, at a time when cross-specialization is a highly desired trait. In an individualized curriculum, a student who lacks the skills to pass an advanced class could simply drop back to a simpler class at no penalty.

- Student Community MOOCs. Give more senior students the tools to create educational materials for junior students. Build an active community of users, combining the features of MOOCs and virtual worlds, with some mediation from instructors, and give credit for this in completing advanced classes.

- Drop Educational Degree Requirements for Instructors. Teaching is a skill like any other, and you do not need a Master's (a six-year degree) or a Ph.D. (an eight-year degree) to teach. A great many people with real-life skills and experience are effectively barred from teaching because they cannot take time from already busy careers to spend two years learning what can be taught in six months. They are going elsewhere to teach, to academia's loss.

- Spin Off Research Institutes. This addresses the fact that all too frequently professors treat their graduate students as free labor, often taking advantage of their ideas and input while delivering little of value in return. This is a toxic relationship that has become institutionalized. Universities would be better served spinning off their research work as separate businesses and then hiring graduate students as associate researchers. Nothing says that a professor cannot be employed in both capacities if he or she so chooses, but by making these two different roles, you don't end up with brilliant researchers who are awful teachers being forced to teach (or vice versa). This also holds true for the arts and humanities (consider the Clarion Writers' Workshop as a graduate school for writers).

- A League of Their Own. Football has been a defining trait of universities in the US for decades, but that is an aberration. Baseball, hockey, and similar team sports have a tiered farm-team system where young players are able to concentrate on learning the basics; why not football? As with research institutes and retreats, it's possible for a university to spin off its football team into an (already extant) league, letting the team negotiate both physical and pay-per-view rights independently of students seeking an education, while still maintaining branding ties with the university and supporting its revenues.

- Integrate Vendors and Companies. Education is an expensive proposition for most corporations, and many are loath to create extensive training processes without some kind of financial backstop to make training at least revenue-neutral. One idea that may come about from reimagining the post-academic world is to build out a conceptual platform that both governmental and private organizations can plug into. This way, organizations can specialize in providing education that may be of value to both customers and users while also remaining findable within a broader network of curricula.

- Begin With the Community Colleges. Most community colleges are already experimenting with several facets described here, and there’s a push at the federal and increasingly the state level to make at least the first two years of community college free. This is a good thing, but it also needs to be done in such a way as to be both sustainable long term and largely protected from shifts in the direction of political winds.

Speculative fiction author William Gibson is often credited with saying, "The future has arrived; it's just not evenly distributed yet." This holds very much true for post-secondary education. No, we're not going to see a wasteland of boarded-up university campuses any time soon, but even though ivy-covered halls will likely continue to stand for decades, it's important to realize that the underlying nature of how we educate people is undergoing radical change right now, and that education a decade from now may look very, very different than it does today.

Kurt Cagle is the editor of The Cagle Report, and is the Community Editor for DataScienceCentral.com. He lives in Issaquah, Washington with his family and his cats.
