In the media and communication industry, writers are frequently confronted with huge volumes of textual material. They are having significant difficulty extracting structured knowledge from these papers, and the text is being underutilized, perhaps leaving critical information unknown.

Machine learning techniques can assist, but they require a thorough understanding of the information required and manual annotation of the corpus. Before going further, let’s understand what annotation, types, and how it is helping machine learning models to perform accurately.

What are annotations?

Annotation is the process of labeling data which are in the form of image, video, text annotation, or object in order to use Machine Learning to train a model. In simple words, it is the process of transcribing, identifying, and labeling key characteristics in your data. These are the characteristics that you simply want your machine learning system to recognize on its own, with unannotated real-world data.

Annotation can assist in the cleaning up of a dataset. It has the ability to fill in any gaps that may exist. Annotation of data can be used to recover data that has been incorrectly labeled or has missing labels and replace it with new data for the Machine Learning model to utilize.

Types of Annotations

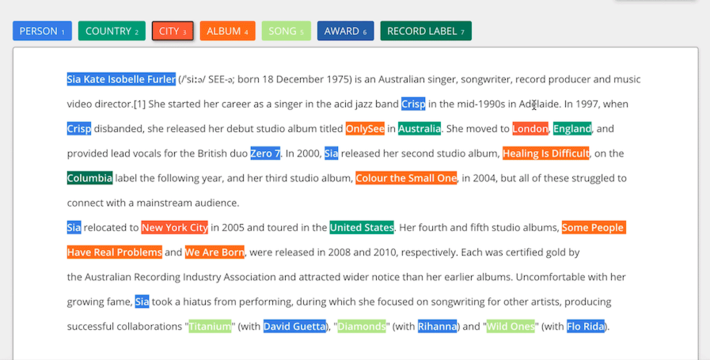

1. Text Annotation

2. Video Annotation

3. Image annotation

4. Named Entity Annotation

5. Audio Annotation

6. Semantic Annotation

7. Intent Annotation

8. Sentiment Annotation

Annotation of text in the media industry

The process of gathering, editing, and publishing newspaper stories is a complex and highly specialized task that frequently operates within specific publishing constraints. News isn’t necessarily written in a neutral tone; it might depart from the usual by employing certain vocabulary, a particular writing style, or a particular author’s point of view. Media bias, and news bias in the context of news stories, are terms used to describe certain qualities of the stories. To avoid news bias, accuracy, and balanced viewpoints have been emphasized in the context of news reporting, because news can have a large influence on readers, forming people’s viewpoints and attitudes toward social issues, and ultimately changing political views and society.

With such a huge amount of text data being used in the industry, annotating text and each sentence is a time-consuming and laborious task which raises the need for professional annotators who can correctly annotate the text.

How it is done

Data selection

First, the raw data set is collected from the internet. It is impossible to label every sentence in those articles. Instead, annotation companies use several methods to choose a subset of articles for each categorization challenge and then only labeled or annotate those subsets.

Data Processing

When data is collected and converted into useful information, it is called data processing. It should be corrected so that the end product, or data output, is not harmed. Missing values must be addressed, special characters must be removed, irrelevant phrases must be eliminated, and so on. The list could go on and on. A thorough and succinct exploratory data analysis (EDA) can reveal the issues that need to be addressed and lead the data preparation and cleaning process. Most HTML elements were removed and no further text processing was done, such as lower case, removing stop words, or even lemmatization or tokenization, because the sentences would have become hard to read, comprehend.

Data Labeling

The data that the models are trained with must be labeled data as correctly as possible to achieve the best possible prediction accuracy by the ML models afterward. As a result, it’s critical that those who label the data understand the categorization categories and how to give the relevant category to a sentence, i.e., how to accurately label the phrase.