In this blog I will go a bit more in detail about the K-means method and explain how we can calculate the distance between centroid and data points to form a cluster.

Consider the below data set which has the values of the data points on a particular graph.

Table 1:

We can randomly choose two initial points as the centroids and from there we can start calculating distance of each point.

For now we will consider that D2 and D4 are the centroids.

To start with we should calculate the distance with the help of Euclidean Distance which is

√((x1-y1)² + (x2-y2)²

Iteration 1:

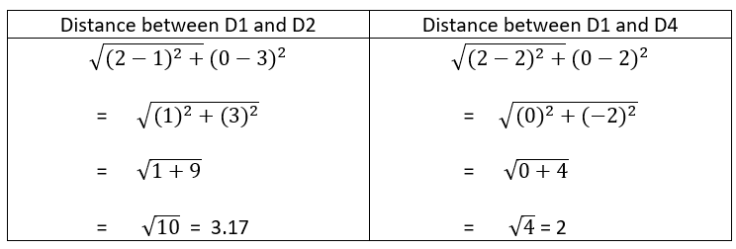

Step 1: We need to calculate the distance between the initial centroid points with other data points. Below I have shown the calculation of distance from initial centroids D2 and D4 from data point D1.

After calculating the distance of all data points, we get the values as below.

Table 2:

Step 2: Next, we need to group the data points which are closer to centriods. Observe the above table, we can notice that D1 is closer to D4 as the distance is less. Hence we can say that D1 belongs to D4 Similarly, D3 and D5 belongs to D2. After grouping, we need to calculate the mean of grouped values from Table 1.

Cluster 1: (D1, D4) Cluster 2: (D2, D3, D5)

Step 3: Now, we calculate the mean values of the clusters created and the new centriod values will these mean values and centroid is moved along the graph.

From the above table, we can say the new centroid for cluster 1 is (2.0, 1.0) and for cluster 2 is (2.67, 4.67)

Iteration 2:

Step 4: Again the values of euclidean distance is calculated from the new centriods. Below is the table of distance between data points and new centroids.

We can notice now that clusters have changed the data points. Now the cluster 1 has D1, D2 and D4 data objects. Similarly, cluster 2 has D3 and D5

Step 5: Calculate the mean values of new clustered groups from Table 1 which we followed in step 3. The below table will show the mean values

Now we have the new centroid value as following:

cluster 1 ( D1, D2, D4) – (1.67, 1.67) and cluster 2 (D3, D5) – (3.5, 5.5)

This process has to be repeated until we find a constant value for centroids and the latest cluster will be considered as the final cluster solution.