WOLFRAM MATHEMATICA

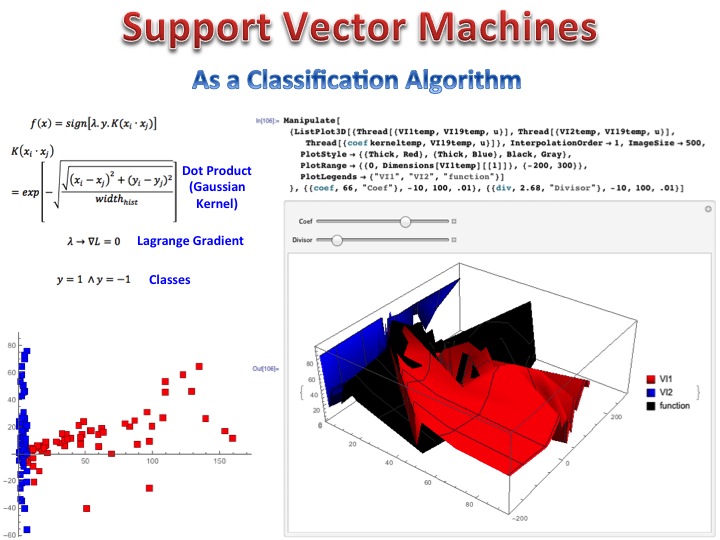

Before assessing R and Python, I will start with Wolfram Mathematica. It’s a powerful piece of software, similar to MATLAB. You can handle lists and matrices easily, and you get a very complete set of mathematical functions, the backing of Wolfram Alpha and extremely sophisticated graphics. These let you, for instance, build and visualize an animated gradient descent, animate different weights for a given neural network, choose a specific Machine Learning algorithm and automatically classify your dataset, plot stunning 3D visualizations, make animations, and manipulate variable values dynamically while you watch the output of your calculation change. The installation is 4.65 GB and comes with all libraries integrated. It’s a great program when you know the formulae behind Machine Learning algorithms, because you can build them from scratch in a completely customized way. You can also do face recognition, geolocate objects on 3D plots of a map surface, handle cellular automata like no other tool, and develop fully customized social network models with artificial intelligence. You can even develop a self-driving car project (see the work in the YouTube video). Below you can see a Support Vector Machine in 3D.

R

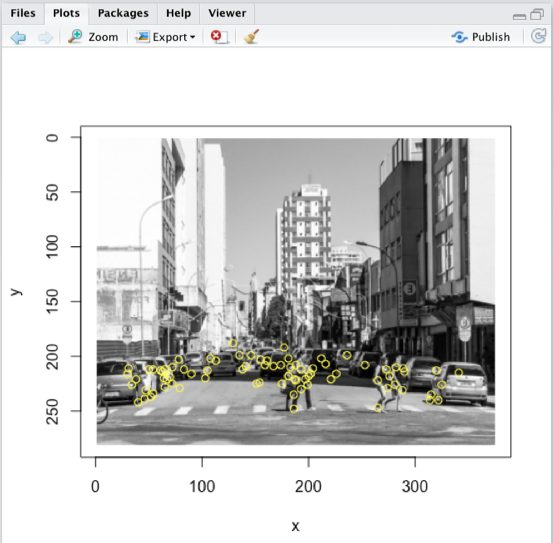

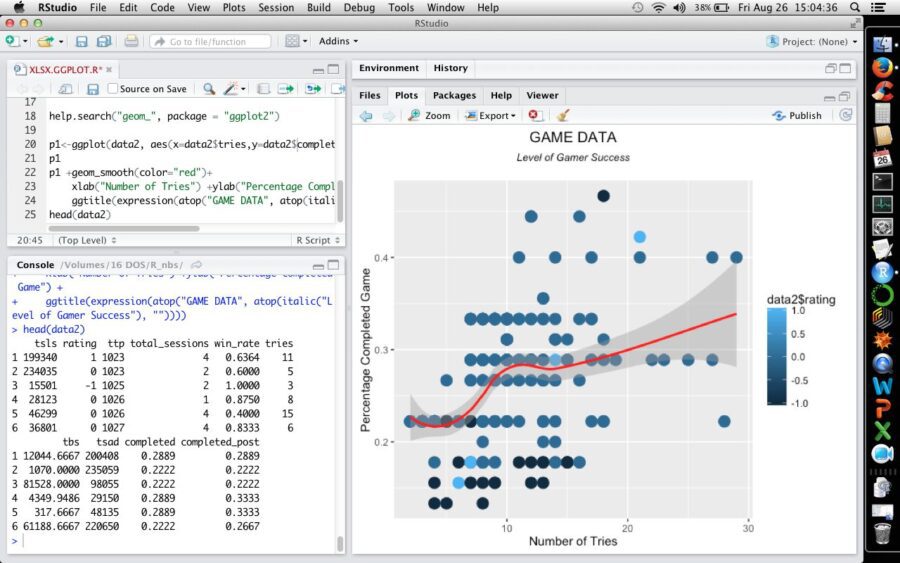

However, the market demands a skill set in which R and/or Python are essential. R is free and extremely easy to learn, with lots of libraries (loaded on demand, which keeps the software fast) covering practically every Machine Learning algorithm, including Neural Networks and Deep Learning. You can also build models from scratch (like face recognition), faster than in software like MATLAB or Mathematica. Almost everything is automatic. The Machine Learning algorithms come with hyperparameter tuning, which makes it extremely easy to build a model. But as you load more libraries the software becomes slower, and for/while loops slow it down as well. Statistics is an extremely strong feature, better than in any other software. You can draw maps, do geolocation easily and make animations. Besides, Stack Overflow, CRAN and R-bloggers also help a lot. You can also handle missing values and outliers very easily, rather than just replacing them with the mean. R can be connected to Microsoft Azure Machine Learning or RapidMiner.
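To make the point about missing values and outliers concrete, here is a minimal sketch of the idea. It is written in Python only to keep a single language for the examples in this post, and it is not how any particular R package does it. The helper names `impute_median` and `iqr_outliers`, the sample data and the 1.5 x IQR rule are my own assumptions for illustration.

```python
import statistics

def impute_median(values):
    """Replace None entries with the median of the observed values.

    The median is used instead of the mean because a single extreme
    value can drag the mean far away from the typical observation.
    """
    observed = [v for v in values if v is not None]
    med = statistics.median(observed)
    return [med if v is None else v for v in values]

def iqr_outliers(values, k=1.5):
    """Return the values lying outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

# Hypothetical measurements with two gaps and one suspicious reading.
raw = [12.0, 14.0, None, 13.0, 15.0, 120.0, None, 11.0]

filled = impute_median(raw)      # gaps become 13.5, the median of the observed values
outliers = iqr_outliers(filled)  # flags the 120.0 reading
print(filled)
print(outliers)
```

Filling the gaps with the mean here would insert a value close to 31, pulled up by the single 120.0 reading, which is why a robust statistic is the safer default.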

In my view, R is perfect when you have a fairly large dataset (you can also run calculations in the cloud) and for any business that really prefers quality of analysis over quantity. If you want details, R is the right choice. You can even detect faces and objects with R.

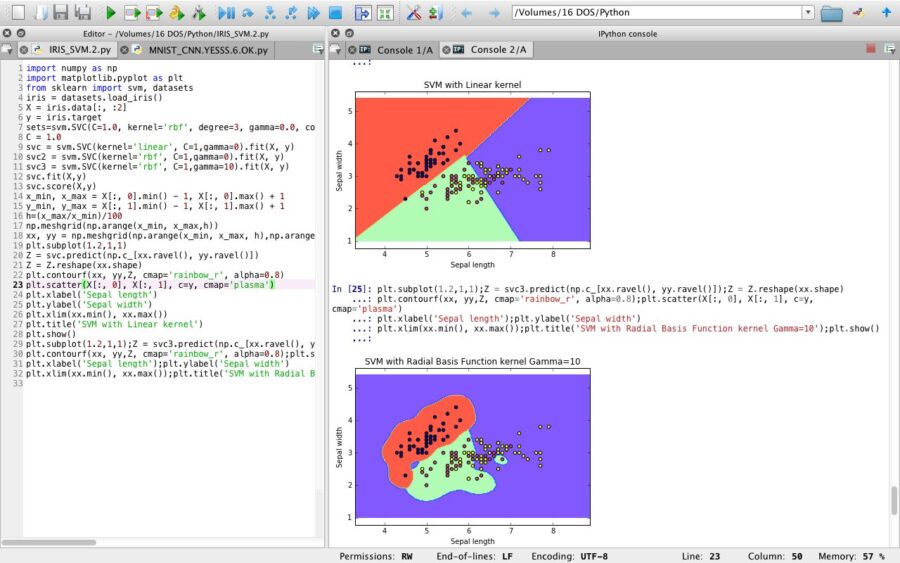

But then comes Python, where you can customize everything.

PYTHON

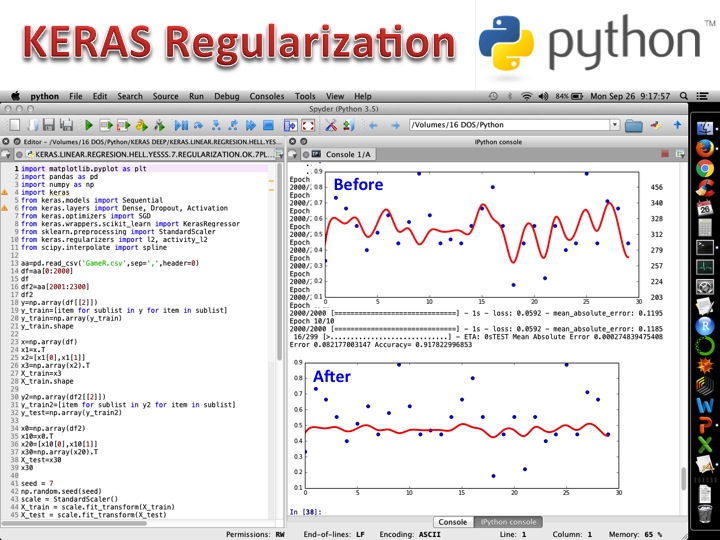

Python is excellent when you want to create APIs, handle large datasets 2x (or more) faster than R and Mathematica, or build models from scratch, provided you know the Machine Learning algorithms and their formulae. It’s easy to learn by yourself and has good online support (Stack Overflow, GitHub, etc.). Neural network modeling is a piece of cake once you learn to get the best from online sources. Python scales easily and it’s slim software (I mean not bloated), but it lacks more detailed output for statistical analysis. You can do full face recognition using OpenCV and Convolutional Neural Networks, pattern recognition, geolocation using Jupyter, and use all the Machine Learning software. Graphics in Python are not advanced, but in my view it’s the perfect software for handling big data and automating Data Science tasks. Python can run with TensorFlow and Microsoft Azure Machine Learning and use a cloud service like Amazon. Definitely a software to fall in love with.
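As an illustration of building a model from scratch once you know the formulae, here is a minimal sketch of a straight-line fit trained by batch gradient descent on the mean squared error. It uses plain Python with no libraries. The function name `fit_line`, the learning rate, the number of epochs and the toy data are assumptions chosen for this example, not a recommended setup.

```python
def fit_line(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w*x + b by batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Residuals of the current line on every point.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) w.r.t. w and b.
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Step against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Toy data generated from y = 2x + 1, so the fit should recover w ≈ 2 and b ≈ 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit_line(xs, ys)
print(round(w, 4), round(b, 4))
```

The same loop carries over to other models: only the prediction and the gradient formulae change, which is where knowing the mathematics pays off.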