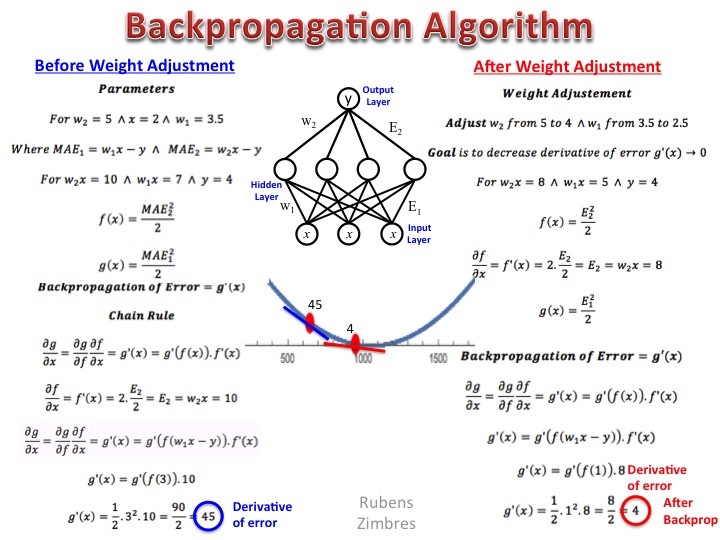

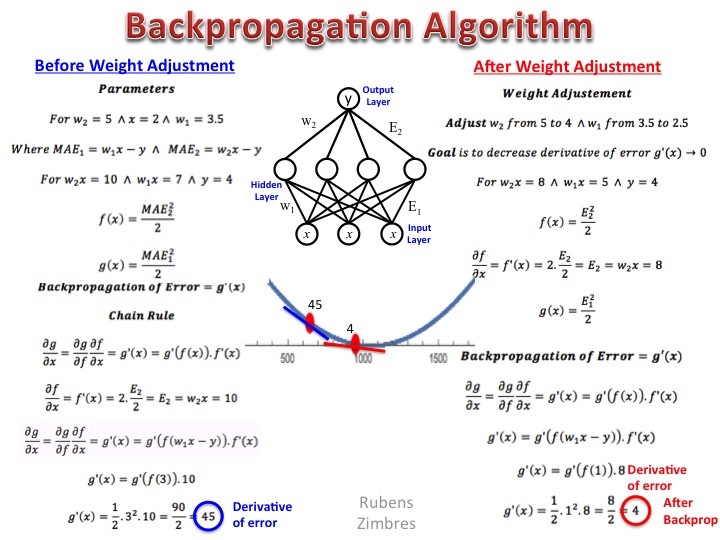

Here I present the backpropagation algorithm for a continuous target variable with no activation function in the hidden layer. Although it is simpler than the version used for the logistic cost function, it’s still fertile ground for math lovers.
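As a rough preview of that setup, here is a minimal sketch in plain Python. It assumes details the text above does not fix: a single hidden layer without biases, one linear output unit, and a squared-error cost of the form ½(ŷ − y)². The names `W1`, `w2`, `forward`, `loss`, and `backward`, as well as the toy numbers, are illustrative only. The sketch computes the gradients with the chain rule and compares one of them against a finite-difference estimate.

```python
def forward(W1, w2, x):
    # Hidden layer with no activation (identity): h = W1 x
    h = [sum(w * xk for w, xk in zip(row, x)) for row in W1]
    # Linear output unit: y_hat = w2 . h
    y_hat = sum(w * hj for w, hj in zip(w2, h))
    return h, y_hat


def loss(W1, w2, x, y):
    # Assumed squared-error cost: 0.5 * (y_hat - y)^2
    _, y_hat = forward(W1, w2, x)
    return 0.5 * (y_hat - y) ** 2


def backward(W1, w2, x, y):
    h, y_hat = forward(W1, w2, x)
    # Derivative of the cost with respect to the output
    delta = y_hat - y
    # dL/dw2[j] = delta * h[j]
    grad_w2 = [delta * hj for hj in h]
    # dL/dW1[j][k] = delta * w2[j] * x[k]; no activation derivative appears
    grad_W1 = [[delta * w2j * xk for xk in x] for w2j in w2]
    return grad_W1, grad_w2


# Toy values, chosen arbitrarily for illustration
W1 = [[0.1, -0.2], [0.4, 0.3]]
w2 = [0.5, -0.6]
x = [1.0, 2.0]
y = 1.5

grad_W1, grad_w2 = backward(W1, w2, x, y)

# Central finite-difference check on the single weight W1[0][1]
eps = 1e-6
W1_plus = [row[:] for row in W1]
W1_plus[0][1] += eps
W1_minus = [row[:] for row in W1]
W1_minus[0][1] -= eps
numeric = (loss(W1_plus, w2, x, y) - loss(W1_minus, w2, x, y)) / (2 * eps)

print("analytic:", grad_W1[0][1], "numeric:", numeric)
```

Because the hidden layer is the identity, the error signal reaches `W1` scaled only by `w2`, which is what makes this case simpler than one with a nonlinear activation.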