When I tell people that I work at an AI company, they often follow up with “So what kind of machine learning/deep learning do you do?” This isn’t surprising, as most of the market attention (and hype) around AI has centered on Machine Learning and its high-profile subset, Deep Learning, and on Natural Language Processing, with the rise of chatbots and virtual assistants. But while machine learning is a core component of artificial intelligence, AI is in fact more than just ML.

So what does it really mean for an application to be “intelligent”? What does it take to create a system that is “artificially intelligent”?

In Blade Runner, Harrison Ford and co. used the Voight-Kampff machine to see if a suspect is a replicant. In the real world, the late and great Alan Turing came up with a test to measure whether a machine is able to exhibit behaviour that is equivalent to that of a human, aptly known as the Turing Test. “A computer would deserve to be called intelligent if it could deceive a human into believing that it was human,” said Turing. Since its advent in the 1950s, it has been the most common litmus test employed in AI. The test is rudimentary, but it presents a baseline that a system needs to reach before it can be considered intelligent.

Fooling Turing — Components of an AI system

Now, let’s look behind the curtain to see how to create a system that can pass the Turing Test.

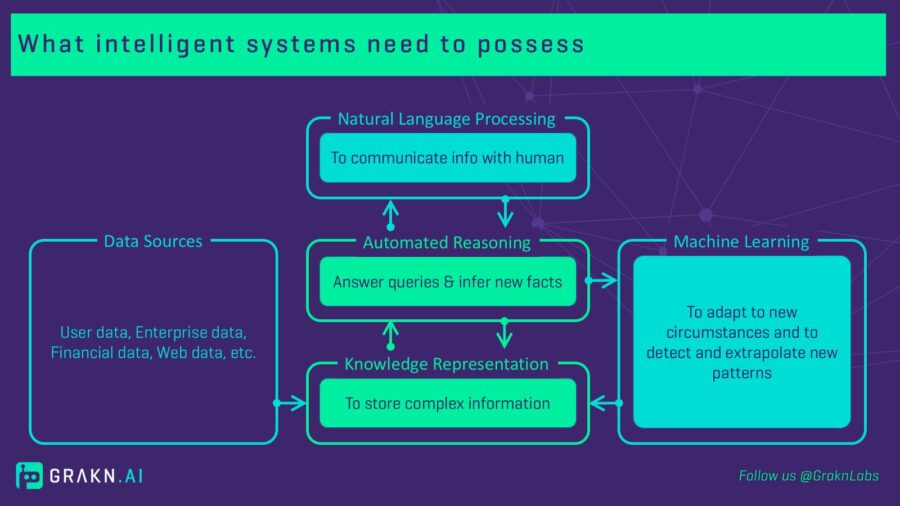

According to Norvig and Russell, in order to pass the Turing Test, the computer would need to possess the following capabilities:

- natural language processing,

- knowledge representation,

- automated reasoning, and

- machine learning

Now, I assume you already trust that I’m quoting from an authoritative source, but in case there’s any doubt (or if your memory needs jogging), Norvig and Russell are AI heavyweights. Dr Norvig is currently Director of Research at Google, and Dr Russell is a Professor of Computer Science at UC Berkeley. They wrote the textbook on artificial intelligence used in more than 1100 universities in the world.

What intelligent systems need to possess

Each component outlined above serves a different purpose in creating a system that mimics human-like intelligence. Natural Language Processing allows a machine to communicate and receive information in an organic human form, rather than as unwieldy lines of code. Machine Learning gives it the ability to adapt to new circumstances and detect new patterns and facts.

Knowledge Representation and Automated Reasoning

As the lesser-known components of AI, Knowledge Representation and Automated Reasoning aren’t as commonly spoken about in the press but nonetheless play a key role in the creation of intelligent systems.

Knowledge Representation allows us to make sense of the complexity in information. I like to think of it as a “mental map” of how things relate to each other in the real world, and in what context.

In the world of bubbly alcoholic drinks, Champagne is a type of sparkling wine but it is also a region that spans across several departments in France. You only have to look at the number of disambiguation pages on Wikipedia to realize how many items share the same name but represent totally different concepts, which is something that a human can recognize naturally, but needs to be made explicit to a machine. As another example, consider “face” — this could be, among other things, a clock face, a person’s face, the side of a cliff, a magazine, an album or the verb representing the direction something is pointing.
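To make “explicit to a machine” a little more concrete, here is a minimal sketch of one way to separate a shared name from the distinct concepts behind it. The `Concept` class, the `senses` helper and every identifier such as `champagne_wine` are made up for illustration; a real knowledge representation would carry far more structure than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Concept:
    """One distinct meaning. The id is unique even when the label is shared."""
    id: str
    label: str
    kind: str


# Hypothetical identifiers, chosen only for this illustration.
CONCEPTS = [
    Concept("champagne_wine", "Champagne", "sparkling wine"),
    Concept("champagne_region", "Champagne", "wine region"),
    Concept("face_clock", "face", "part of a clock"),
    Concept("face_person", "face", "part of the body"),
    Concept("face_cliff", "face", "side of a cliff"),
]


def senses(label: str) -> list[Concept]:
    """Return every concept that shares the given surface label."""
    return [c for c in CONCEPTS if c.label.lower() == label.lower()]


for concept in senses("Champagne"):
    print(f"{concept.id}: {concept.kind}")
```

The point of the sketch is only that the machine stores two entries where a human sees one word; choosing between them in a given sentence is a separate problem.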

So now that we have a model of how the world works, what is Automated Reasoning? Similar to the System 2 thinking that Kahneman describes, automated reasoning capabilities allow a system to “fill in the blanks”, as there is no such thing as complete information or data with no gaps. So when tasked with finding out in what country Dom Perignon is made, the system would be capable of automatically inferring that it is France. The logic chain here would be: Dom Perignon is a type of champagne, and champagne is only produced in the region of Champagne, which lies in the country of France.
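The logic chain above can be sketched in a few lines. This is a toy illustration and not how any particular reasoning engine works: the triple format, the relation names (`is_a`, `produced_in`, `located_in`) and the `country_of_origin` function are all assumptions of mine.

```python
# Facts as (subject, relation, object) triples, taken from the example above.
# The relation names are invented for this sketch.
FACTS = {
    ("Dom Perignon", "is_a", "champagne"),
    ("champagne", "produced_in", "Champagne region"),
    ("Champagne region", "located_in", "France"),
    ("France", "is_a", "country"),
}


def objects(subject, relation):
    """All objects linked to `subject` by `relation`."""
    return {o for s, r, o in FACTS if s == subject and r == relation}


def country_of_origin(product):
    """Follow is_a / produced_in / located_in links until a country is reached."""
    frontier = [product]
    seen = set()
    while frontier:
        node = frontier.pop()
        if "country" in objects(node, "is_a"):
            return node
        if node in seen:
            continue
        seen.add(node)
        for relation in ("is_a", "produced_in", "located_in"):
            frontier.extend(objects(node, relation))
    return None  # the stored facts do not reach any country


print(country_of_origin("Dom Perignon"))  # France
```

Nowhere do the facts state that Dom Perignon comes from France; the answer only appears once the three links are chained together, which is the “filling in the blanks” part.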

Knowledge Representation + Automated Reasoning = Knowledge Base

Just as behind every winning trivia/pub quiz team lies a knowledge base composed of multiple human brains, there is a synthetic knowledge base behind an intelligent system. It stores complex information, answers queries and infers new facts from existing data. This doesn’t mean that you don’t need NLP and ML capabilities to communicate effectively and to ingest new information; these are crucial elements of any intelligent system. However, an intelligent storage layer, where knowledge can be stored and retrieved efficiently and systematically, is also fundamental.
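As a rough sketch of those three jobs (store, answer, infer), a toy knowledge base might look like the following. The `KnowledgeBase` class, its method names and the single hard-coded rule (that `located_in` is transitive) are assumptions for illustration; real systems use far richer schemas and rule languages.

```python
class KnowledgeBase:
    """Toy triple store with one hard-coded inference rule (an assumption)."""

    def __init__(self):
        self.facts = set()

    def add(self, subject, relation, obj):
        """Store one fact."""
        self.facts.add((subject, relation, obj))

    def ask(self, subject=None, relation=None, obj=None):
        """Return stored triples matching the pattern; None is a wildcard."""
        return {
            (s, r, o)
            for s, r, o in self.facts
            if subject in (None, s) and relation in (None, r) and obj in (None, o)
        }

    def infer(self):
        """Apply 'X located_in Y and Y located_in Z => X located_in Z'
        until nothing new appears. Returns the number of facts added."""
        added = 0
        while True:
            new = {
                (a, "located_in", c)
                for a, r1, b in self.facts if r1 == "located_in"
                for b2, r2, c in self.facts if r2 == "located_in" and b2 == b
            } - self.facts
            if not new:
                return added
            self.facts |= new
            added += len(new)


kb = KnowledgeBase()
kb.add("Champagne region", "located_in", "France")
kb.add("France", "located_in", "Europe")
kb.infer()
# The query below now matches a fact that was never entered by hand.
print(kb.ask(subject="Champagne region", obj="Europe"))
```

Here the inferred facts are written back into the store so that later queries are simple lookups. Deriving them on demand at query time is the other common choice, and which one fits better depends on how often the data changes.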
