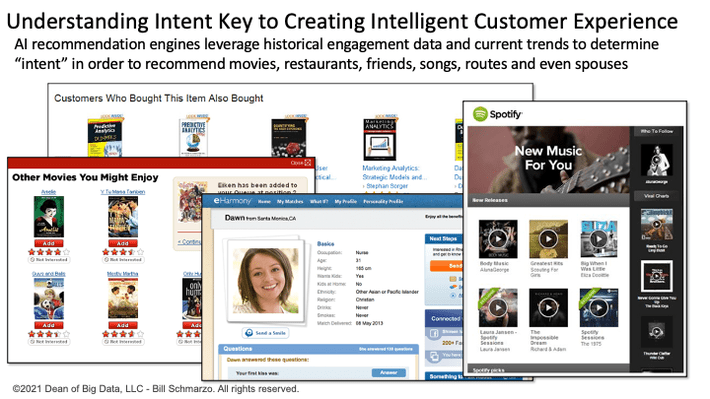

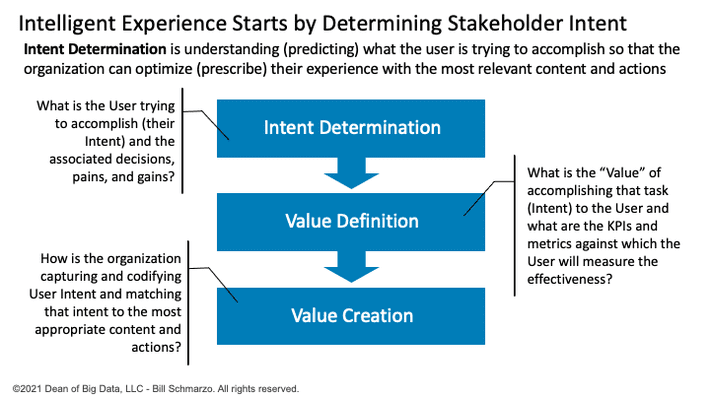

In my blog “The Importance of Determining Intent”, I discussed the importance of determining user intent to create an “intelligent” user or stakeholder experience. Analytics-centric organizations specialize in determining and codifying a user’s intent in order to provide a more engaging, relevant, hyper-personalized experience (Figure 1).

Figure 1: Using “Intent Determination” to Create an Intelligent Customer Experience

Creating an “intelligent” user experience requires leveraging AI/ML to analyze a deep history of the user’s interactions to determine the user’s intentions. Those intentions are then coupled with current trends, patterns, and relationships, and matched against a deep understanding of the available content, to recommend the most relevant action.

We reviewed how digital marketing companies, such as those featured in Figure 1, determine user intent. These companies accumulate a deep history of each individual user’s interactions, including what sites or content they visited or viewed, how long they spent with each site or piece of content, what they clicked on, what they did not click on, and their contextual search requests. They analyze the user’s interaction history, and match that with current trends and behaviors of similar cohorts, to determine and codify (think propensity scores) the user’s intentions (areas of interest) that drive real-time recommendation decisions.
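To make “codify (think propensity scores)” concrete, here is a minimal sketch of turning an interaction history into per-topic propensity scores. The `Interaction` fields, the 30-day half-life, and the engagement weights are all assumptions chosen for illustration; they are not how any particular company computes its scores.

```python
import math
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Interaction:
    topic: str            # area of interest the content belongs to (hypothetical field)
    dwell_seconds: float  # how long the user spent with the content
    clicked: bool         # whether the user clicked through
    days_ago: float       # age of the interaction in days


def propensity_scores(history, half_life_days=30.0):
    """Return a topic -> score mapping whose values sum to 1."""
    raw = defaultdict(float)
    for event in history:
        # Recent interactions count more: the weight halves every half_life_days.
        recency = 0.5 ** (event.days_ago / half_life_days)
        # Log-damped dwell time plus a click bonus (both weights are assumptions).
        engagement = math.log1p(event.dwell_seconds) + (1.0 if event.clicked else 0.0)
        raw[event.topic] += recency * engagement
    total = sum(raw.values())
    if total == 0:
        return {}
    return {topic: score / total for topic, score in raw.items()}


history = [
    Interaction("cooking", dwell_seconds=120, clicked=True, days_ago=1),
    Interaction("travel", dwell_seconds=10, clicked=False, days_ago=60),
]
scores = propensity_scores(history)
```

In this sketch a long, recent, clicked interaction with cooking content outweighs a brief, two-month-old glance at travel content, so `scores["cooking"]` is the larger of the two.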

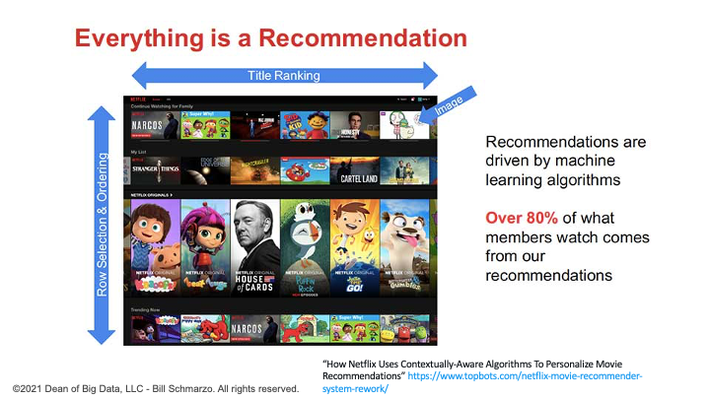

For example, the Netflix recommendation system gathers interaction data from each of its customers. Every time you press play and spend some time watching a TV show or a movie, Netflix is collecting data that informs the algorithm and refreshes it. The more you watch, the more accurate the algorithm becomes in understanding your likely viewing intent (Figure 2).

Figure 2: How the Netflix Recommendation Engine Works

What can we in the corporate world learn from these B2C-centric organizations? Success in determining user intent and creating an intelligent user experience starts with data observability.

What is Data Observability?

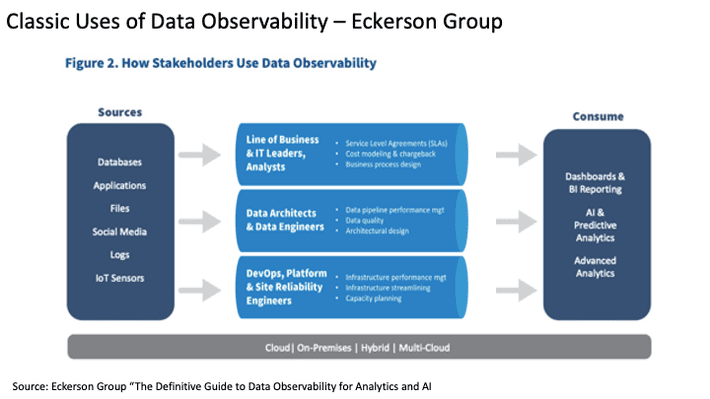

“Data observability means that business and IT can monitor, detect, predict, prevent, and resolve issues from source to consumption across the enterprise data pipelines that power analytics and AI workloads.” – Eckerson Group “The Definitive Guide to Data Observability for Analytics and AI”

Typical components of data observability include[1]:

- Instrumentation (Tagging): Instrumentation is the ability to collect system telemetry data to monitor and measure performance, detect errors, and capture the system and user engagement data that represents the application’s state.

- Event and engagement correlation: The telemetry data collected from across your system is processed and correlated, which creates context and enables automated or custom data curation for time series visualizations.

- Labelled outcomes: Outcomes are captured and labelled so that ML models can correlate events and actions to those outcomes and attribute the value of each outcome to the individual events and actions, or collections of them, that led to it.

- AI-driven recommendations: AI / ML models leverage the correlations of the labelled events at the individual entity level to drive customer, product, and operational recommendations.
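The first three components above can be sketched in a few lines. This is only an illustration under stated assumptions: the event names, session IDs, and outcome labels are hypothetical, and the per-event success rate is a deliberately naive stand-in for the ML-based attribution described in the list.

```python
from collections import defaultdict

# Instrumentation: hypothetical telemetry as (session_id, timestamp, event_name).
events = [
    ("s1", 1, "search"),
    ("s1", 2, "view_dashboard"),
    ("s1", 3, "export_report"),
    ("s2", 1, "search"),
    ("s2", 2, "search"),
    ("s3", 1, "view_dashboard"),
    ("s3", 2, "export_report"),
]

# Labelled outcomes: did the user get the job done in that session? (assumed labels)
outcomes = {"s1": True, "s2": False, "s3": True}


def correlate(events):
    """Correlation: group events by session, ordered by timestamp."""
    sessions = defaultdict(list)
    for session_id, _, name in sorted(events, key=lambda e: (e[0], e[1])):
        sessions[session_id].append(name)
    return dict(sessions)


def success_rate_by_event(sessions, outcomes):
    """Naive attribution: share of sessions containing an event that succeeded."""
    seen = defaultdict(int)
    won = defaultdict(int)
    for session_id, names in sessions.items():
        for name in set(names):
            seen[name] += 1
            won[name] += int(outcomes.get(session_id, False))
    return {name: won[name] / seen[name] for name in seen}


sessions = correlate(events)
rates = success_rate_by_event(sessions, outcomes)
```

Here sessions that include `export_report` always end in a labelled success, while sessions that include `search` succeed only half the time. Rates like these are the kind of raw signal a recommendation model could consume.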

Figure 3 shows the classic data observability use cases, courtesy of the Eckerson Group.

Figure 3: Classic Uses of Data Observability – Eckerson Group

This is a great foundation, but we must take Data Observability one step further. We can expand Data Observability beyond optimizing system performance by instrumenting or tagging the environment to understand how our individual users are interacting with the system. That lets us determine user intent, around which we can provide a more relevant, more meaningful user experience.

What can we learn from the world of web analytics and digital engagement about instrumenting their environments to determine user intent?

Web Analytics Learnings

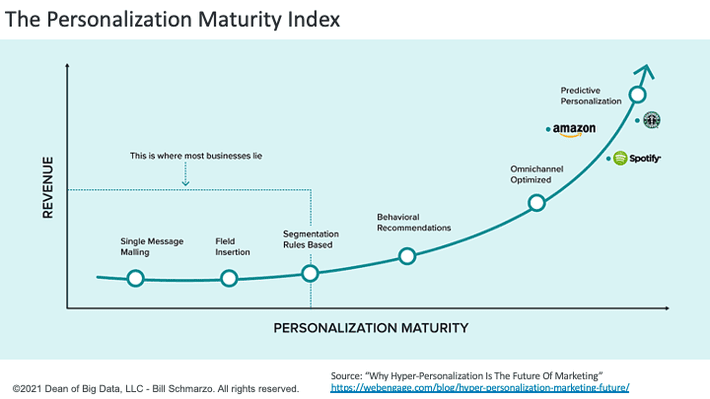

In web analytics, a tag is a small piece of code inserted in the page’s source code. Tagging facilitates data collection and sharing between your website (or your app) and the various technologies, so you can observe your users and determine what they’re trying to do (intent). All this data and analysis is in support of hyper-personalization, a more advanced next step beyond personalized marketing that leverages AI and real-time streaming data to supply more relevant content, product, and service information to every user (Figure 4).

Figure 4: The Personalization Maturity Index

For example, to support its hyper-personalization efforts, Amazon leverages a multitude of user engagement data, including full name, search query, average time spent on search, past purchase history, brand affinity, category browsing habits, time of past purchases, average spend amount, and more.

Then Amazon’s recommendation engine algorithm makes individualized product recommendations based on four data points[2]:

- Your previous purchase history

- The items that you have in your shopping cart

- Items that you have rated and liked

- Items that have been liked and purchased by other customers
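As a rough illustration of how four signals like these could be combined, the sketch below scores candidate items by how strongly other customers’ baskets overlap with what the user has purchased, carted, or liked. This is not Amazon’s algorithm; the function name, the signal weights, and the sample data are all assumptions.

```python
from collections import Counter


def recommend(purchased, cart, liked, other_baskets, top_n=3):
    """Rank items new to the user by weighted overlap with other customers' baskets."""
    # Signal weights are illustrative assumptions, not values from any real system.
    seeds = Counter()
    for item in purchased:
        seeds[item] += 1.0
    for item in cart:
        seeds[item] += 2.0
    for item in liked:
        seeds[item] += 1.5

    known = set(seeds)  # items the user has already interacted with
    scores = Counter()
    for basket in other_baskets:
        basket = set(basket)
        # How strongly this other customer's basket matches the user's signals.
        overlap = sum(seeds[item] for item in basket if item in seeds)
        if overlap == 0:
            continue
        for item in basket - known:
            scores[item] += overlap
    return [item for item, _ in scores.most_common(top_n)]


picks = recommend(
    purchased=["tent"],
    cart=["stove"],
    liked=["lantern"],
    other_baskets=[
        ["tent", "stove", "sleeping bag"],
        ["lantern", "sleeping bag", "headlamp"],
        ["novel", "bookmark"],
    ],
)
```

With this sample data the sleeping bag ranks first because it appears alongside all three of the user’s signals, the headlamp ranks second, and the unrelated basket contributes nothing.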

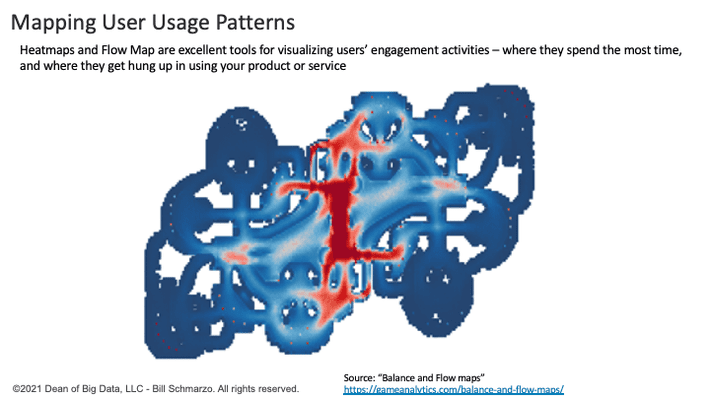

Some digital media companies also employ user engagement heat maps and flow maps to guide their personalization efforts. Heat maps and flow maps are excellent tools for visualizing spatial user behavioral data. For example, flow maps are used for evaluating death events in video games to alleviate player frustration which might lead to game abandonment (Figure 5).

Figure 5: Mapping Users’ Usage Patterns

And while our data consumers may not have death events (though sitting there and waiting for your query to return can certainly feel like a death event), the ability to get the right data to our users in a timely manner can impact the user experience.
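At its simplest, a heat map is a count of events per cell of a grid. As a sketch of what the data-consumer analogue might look like, the snippet below counts slow queries per dashboard and hour of day. The log format, the dashboard names, and the 10-second threshold are hypothetical.

```python
from collections import Counter


def slow_query_heatmap(query_log, threshold_seconds=10.0):
    """Count slow queries per (dashboard, hour_of_day) cell."""
    cells = Counter()
    for dashboard, hour, wait_seconds in query_log:
        if wait_seconds >= threshold_seconds:
            cells[(dashboard, hour)] += 1
    return cells


# Hypothetical query log: (dashboard, hour_of_day, wait_seconds).
query_log = [
    ("sales", 9, 14.2),
    ("sales", 9, 11.0),
    ("sales", 14, 2.1),
    ("inventory", 9, 3.4),
    ("inventory", 16, 25.7),
]

heatmap = slow_query_heatmap(query_log)
hottest_cell, hottest_count = heatmap.most_common(1)[0]
```

In this sample the hottest cell is the sales dashboard at 9 a.m., which is where a team might look first for users at risk of abandoning their work.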

Data Observability Summary

Data Observability in support of an intelligent user experience means capturing and monitoring the interactions that result from the operations of a system or ecosystem. Those interactions are used to uncover and codify customer, product, and operational insights (predicted behavioral and performance propensities), to predict what’s likely to happen next, and to prescribe recommended actions that optimize the operations of the system or ecosystem through continuous learning and adaptation.

If our goal is to create an intelligent user experience that helps our users accomplish the jobs at hand with accurate data and analytics in a timely manner, then data observability is the starting point for determining the intent necessary for creating that intelligent user experience (Figure 6).

Figure 6: Role of Intent to Create an Intelligent User Experience

Determining User Intent is the starting point for creating that intelligent user experience, and data observability at scale is a “must have” to make that happen.

[1] Splunk: “What is observability?” https://www.splunk.com/en_us/data-insider/what-is-observability.html

[2] “Why Hyper-Personalization Is the Future of Marketing” https://webengage.com/blog/hyper-personalization-marketing-future/