Artificial Intelligence – Cloud and Edge implementations takes an engineering-led approach for the deployment of AI to Edge devices within the framework of the cloud.

We often use the word ‘engineering’ in casual conversation. However, in this context, we attach a specific meaning to Engineering. Engineering is the use of scientific principles to design and build machines, structures, and other items, including bridges, tunnels, roads, vehicles, and buildings. The American Engineers’ Council for Professional Development defines engineering as: (specific emphasis of interest highlighted)

The creative application of scientific principles to design or develop structures, machines, apparatus, or manufacturing processes, or works utilizing them singly or in combination; or to construct or operate the same with full cognizance of their design; or to forecast their behavior under specific operating conditions; all as respects an intended function, economics of operation and safety to life and property.

Engineering has many disciplines, such as mechanical engineering and chemical engineering.

(definitions and descriptions of engineering adapted from Wikipedia)

When we consider the deployment of AI to Edge devices, however, we take an interdisciplinary engineering approach. AI on Edge devices spans many areas, such as drones, Edge analytics and embedded FPGAs.

Engineering Methodology

Some initial comments:

- Science seeks boundary conditions so that the body of knowledge can be expanded beyond these conditions in an incremental manner. Engineers identify boundaries (constraints) and work within these constraints to create a product that can be used by people to solve problems.

- Science takes major steps forward through paradigm shifts (scientific revolutions), as postulated by Kuhn.

- Engineering also creates its own body of new knowledge, which includes applied science but is not limited to it (see What Engineers Know and How They Know It). Both science and engineering use problem-solving tools such as TRIZ.

Thus:

- Engineers apply mathematics and sciences to find novel solutions to problems or to improve existing solutions.

- If multiple solutions exist, engineers weigh each design choice on its merits and choose the solution that best matches the requirements.

- Engineers identify, understand, and interpret the constraints on a design in order to yield a successful result. Constraints may include available resources, physical, imaginative or technical limitations, flexibility for future modifications and additions, and other factors, such as requirements for cost, safety, marketability, productivity, and serviceability.

- The technical solution is just the first step. It is generally insufficient to build a technically successful product; it must also meet further requirements.

- By understanding the constraints, engineers derive specifications for the limits within which a viable object or system may be produced and operated.

- Unlike scientists, engineers operate within a social context. By its very nature, engineering has interconnections with society, culture and human behavior. The results of engineering activity influence changes to the environment, society and economies, and its application brings with it a responsibility for public safety.

- To embody an invention, the engineer must put the idea in concrete terms and design something that people can use.

- Since a design should be realistic and functional, it must have its geometry, dimensions, and characteristics data defined.

- Engineering relates to art and aesthetics, for example in the work of Leonardo da Vinci and the approach of Steve Jobs.

(above section adapted from Wikipedia)

Implications for AI and Edge

Looking back at the definition of Engineering above, we can infer some key themes which apply to the deployment of AI and Edge computing in the cloud:

- Creative application of technology (in our case AI, Edge, Cloud)

- Solve business problems i.e. understanding business problems and using AI, Edge and Cloud to improve a business process (Digital transformation)

- Applying to a value chain (end to end deployment of AI)

- Design considerations including performance considerations

- Working within constraints / tolerances and specific operating conditions

- Considering safety and security

- Understanding scale

- Working with physical constraints – for example device constraints

- Understanding and managing the full value chain across multiple platforms

- A design driven by AI i.e. self-learning

- Edge (IoT) provides a feedback loop that drives the process

- The model is trained in the cloud and deployed on the edge

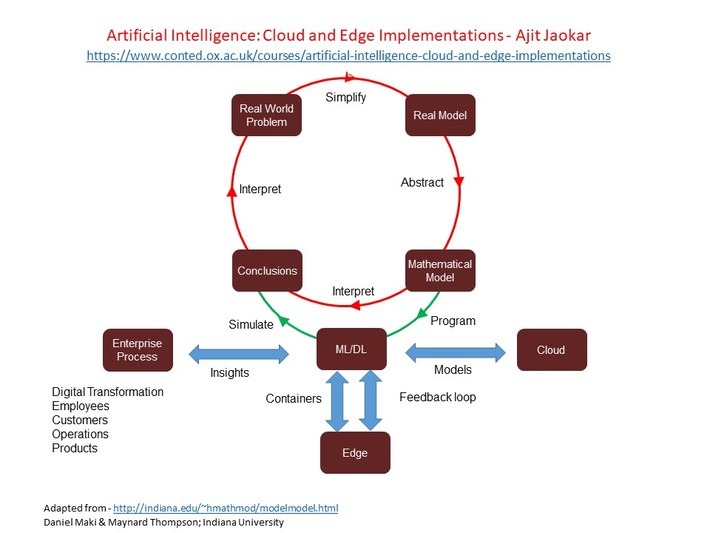

Hence, we have a big-picture view as below. We model problems in the real world through ML/DL algorithms and implement the model through AI and Cloud. The model is deployed on the edge, and the edge device provides a feedback loop to improve the business process.

In this model, IoT / Edge extends beyond basic telemetry. The telemetry function captures data from edge devices and stores it in a database in the cloud. We can then perform analytics on that data and build models based on it. The models are trained in the cloud and deployed to edge devices. The architecture could also include streaming analytics and microservices/serverless design principles. Finally, the CICD / DevOps philosophy is a key part of the process, as we explain below.
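The loop described above (telemetry to the cloud, training in the cloud, deployment to the edge, feedback from the edge) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference architecture: the names `train_in_cloud`, `package_model` and `EdgeDevice` are hypothetical, the "model" is a one-variable least-squares line standing in for an ML/DL model, and a JSON string stands in for the deployable artifact.

```python
import json
import statistics


def train_in_cloud(telemetry):
    """Cloud step: fit y = slope * x + intercept by ordinary least squares.

    `telemetry` is a list of (x, y) readings collected from edge devices.
    """
    xs = [x for x, _ in telemetry]
    ys = [y for _, y in telemetry]
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in telemetry)
    slope = sxy / sxx
    return {"slope": slope, "intercept": mean_y - slope * mean_x}


def package_model(model, version):
    """Serialize the trained parameters into a deployable artifact (assumed JSON)."""
    return json.dumps({"version": version, "params": model})


class EdgeDevice:
    """Hypothetical edge device: runs inference locally and queues feedback."""

    def __init__(self):
        self.version = None
        self.params = None
        self.outbox = []  # readings waiting to be sent back to the cloud

    def deploy(self, artifact):
        payload = json.loads(artifact)
        self.version = payload["version"]
        self.params = payload["params"]

    def predict(self, x):
        return self.params["slope"] * x + self.params["intercept"]

    def observe(self, x, y):
        """Record a real-world reading and return the absolute prediction error."""
        self.outbox.append((x, y))
        return abs(self.predict(x) - y)


if __name__ == "__main__":
    telemetry = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
    device = EdgeDevice()
    device.deploy(package_model(train_in_cloud(telemetry), version=1))

    # Feedback loop: the device reports a new reading, the cloud retrains,
    # and the updated model is pushed back to the edge.
    error = device.observe(4.0, 9.5)
    telemetry.extend(device.outbox)
    device.deploy(package_model(train_in_cloud(telemetry), version=2))
    print(f"error before retraining: {error}, deployed version: {device.version}")
```

In a real system the training step would run on cloud infrastructure and the artifact would travel over a network; the sketch only shows how the responsibilities divide between cloud and edge.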

CICD for Edge devices

In this vision, containers are central to the whole process.

When deployed to edge devices, containers can encapsulate deployment environments for a range of diverse hardware.

CICD (continuous integration – continuous deployment) is a logical extension to containers on edge devices. Essentially, CICD and the DevOps philosophy streamline software development. The CI (continuous integration) part improves the quality of the software through collaboration and automated testing. The CD (continuous deployment) part enables you to rapidly update Edge devices, either for patch / code updates or for model updates.

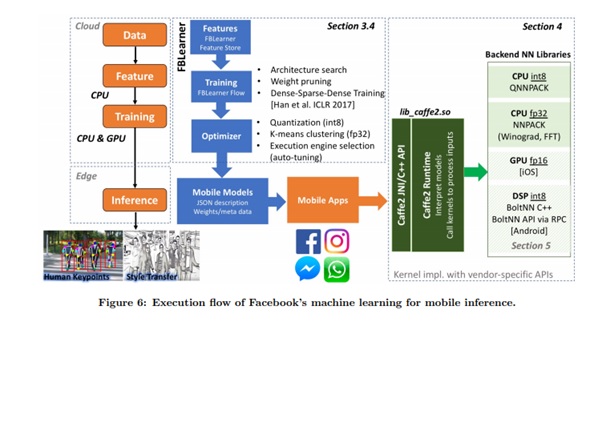
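To make the CD step concrete, the following sketch shows one way an edge device might decide whether to apply an incoming code or model update. It is an illustration under assumptions rather than a description of any particular tool: `Release`, `make_release` and `EdgeAgent` are hypothetical names, versions are modelled as tuples, and a SHA-256 checksum stands in for whatever integrity check a real pipeline would use.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Release:
    """Hypothetical update published by the CD pipeline."""
    kind: str        # "code" or "model"
    version: tuple   # e.g. (1, 4, 0); tuples compare element by element
    payload: bytes
    sha256: str      # checksum computed when the release was built


def make_release(kind, version, payload):
    """Build a release and record the checksum of its payload."""
    return Release(kind, version, payload, hashlib.sha256(payload).hexdigest())


class EdgeAgent:
    """Hypothetical on-device agent that installs verified, newer releases."""

    def __init__(self):
        self.installed = {}  # kind -> currently installed Release

    def apply(self, release):
        # Reject payloads that were corrupted or tampered with in transit.
        if hashlib.sha256(release.payload).hexdigest() != release.sha256:
            return "rejected: checksum mismatch"
        # Code and model updates are tracked independently of each other.
        current = self.installed.get(release.kind)
        if current is not None and release.version <= current.version:
            return "skipped: not newer"
        self.installed[release.kind] = release
        return "installed"


if __name__ == "__main__":
    agent = EdgeAgent()
    print(agent.apply(make_release("model", (1, 0, 0), b"weights-v1")))
    print(agent.apply(make_release("model", (1, 0, 0), b"weights-v1")))
    print(agent.apply(make_release("code", (2, 1, 0), b"patched-binary")))
```

Tracking code and model releases separately reflects the point above that the two kinds of update can follow different schedules.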

Edge devices also need to cater for a wide range of execution environments, such as CPUs, GPUs and FPGAs. Containers lend themselves well to this philosophy. We can see this in the Facebook paper "Machine Learning at Facebook: Understanding Inference at the Edge", illustrated below.

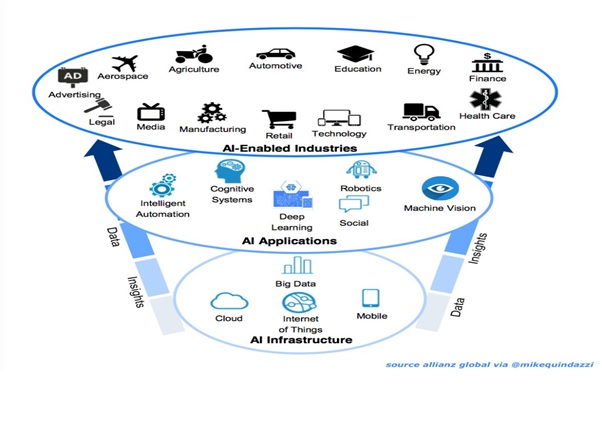
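One way containers can accommodate this hardware diversity is to publish one image variant per execution environment and let each device pick the variant that matches what it has. The sketch below assumes that approach; the image names, the `registry.example.com` registry, and the GPU-then-FPGA-then-CPU preference order are all hypothetical and chosen only for illustration.

```python
# Hypothetical image variants, one per execution environment.
IMAGE_VARIANTS = {
    "gpu": "registry.example.com/vision-model:1.0-gpu",
    "fpga": "registry.example.com/vision-model:1.0-fpga",
    "cpu": "registry.example.com/vision-model:1.0-cpu",
}

# Assumed preference order: use an accelerator when one is present.
PREFERENCE = ("gpu", "fpga", "cpu")


def select_image(available, variants=IMAGE_VARIANTS):
    """Return the image for the most preferred environment the device offers.

    `available` is the set of execution environments detected on the device.
    """
    for target in PREFERENCE:
        if target in available and target in variants:
            return variants[target]
    raise LookupError(f"no image variant for environments: {sorted(available)}")


if __name__ == "__main__":
    print(select_image({"cpu"}))          # CPU-only device
    print(select_image({"cpu", "fpga"}))  # device with an embedded FPGA
```

The same CICD pipeline can then build and ship every variant, while the selection logic stays on the device.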

Ultimately, we see this philosophy (AI + Cloud + Edge) deployed as containers in CICD mode to transform whole industries as industry-specific containers – spanning cloud and the edge.

Conclusion

Artificial Intelligence – Cloud and Edge implementations takes an engineering-led approach for the deployment of AI to Edge devices within the framework of the cloud. The interplay between AI, Cloud and Edge is a rapidly evolving domain. Ultimately, we see this philosophy (AI + Cloud + Edge) deployed as containers in CICD mode to transform whole industries as industry-specific containers – spanning cloud and the edge.