- The LIGO observatory can detect astronomical events from billions of light years away.

- Terabytes of complex daily data make human analysis impossible.

- New study applies a neural network, reaching up to 97% classification accuracy.

Caltech/MIT’s LIGO, the largest gravitational-wave observatory in the world, records minute ripples in spacetime produced by cataclysmic astronomical events such as colliding black holes or supernovae. Accurately classifying LIGO’s data as either an event of interest or an unknown “glitch” is challenging because of the sheer volume and complexity of the data the observatory collects. A recent dissertation by Columbia University’s Robert Colgan [1] proposes a neural network that separates non-astrophysical glitches with significantly higher classification accuracy than previous methods.

About gravitational waves and glitches

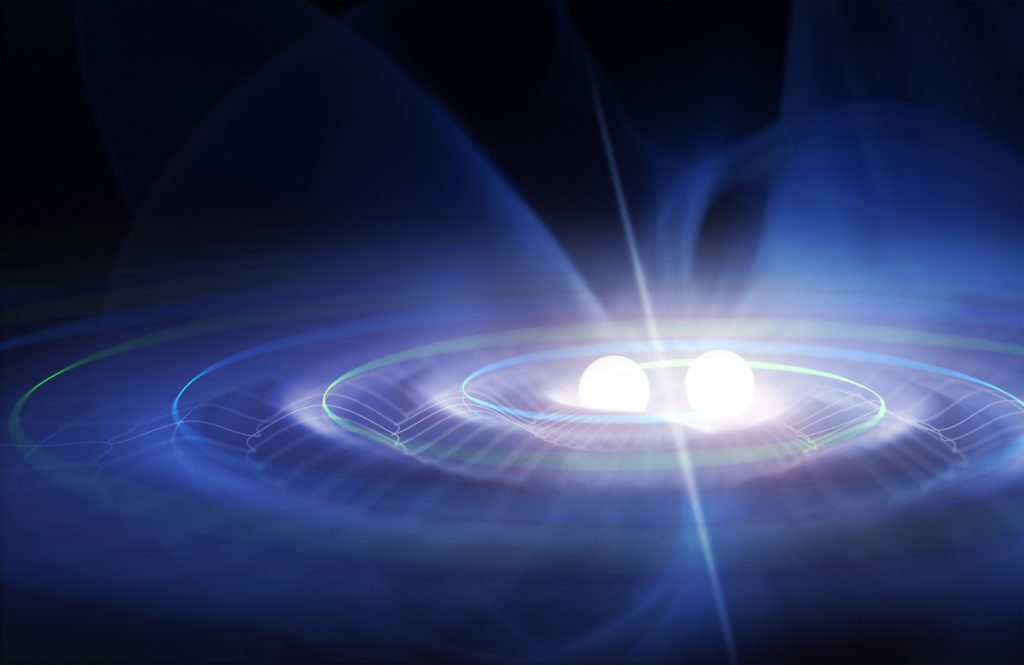

Gravitational waves, predicted by Einstein’s general theory of relativity, are produced when massive objects such as black holes accelerate and disturb the curvature of spacetime. The waves ripple through the universe at the speed of light, alternately stretching and compressing distances as they pass.
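The stretching is usually quantified as a dimensionless strain h, the fractional change ΔL in a length L. As a back-of-the-envelope example, assume a strain of order 10^-21 (roughly the peak amplitude reported for the 2015 detection) acting on one of LIGO’s 4 km arms:

```latex
h = \frac{\Delta L}{L}
\quad\Longrightarrow\quad
\Delta L = hL \approx \left(10^{-21}\right)\left(4\times10^{3}\ \mathrm{m}\right) = 4\times10^{-18}\ \mathrm{m}
```

That change is far smaller than a proton, which is why the instrument must be extraordinarily sensitive.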

In 2015, LIGO made history by directly detecting gravitational waves for the first time, from two colliding black holes almost 1.3 billion light-years away [2]. Identifying the event was no easy feat: terabytes of astronomical and non-astronomical data collected by LIGO’s twin detectors, each with 4 km-long vacuum arms, had to be analyzed and sorted.

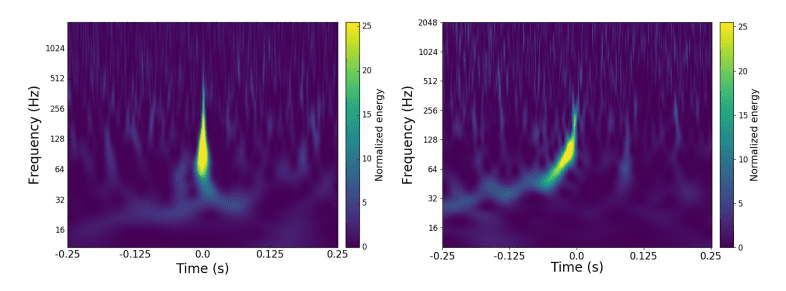

LIGO is so sensitive that it picks up not only gravitational radiation but also noise from a wide range of sources. Stationary background noise, such as interference from power lines, is relatively easy to identify and remove by subtraction [3]. However, non-astrophysical transient noise, or “glitches”, caused by anything from ringing telephones to pecking birds, poses a significant challenge for classifiers because its signature can resemble that of a gravitational wave.
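Subtracting stationary noise can be sketched as a regression against a “witness” channel that records the noise source directly. The toy example below assumes a hypothetical 60 Hz power-line monitor, a purely linear coupling into the strain channel, and synthetic data; the helper `subtract_witness` is illustrative and is not LIGO’s pipeline (see [3] for the technique applied to real data).

```python
import numpy as np


def subtract_witness(strain: np.ndarray, witness: np.ndarray) -> np.ndarray:
    """Remove the part of `strain` that is linear in `witness` (plus an offset).

    Fits strain ~ a * witness + b by least squares and returns the residual.
    """
    design = np.column_stack([witness, np.ones_like(witness)])
    coeffs, *_ = np.linalg.lstsq(design, strain, rcond=None)
    return strain - design @ coeffs


rng = np.random.default_rng(0)
fs = 4096                                        # assumed sample rate in Hz
t = np.arange(fs) / fs                           # one second of data
mains = np.sin(2 * np.pi * 60 * t)               # hypothetical 60 Hz witness channel
background = 0.1 * rng.standard_normal(t.size)   # everything else in the strain
strain = background + 0.8 * mains                # assumed linear coupling

cleaned = subtract_witness(strain, mains)
print(f"std before: {strain.std():.3f}, after: {cleaned.std():.3f}")
```

The approach relies on the coupling staying fixed over the fitting window, which is exactly what stationary noise provides.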

Machine learning and classification for glitches

No human team could analyze or comprehend the volume of data LIGO produces, which makes machine learning a necessity for classification. Glitches are usually identified by searching the gravitational-wave data stream itself for spikes in power. Colgan’s study took a different approach: its classifier predicted whether a glitch was occurring using only data from the detector’s auxiliary channels.
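The “power spike” approach can be sketched as an excess-power threshold on the strain stream. In this hypothetical helper, the data are cut into fixed windows, each window’s mean power is computed, and windows far above the typical level are flagged. The window length, the threshold `k`, and the median/MAD statistic are illustrative choices, not the study’s. In a setup like Colgan’s, flags of this kind would supply the glitch labels, while the classifier’s inputs come only from auxiliary channels.

```python
import numpy as np


def flag_power_spikes(strain: np.ndarray, window: int, k: float = 5.0) -> np.ndarray:
    """Label each non-overlapping window True if its mean power is an outlier.

    A window is flagged when its power exceeds the median window power by more
    than k robust standard deviations (1.4826 * median absolute deviation).
    """
    n_windows = strain.size // window
    segments = strain[: n_windows * window].reshape(n_windows, window)
    power = np.mean(segments**2, axis=1)
    median = np.median(power)
    robust_sigma = 1.4826 * np.median(np.abs(power - median))
    return power > median + k * robust_sigma


rng = np.random.default_rng(1)
window = 256
strain = rng.standard_normal(100 * window)           # synthetic Gaussian noise
burst = slice(42 * window, 43 * window)
strain[burst] += 4.0 * rng.standard_normal(window)   # hypothetical loud glitch
labels = flag_power_spikes(strain, window, k=8.0)
print(np.flatnonzero(labels))
```

The median and MAD are used instead of the mean and standard deviation so that the glitch itself does not inflate the threshold it is tested against.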

More than 200,000 auxiliary time series are recorded continuously, covering internal components and sensors, the environment in and around the detector, and various other metrics. Most of these channels track well-understood phenomena that produce consistent patterns, but around 10,000 of them are poorly understood and little studied. Colgan hoped to leverage this potentially useful information to provide additional corroboration for candidate glitches identified in the gravitational-wave data stream.
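Turning auxiliary channels into classifier inputs can be sketched as cutting a window of every channel around each time that has a glitch/no-glitch label. The function name `make_aux_dataset`, the symmetric window, and the tiny synthetic array (8 channels instead of more than 200,000) are assumptions made for illustration.

```python
import numpy as np


def make_aux_dataset(aux: np.ndarray, times: np.ndarray, labels: np.ndarray, half_width: int):
    """Cut a window of every auxiliary channel around each labeled time.

    aux:    (n_channels, n_samples) auxiliary time series
    times:  sample indices that have a label
    labels: 1 if the strain channel shows a glitch at that time, else 0
    Returns X with shape (n_kept, n_channels, 2 * half_width) and matching y.
    Times too close to either edge are dropped. The strain channel never enters X.
    """
    keep = (times >= half_width) & (times + half_width <= aux.shape[1])
    X = np.stack([aux[:, t - half_width : t + half_width] for t in times[keep]])
    return X, labels[keep]


rng = np.random.default_rng(2)
aux = rng.standard_normal((8, 10_000))   # 8 channels stand in for 200,000+
times = np.array([50, 2_000, 5_000, 9_990])
labels = np.array([1, 0, 1, 0])
X, y = make_aux_dataset(aux, times, labels, half_width=100)
```

Here the first and last labeled times sit too close to the edges of the record, so only the two middle examples survive.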

The first ML-based method Colgan describes is a model without a neural network that relies on hand-selected features. Although it achieved reasonable classification accuracy of up to 80%, the author notes that hand-picked features may not be optimal for every channel. Colgan then employed a convolutional neural network (CNN), which is not limited to hand-selected features. The lowest layer of the CNN can be thought of as an “automatic feature extractor” that learns which transformations of the input are most useful, a step missing from the hand-crafted feature model. The CNN gave remarkable results, with a test accuracy of 94.7%, a roughly 63% reduction in test error rate compared with the fixed-feature model.
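The contrast between fixed and learned features can be sketched in a few lines of NumPy. `handcrafted_features` computes the same summary statistics (mean, standard deviation, peak amplitude) for every channel, an assumed stand-in for hand-picked features. `conv_features` applies a bank of 1D kernels to each channel, followed by a ReLU and global max pooling, which mirrors the first stage of a CNN; in a real CNN the kernels are learned by gradient descent and usually mix channels, whereas here they are random and applied channel by channel. `relative_error_reduction` shows that an error-rate reduction is measured relative to the baseline’s error rate (1 − accuracy), not its accuracy. All three names are hypothetical.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def handcrafted_features(X: np.ndarray) -> np.ndarray:
    """Fixed summary statistics per channel. X has shape (n, channels, time)."""
    stats = [X.mean(axis=-1), X.std(axis=-1), np.abs(X).max(axis=-1)]
    return np.concatenate(stats, axis=1)                        # (n, 3 * channels)


def conv_features(X: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """Per-channel 1D convolution, ReLU, then global max pooling over time.

    kernels has shape (n_kernels, kernel_len); a trained CNN would learn them.
    """
    k = kernels.shape[1]
    patches = sliding_window_view(X, k, axis=-1)                # (n, c, t-k+1, k)
    responses = np.einsum("nctk,mk->nctm", patches, kernels)   # (n, c, t-k+1, m)
    activated = np.maximum(responses, 0.0)                      # ReLU
    pooled = activated.max(axis=2)                              # (n, c, m)
    return pooled.reshape(X.shape[0], -1)                       # (n, c * m)


def relative_error_reduction(acc_old: float, acc_new: float) -> float:
    """Fraction by which the error rate (1 - accuracy) falls."""
    err_old, err_new = 1.0 - acc_old, 1.0 - acc_new
    return (err_old - err_new) / err_old


rng = np.random.default_rng(0)
X = rng.standard_normal((4, 8, 200))     # 4 examples, 8 channels, 200 samples
kernels = rng.standard_normal((16, 9))   # 16 random (untrained) kernels
hand = handcrafted_features(X)
learned = conv_features(X, kernels)
reduction = relative_error_reduction(0.90, 0.95)   # error 10% -> 5%, about 0.5
```

Both feature functions return one fixed-length vector per example that a downstream classifier could consume; the difference is that only the convolutional weights can be tuned to the data.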

Limitations of CNNs for astronomical data

Tackling terabytes of daily data requires a deep model with many layers, which comes at the significant cost of more computational resources for training and inference. In addition, higher classification accuracy comes at the expense of clear interpretability by humans. This is a particular problem for LIGO data because the engineers and scientists responsible for diagnosing and eliminating glitches are not usually machine learning experts. As the author writes, raw accuracy is not always the most important goal, and a greater degree of “explainability” may be preferable to higher accuracy.

As a final note, Colgan writes that the methods he developed are not limited to gravitational-wave detectors: the approach is general and can be applied to any complex system with many input features. It therefore has the potential to be applied to other complex machines and applications that produce high-dimensional data.

References

[3] Improving the Sensitivity of Advanced LIGO Using Noise Subtraction