The question of Copyright protection is important for generative models

In this two part blog, I explore this question based on a paper called “Provable Copyright Protection for Generative Models”

To summarise the ideas in this paper:

- Generative models hold much promise for novel content creation(code, text, images, video)

- However, these models are trained on vast quantities of data, much of which is copyrighted.

- According to the paper, due to precedents such as Authors Guild vs Google, many legal scholars believe that training a machine-learning model on copyrighted material constitutes fair use.

- However, the question of the legal permissibility of using the sampled outputs of such models remains unsolved.

There are examples of this concern

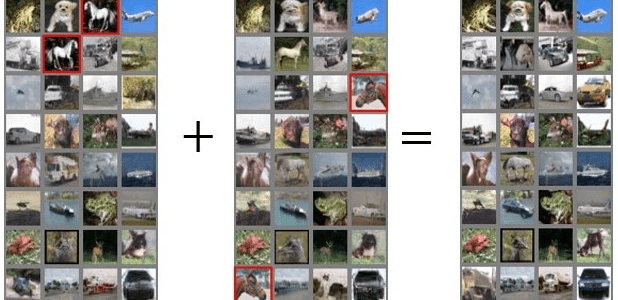

As shown by Carlini et al, diffusion models can (and do) memorize images from their training set as well; see this figure from their paper:

Source: Carlini et al.

Left: an image from Stable Diffusion’s training set (licensed CC BY-SA 3.0). Right: a Stable Diffusion generation when prompted with “Ann Graham Lotz.”

Hence, if we use generated code or images in our code, we cannot be sure if the image is substantially similar to copyrighted work from the training set.

According to the authors:

The paper provides a formalism that enables rigorous guarantees on the similarity (and, more importantly, guarantees on the lack of similarity) between the output of a generative model and any potentially copyrighted data in its training set.

They also give algorithms that can transform a training pipeline into one that satisfies our definition with minimal degradation in efficiency and quality of output.

The paper does not cover a number of ethical and legal issues in generative models such as intellectual property, including privacy, trademarks, or fair use.

Instead, the paper focuses solely only on copyright infringements by the outputs of these models.

In the second part of this blog, we will discuss the mechanisms outlined in this paper in more detail

References: https://windowsontheory.org/2023/02/21/provable-copyright-protection-for-generative-models