Summary: Whether you are a startup person or a data science-minded executive in a larger organization, what logic can you apply to spot the most compelling opportunities for AI in your organization?

In 2014 Kevin Kelly, co-founder of Wired magazine and prolific futurist, famously said, “The business plans of the next 10,000 startups are easy to forecast: Take X and add AI.” Kevin, you were clearly right. Silicon Valley and every other tech startup haven are awash in companies trying to fulfill his vision.

However, whether you are a startup person or a data science-minded executive in a larger organization, that’s not enough information to spot compelling opportunities. What rules or guidelines can you apply to identify these transformative applications?

Although there are, by my count, seven critical technologies in AI today (with neuromorphic computing on the horizon as number eight), only two or three are sufficiently developed to warrant widespread application.

- Convolutional Neural Nets have surpassed human ability to recognize and categorize still and video images.

- Recurrent Neural Nets have brought us to 99%+ accuracy in natural language processing and allow us to interact naturally with all manner of devices with simple speech.

- Question Answering Machines (QAMs) like Watson are the third. While these are ready to be commercialized, they require much more effort and upkeep than the first two. All the same, if you are willing to put in the effort, they are finding, and will continue to find, their way into our everyday lives.

After reviewing a large number of AI startups and listening to their founders, the VCs that fund them, and other thought leaders, it turns out there are three rules you can apply. Two relate to spotting tasks done by humans that can be replaced. The third deals with the fact that AI is now superior to humans in some circumstances.

One Second of Mental Attention – Andrew Ng

You should know the name Andrew Ng. He’s Chief Scientist at Baidu and co-founder of Coursera, the go-to MOOC for data science. At Baidu his primary focus is finding applications for AI, working through the industry verticals one at a time. On March 7 he gave an interview to the Wall Street Journal that revealed the secret of his AI selections.

Mr. Ng: “Things may change in the future, but one rule of thumb today is that almost anything that a typical person can do with less than one second of mental thought we can either now or in the very near future automate with AI. This is a far cry from all work. But there are a lot of jobs that can be accomplished by stringing together many one-second tasks.”

What are some examples of ‘one second tasks’?

Almost any work that requires a human to view and react to a video feed or another ‘single input’ situation qualifies:

- Security guards as they monitor security camera video feeds.

- Parking enforcement officers (meter maids) and the folks that collect coins from parking meters.

- Identification and rejection of faulty parts on an assembly line. (Automated inspection started several decades ago and really caught on with improvements in machine vision that were not related to AI.)

Many human tasks are wholly or partly strings of ‘one second tasks’ that taken together may look complex but can be deconstructed into a series of receive-input-and-respond steps. For example:

- Air traffic controllers.

- Heavy equipment operators.

- Warehouse pack and ship workers.
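
To make Ng’s rule concrete, here is a minimal Python sketch, assuming you can break a job into receive-input-and-respond steps and put a rough think-time estimate on each. The `Step` class, the `automatable_share` function, and the task timings are all hypothetical illustrations, not data from any study.

```python
from dataclasses import dataclass

# Threshold from Ng's rule of thumb: tasks a typical person can do with
# less than one second of thought are automation candidates.
ONE_SECOND = 1.0


@dataclass
class Step:
    name: str
    think_seconds: float  # hypothetical estimate of mental effort per step


def automatable_share(steps: list[Step], threshold: float = ONE_SECOND) -> float:
    """Return the fraction of steps that fall under the one-second threshold."""
    if not steps:
        return 0.0
    quick = sum(1 for s in steps if s.think_seconds < threshold)
    return quick / len(steps)


# Hypothetical breakdown of a warehouse pack-and-ship job;
# the timings are illustrative only.
pack_and_ship = [
    Step("read order label", 0.5),
    Step("pick matching item from bin", 0.8),
    Step("choose box size", 0.7),
    Step("resolve damaged-item complaint", 30.0),
]

print(f"{automatable_share(pack_and_ship):.0%} of steps are one-second tasks")
```

With these made-up numbers the script prints `75% of steps are one-second tasks`: the job as a whole is not automatable, but most of its atomic steps might be.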

Customer service reps (CSRs) of all stripes listen to a customer, categorize the problem into one of dozens or a few hundred similar problem-response pairs, and provide what is essentially a standardized answer, lightly (if at all) customized to the specific customer. Whereas the earlier examples relied more on visual inputs, this CSR example is already being exploited by QAMs like Watson.

For example, the City of Surrey, Canada, has converted from human CSRs to a Watson-based 311 system that answers citizens’ questions about government services (When is recycling pickup?). The app can answer more than 10,000 questions, more efficiently and at lower cost than humans. Here are 30 other examples of where Watson QAMs are being deployed and are already replacing low-skilled humans.
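
The core CSR step of matching a question to one of a fixed set of problem-response pairs can be sketched as a toy retrieval function. This is a minimal Python sketch assuming a simple word-overlap (Jaccard) score; it is not how Watson works, and the FAQ entries, answer texts, and function names are hypothetical.

```python
import re
from typing import Optional

# Hypothetical problem-response pairs; a real deployment would hold hundreds.
FAQ = {
    "when is recycling pickup": "Recycling is collected on your regular garbage day.",
    "how do i renew a dog license": "Dog licenses can be renewed online or at city hall.",
    "how do i report a pothole": "Report potholes through the 311 service request form.",
}


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Word-overlap similarity between two token sets (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def answer(question: str, min_score: float = 0.2) -> Optional[str]:
    """Return the canned answer whose stored question best matches, or None."""
    q = tokens(question)
    best_key = max(FAQ, key=lambda k: jaccard(q, tokens(k)))
    if jaccard(q, tokens(best_key)) < min_score:
        return None  # no confident match: hand off to a human CSR
    return FAQ[best_key]
```

Here `answer("When is the recycling pickup?")` returns the recycling entry, while an unrelated question falls below the (arbitrary) `min_score` threshold and returns `None`, which is where a human would step in.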

Analyzing the Atomic Level of Work – David Beyer

David Beyer is a serial entrepreneur, now a partner at the VC firm Amplify Partners. In a recent video interview with Jenn Webb for O’Reilly, he talked at length about his rules for identifying AI opportunities.

First, David makes an observation that may seem obvious but has probably been lost on some starry-eyed visionaries: exploit AI opportunities only insofar as they can be addressed by the current state of the technology.

It seems obvious, but coming from a VC it means he wouldn’t put his money behind an AI opportunity whose underlying technology wasn’t already well defined (see the three primary technologies described earlier).

This leaves out some opportunities that may look tempting using Generative Adversarial Networks, most reinforcement learning, and pretty much all of Spiking Neural Nets (neuromorphic computing). Yes, there are a handful of startups pursuing these, and the payoff is potentially large if only they can bring the underlying AI technology home first.

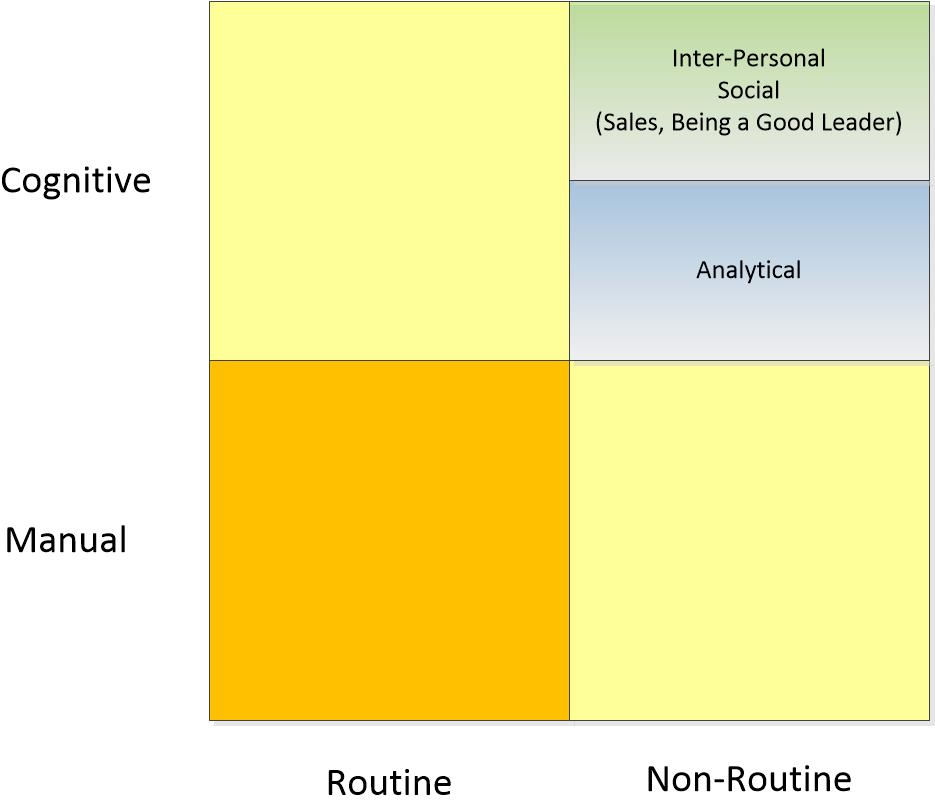

But perhaps the most interesting thought structure David provides is a way to deconstruct work to identify targets of opportunity. He uses this 2 x 2 matrix, which crosses Routine vs. Non-Routine work with Manual vs. Cognitive work.

Just as Andrew Ng suggested, an entire job may not fall homogeneously into a single quadrant. The skill is in dissecting the work into its atomic components.

Clearly Manual/Routine tasks would be subject to automation, but so might Routine/Cognitive tasks (Watson applications) or Non-Routine/Manual tasks (NLP or image processing). A good example of Routine/Cognitive work that’s being automated is paralegal research.

The only tasks that seem safe for now are the Cognitive/Non-Routine ones, which encompass not only interpersonal tasks and leadership but also, thankfully, pretty much all of data science.
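
One way to apply the matrix is to tag each atomic component of a job with its quadrant and read off the outlook. The sketch below is a minimal Python illustration, assuming the three outlook labels paraphrase the discussion above; the enum names, the `automation_outlook` helper, and the paralegal task breakdown are hypothetical.

```python
from enum import Enum


class Routine(Enum):
    ROUTINE = "Routine"
    NON_ROUTINE = "Non-Routine"


class Kind(Enum):
    MANUAL = "Manual"
    COGNITIVE = "Cognitive"


def automation_outlook(routine: Routine, kind: Kind) -> str:
    """Rough outlook per quadrant, paraphrasing the discussion above."""
    if routine is Routine.NON_ROUTINE and kind is Kind.COGNITIVE:
        return "safe for now"
    if routine is Routine.ROUTINE and kind is Kind.MANUAL:
        return "automate"
    # Routine/Cognitive and Non-Routine/Manual: possible AI targets.
    return "candidate for AI"


# Hypothetical atomic components of a paralegal's job.
paralegal_tasks = {
    "case-law research": (Routine.ROUTINE, Kind.COGNITIVE),
    "filing and copying": (Routine.ROUTINE, Kind.MANUAL),
    "client interviews": (Routine.NON_ROUTINE, Kind.COGNITIVE),
}

for task, (r, k) in paralegal_tasks.items():
    print(f"{task}: {r.value}/{k.value} -> {automation_outlook(r, k)}")
```

The point of the exercise is the decomposition, not the code: a single job title lands in several quadrants at once.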

Where Machines are Demonstrably Better than Humans

Here we are not talking about AI being almost as good as humans. Although speech and text processing are now at about 99% accuracy, they can still make mistakes, especially regarding context.

It may be that a revolution can be built on 99%. Plenty of visionaries believe it’s only a matter of a few years before we will all be completely used to controlling our devices by simple voice command.

But there are a handful of examples where current-state AI consistently outperforms humans. Most of these examples are narrow.

Game Play

There are a handful of games, including chess, where AI regularly beats humans.

Interesting chess factoid:

- Feb 10, 1996: first win by a computer against a top human.

- Nov 21, 2005: last win by a human against a top computer.

This type of game-level learning can be based on recurrent neural nets or custom-developed reinforcement learning. Just be aware that your game-playing AI won’t necessarily play the same way humans do. IBM’s Deep Blue chess machine used its ability to project millions of potential move combinations and evaluate the resulting positions at each step. This behavior may not seem human-like when the system works alongside humans, and that may be a consideration.
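
The brute-force search idea behind Deep Blue can be illustrated with a far smaller game. This minimal Python sketch uses negamax (a compact form of minimax) on a hypothetical take-away game: players alternately remove 1 to 3 stones, and whoever takes the last stone wins. It is not Deep Blue’s algorithm; real chess engines cannot search to the end of the game, so they stop at some depth, score positions with an evaluation function, and prune with techniques such as alpha-beta. This toy game is small enough to search exhaustively.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # a player may remove 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)
def negamax(stones: int) -> int:
    """Score for the player to move: +1 = forced win, -1 = forced loss.

    Projects every possible move sequence to the end of the game, the
    brute-force idea behind search-based game players.
    """
    if stones == 0:
        return -1  # the previous player took the last stone and won
    return max(-negamax(stones - m) for m in MOVES if m <= stones)


def best_move(stones: int) -> int:
    """Pick the move that leaves the opponent in the worst position."""
    legal = (m for m in MOVES if m <= stones)
    return max(legal, key=lambda m: -negamax(stones - m))
```

With this rule set, every pile size that is a multiple of 4 is a forced loss for the player to move, so `best_move(7)` returns 3 to leave the opponent facing 4 stones. The search finds this purely by enumeration, with no human-style reasoning about the pattern.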

Image Recognition and Classification

Two years ago, Microsoft first surpassed human ability to classify images in the famous annual ImageNet competition, using a 152-layer convolutional neural net. On this benchmark humans score about 95% correct. Now AI is better.
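
To make the “95% correct” figure concrete, here is a minimal Python sketch of top-k accuracy, assuming the human and machine figures are top-5 scores, the metric in which ImageNet classification results are commonly reported. The function name and the sample labels are hypothetical.

```python
def top_k_accuracy(predictions: list[list[str]], labels: list[str], k: int = 5) -> float:
    """Fraction of images whose true label appears in the model's top-k guesses.

    Each entry of `predictions` is a list of class names ranked from most
    to least likely.
    """
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        return 0.0
    hits = sum(1 for ranked, label in zip(predictions, labels) if label in ranked[:k])
    return hits / len(labels)


# Hypothetical ranked guesses for three images.
preds = [
    ["tabby", "tiger cat", "lynx"],
    ["beagle", "basset", "foxhound"],
    ["canoe", "kayak", "paddle"],
]
truth = ["tabby", "foxhound", "speedboat"]
```

Here `top_k_accuracy(preds, truth, k=1)` is 1/3 and `top_k_accuracy(preds, truth, k=3)` is 2/3: the same model looks much better when it gets several guesses, which is why the metric matters when comparing headline numbers.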

Simple tasks like facial recognition can now be performed more reliably by AI than by humans. Importantly, when image processing is combined with Watson-like QAMs, the combination has shown the ability, for example, to identify cancerous growths in radiology images more accurately than trained radiologists.

It’s a Race

The great thing about working in data science at the emergence of AI is that things change so quickly. Yes, it’s a challenge to keep up, but every day seems to bring some major new advance.

From a business perspective this creates a problem: how do you know when to invest, or at least when to put a stake in the ground? From a larger business planning perspective, it’s probably better to maintain a constantly fluid plan that is updated several times a year. Some investment in what has become core AI might be reasonable. But there’s no substitute for a knowledgeable advisor who keeps track of the evolution of each of these underlying AI technologies.

This is a three-part series on the impact of AI and automation on the future of work.

A Robot Took My Job – Was It a Robot or AI?

Keeping Your Job in the Age of Automation

You may also be interested in this earlier article:

Data Scientists Automated and Unemployed by 2025!

About the author: Bill Vorhies is Editorial Director for Data Science Central and has practiced as a data scientist and commercial predictive modeler since 2001. He can be reached at: